Dissecting LLMs by Hand (Part 1): Tracing the Full LLM Inference Pipeline Through the Source Code

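2025 has been a breakout year for large models. New models, frameworks, and tools keep appearing and keep lowering the barrier to using them: roughly ten lines of code are enough to run the whole "load model + load dataset + inference + reinforcement learning" workflow. The cost is that the underlying mechanics are hidden behind highly abstracted interfaces. We rarely get to see how a model's hyperparameters actually take effect in the code, and that feeling of half-understanding is unsettling.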

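Recently I came across a gem of a project called MiniMind. Its author built the model framework directly on PyTorch and implemented pretraining, SFT, LoRA, RLHF, distillation, and related steps by hand, open-sourced the training datasets, and uploaded the resulting pretrained models. If you are interested, try it yourself from GitHub; credit to the author for the open-source spirit. In this series I use that project as the basis for sharing what I learned from it, turning the 'stories' in the code into something you can follow along with.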

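The README already covers environment setup, installation, and running the project clearly, so I won't spend time on that here. Today we start from the project's inference script, 'eval_model.py', take the inference code apart by hand, and follow the full inference pipeline.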

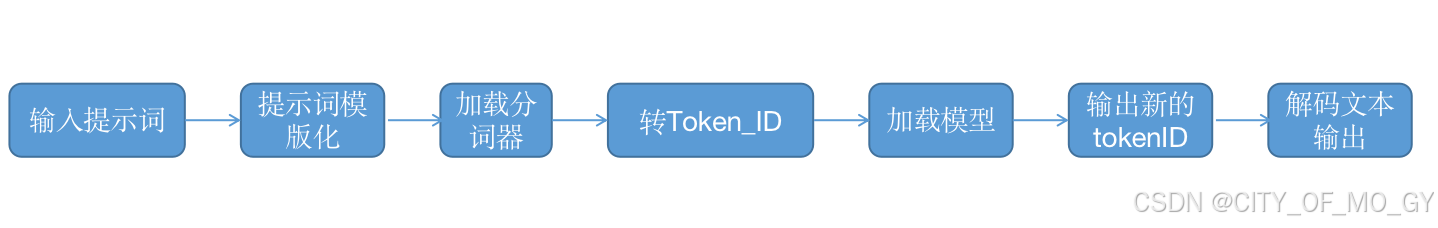

1. A bird's-eye view of the inference framework

We start with eval_model.py and break it down to see which modules the project's inference process involves.

# eval_model.py

import argparse

import random

import time

import numpy as np

import torch

import warnings

from transformers import AutoTokenizer, AutoModelForCausalLM

from model.model import MiniMindLM

from model.LMConfig import LMConfig

from model.model_lora import *

warnings.filterwarnings('ignore')

def init_model(args):

tokenizer = AutoTokenizer.from_pretrained('./model/minimind_tokenizer')

if args.load == 0:

moe_path = '_moe' if args.use_moe else ''

modes = {0: 'pretrain', 1: 'full_sft', 2: 'rlhf', 3: 'reason'}

# ckp = f'./{args.out_dir}/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'

ckp = f'../Weights/MiniMind2-PyTorch/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'

model = MiniMindLM(LMConfig(

dim=args.dim,

n_layers=args.n_layers,

max_seq_len=args.max_seq_len,

use_moe=args.use_moe

))

state_dict = torch.load(ckp, map_location=args.device)

model.load_state_dict({k: v for k, v in state_dict.items() if 'mask' not in k}, strict=True)

if args.lora_name != 'None':

apply_lora(model)

load_lora(model, f'./{args.out_dir}/lora/{args.lora_name}_{args.dim}.pth')

else:

transformers_model_path = '../Weights/MiniMind2'

tokenizer = AutoTokenizer.from_pretrained(transformers_model_path)

model = AutoModelForCausalLM.from_pretrained(transformers_model_path, trust_remote_code=True)

print(f'MiniMind模型参数量: {sum(p.numel() for p in model.parameters() if p.requires_grad) / 1e6:.2f}M(illion)')

return model.eval().to(args.device), tokenizer

def get_prompt_datas(args):

if args.model_mode == 0:

# pretrain模型的接龙能力(无法对话)

prompt_datas = [

'马克思主义基本原理',

'人类大脑的主要功能',

'万有引力原理是',

'世界上最高的山峰是',

'二氧化碳在空气中',

'地球上最大的动物有',

'杭州市的美食有'

]

else:

if args.lora_name == 'None':

# 通用对话问题

prompt_datas = [

'请介绍一下自己。',

'你更擅长哪一个学科?',

'鲁迅的《狂人日记》是如何批判封建礼教的?',

'我咳嗽已经持续了两周,需要去医院检查吗?',

'详细的介绍光速的物理概念。',

'推荐一些杭州的特色美食吧。',

'请为我讲解“大语言模型”这个概念。',

'如何理解ChatGPT?',

'Introduce the history of the United States, please.'

]

else:

# 特定领域问题

lora_prompt_datas = {

'lora_identity': [

"你是ChatGPT吧。",

"你叫什么名字?",

"你和openai是什么关系?"

],

'lora_medical': [

'我最近经常感到头晕,可能是什么原因?',

'我咳嗽已经持续了两周,需要去医院检查吗?',

'服用抗生素时需要注意哪些事项?',

'体检报告中显示胆固醇偏高,我该怎么办?',

'孕妇在饮食上需要注意什么?',

'老年人如何预防骨质疏松?',

'我最近总是感到焦虑,应该怎么缓解?',

'如果有人突然晕倒,应该如何急救?'

],

}

prompt_datas = lora_prompt_datas[args.lora_name]

return prompt_datas

# Set a reproducible random seed

def setup_seed(seed):

random.seed(seed)

np.random.seed(seed)

torch.manual_seed(seed)

torch.cuda.manual_seed(seed)

torch.cuda.manual_seed_all(seed)

torch.backends.cudnn.deterministic = True

torch.backends.cudnn.benchmark = False

def main():

parser = argparse.ArgumentParser(description="Chat with MiniMind")

parser.add_argument('--lora_name', default='None', type=str)

parser.add_argument('--out_dir', default='out', type=str)

parser.add_argument('--temperature', default=0.85, type=float)

parser.add_argument('--top_p', default=0.85, type=float)

parser.add_argument('--device', default='cuda' if torch.cuda.is_available() else 'cpu', type=str)

# max_seq_len (the maximum allowed input length) does not imply the model performs well on long text; it only prevents the QA from being truncated

# MiniMind2-moe (145M):(dim=640, n_layers=8, use_moe=True)

# MiniMind2-Small (26M):(dim=512, n_layers=8)

# MiniMind2 (104M):(dim=768, n_layers=16)

parser.add_argument('--dim', default=512, type=int)

parser.add_argument('--n_layers', default=8, type=int)

parser.add_argument('--max_seq_len', default=8192, type=int)

parser.add_argument('--use_moe', default=False, type=bool)

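# Number of previous chat messages carried as context

# history_cnt should be even, since one [user question, model answer] pair forms a group; 0 means the current query carries no history

# Without extrapolation fine-tuning, quality degrades noticeably on longer chat_template contexts, so set this with care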

parser.add_argument('--history_cnt', default=0, type=int)

parser.add_argument('--stream', default=True, type=bool)

parser.add_argument('--load', default=0, type=int, help="0: 原生torch权重,1: transformers加载")

parser.add_argument('--model_mode', default=1, type=int,

help="0: 预训练模型,1: SFT-Chat模型,2: RLHF-Chat模型,3: Reason模型")

args = parser.parse_args()

model, tokenizer = init_model(args)

prompts = get_prompt_datas(args)

test_mode = int(input('[0] 自动测试\n[1] 手动输入\n'))

messages = []

for idx, prompt in enumerate(prompts if test_mode == 0 else iter(lambda: input('👶: '), '')):

setup_seed(random.randint(0, 2048))

# setup_seed(2025) # 如需固定每次输出则换成【固定】的随机种子

if test_mode == 0: print(f'👶: {prompt}')

messages = messages[-args.history_cnt:] if args.history_cnt else []

messages.append({"role": "user", "content": prompt})

new_prompt = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)[-args.max_seq_len + 1:] if args.model_mode != 0 else (tokenizer.bos_token + prompt)

answer = new_prompt

with torch.no_grad():

x = torch.tensor(tokenizer(new_prompt)['input_ids'], device=args.device).unsqueeze(0)

outputs = model.generate(

x,

eos_token_id=tokenizer.eos_token_id,

max_new_tokens=args.max_seq_len,

temperature=args.temperature,

top_p=args.top_p,

stream=True,

pad_token_id=tokenizer.pad_token_id

)

print('🤖️: ', end='')

try:

if not args.stream:

print(tokenizer.decode(outputs.squeeze()[x.shape[1]:].tolist(), skip_special_tokens=True), end='')

else:

history_idx = 0

for y in outputs:

answer = tokenizer.decode(y[0].tolist(), skip_special_tokens=True)

if (answer and answer[-1] == '�') or not answer:

continue

print(answer[history_idx:], end='', flush=True)

history_idx = len(answer)

except StopIteration:

print("No answer")

print('\n')

messages.append({"role": "assistant", "content": answer})

if __name__ == "__main__":

main()
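One small detail in main() is worth isolating: messages[-args.history_cnt:] if args.history_cnt else []. The explicit else branch matters because -0 equals 0 in Python, so messages[-0:] would return the whole list instead of an empty one. A minimal sketch follows; the helper name trim_history and the sample messages are made up for illustration.

```python
def trim_history(messages, history_cnt):
    """Keep only the last `history_cnt` messages; 0 means no history at all."""
    # messages[-0:] is the same as messages[0:], i.e. the full list,
    # so history_cnt == 0 needs its own branch.
    return messages[-history_cnt:] if history_cnt else []


history = [
    {"role": "user", "content": "q1"},
    {"role": "assistant", "content": "a1"},
    {"role": "user", "content": "q2"},
    {"role": "assistant", "content": "a2"},
]

print(len(history[-0:]))                                  # 4
print(len(trim_history(history, 0)))                      # 0
print([m["content"] for m in trim_history(history, 2)])   # ['q2', 'a2']
```

With history_cnt=2 the window holds exactly one question/answer pair, which is why the script's comment asks for an even value.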

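The script defines four functions:

| Function | Purpose |
| --- | --- |
| init_model() | Loads the model and the tokenizer |
| get_prompt_datas() | Defines the prompts used for automatic testing |
| setup_seed() | Sets a reproducible random seed |
| main() | Parses hyperparameters, runs inference, post-processes the output |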
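The post-processing in main() streams text by re-decoding the growing output on every step and skipping any result that ends in '�' (U+FFFD). A likely reason is that a multi-byte UTF-8 character can be split across tokens, so a partial sequence decodes to the replacement character until the remaining bytes arrive. The sketch below imitates that loop with raw UTF-8 byte chunks standing in for tokens; this stand-in is an assumption for illustration and is not MiniMind's tokenizer.

```python
def stream_text(byte_chunks):
    """Yield newly printable text as byte chunks arrive, mimicking the loop in main()."""
    buffer = b""
    printed = 0  # plays the role of history_idx
    for chunk in byte_chunks:
        buffer += chunk
        text = buffer.decode("utf-8", errors="replace")
        # An incomplete multi-byte character decodes to U+FFFD: wait for more bytes.
        if not text or text[-1] == "\ufffd":
            continue
        yield text[printed:]
        printed = len(text)


data = "你好!".encode("utf-8")  # 3 + 3 + 1 bytes
# Chunk boundaries deliberately cut through both Chinese characters.
chunks = [data[0:2], data[2:3], data[3:5], data[5:7]]
pieces = list(stream_text(chunks))
print(pieces)  # ['你', '好!']
```

One side effect of this check is that a genuine U+FFFD at the end of the text would also be held back until more output follows.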

1.1 main()

def main():

parser = argparse.ArgumentParser(description="Chat with MiniMind")

parser.add_argument('--lora_name', default='None', type=str)

parser.add_argument('--out_dir', default='out', type=str)

parser.add_argument('--temperature', default=0.85, type=float)

parser.add_argument('--top_p', default=0.85, type=float)

parser.add_argument('--device', default='cuda' if torch.cuda.is_available() else 'cpu', type=str)

# max_seq_len (the maximum allowed input length) does not imply the model performs well on long text; it only prevents the QA from being truncated

# MiniMind2-moe (145M):(dim=640, n_layers=8, use_moe=True)

# MiniMind2-Small (26M):(dim=512, n_layers=8)

# MiniMind2 (104M):(dim=768, n_layers=16)

parser.add_argument('--dim', default=512, type=int)

parser.add_argument('--n_layers', default=8, type=int)

parser.add_argument('--max_seq_len', default=8192, type=int)

parser.add_argument('--use_moe', default=False, type=bool)

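# Number of previous chat messages carried as context

# history_cnt should be even, since one [user question, model answer] pair forms a group; 0 means the current query carries no history

# Without extrapolation fine-tuning, quality degrades noticeably on longer chat_template contexts, so set this with care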

parser.add_argument('--history_cnt', default=0, type=int)

parser.add_argument('--stream', default=True, type=bool)

parser.add_argument('--load', default=0, type=int, help="0: 原生torch权重,1: transformers加载")

parser.add_argument('--model_mode', default=1, type=int,

help="0: 预训练模型,1: SFT-Chat模型,2: RLHF-Chat模型,3: Reason模型")

args = parser.parse_args()

model, tokenizer = init_model(args)

prompts = get_prompt_datas(args)

test_mode = int(input('[0] 自动测试\n[1] 手动输入\n'))

messages = []

for idx, prompt in enumerate(prompts if test_mode == 0 else iter(lambda: input('👶: '), '')):

setup_seed(random.randint(0, 2048))

# setup_seed(2025) # 如需固定每次输出则换成【固定】的随机种子

if test_mode == 0: print(f'👶: {prompt}')

messages = messages[-args.history_cnt:] if args.history_cnt else []

messages.append({"role": "user", "content": prompt})

new_prompt = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True

)[-args.max_seq_len + 1:] if args.model_mode != 0 else (tokenizer.bos_token + prompt)

answer = new_prompt

with torch.no_grad():

x = torch.tensor(tokenizer(new_prompt)['input_ids'], device=args.device).unsqueeze(0)

outputs = model.generate(

x,

eos_token_id=tokenizer.eos_token_id,

max_new_tokens=args.max_seq_len,

temperature=args.temperature,

top_p=args.top_p,

stream=True,

pad_token_id=tokenizer.pad_token_id

)

print('🤖️: ', end='')

try:

if not args.stream:

print(tokenizer.decode(outputs.squeeze()[x.shape[1]:].tolist(), skip_special_tokens=True), end='')

else:

history_idx = 0

for y in outputs:

answer = tokenizer.decode(y[0].tolist(), skip_special_tokens=True)

if (answer and answer[-1] == '�') or not answer:

continue

print(answer[history_idx:], end='', flush=True)

history_idx = len(answer)

except StopIteration:

print("No answer")

print('\n')

messages.append({"role": "assistant", "content": answer})

1.1.1 Hyperparameters

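| Parameter | Meaning |
| --- | --- |
| lora_name | Name of the LoRA weights to load (not used in this chapter) |
| out_dir | Output directory (not used in this chapter) |
| temperature | Sampling temperature: the logits are divided by it, so lower values make the output more deterministic |
| top_p | Nucleus-sampling threshold: sampling is restricted to the most likely tokens whose cumulative probability reaches top_p |
| device | Compute device |
| dim | Model width, i.e. the dimension of the vector each token is mapped to |
| n_layers | Number of transformer blocks |
| max_seq_len | Maximum allowed input length |
| use_moe | Whether to use the MoE (mixture-of-experts) model |
| history_cnt | Number of previous chat messages carried as context |
| stream | Whether to stream the output |
| load | How to load the model: 0 = native torch weights, 1 = transformers |
| model_mode | Which model to load: 0 = pretrained, 1 = SFT-Chat, 2 = RLHF-Chat, 3 = Reason |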
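A caveat about the two boolean flags, use_moe and stream: they are declared with type=bool, and argparse simply calls bool() on the raw string, so any non-empty value (including "False") parses as True. The sketch below shows the effect next to one possible workaround; str2bool and the --use_moe_safe flag are hypothetical and not part of MiniMind.

```python
import argparse


def str2bool(value):
    """Parse common textual booleans (hypothetical helper, not part of MiniMind)."""
    lowered = value.lower()
    if lowered in ("1", "true", "yes", "y"):
        return True
    if lowered in ("0", "false", "no", "n"):
        return False
    raise argparse.ArgumentTypeError(f"expected a boolean, got {value!r}")


parser = argparse.ArgumentParser()
parser.add_argument("--use_moe", default=False, type=bool)           # as in eval_model.py
parser.add_argument("--use_moe_safe", default=False, type=str2bool)  # illustrative variant

args = parser.parse_args(["--use_moe", "False", "--use_moe_safe", "False"])
print(args.use_moe)       # True, because bool("False") is True
print(args.use_moe_safe)  # False
```

Leaving the flags at their defaults, as this walkthrough does, avoids the problem entirely.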

1.1.2 Before inference

# Load the model and the tokenizer
model, tokenizer = init_model(args)

# Load the prompts to iterate over
prompts = get_prompt_datas(args)

# Set the random seed
setup_seed(random.randint(0, 2048))

# Append the prompt to messages as a dict
if test_mode == 0: print(f'👶: {prompt}')
messages = messages[-args.history_cnt:] if args.history_cnt else []
messages.append({"role": "user", "content": prompt})

# Render messages through the chat template
# (the trailing slice trims the rendered string by characters, not by tokens)
new_prompt = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True
)[-args.max_seq_len + 1:] if args.model_mode != 0 else (tokenizer.bos_token + prompt)

# Convert the text to token IDs with the tokenizer
x = torch.tensor(tokenizer(new_prompt)['input_ids'], device=args.device).unsqueeze(0)

1.1.3 Inference

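# Feed the token IDs to the model and get its output
outputs = model.generate(
    x,
    eos_token_id=tokenizer.eos_token_id,
    max_new_tokens=args.max_seq_len,
    temperature=args.temperature,
    top_p=args.top_p,
    stream=True,
    pad_token_id=tokenizer.pad_token_id
)

1.1.4 After inference

# Decode the model output back into text
# (this form applies when generate() returns a tensor, i.e. stream=False;
# with stream=True it returns a generator that main() decodes step by step)
tokenizer.decode(outputs.squeeze()[x.shape[1]:].tolist(), skip_special_tokens=True)

1.2 init_model()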

def init_model(args):
    tokenizer = AutoTokenizer.from_pretrained('./model/minimind_tokenizer')
    if args.load == 0:
        moe_path = '_moe' if args.use_moe else ''
        modes = {0: 'pretrain', 1: 'full_sft', 2: 'rlhf', 3: 'reason'}
        # ckp = f'./{args.out_dir}/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'
        ckp = f'../Weights/MiniMind2-PyTorch/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'

        model = MiniMindLM(LMConfig(
            dim=args.dim,
            n_layers=args.n_layers,
            max_seq_len=args.max_seq_len,
            use_moe=args.use_moe
        ))

        state_dict = torch.load(ckp, map_location=args.device)
        model.load_state_dict({k: v for k, v in state_dict.items() if 'mask' not in k}, strict=True)

        if args.lora_name != 'None':
            apply_lora(model)
            load_lora(model, f'./{args.out_dir}/lora/{args.lora_name}_{args.dim}.pth')
    else:
        transformers_model_path = '../Weights/MiniMind2'
        tokenizer = AutoTokenizer.from_pretrained(transformers_model_path)
        model = AutoModelForCausalLM.from_pretrained(transformers_model_path, trust_remote_code=True)
    print(f'MiniMind模型参数量: {sum(p.numel() for p in model.parameters() if p.requires_grad) / 1e6:.2f}M(illion)')
    return model.eval().to(args.device), tokenizer

1.2.1 Loading the tokenizer

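tokenizer = AutoTokenizer.from_pretrained('./model/minimind_tokenizer')

1.2.2 Initializing the model

model = MiniMindLM(LMConfig(
    dim=args.dim,
    n_layers=args.n_layers,
    max_seq_len=args.max_seq_len,
    use_moe=args.use_moe
))

1.2.3 Reading the weights and loading them into the model

Here we load the weights through PyTorch (load == 0); the function then returns the model and the tokenizer.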
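The loading step comes down to two things: building the checkpoint path from the arguments, and dropping any 'mask' entries before load_state_dict. The filter is presumably there because the attention mask is registered as a non-persistent buffer, so with strict=True a checkpoint that still contains it would raise an unexpected-key error. The sketch below reproduces both steps with plain Python values; the function names and the fake state dict are illustrative assumptions.

```python
def checkpoint_path(model_mode, dim, use_moe, root="../Weights/MiniMind2-PyTorch"):
    """Rebuild the checkpoint file name the same way init_model() does."""
    modes = {0: "pretrain", 1: "full_sft", 2: "rlhf", 3: "reason"}
    moe_suffix = "_moe" if use_moe else ""
    return f"{root}/{modes[model_mode]}_{dim}{moe_suffix}.pth"


def drop_mask_entries(state_dict):
    """Remove every entry whose key contains 'mask' before loading."""
    return {k: v for k, v in state_dict.items() if "mask" not in k}


print(checkpoint_path(1, 512, False))  # ../Weights/MiniMind2-PyTorch/full_sft_512.pth
print(checkpoint_path(2, 640, True))   # ../Weights/MiniMind2-PyTorch/rlhf_640_moe.pth

# Placeholder values stand in for tensors.
fake_state_dict = {
    "layers.0.attention.wq.weight": "tensor",
    "layers.0.attention.mask": "tensor",
}
print(list(drop_mask_entries(fake_state_dict)))  # ['layers.0.attention.wq.weight']
```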

moe_path = '_moe' if args.use_moe else ''
modes = {0: 'pretrain', 1: 'full_sft', 2: 'rlhf', 3: 'reason'}
# ckp = f'./{args.out_dir}/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'
ckp = f'../Weights/MiniMind2-PyTorch/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'
state_dict = torch.load(ckp, map_location=args.device)
model.load_state_dict({k: v for k, v in state_dict.items() if 'mask' not in k}, strict=True)

1.3 get_prompt_datas()

The author defines a separate set of prompts for each type of model.

def get_prompt_datas(args):

if args.model_mode == 0:

# pretrain模型的接龙能力(无法对话)

prompt_datas = [

'马克思主义基本原理',

'人类大脑的主要功能',

'万有引力原理是',

'世界上最高的山峰是',

'二氧化碳在空气中',

'地球上最大的动物有',

'杭州市的美食有'

]

else:

if args.lora_name == 'None':

# 通用对话问题

prompt_datas = [

'请介绍一下自己。',

'你更擅长哪一个学科?',

'鲁迅的《狂人日记》是如何批判封建礼教的?',

'我咳嗽已经持续了两周,需要去医院检查吗?',

'详细的介绍光速的物理概念。',

'推荐一些杭州的特色美食吧。',

'请为我讲解“大语言模型”这个概念。',

'如何理解ChatGPT?',

'Introduce the history of the United States, please.'

]

else:

# 特定领域问题

lora_prompt_datas = {

'lora_identity': [

"你是ChatGPT吧。",

"你叫什么名字?",

"你和openai是什么关系?"

],

'lora_medical': [

'我最近经常感到头晕,可能是什么原因?',

'我咳嗽已经持续了两周,需要去医院检查吗?',

'服用抗生素时需要注意哪些事项?',

'体检报告中显示胆固醇偏高,我该怎么办?',

'孕妇在饮食上需要注意什么?',

'老年人如何预防骨质疏松?',

'我最近总是感到焦虑,应该怎么缓解?',

'如果有人突然晕倒,应该如何急救?'

],

}

prompt_datas = lora_prompt_datas[args.lora_name]

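return prompt_datas

1.4 Overall flow

2. Model initialization and inference details

Following the import 'from model.model import MiniMindLM', we can locate the class that defines the model structure.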

# model.py

import math

import struct

import inspect

import time

from .LMConfig import LMConfig

from typing import Any, Optional, Tuple, List

import numpy as np

import torch

import torch.nn.functional as F

from torch import nn

from transformers import PreTrainedModel

from transformers.modeling_outputs import CausalLMOutputWithPast

class RMSNorm(torch.nn.Module):

def __init__(self, dim: int, eps: float = 1e-6):

super().__init__()

self.eps = eps

self.weight = nn.Parameter(torch.ones(dim))

def _norm(self, x):

return x * torch.rsqrt(x.pow(2).mean(-1, keepdim=True) + self.eps)

def forward(self, x):

return self.weight * self._norm(x.float()).type_as(x)

def precompute_pos_cis(dim: int, end: int = int(32 * 1024), theta: float = 1e6):

freqs = 1.0 / (theta ** (torch.arange(0, dim, 2)[: (dim // 2)].float() / dim))

t = torch.arange(end, device=freqs.device) # type: ignore

freqs = torch.outer(t, freqs).float() # type: ignore

pos_cis = torch.polar(torch.ones_like(freqs), freqs) # complex64

return pos_cis

def apply_rotary_emb(xq, xk, pos_cis):

def unite_shape(pos_cis, x):

ndim = x.ndim

assert 0 <= 1 < ndim

assert pos_cis.shape == (x.shape[1], x.shape[-1])

shape = [d if i == 1 or i == ndim - 1 else 1 for i, d in enumerate(x.shape)]

return pos_cis.view(*shape)

xq_ = torch.view_as_complex(xq.float().reshape(*xq.shape[:-1], -1, 2))

xk_ = torch.view_as_complex(xk.float().reshape(*xk.shape[:-1], -1, 2))

pos_cis = unite_shape(pos_cis, xq_)

xq_out = torch.view_as_real(xq_ * pos_cis).flatten(3)

xk_out = torch.view_as_real(xk_ * pos_cis).flatten(3)

return xq_out.type_as(xq), xk_out.type_as(xk)

def repeat_kv(x: torch.Tensor, n_rep: int) -> torch.Tensor:

"""torch.repeat_interleave(x, dim=2, repeats=n_rep)"""

bs, slen, n_kv_heads, head_dim = x.shape

if n_rep == 1:

return x

return (

x[:, :, :, None, :]

.expand(bs, slen, n_kv_heads, n_rep, head_dim)

.reshape(bs, slen, n_kv_heads * n_rep, head_dim)

)

class Attention(nn.Module):

def __init__(self, args: LMConfig):

super().__init__()

self.n_kv_heads = args.n_heads if args.n_kv_heads is None else args.n_kv_heads

assert args.n_heads % self.n_kv_heads == 0

self.n_local_heads = args.n_heads

self.n_local_kv_heads = self.n_kv_heads

self.n_rep = self.n_local_heads // self.n_local_kv_heads

self.head_dim = args.dim // args.n_heads

self.wq = nn.Linear(args.dim, args.n_heads * self.head_dim, bias=False)

self.wk = nn.Linear(args.dim, self.n_kv_heads * self.head_dim, bias=False)

self.wv = nn.Linear(args.dim, self.n_kv_heads * self.head_dim, bias=False)

self.wo = nn.Linear(args.n_heads * self.head_dim, args.dim, bias=False)

self.attn_dropout = nn.Dropout(args.dropout)

self.resid_dropout = nn.Dropout(args.dropout)

self.dropout = args.dropout

self.flash = hasattr(torch.nn.functional, 'scaled_dot_product_attention') and args.flash_attn

# print("WARNING: using slow attention. Flash Attention requires PyTorch >= 2.0")

mask = torch.full((1, 1, args.max_seq_len, args.max_seq_len), float("-inf"))

mask = torch.triu(mask, diagonal=1)

self.register_buffer("mask", mask, persistent=False)

def forward(self,

x: torch.Tensor,

pos_cis: torch.Tensor,

past_key_value: Optional[Tuple[torch.Tensor, torch.Tensor]] = None,

use_cache=False):

bsz, seq_len, _ = x.shape

xq, xk, xv = self.wq(x), self.wk(x), self.wv(x)

xq = xq.view(bsz, seq_len, self.n_local_heads, self.head_dim)

xk = xk.view(bsz, seq_len, self.n_local_kv_heads, self.head_dim)

xv = xv.view(bsz, seq_len, self.n_local_kv_heads, self.head_dim)

xq, xk = apply_rotary_emb(xq, xk, pos_cis)

# kv_cache实现

if past_key_value is not None:

xk = torch.cat([past_key_value[0], xk], dim=1)

xv = torch.cat([past_key_value[1], xv], dim=1)

past_kv = (xk, xv) if use_cache else None

xq, xk, xv = (

xq.transpose(1, 2),

repeat_kv(xk, self.n_rep).transpose(1, 2),

repeat_kv(xv, self.n_rep).transpose(1, 2)

)

if self.flash and seq_len != 1:

dropout_p = self.dropout if self.training else 0.0

output = F.scaled_dot_product_attention(

xq, xk, xv,

attn_mask=None,

dropout_p=dropout_p,

is_causal=True

)

else:

scores = (xq @ xk.transpose(-2, -1)) / math.sqrt(self.head_dim)

scores += self.mask[:, :, :seq_len, :seq_len]

scores = F.softmax(scores.float(), dim=-1).type_as(xq)

scores = self.attn_dropout(scores)

output = scores @ xv

output = output.transpose(1, 2).reshape(bsz, seq_len, -1)

output = self.resid_dropout(self.wo(output))

return output, past_kv

class FeedForward(nn.Module):

def __init__(self, config: LMConfig):

super().__init__()

if config.hidden_dim is None:

hidden_dim = 4 * config.dim

hidden_dim = int(2 * hidden_dim / 3)

config.hidden_dim = config.multiple_of * ((hidden_dim + config.multiple_of - 1) // config.multiple_of)

self.w1 = nn.Linear(config.dim, config.hidden_dim, bias=False)

self.w2 = nn.Linear(config.hidden_dim, config.dim, bias=False)

self.w3 = nn.Linear(config.dim, config.hidden_dim, bias=False)

self.dropout = nn.Dropout(config.dropout)

def forward(self, x):

return self.dropout(self.w2(F.silu(self.w1(x)) * self.w3(x)))

class MoEGate(nn.Module):

def __init__(self, config: LMConfig):

super().__init__()

self.config = config

self.top_k = config.num_experts_per_tok

self.n_routed_experts = config.n_routed_experts

self.scoring_func = config.scoring_func

self.alpha = config.aux_loss_alpha

self.seq_aux = config.seq_aux

self.norm_topk_prob = config.norm_topk_prob

self.gating_dim = config.dim

self.weight = nn.Parameter(torch.empty((self.n_routed_experts, self.gating_dim)))

self.reset_parameters()

def reset_parameters(self) -> None:

import torch.nn.init as init

init.kaiming_uniform_(self.weight, a=math.sqrt(5))

def forward(self, hidden_states):

bsz, seq_len, h = hidden_states.shape

hidden_states = hidden_states.view(-1, h)

logits = F.linear(hidden_states, self.weight, None)

if self.scoring_func == 'softmax':

scores = logits.softmax(dim=-1)

else:

raise NotImplementedError(f'insupportable scoring function for MoE gating: {self.scoring_func}')

topk_weight, topk_idx = torch.topk(scores, k=self.top_k, dim=-1, sorted=False)

if self.top_k > 1 and self.norm_topk_prob:

denominator = topk_weight.sum(dim=-1, keepdim=True) + 1e-20

topk_weight = topk_weight / denominator

if self.training and self.alpha > 0.0:

scores_for_aux = scores

aux_topk = self.top_k

topk_idx_for_aux_loss = topk_idx.view(bsz, -1)

if self.seq_aux:

scores_for_seq_aux = scores_for_aux.view(bsz, seq_len, -1)

ce = torch.zeros(bsz, self.n_routed_experts, device=hidden_states.device)

ce.scatter_add_(1, topk_idx_for_aux_loss,

torch.ones(bsz, seq_len * aux_topk, device=hidden_states.device)).div_(

seq_len * aux_topk / self.n_routed_experts)

aux_loss = (ce * scores_for_seq_aux.mean(dim=1)).sum(dim=1).mean() * self.alpha

else:

mask_ce = F.one_hot(topk_idx_for_aux_loss.view(-1), num_classes=self.n_routed_experts)

ce = mask_ce.float().mean(0)

Pi = scores_for_aux.mean(0)

fi = ce * self.n_routed_experts

aux_loss = (Pi * fi).sum() * self.alpha

else:

aux_loss = 0

return topk_idx, topk_weight, aux_loss

class MOEFeedForward(nn.Module):

def __init__(self, config: LMConfig):

super().__init__()

self.config = config

self.experts = nn.ModuleList([

FeedForward(config)

for _ in range(config.n_routed_experts)

])

self.gate = MoEGate(config)

if config.n_shared_experts is not None:

self.shared_experts = FeedForward(config)

def forward(self, x):

identity = x

orig_shape = x.shape

bsz, seq_len, _ = x.shape

# 使用门控机制选择专家

topk_idx, topk_weight, aux_loss = self.gate(x)

x = x.view(-1, x.shape[-1])

flat_topk_idx = topk_idx.view(-1)

if self.training:

# 训练模式下,重复输入数据

x = x.repeat_interleave(self.config.num_experts_per_tok, dim=0)

y = torch.empty_like(x, dtype=torch.float16)

for i, expert in enumerate(self.experts):

y[flat_topk_idx == i] = expert(x[flat_topk_idx == i]).to(y.dtype) # 确保类型一致

y = (y.view(*topk_weight.shape, -1) * topk_weight.unsqueeze(-1)).sum(dim=1)

y = y.view(*orig_shape)

else:

# Inference mode: route each token to its top-k experts without duplicating the input

y = self.moe_infer(x, flat_topk_idx, topk_weight.view(-1, 1)).view(*orig_shape)

if self.config.n_shared_experts is not None:

y = y + self.shared_experts(identity)

self.aux_loss = aux_loss

return y

@torch.no_grad()

def moe_infer(self, x, flat_expert_indices, flat_expert_weights):

expert_cache = torch.zeros_like(x)

idxs = flat_expert_indices.argsort()

tokens_per_expert = flat_expert_indices.bincount().cpu().numpy().cumsum(0)

token_idxs = idxs // self.config.num_experts_per_tok

# For example, with tokens_per_expert = [6, 15, 20, 26, 33, 38, 46, 52]

# and token_idxs = [3, 7, 19, 21, 24, 25, 4, 5, 6, 10, 11, 12...],

# token_idxs[:6] -> [3, 7, 19, 21, 24, 25] are the tokens handled by expert 0, token_idxs[6:15] are the tokens handled by expert 1, and so on

for i, end_idx in enumerate(tokens_per_expert):

start_idx = 0 if i == 0 else tokens_per_expert[i - 1]

if start_idx == end_idx:

continue

expert = self.experts[i]

exp_token_idx = token_idxs[start_idx:end_idx]

expert_tokens = x[exp_token_idx]

expert_out = expert(expert_tokens).to(expert_cache.dtype)

expert_out.mul_(flat_expert_weights[idxs[start_idx:end_idx]])

# 使用 scatter_add_ 进行 sum 操作

expert_cache.scatter_add_(0, exp_token_idx.view(-1, 1).repeat(1, x.shape[-1]), expert_out)

return expert_cache

class MiniMindBlock(nn.Module):

def __init__(self, layer_id: int, config: LMConfig):

super().__init__()

self.n_heads = config.n_heads

self.dim = config.dim

self.head_dim = config.dim // config.n_heads

self.attention = Attention(config)

self.layer_id = layer_id

self.attention_norm = RMSNorm(config.dim, eps=config.norm_eps)

self.ffn_norm = RMSNorm(config.dim, eps=config.norm_eps)

self.feed_forward = FeedForward(config) if not config.use_moe else MOEFeedForward(config)

def forward(self, x, pos_cis, past_key_value=None, use_cache=False):

h_attn, past_kv = self.attention(

self.attention_norm(x),

pos_cis,

past_key_value=past_key_value,

use_cache=use_cache

)

h = x + h_attn

out = h + self.feed_forward(self.ffn_norm(h))

return out, past_kv

class MiniMindLM(PreTrainedModel):

config_class = LMConfig

def __init__(self, params: LMConfig = None):

self.params = params or LMConfig()

super().__init__(self.params)

self.vocab_size, self.n_layers = params.vocab_size, params.n_layers

self.tok_embeddings = nn.Embedding(params.vocab_size, params.dim)

self.dropout = nn.Dropout(params.dropout)

self.layers = nn.ModuleList([MiniMindBlock(l, params) for l in range(self.n_layers)])

self.norm = RMSNorm(params.dim, eps=params.norm_eps)

self.output = nn.Linear(params.dim, params.vocab_size, bias=False)

self.tok_embeddings.weight = self.output.weight

self.register_buffer("pos_cis",

precompute_pos_cis(dim=params.dim // params.n_heads, theta=params.rope_theta),

persistent=False)

self.OUT = CausalLMOutputWithPast()

def forward(self,

input_ids: Optional[torch.Tensor] = None,

past_key_values: Optional[List[Tuple[torch.Tensor, torch.Tensor]]] = None,

use_cache: bool = False,

**args):

past_key_values = past_key_values or [None] * len(self.layers)

start_pos = args.get('start_pos', 0)

h = self.dropout(self.tok_embeddings(input_ids))

# print(h.shape)

pos_cis = self.pos_cis[start_pos:start_pos + input_ids.size(1)]

past_kvs = []

for l, layer in enumerate(self.layers):

h, past_kv = layer(

h, pos_cis,

past_key_value=past_key_values[l],

use_cache=use_cache

)

past_kvs.append(past_kv)

logits = self.output(self.norm(h))

aux_loss = sum(l.feed_forward.aux_loss for l in self.layers if isinstance(l.feed_forward, MOEFeedForward))

self.OUT.__setitem__('logits', logits)

self.OUT.__setitem__('aux_loss', aux_loss)

self.OUT.__setitem__('past_key_values', past_kvs)

return self.OUT

@torch.inference_mode()

def generate(self, input_ids, eos_token_id=2, max_new_tokens=1024, temperature=0.75, top_p=0.90,

stream=False, rp=1., use_cache=True, pad_token_id=0, **args):

# 流式生成

if stream:

return self._stream(input_ids, eos_token_id, max_new_tokens, temperature, top_p, rp, use_cache, **args)

# 直接生成

generated = []

for i in range(input_ids.size(0)):

non_pad = input_ids[i][input_ids[i] != pad_token_id].unsqueeze(0)

out = self._stream(non_pad, eos_token_id, max_new_tokens, temperature, top_p, rp, use_cache, **args)

tokens_list = [tokens[:, -1:] for tokens in out]

gen = torch.cat(tokens_list, dim=-1) if tokens_list else non_pad

full_sequence = torch.cat([non_pad, gen], dim=-1)

generated.append(full_sequence)

max_length = max(seq.size(1) for seq in generated)

generated = [

torch.cat(

[seq, torch.full((1, max_length - seq.size(1)), pad_token_id, dtype=seq.dtype, device=seq.device)],

dim=-1)

for seq in generated

]

return torch.cat(generated, dim=0)

def _stream(self, input_ids, eos_token_id, max_new_tokens, temperature, top_p, rp, use_cache, **args):

start, first_seq, past_kvs = input_ids.shape[1], True, None

while input_ids.shape[1] < max_new_tokens - 1:

if first_seq or not use_cache:

out, first_seq = self(input_ids, past_key_values=past_kvs, use_cache=use_cache, **args), False

else:

out = self(input_ids[:, -1:], past_key_values=past_kvs, use_cache=use_cache,

start_pos=input_ids.shape[1] - 1, **args)

logits, past_kvs = out.logits[:, -1, :], out.past_key_values

logits[:, list(set(input_ids.tolist()[0]))] /= rp

logits /= (temperature + 1e-9)

if top_p is not None and top_p < 1.0:

sorted_logits, sorted_indices = torch.sort(logits, descending=True, dim=-1)

sorted_probs = F.softmax(sorted_logits, dim=-1)

cumulative_probs = torch.cumsum(sorted_probs, dim=-1)

sorted_indices_to_remove = cumulative_probs > top_p

sorted_indices_to_remove[:, 1:] = sorted_indices_to_remove[:, :-1].clone()

sorted_indices_to_remove[:, 0] = False

indices_to_remove = sorted_indices_to_remove.scatter(1, sorted_indices, sorted_indices_to_remove)

logits[indices_to_remove] = -float('Inf')

input_ids_next = torch.multinomial(F.softmax(logits, dim=-1), num_samples=1)

input_ids = torch.cat((input_ids, input_ids_next), dim=1)

yield input_ids[:, start:]

if input_ids_next.item() == eos_token_id:

break
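The sampling step inside _stream above (temperature scaling followed by top-p filtering) can be sketched in plain Python. This is a simplified illustration rather than the project's code: it works on a single list of logits instead of a tensor, omits the repetition penalty rp, and the helper names are made up.

```python
import math
import random


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    peak = max(logits)
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_p_filter(logits, temperature=0.85, top_p=0.85):
    """Return {token_id: probability} after temperature scaling and top-p filtering."""
    scaled = [v / (temperature + 1e-9) for v in logits]
    probs = softmax(scaled)
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        # A token is dropped only if the mass accumulated *before* it already
        # exceeds top_p, so the token that crosses the threshold is still kept
        # (this mirrors the shift-by-one on sorted_indices_to_remove).
        if cumulative > top_p:
            break
        kept.append(i)
        cumulative += probs[i]
    mass = sum(probs[i] for i in kept)
    return {i: probs[i] / mass for i in kept}


def sample(logits, temperature=0.85, top_p=0.85, rng=random):
    """Draw one token ID from the filtered distribution."""
    dist = top_p_filter(logits, temperature, top_p)
    ids = list(dist)
    return rng.choices(ids, weights=[dist[i] for i in ids], k=1)[0]


logits = [2.0, 1.0, 0.5, -1.0, -3.0]
print(sorted(top_p_filter(logits)))                   # token IDs that survive at T=0.85
print(sorted(top_p_filter(logits, temperature=0.1)))  # a low temperature sharpens the distribution
print(sample(logits, rng=random.Random(0)))
```

Lowering the temperature concentrates probability on the top token, so fewer tokens survive the top-p cut; raising it has the opposite effect.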

2.1 Model initialization

class MiniMindLM(PreTrainedModel):
    config_class = LMConfig

    def __init__(self, params: LMConfig = None):
        self.params = params or LMConfig()
        super().__init__(self.params)
        self.vocab_size, self.n_layers = params.vocab_size, params.n_layers
        self.tok_embeddings = nn.Embedding(params.vocab_size, params.dim)
        self.dropout = nn.Dropout(params.dropout)
        self.layers = nn.ModuleList([MiniMindBlock(l, params) for l in range(self.n_layers)])
        self.norm = RMSNorm(params.dim, eps=params.norm_eps)
        self.output = nn.Linear(params.dim, params.vocab_size, bias=False)
        self.tok_embeddings.weight = self.output.weight
        self.register_buffer("pos_cis",
                             precompute_pos_cis(dim=params.dim // params.n_heads, theta=params.rope_theta),
                             persistent=False)
        self.OUT = CausalLMOutputWithPast()

2.1.1 Hyperparameters

# LMConfig.py
from transformers import PretrainedConfig
from typing import List


class LMConfig(PretrainedConfig):
    model_type = "minimind"

    def __init__(
            self,
            dim: int = 512,  # model width
            n_layers: int = 8,  # model depth
            n_heads: int = 8,  # number of query heads in self-attention
            n_kv_heads: int = 2,  # number of key/value heads
            vocab_size: int = 6400,  # vocabulary size
            hidden_dim: int = None,
            multiple_of: int = 64,
            norm_eps: float = 1e-5,  # small constant for numerical stability, avoids dividing by values near zero
            max_seq_len: int = 8192,
            rope_theta: int = 1e6,
            dropout: float = 0.0,
            flash_attn: bool = True,
            ####################################################
            # Here are the specific configurations of MOE
            # When use_moe is false, the following is invalid
            ####################################################
            use_moe: bool = False,
            ####################################################
            num_experts_per_tok: int = 2,
            n_routed_experts: int = 4,
            n_shared_experts: bool = True,
            scoring_func: str = 'softmax',
            aux_loss_alpha: float = 0.1,
            seq_aux: bool = True,
            norm_topk_prob: bool = True,
            **kwargs,
    ):
        self.dim = dim
        self.n_layers = n_layers
        self.n_heads = n_heads
        self.n_kv_heads = n_kv_heads
        self.vocab_size = vocab_size
        self.hidden_dim = hidden_dim
        self.multiple_of = multiple_of
        self.norm_eps = norm_eps
        self.max_seq_len = max_seq_len
        self.rope_theta = rope_theta
        self.dropout = dropout
        self.flash_attn = flash_attn
        ####################################################
        # Here are the specific configurations of MOE
        # When use_moe is false, the following is invalid
        ####################################################
        self.use_moe = use_moe
        self.num_experts_per_tok = num_experts_per_tok  # number of experts selected per token
        self.n_routed_experts = n_routed_experts  # total number of routed experts
        self.n_shared_experts = n_shared_experts  # shared experts
        self.scoring_func = scoring_func  # scoring function, 'softmax' by default
        self.aux_loss_alpha = aux_loss_alpha  # alpha coefficient of the auxiliary loss
        self.seq_aux = seq_aux  # whether to compute the auxiliary loss at sequence level
        self.norm_topk_prob = norm_topk_prob  # whether to normalize the top-k probabilities
        super().__init__(**kwargs)
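Several sizes used by the model are not stored in LMConfig but derived from it in model.py: the per-head dimension, the number of query heads sharing each key/value head (n_rep in Attention), and the feed-forward hidden size when hidden_dim is None. The sketch below repeats that arithmetic outside the model; the function name derived_dims is made up for illustration.

```python
def derived_dims(dim=512, n_heads=8, n_kv_heads=2, multiple_of=64):
    """Recompute the sizes that Attention and FeedForward derive from the config."""
    head_dim = dim // n_heads
    n_rep = n_heads // n_kv_heads  # query heads that share one key/value head
    hidden_dim = int(2 * (4 * dim) / 3)
    # Round up to the nearest multiple of `multiple_of`.
    hidden_dim = multiple_of * ((hidden_dim + multiple_of - 1) // multiple_of)
    return {"head_dim": head_dim, "n_rep": n_rep, "ffn_hidden_dim": hidden_dim}


print(derived_dims())         # {'head_dim': 64, 'n_rep': 4, 'ffn_hidden_dim': 1408}
print(derived_dims(dim=768))  # {'head_dim': 96, 'n_rep': 4, 'ffn_hidden_dim': 2048}
```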

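2.1.2 Layer by layer

2.1.2.1 Embedding layer

Maps each token ID to a vector of the configured dim. The layer's trainable weight has shape (6400, 512), one row per vocabulary entry; looking up a single token ID returns that row as a (1, 512) tensor.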
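The lookup itself is just row selection from the weight matrix. A plain-Python sketch of the shapes involved follows; the table here is a stand-in filled with each row's own index so the lookup is easy to verify, and the token IDs are made up.

```python
vocab_size, dim = 6400, 512

# Stand-in for the trainable (vocab_size, dim) weight of nn.Embedding:
# row i is filled with the value i.
table = [[float(i)] * dim for i in range(vocab_size)]


def embed(batch_ids):
    """Map a (batch, seq_len) nested list of token IDs to (batch, seq_len, dim)."""
    return [[table[token_id] for token_id in seq] for seq in batch_ids]


x = [[1, 608, 1589]]  # one sequence of three made-up token IDs
h = embed(x)
print(len(h), len(h[0]), len(h[0][0]))  # 1 3 512
print(h[0][1][0])                       # 608.0
```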

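self.tok_embeddings = nn.Embedding(params.vocab_size, params.dim)

2.1.2.2 Dropout layer

A standard torch layer. During training it randomly zeroes a given fraction of the activations passing through it and rescales the rest, which helps reduce overfitting. In eval mode, or with the default dropout=0.0 used here, it leaves its input unchanged.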
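A minimal sketch of this behaviour (so-called inverted dropout) on a plain list of activations, assuming a drop probability p below 1. The function is illustrative and is not how nn.Dropout is implemented internally.

```python
import random


def dropout(values, p, training, rng):
    """Inverted dropout: zero each value with probability p, rescale survivors by 1 / (1 - p)."""
    if not training or p == 0.0:
        return list(values)  # identity at inference time or when p == 0
    keep = 1.0 - p
    return [v / keep if rng.random() < keep else 0.0 for v in values]


rng = random.Random(0)
activations = [1.0] * 8
print(dropout(activations, p=0.5, training=False, rng=rng))  # unchanged
print(dropout(activations, p=0.5, training=True, rng=rng))   # a mix of 0.0 and 2.0
```

The rescaling keeps the expected value of each activation the same in training and inference, which is why no correction is needed at inference time.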

self.dropout = nn.Dropout(params.dropout)

2.1.2.3 MiniMindBlock, the core layer

Defines the structure of one transformer block. With the default n_layers=8, the model stacks eight of these blocks.

class MiniMindBlock(nn.Module):
    def __init__(self, layer_id: int, config: LMConfig):
        super().__init__()
        self.n_heads = config.n_heads
        self.dim = config.dim
        self.head_dim = config.dim // config.n_heads
        self.attention = Attention(config)

        self.layer_id = layer_id
        self.attention_norm = RMSNorm(config.dim, eps=config.norm_eps)
        self.ffn_norm = RMSNorm(config.dim, eps=config.norm_eps)
        self.feed_forward = FeedForward(config) if not config.use_moe else MOEFeedForward(config)

    def forward(self, x, pos_cis, past_key_value=None, use_cache=False):
        h_attn, past_kv = self.attention(
            self.attention_norm(x),
            pos_cis,
            past_key_value=past_key_value,
            use_cache=use_cache
        )
        h = x + h_attn
        out = h + self.feed_forward(self.ffn_norm(h))
        return out, past_kv

2.1.2.4 Normalization layer

RMSNorm is an alternative to the conventional LayerNorm (layer normalization). It skips the mean subtraction and normalizes by the root mean square only, which reduces computation and memory use while maintaining or improving model quality.
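The computation in the RMSNorm class from model.py, x * rsqrt(mean(x^2) + eps) followed by a learned per-dimension scale, can be written for a single vector in plain Python. This is an illustrative sketch; the function name and the sample vector are made up.

```python
import math


def rms_norm(x, weight, eps=1e-5):
    """RMSNorm over one vector: x / sqrt(mean(x^2) + eps), then a per-dimension scale."""
    mean_sq = sum(v * v for v in x) / len(x)
    inv_rms = 1.0 / math.sqrt(mean_sq + eps)
    return [w * v * inv_rms for v, w in zip(x, weight)]


x = [1.0, -2.0, 3.0, -4.0]
ones = [1.0] * len(x)
y = rms_norm(x, ones)
print(y)
print(sum(v * v for v in y) / len(y))  # mean square of the output, close to 1
```

Scaling the input by a constant leaves the output almost unchanged, which is the property that keeps activations in a stable range from layer to layer.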

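self.norm = RMSNorm(params.dim, eps=params.norm_eps)

2.1.2.5 Output layer

The output layer applies a linear transformation that maps the 512-dimensional hidden vector to 6400 dimensions, one logit per vocabulary entry.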
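Because __init__ sets self.tok_embeddings.weight = self.output.weight, the embedding and the output projection share one matrix: embedding selects a row, and the output layer takes the dot product of the hidden vector with every row. A toy sketch with a vocabulary of 3 and a dim of 2 follows; the numbers and helper names are made up for illustration.

```python
# One shared (vocab_size, dim) matrix, here with vocab_size=3 and dim=2.
weight = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, 1.0],
]


def embed(token_id):
    """Embedding lookup: pick one row of the shared matrix."""
    return weight[token_id]


def logits_from_hidden(hidden):
    """Bias-free output projection: dot product with every row of the same matrix."""
    return [sum(h * w for h, w in zip(hidden, row)) for row in weight]


print(logits_from_hidden(embed(2)))  # [1.0, 1.0, 2.0]
```

In the real model the hidden vector passes through all transformer blocks and the final RMSNorm before this projection; the sketch skips those steps to show only the shared matrix.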

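self.output = nn.Linear(params.dim, params.vocab_size, bias=False)

2.2 Inference

Once your environment is set up, create a Jupyter Notebook file in the same directory as eval_model.py. We will run one inference together to see the whole process and the data handling along the way.

2.2.1 Imports

Code: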

import argparse

import random

import time

import numpy as np

import torch

import warnings

from transformers import AutoTokenizer, AutoModelForCausalLM

from model.model import MiniMindLM

from model.LMConfig import LMConfig

from model.model_lora import *

warnings.filterwarnings('ignore')

2.2.2 Defining the hyperparameters

Code:

class ARG():
    def __init__(self):
        self.lora_name = 'None'
        self.out_dir = 'out'
        self.temperature = 0.85
        self.top_p = 0.85
        self.device = 'cpu'
        self.dim = 512
        self.n_layers = 8
        self.max_seq_len = 8192
        self.use_moe = False
        self.history_cnt = 0
        self.stream = True
        self.load = 0
        self.model_mode = 1

2.2.3 Defining the model and tokenizer initialization function

Code:

def init_model(args):
    tokenizer = AutoTokenizer.from_pretrained('./model/minimind_tokenizer')
    if args.load == 0:
        moe_path = '_moe' if args.use_moe else ''
        modes = {0: 'pretrain', 1: 'full_sft', 2: 'rlhf', 3: 'reason'}
        # ckp = f'./{args.out_dir}/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'
        ckp = f'../Weights/MiniMind2-PyTorch/{modes[args.model_mode]}_{args.dim}{moe_path}.pth'
        model = MiniMindLM(LMConfig(
            dim=args.dim,
            n_layers=args.n_layers,
            max_seq_len=args.max_seq_len,
            use_moe=args.use_moe
        ))
        state_dict = torch.load(ckp, map_location=args.device)
        model.load_state_dict({k: v for k, v in state_dict.items() if 'mask' not in k}, strict=True)
        if args.lora_name != 'None':
            apply_lora(model)
            load_lora(model, f'./{args.out_dir}/lora/{args.lora_name}_{args.dim}.pth')
    else:
        transformers_model_path = '../Weights/MiniMind2'
        tokenizer = AutoTokenizer.from_pretrained(transformers_model_path)
        model = AutoModelForCausalLM.from_pretrained(transformers_model_path, trust_remote_code=True)
    print(f'MiniMind模型参数量: {sum(p.numel() for p in model.parameters() if p.requires_grad) / 1e6:.2f}M(illion)')
    return model.eval().to(args.device), tokenizer

2.2.4 Create the prompt API

Code:

def get_prompt_datas(args):
    if args.model_mode == 0:
        # Text-continuation ability of the pretrain model (it cannot hold a conversation)
        prompt_datas = [
            '马克思主义基本原理',
            '人类大脑的主要功能',
            '万有引力原理是',
            '世界上最高的山峰是',
            '二氧化碳在空气中',
            '地球上最大的动物有',
            '杭州市的美食有'
        ]
    else:
        if args.lora_name == 'None':
            # General conversation questions
            prompt_datas = [
                '请介绍一下自己。',
                '你更擅长哪一个学科?',
                '鲁迅的《狂人日记》是如何批判封建礼教的?',
                '我咳嗽已经持续了两周,需要去医院检查吗?',
                '详细的介绍光速的物理概念。',
                '推荐一些杭州的特色美食吧。',
                '请为我讲解“大语言模型”这个概念。',
                '如何理解ChatGPT?',
                'Introduce the history of the United States, please.'
            ]
        else:
            # Domain-specific questions
            lora_prompt_datas = {
                'lora_identity': [
                    "你是ChatGPT吧。",
                    "你叫什么名字?",
                    "你和openai是什么关系?"
                ],
                'lora_medical': [
                    '我最近经常感到头晕,可能是什么原因?',
                    '我咳嗽已经持续了两周,需要去医院检查吗?',
                    '服用抗生素时需要注意哪些事项?',
                    '体检报告中显示胆固醇偏高,我该怎么办?',
                    '孕妇在饮食上需要注意什么?',
                    '老年人如何预防骨质疏松?',
                    '我最近总是感到焦虑,应该怎么缓解?',
                    '如果有人突然晕倒,应该如何急救?'
                ],
            }
            prompt_datas = lora_prompt_datas[args.lora_name]
    return prompt_datas

2.2.5 Load the hyperparameters, model, and tokenizer, and select an input prompt

Code:

args = ARG()

model, tokenizer = init_model(args)

prompts = get_prompt_datas(args)

prompt = prompts[3]

print(prompt)

Output:

MiniMind模型参数量: 25.83M(illion)

我咳嗽已经持续了两周,需要去医院检查吗?

2.2.6 Format the message

Code:

messages = []

messages.append({"role": "user", "content": prompt})

print(messages)

Output:

[{'role': 'user', 'content': '我咳嗽已经持续了两周,需要去医院检查吗?'}]

2.2.7 Apply the chat template

Code:

template = tokenizer.apply_chat_template(messages, tokenize=False, add_generation_prompt=True)

print(template)

Output:

<s>system

你是 MiniMind,是一个有用的人工智能助手。</s>

<s>user

我咳嗽已经持续了两周,需要去医院检查吗?</s>

<s>assistant

2.2.8 Truncate to the maximum text length

Because the prompt does not exceed the maximum input length here, nothing changes.
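One detail worth seeing in isolation: the template is a plain string at this point, so a negative slice of the form text[-max_len + 1:] counts characters, not tokens, and keeps at most max_len - 1 of them from the end. A small sketch (the helper name keep_tail is made up for illustration):

```python
def keep_tail(text, max_len):
    """Mirror of text[-max_len + 1:], which keeps at most max_len - 1 trailing characters."""
    return text[-max_len + 1:]


short = "<s>user\nhello</s>\n<s>assistant\n"
print(keep_tail(short, 8192) == short)  # shorter than the limit, so unchanged
print(keep_tail("abcdefgh", 4))         # only the last 3 characters survive
```

When the conversation is longer than the limit, the oldest characters at the front are the ones that get dropped.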

Code:

new_prompt = template[-args.max_seq_len + 1:] if args.model_mode != 0 else (tokenizer.bos_token + prompt)

print(new_prompt)

Output:

<s>system

你是 MiniMind,是一个有用的人工智能助手。</s>

<s>user

我咳嗽已经持续了两周,需要去医院检查吗?</s>

<s>assistant

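2.2.9 Text to token IDs

The tokenizer splits the prompt above into 52 tokens and produces the corresponding token IDs. Note that, despite its name, embedding_tensor holds token IDs at this point, not embeddings.

Code: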

embedding_tensor = tokenizer(new_prompt)['input_ids']

print(len(embedding_tensor))

print(embedding_tensor)

Output:

52

[1, 85, 736, 201, 608, 345, 562, 261, 75, 47, 807, 270, 1589, 400, 411, 1946, 740, 1728, 945, 1184, 286, 2, 201, 1, 320, 275, 201, 397, 312, 114, 6339, 124, 2434, 3011, 446, 1346, 2055, 270, 590, 1473, 2037, 4238, 3351, 2235, 814, 2, 201, 1, 1078, 538, 501, 201]

2.2.10 Remove padding tokens and add a batch dimension

Code:

x = torch.tensor(embedding_tensor, device=args.device).unsqueeze(0)

print(x.shape)

non_pad = x[0][x[0] != tokenizer.pad_token_id]

print(non_pad.shape)

non_pad = non_pad.unsqueeze(0)

print(non_pad.shape)

Output:

torch.Size([1, 52])

torch.Size([52])

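torch.Size([1, 52])

2.2.11 Inference

The returned out has three parts: logits, aux_loss, and past_key_values. logits is the model's output tensor, and past_key_values holds the cached K and V tensors that speed up inference. Because the model has 8 transformer blocks, past_key_values has length 8.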
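To picture why the cache has one entry per block and how it grows, here is a toy sketch. It assumes each layer keeps its own pair of key and value lists that are extended by one entry per processed token; the tuples below are placeholders for real tensors, and the step function is hypothetical, not MiniMind code.

```python
def step(new_tokens, cache, n_layers=8):
    """Append per-token key/value placeholders to each layer's cache."""
    if cache is None:
        cache = [([], []) for _ in range(n_layers)]
    for keys, values in cache:
        keys.extend(("k", t) for t in new_tokens)    # stand-ins for real key tensors
        values.extend(("v", t) for t in new_tokens)  # stand-ins for real value tensors
    return cache


cache = step(list(range(52)), None)  # first pass: all 52 prompt tokens at once
cache = step([835], cache)           # later pass: only the newly sampled token
print(len(cache), len(cache[0][0]))
```

The point of the cache is that later passes only need to process the new token, because the keys and values of earlier positions are already stored.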

Code:

start, first_seq, past_kvs = non_pad.shape[1], True, None

print(start, first_seq, past_kvs)

out, first_seq = model(non_pad, past_key_values=past_kvs, use_cache=True, **{}), False

print(out.logits.shape)

print(len(out.past_key_values))

Output:

52 True None

torch.Size([1, 52, 6400])

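8

2.2.12 Get the last token's logits and the K/V cache

The logits at the last position are the model's prediction for the next token, and the softmax that follows only uses them, so we slice out the last position of the output.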

Code:

logits, past_kvs = out.logits[:, -1, :], out.past_key_values

print(logits.shape)

print(len(past_kvs))

Output:

torch.Size([1, 6400])

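8

2.2.13 Adjust the randomness of the output

The first line below divides the logits of tokens that already appear in the context by 1.0; this plays the role of a repetition penalty, and with a value of 1.0 it changes nothing. The 0.85 in the second line is the temperature hyperparameter: a temperature below 1 sharpens the distribution, while a temperature above 1 flattens it and makes the output more random.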
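The effect of the temperature is easiest to see on a tiny example. The sketch below uses made-up logits and plain Python; the 1e-9 term mirrors the guard against division by zero used in the article's code.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def apply_temperature(logits, temperature):
    """Divide every logit by the temperature."""
    return [v / (temperature + 1e-9) for v in logits]


logits = [2.0, 1.0, 0.0]  # made-up values for illustration
base = softmax(logits)
sharp = softmax(apply_temperature(logits, 0.85))
flat = softmax(apply_temperature(logits, 1.5))
print(base[0], sharp[0], flat[0])
```

The most likely token gains probability at temperature 0.85 and loses it at 1.5, which is why higher temperatures give more varied samples.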

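Code:

logits[:, list(set(non_pad.tolist()[0]))] /= 1.0

logits /= (0.85 + 1e-9)

2.2.14 Top-p random sampling

Here is a brief description of how top-p sampling works. The input is the logits of the last token, with shape (1, 6400). First, sort the last dimension in descending order, apply softmax, and compute the cumulative probability. For example, if the sorted probabilities are [0.5, 0.2, 0.1, 0.1, 0.1], the cumulative probabilities are [0.5, 0.7, 0.8, 0.9, 1.0]. Next, find the first position where the cumulative probability exceeds top_p, keep every token up to and including that position, and set the logits of all remaining tokens to negative infinity. With top_p = 0.85, the first four tokens are kept (cumulative probability 0.9) and the last one is dropped. Then apply softmax again and draw one token ID from the resulting distribution as the next predicted token. The goal is to give the output some randomness, which makes the model's responses more varied.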
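Before going through the PyTorch version step by step, the same procedure can be sketched in plain Python on the five-token example. This is an illustrative rewrite under the assumption that renormalizing the kept probabilities is equivalent to masking logits with negative infinity and applying softmax again; the helper name top_p_filter is made up.

```python
import random


def top_p_filter(probs, top_p):
    """Return renormalized probabilities with everything outside the kept set at 0."""
    order = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)
    kept, cumulative = [], 0.0
    for i in order:
        kept.append(i)
        cumulative += probs[i]
        if cumulative > top_p:  # the token that crosses the threshold is still kept
            break
    total = sum(probs[i] for i in kept)
    return [probs[i] / total if i in kept else 0.0 for i in range(len(probs))]


probs = [0.5, 0.2, 0.1, 0.1, 0.1]
filtered = top_p_filter(probs, top_p=0.85)
rng = random.Random(0)
next_id = rng.choices(range(len(filtered)), weights=filtered, k=1)[0]
print(filtered, next_id)
```

The last token ends up with probability 0, so it can never be sampled, while the other four share the full probability mass in their original proportions.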

Code 1:

# Sort logits along the last dimension in descending order; returns the sorted tensor and the index each sorted value had in the original tensor
sorted_logits, sorted_indices = torch.sort(logits, descending=True, dim=-1)
print(sorted_logits.shape)
print(sorted_indices)

Output 1:

torch.Size([1, 6400])

tensor([[ 453, 368, 345, ..., 4166, 252, 5999]])

Code 2:

# Apply softmax over the last dimension of the sorted tensor to get probabilities with the same shape as logits, (1, 6400)
import torch.nn.functional as F
sorted_probs = F.softmax(sorted_logits, dim=-1)
print(sorted_probs.shape)
print(sorted_probs)

Output 2:

torch.Size([1, 6400])

tensor([[1.2660e-01, 1.2199e-01, 1.1748e-01, ..., 3.4979e-13, 3.1038e-13, 2.8181e-13]], grad_fn=<SoftmaxBackward0>)

Code 3:

# Compute the cumulative sum of the probabilities along the last dimension
cumulative_probs = torch.cumsum(sorted_probs, dim=-1)
print(cumulative_probs)

Output 3:

tensor([[0.1266, 0.2486, 0.3661, ..., 1.0000, 1.0000, 1.0000]], grad_fn=<CumsumBackward0>)

Code 4:

# Build a mask that marks positions whose cumulative probability exceeds top_p
sorted_indices_to_remove = cumulative_probs > 0.85
print(sorted_indices_to_remove.shape)

Output 4:

torch.Size([1, 6400])

Code 5:

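# Shift the mask one position to the right so that the token which first crosses top_p is kept;
# masking it directly could leave no token at all when the top token alone exceeds top_p
sorted_indices_to_remove[:, 1:] = sorted_indices_to_remove[:, :-1].clone()
# Set the first position to False so that at least one token is always kept
sorted_indices_to_remove[:, 0] = False
print(sorted_indices_to_remove.shape)

Output 5:

torch.Size([1, 6400])

Code 6: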

# Use scatter to map sorted_indices_to_remove back to the original order of logits:
# scatter writes each boolean flag to the position given by sorted_indices.
# indices_to_remove is a boolean tensor with the same shape as logits that marks which logits should be removed
indices_to_remove = sorted_indices_to_remove.scatter(1, sorted_indices, sorted_indices_to_remove)
print(indices_to_remove.shape)

Output 6:

torch.Size([1, 6400])

Code 7:

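# Set the logits at the removed positions to negative infinity
logits[indices_to_remove] = -float('Inf')
print(logits.shape)

Code 8:

# Apply softmax again now that the low-probability tail has been removed
logits_softmax = F.softmax(logits, dim=-1)
print(logits_softmax.shape)

Code 9: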

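# multinomial draws a weighted random sample according to the probabilities; num_samples=1 draws one sample per row and returns the index of the selected entry
input_ids_next = torch.multinomial(logits_softmax, num_samples=1)
print(input_ids_next.shape)
print(input_ids_next)

Output 9:

torch.Size([1, 1])

tensor([[835]])

2.2.15 Append the predicted token ID

The merged input_ids serve as the context for the next round of prediction; inference stops when the predicted token ID is the stop token ID.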
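The loop that repeats this step can be sketched as follows. Everything here is a toy: toy_next_token is a hypothetical stand-in for the forward pass plus sampling, and the stop ID of 2 is an assumption based on `</s>` appearing as ID 2 in the tokenized prompt above.

```python
import random

EOS_ID = 2  # assumed stop token ID, inferred from the tokenized prompt


def toy_next_token(context, rng):
    """Hypothetical stand-in for the model forward pass plus sampling."""
    return EOS_ID if len(context) >= 8 else rng.randrange(3, 100)


def generate(prompt_ids, max_new_tokens, rng):
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        next_id = toy_next_token(ids, rng)
        ids.append(next_id)  # same role as torch.cat((ids, next), dim=1)
        if next_id == EOS_ID:
            break
    return ids


out = generate([1, 85, 736], max_new_tokens=20, rng=random.Random(0))
print(out)
```

Each iteration extends the context by exactly one token, and the loop ends either on the stop token or when the token budget runs out.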

Code:

# Append the sampled token index to the existing context indices
print(non_pad.shape)
input_ids = torch.cat((non_pad, input_ids_next), dim=1)
print(input_ids.shape)

Output:

torch.Size([1, 52])

torch.Size([1, 53])

2.2.16 Decode the predicted token ID to text

This completes one round of inference.

Code 1:

# The predicted token ID is 835
new_token = input_ids[:, start:]
print(new_token)

Output 1:

tensor([[835]])

Code 2:

# Pass this token ID to the tokenizer to decode it into text
text = tokenizer.decode([835], skip_special_tokens=True)
print(text)

Output 2:

确

3. Summary

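This post covered the model architecture of a large language model and the full inference flow, including the preprocessing of the text input and the postprocessing of the model output. It walked through a complete inference pass in detail, and I hope it helps you understand how large models work. This series will continue to build on the minimind project and, starting from the code, share the low-level details of pretraining, fine-tuning, distillation, quantization, and more. If you found this helpful, feel free to follow for future posts in the series. Thanks!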