Integrating the FFM Module from DECS-Net into RT-DETR

RT-DETR tutorial: RT-DETR Usage Tutorial

RT-DETR improvements roundup: RT-DETR Update Roundup

《A dual encoder crack segmentation network with Haar wavelet-based high–low frequency attention》

1. Module Overview

Paper: https://www.sciencedirect.com/science/article/abs/pii/S0957417424018177

Code: https://github.com/zZhiG/DECS-Net?tab=readme-ov-file

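Paper at a glance:

Cracks are an early sign of severe structural damage and an important indicator in structural health evaluation and monitoring. However, complex background interference makes the segmentation of small cracks a highly challenging task. To address this, the paper builds a dual encoder crack segmentation network (DECS-Net) based on a convolutional neural network (CNN) and a Transformer to detect cracks automatically. First, it proposes a high-low frequency attention (HLA) mechanism that uses the Haar wavelet to extract the approximation and detail components of the signal, which are then processed further to obtain low-frequency and high-frequency features. It also designs a Locally Enhanced Feedforward Network (LEFN) to improve the network's perception of local information. Second, it proposes a Feature Fusion Module (FFM) that fuses the local features extracted by the CNN encoder with the global context features extracted by the Transformer encoder, achieving cross-domain fusion and correlation enhancement.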
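To make the "approximation and detail components" concrete, here is a minimal sketch of a single-level 2D Haar decomposition in plain Python. It is not the DECS-Net implementation, and the HLA mechanism does considerably more than this. The function name `haar_dwt2` and the list-of-rows image format are assumptions made for illustration only.

```python
def haar_dwt2(image):
    """Single-level 2D Haar transform of an image given as a list of rows.

    Height and width must be even. Returns four half-size maps:
    approx (low frequency) and three detail maps (high frequency).
    """
    h, w = len(image), len(image[0])
    if h % 2 or w % 2:
        raise ValueError("height and width must be even")
    approx, d_width, d_height, d_diag = [], [], [], []
    for i in range(0, h, 2):
        rows = ([], [], [], [])
        for j in range(0, w, 2):
            # One 2x2 block: a b / c d
            a, b = image[i][j], image[i][j + 1]
            c, d = image[i + 1][j], image[i + 1][j + 1]
            rows[0].append((a + b + c + d) / 2)  # local average: approximation
            rows[1].append((a - b + c - d) / 2)  # change along the width
            rows[2].append((a + b - c - d) / 2)  # change along the height
            rows[3].append((a - b - c + d) / 2)  # diagonal change
        approx.append(rows[0])
        d_width.append(rows[1])
        d_height.append(rows[2])
        d_diag.append(rows[3])
    return approx, d_width, d_height, d_diag


# A 2x2 patch containing a vertical edge (dark left column, bright right column).
approx, d_width, d_height, d_diag = haar_dwt2([[0.0, 1.0], [0.0, 1.0]])
print(approx, d_width, d_height, d_diag)
```

A flat region produces zeros in all three detail maps, while an edge such as a thin crack shows up in at least one of them. This is why the detail components are a natural source of high-frequency features.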

Summary:

⭐⭐The derived module in this post is released only in the paid group; earlier free tutorials are available at the link below.⭐⭐

RT-DETR update roundup (includes free tutorials): https://xy2668825911.blog.csdn.net/article/details/143696113

2. Derived Fusion Module

2.1 Code

# https://blog.csdn.net/StopAndGoyyy?spm=1011.2124.3001.5343
# A dual encoder crack segmentation network with Haar wavelet-based high–low frequency attention
import torch
import torch.nn as nn


class DSC(nn.Module):
    """Depthwise separable conv: depthwise conv followed by a 1x1 pointwise conv."""

    def __init__(self, c_in, c_out, k_size=3, stride=1, padding=1):
        super().__init__()
        self.c_in = c_in
        self.c_out = c_out
        self.dw = nn.Conv2d(c_in, c_in, k_size, stride, padding, groups=c_in)
        self.pw = nn.Conv2d(c_in, c_out, 1, 1)

    def forward(self, x):
        out = self.dw(x)
        out = self.pw(out)
        return out


class IDSC(nn.Module):
    """Inverted DSC: 1x1 pointwise conv first, then depthwise conv."""

    def __init__(self, c_in, c_out, k_size=3, stride=1, padding=1):
        super().__init__()
        self.c_in = c_in
        self.c_out = c_out
        self.dw = nn.Conv2d(c_out, c_out, k_size, stride, padding, groups=c_out)
        self.pw = nn.Conv2d(c_in, c_out, 1, 1)

    def forward(self, x):
        out = self.pw(x)
        out = self.dw(out)
        return out


class FFM(nn.Module):
    def __init__(self, dim1):
        super().__init__()
        self.trans_c = nn.Conv2d(dim1, dim1, 1)
        self.avg = nn.AdaptiveAvgPool2d(1)
        self.li1 = nn.Linear(dim1, dim1)
        self.li2 = nn.Linear(dim1, dim1)

        self.qx = DSC(dim1, dim1)
        self.kx = DSC(dim1, dim1)
        self.vx = DSC(dim1, dim1)
        self.projx = DSC(dim1, dim1)

        self.qy = DSC(dim1, dim1)
        self.ky = DSC(dim1, dim1)
        self.vy = DSC(dim1, dim1)
        self.projy = DSC(dim1, dim1)

        self.concat = nn.Conv2d(dim1 * 2, dim1, 1)

        self.fusion = nn.Sequential(
            IDSC(dim1 * 4, dim1),
            nn.BatchNorm2d(dim1),
            nn.GELU(),
            DSC(dim1, dim1),
            nn.BatchNorm2d(dim1),
            nn.GELU(),
            nn.Conv2d(dim1, dim1, 1),
            nn.BatchNorm2d(dim1),
            nn.GELU(),
        )

    def forward(self, x1):
        x, y = x1  # two feature maps of identical shape (B, C, H, W)

        # Flatten y to tokens of shape (B, N, C). The code as originally posted used
        # y.reshape(B, -1, C), which reorders elements instead of transposing them.
        y = y.flatten(2).transpose(1, 2)
        B, N, C = y.shape
        H = W = int(N ** 0.5)  # assumes a square feature map

        x = self.trans_c(x)
        y = y.reshape(B, H, W, C).permute(0, 3, 1, 2)

        # Channel weights from global average pooling
        avg_x = self.avg(x).permute(0, 2, 3, 1)
        avg_y = self.avg(y).permute(0, 2, 3, 1)
        x_weight = self.li1(avg_x)
        y_weight = self.li2(avg_y)
        x = x.permute(0, 2, 3, 1) * x_weight
        y = y.permute(0, 2, 3, 1) * y_weight

        # Element-wise interaction between the two weighted branches
        out1 = x * y
        out1 = out1.permute(0, 3, 1, 2)

        x = x.permute(0, 3, 1, 2)
        y = y.permute(0, 3, 1, 2)

        # Cross attention in 4x4 windows with 8 heads: queries from y, keys/values from x
        qy = (self.qy(y).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))
        kx = (self.kx(x).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))
        vx = (self.vx(x).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))

        attnx = (qy @ kx.transpose(-2, -1)) * (C ** -0.5)
        attnx = attnx.softmax(dim=-1)
        attnx = (attnx @ vx).transpose(2, 3).reshape(B, H // 4, W // 4, 4, 4, C)
        attnx = attnx.transpose(2, 3).reshape(B, H, W, C).permute(0, 3, 1, 2)
        attnx = self.projx(attnx)

        # Cross attention in the other direction: queries from x, keys/values from y
        qx = (self.qx(x).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))
        ky = (self.ky(y).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))
        vy = (self.vy(y).reshape(B, 8, C // 8, H // 4, 4, W // 4, 4)
              .permute(0, 3, 5, 1, 4, 6, 2).reshape(B, N // 16, 8, 16, C // 8))

        attny = (qx @ ky.transpose(-2, -1)) * (C ** -0.5)
        attny = attny.softmax(dim=-1)
        attny = (attny @ vy).transpose(2, 3).reshape(B, H // 4, W // 4, 4, 4, C)
        attny = attny.transpose(2, 3).reshape(B, H, W, C).permute(0, 3, 1, 2)
        attny = self.projy(attny)

        out2 = torch.cat([attnx, attny], dim=1)
        out2 = self.concat(out2)

        # Fuse both weighted inputs, their product, and the attention output
        out = torch.cat([x, y, out1, out2], dim=1)
        out = self.fusion(out)
        return out

2.2 Modify the YAML file (using a custom model as the example)

YAML file walkthrough: YOLO series ".yaml" file explained (CSDN blog)

Open rtdetr-l.yaml under ultralytics/cfg/models/rt-detr and replace the original module with the new one.
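For the edited YAML to build, the model parser also has to know about FFM. The FFM entry in the YAML passes an empty argument list, while `FFM.__init__` requires `dim1`, so the channel count must be filled in from the input layers. This post does not show that step. In Ultralytics the per-layer channel bookkeeping lives in `parse_model` (in `ultralytics/nn/tasks.py`), and the exact branch to add depends on the installed version. The sketch below therefore only illustrates the logic. `infer_ffm_args` is a hypothetical helper, not an Ultralytics function.

```python
def infer_ffm_args(ch, f):
    """Derive FFM's constructor args and output channels from its input layers.

    ch: output channels of the layers built so far (the list parse_model keeps).
    f:  the layer's `from` field in the YAML, e.g. [-2, -1].
    """
    if len(f) != 2:
        raise ValueError("FFM expects exactly two input layers")
    c_x, c_y = ch[f[0]], ch[f[1]]
    if c_x != c_y:
        raise ValueError(f"FFM inputs must have equal channels, got {c_x} and {c_y}")
    if c_x % 8:
        raise ValueError("channels must be divisible by FFM's 8 attention heads")
    # FFM(dim1) takes one argument, and its fusion block outputs dim1 channels.
    return [c_x], c_x


# Assumed channels of the two layers feeding FFM in this section's YAML:
# the upsampled Y4 and the 1x1-projected backbone feature, both 256.
ch = [256, 256]
args, c2 = infer_ffm_args(ch, [-2, -1])
print(args, c2)  # [256] 256
```

Unlike `Concat`, which sums its input channels, FFM keeps the channel count unchanged, so the layer that follows it sees 256 input channels rather than 512.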

# Ultralytics YOLO 🚀, AGPL-3.0 license
# RT-DETR-l object detection model with P3-P5 outputs. For details see https://docs.ultralytics.com/models/rtdetr
# ⭐⭐Powered by https://blog.csdn.net/StopAndGoyyy, technical support QQ: 2668825911⭐⭐

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolov8n-cls.yaml' will call yolov8-cls.yaml with scale 'n'
  # [depth, width, max_channels]
  l: [1.00, 1.00, 512]
  # n: [ 0.33, 0.25, 1024 ]
  # s: [ 0.33, 0.50, 1024 ]
  # m: [ 0.67, 0.75, 768 ]
  # l: [ 1.00, 1.00, 512 ]
  # x: [ 1.00, 1.25, 512 ]

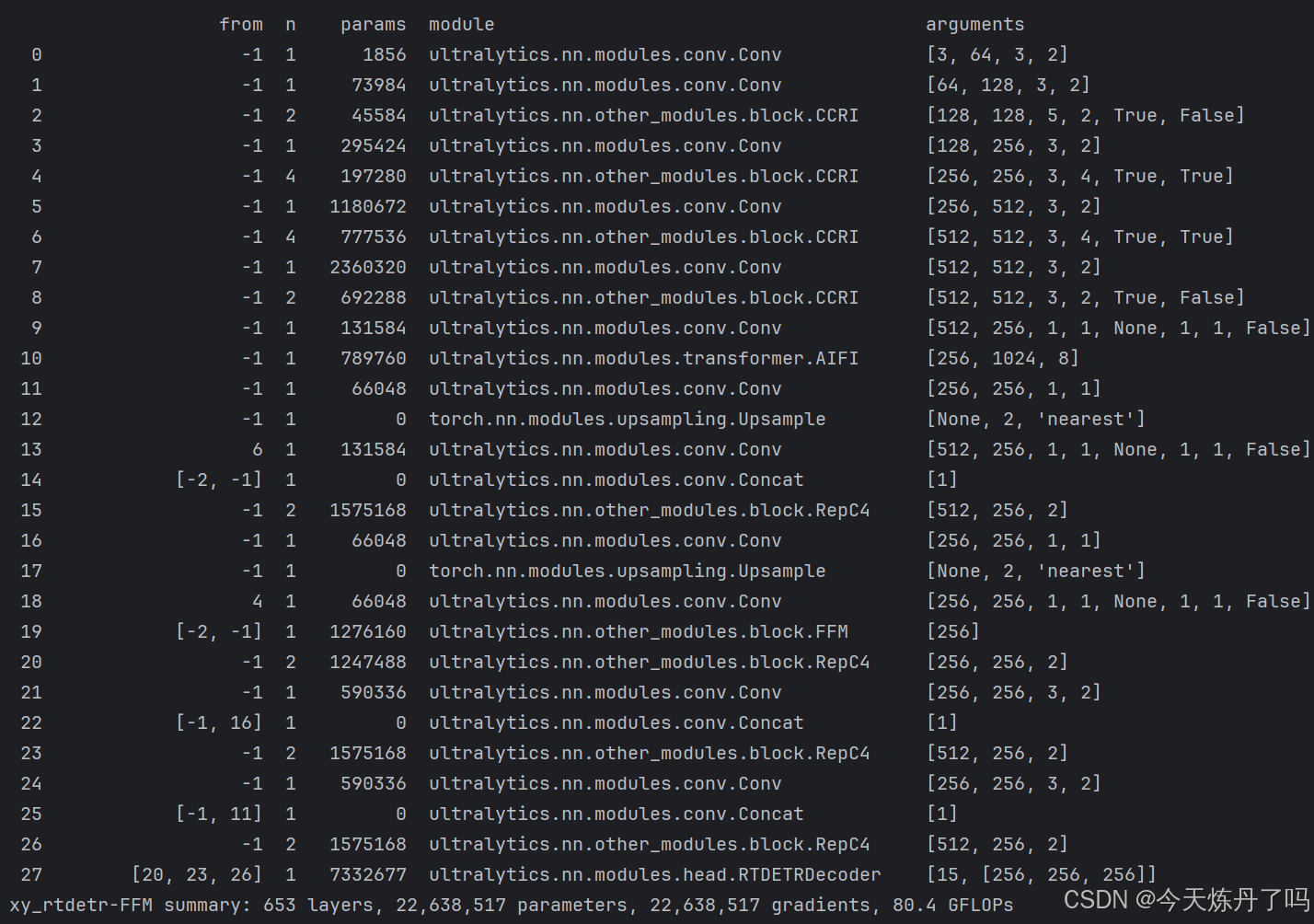

backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, CCRI, [128, 5, True, False]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 4, CCRI, [256, 3, True, True]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 4, CCRI, [512, 3, True, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, CCRI, [1024, 3, True, False]]

head:
  - [-1, 1, Conv, [256, 1, 1, None, 1, 1, False]] # 9 input_proj.2
  - [-1, 1, AIFI, [1024, 8]]
  - [-1, 1, Conv, [256, 1, 1]] # 11, Y5, lateral_convs.0

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [6, 1, Conv, [256, 1, 1, None, 1, 1, False]] # 13 input_proj.1
  - [[-2, -1], 1, Concat, [1]]
  - [-1, 2, RepC4, [256]] # 15, fpn_blocks.0
  - [-1, 1, Conv, [256, 1, 1]] # 16, Y4, lateral_convs.1

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [4, 1, Conv, [256, 1, 1, None, 1, 1, False]] # 18 input_proj.0
  - [[-2, -1], 1, FFM, []] # 19, FFM replaces Concat: fuses upsampled Y4 with backbone P3
  - [-1, 2, RepC4, [256]] # X3 (20), fpn_blocks.1

  - [-1, 1, Conv, [256, 3, 2]] # 21, downsample_convs.0
  - [[-1, 16], 1, Concat, [1]] # cat Y4
  - [-1, 2, RepC4, [256]] # F4 (23), pan_blocks.0

  - [-1, 1, Conv, [256, 3, 2]] # 24, downsample_convs.1
  - [[-1, 11], 1, Concat, [1]] # cat Y5
  - [-1, 2, RepC4, [256]] # F5 (26), pan_blocks.1

  - [[20, 23, 26], 1, RTDETRDecoder, [nc]] # Detect(P3, P4, P5)
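In this head, FFM receives two 256-channel maps at the P3/8 scale. Its forward pass hard-codes 8 attention heads and 4x4 windows and derives H and W from the square root of the token count, so not every input size will reshape cleanly. The following sketch checks those constraints before training. `check_ffm_input` is a hypothetical helper written for this post's FFM, and it assumes a square input image.

```python
def check_ffm_input(imgsz, stride, channels, heads=8, window=4):
    """Return (side, num_windows) for the square map fed to FFM, or raise ValueError.

    imgsz:    side length of the (square) input image
    stride:   downsampling factor of the feature level where FFM sits
    channels: channel count of both FFM inputs
    """
    if imgsz % stride:
        raise ValueError(f"imgsz {imgsz} is not a multiple of stride {stride}")
    side = imgsz // stride
    if side % window:
        raise ValueError(f"feature map side {side} is not divisible by window size {window}")
    if channels % heads:
        raise ValueError(f"{channels} channels cannot be split across {heads} heads")
    return side, (side // window) ** 2


# imgsz=640 at stride 8 gives an 80x80 map, split into 20x20 windows of 16 tokens each.
print(check_ffm_input(640, 8, 256))  # (80, 400)
```

An image size such as 648 yields an 81x81 map at stride 8 and fails the window check, so image sizes that are multiples of 32 (stride 8 times window 4) are the safe choice for this placement.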


2.3 Modify the train.py file

Create a Train_RT script for training.

from ultralytics.models import RTDETR
import os

os.environ['KMP_DUPLICATE_LIB_OK'] = 'True'

if __name__ == '__main__':
    model = RTDETR(model='ultralytics/cfg/models/rt-detr/rtdetr-l.yaml')
    # model.load('yolov8n.pt')
    model.train(data='./data.yaml', epochs=2, batch=1, device='0', imgsz=640, workers=2, cache=False,
                amp=True, mosaic=False, project='runs/train', name='exp')

Fill in the path to the modified YAML file in the train.py script, then run it to start training.