P25: Diabetes Exploration and Prediction with LSTM

- 🍨 This post is a study log from the 🔗365-Day Deep Learning Training Camp

- 🍖 Original author: K同学啊

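I. Related Techniques

1. LSTM Basics

LSTM (Long Short-Term Memory) is a variant of the RNN (Recurrent Neural Network). It adds a gated memory cell that mainly mitigates the vanishing-gradient problem of standard RNNs. Exploding gradients are usually handled separately, for example by gradient clipping. This makes LSTM well suited to sequence data with long-range dependencies.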

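Components:

- Forget gate: decides which information to discard from the cell state. A sigmoid outputs values between 0 and 1 that set how much of each memory entry is kept (near 1) or forgotten (near 0).
- Input gate: decides which information to update. A sigmoid controls how much is written. It is combined with a tanh layer that produces new candidate memory content.
- Output gate: decides what to output. The sigmoid result filters the (tanh-squashed) cell state to produce the hidden state.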
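The three gates can be written out directly. The NumPy sketch below runs one LSTM time step under the standard formulation. It stacks the four gate blocks in the order input, forget, candidate, output, which is the order PyTorch documents for `weight_ih_l0`. The helper name `lstm_step` and the random weights are illustrative only.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def lstm_step(x, h_prev, c_prev, W, U, b):
    """One LSTM time step (hypothetical helper).

    x: (input_size,), h_prev / c_prev: (hidden,)
    W: (4*hidden, input_size), U: (4*hidden, hidden), b: (4*hidden,)
    Gate blocks are stacked as [input, forget, candidate, output].
    """
    hidden = h_prev.shape[0]
    z = W @ x + U @ h_prev + b
    i = sigmoid(z[0:hidden])               # input gate: how much new content to write
    f = sigmoid(z[hidden:2 * hidden])      # forget gate: how much old memory to keep
    g = np.tanh(z[2 * hidden:3 * hidden])  # candidate memory content
    o = sigmoid(z[3 * hidden:4 * hidden])  # output gate: how much memory to expose
    c = f * c_prev + i * g                 # updated cell state
    h = o * np.tanh(c)                     # new hidden state
    return h, c

rng = np.random.default_rng(0)
input_size, hidden = 13, 4
W = rng.normal(scale=0.1, size=(4 * hidden, input_size))
U = rng.normal(scale=0.1, size=(4 * hidden, hidden))
b = np.zeros(4 * hidden)
h, c = np.zeros(hidden), np.zeros(hidden)
for t in range(3):  # run a short sequence of 3 random time steps
    h, c = lstm_step(rng.normal(size=input_size), h, c, W, U, b)
print(h.shape, c.shape)
```

With all weights at zero, every sigmoid gate outputs 0.5 and the candidate is 0. The cell state is therefore simply halved at each step, which is a quick way to sanity-check the wiring.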

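Typical applications:

- Language modeling and text generation: for example, sentence auto-completion and text generation.
- Speech recognition: converting speech signals into text.
- Time-series forecasting: for example, stock price prediction and weather forecasting.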

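2. Core PyTorch Components

PyTorch is an open-source machine learning library widely used for deep learning. The example relies on the following core components:

nn.Module:

- The base class for all neural network modules; custom models subclass it.
- Subclasses implement the forward method, which defines the forward-pass logic.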

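DataLoader and TensorDataset:

- DataLoader: loads data in mini-batches and supports shuffling and parallel loading with worker processes.
- TensorDataset: wraps tensors (here, features and labels) into a dataset indexed along the first dimension, so it can be used with DataLoader.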
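Here is a rough mental model of what DataLoader does with a TensorDataset; it is not DataLoader's actual implementation. The pure-Python sketch below splits sample indices into mini-batches, optionally shuffling them first. The helper name `minibatch_indices` is hypothetical. With the default `drop_last=False`, the number of batches is ceil(N / batch_size), and the last batch may be smaller.

```python
import math
import random

def minibatch_indices(n_samples, batch_size, shuffle=False, seed=None):
    """Yield lists of sample indices, one list per mini-batch (hypothetical helper)."""
    indices = list(range(n_samples))
    if shuffle:
        random.Random(seed).shuffle(indices)  # reorder samples once per pass
    for start in range(0, n_samples, batch_size):
        yield indices[start:start + batch_size]  # last batch may be smaller

batches = list(minibatch_indices(150, 64))
print(len(batches), [len(b) for b in batches])
print(len(batches) == math.ceil(150 / 64))
```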

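Optimizer and loss function:

- torch.optim.Adam: an optimizer with per-parameter adaptive learning rates, well suited to sparse gradients and non-stationary objectives.
- nn.CrossEntropyLoss: for multi-class classification, including a two-class problem with two output nodes. It combines log-softmax and negative log-likelihood, so the model should output raw logits without its own softmax layer.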
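The NumPy sketch below makes the two components concrete; it is illustrative only and is not PyTorch's implementation. The first part computes cross-entropy from raw logits via log-softmax, which is what nn.CrossEntropyLoss computes with its default mean reduction. The second part performs a single Adam update with the default betas (0.9, 0.999) and eps 1e-8. The helper names are hypothetical.

```python
import numpy as np

def cross_entropy(logits, targets):
    """Mean cross-entropy from raw logits (N, C) and integer class labels (N,)."""
    shifted = logits - logits.max(axis=1, keepdims=True)  # for numerical stability
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))  # log-softmax
    return -log_probs[np.arange(len(targets)), targets].mean()  # mean negative log-likelihood

def adam_step(param, grad, m, v, t, lr=1e-4, beta1=0.9, beta2=0.999, eps=1e-8):
    """One Adam update; t is the 1-based step count."""
    m = beta1 * m + (1 - beta1) * grad       # first-moment (mean) estimate
    v = beta2 * v + (1 - beta2) * grad ** 2  # second-moment (uncentered variance) estimate
    m_hat = m / (1 - beta1 ** t)             # bias correction
    v_hat = v / (1 - beta2 ** t)
    param = param - lr * m_hat / (np.sqrt(v_hat) + eps)
    return param, m, v

logits = np.array([[0.0, 0.0], [2.0, -1.0]])
labels = np.array([0, 0])
print(cross_entropy(logits, labels))

p, m, v = adam_step(np.array([1.0]), np.array([0.5]), np.zeros(1), np.zeros(1), t=1)
print(p)  # the first step moves the parameter by about lr against the gradient sign
```

The first-step behavior shows why Adam is often described as scale-invariant. After bias correction, the step size is roughly the learning rate regardless of the gradient's magnitude.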

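3. Key Points of the Experimental Design

Feature selection and engineering:

- This example removes "高密度脂蛋白胆固醇" (HDL cholesterol) because it is negatively correlated with the target. However, the sign of a correlation does not by itself make a feature noise. A strongly negative correlation carries as much predictive information as a strongly positive one, so such a removal should be justified by validation results, not by the sign alone.
- Use domain knowledge to select features that have predictive power for the target variable.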
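The NumPy sketch below illustrates the point about correlation sign. It uses synthetic data; the variable names and values are made up and are not taken from the diabetes dataset. It computes the Pearson correlation between a feature and a binary label. Negating the feature flips the sign of the correlation but leaves its magnitude, and hence its linear predictive information, unchanged. This is why correlation-based filters typically rank features by |r|.

```python
import numpy as np

rng = np.random.default_rng(42)
y = rng.integers(0, 2, size=500)    # synthetic binary label
x = 1.5 * y + rng.normal(size=500)  # synthetic feature that rises with the label
x_neg = -x                          # same information, opposite sign

r_pos = np.corrcoef(x, y)[0, 1]
r_neg = np.corrcoef(x_neg, y)[0, 1]
print(f"r(x, y) = {r_pos:.3f}, r(-x, y) = {r_neg:.3f}")
```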

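Data preprocessing:

- Standardization: rescaling features to comparable ranges usually speeds up convergence. It is not used in this example but is recommended; compute the scaling statistics on the training set only.
- Train/test split: 70%/30% or 80%/20% splits are common; this example uses 80%/20%.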
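The NumPy sketch below shows both preprocessing steps on synthetic data; the variable names are illustrative. It first makes an 80/20 random split. It then applies z-score standardization, computing the mean and standard deviation on the training portion only and reusing them for the test portion. sklearn's StandardScaler works the same way when it is fit on the training set and then used to transform the test set.

```python
import numpy as np

rng = np.random.default_rng(1)
# Synthetic features on very different scales
X = rng.normal(loc=[40.0, 5.0, 300.0], scale=[10.0, 1.0, 80.0], size=(1000, 3))

# 80/20 random split
perm = rng.permutation(len(X))
n_test = int(0.2 * len(X))
test_idx, train_idx = perm[:n_test], perm[n_test:]
X_train, X_test = X[train_idx], X[test_idx]

# Standardize with statistics from the training set only (avoids test-set leakage)
mean = X_train.mean(axis=0)
std = X_train.std(axis=0)
X_train_std = (X_train - mean) / std
X_test_std = (X_test - mean) / std

print(X_train_std.mean(axis=0).round(3), X_train_std.std(axis=0).round(3))
```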

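Model architecture:

- Input layer: its size matches the number of features (13 here).
- Hidden layers: the number of units is tuned to the data complexity and to experimental results (two LSTM layers of 200 units here).
- Output layer: one node per class (2 nodes for this binary task).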
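Two output nodes work for a binary task because of how the probabilities are formed. With nn.CrossEntropyLoss, the probability assigned to class 1 is the softmax over the two logits, which equals a sigmoid of the logit difference. A two-node head is therefore as expressive as a single-logit head trained with binary cross-entropy (nn.BCEWithLogitsLoss in PyTorch). The NumPy check below uses made-up logits.

```python
import numpy as np

def softmax(z):
    e = np.exp(z - z.max(axis=-1, keepdims=True))  # subtract max for stability
    return e / e.sum(axis=-1, keepdims=True)

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

logits = np.array([[0.3, 1.2], [2.0, -0.5], [0.0, 0.0]])  # (batch, 2), like the output of fc0
p1_softmax = softmax(logits)[:, 1]                 # P(class 1) from the two-node head
p1_sigmoid = sigmoid(logits[:, 1] - logits[:, 0])  # same value from the logit difference
print(p1_softmax, p1_sigmoid)
```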

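4. The Training Process

Forward pass:

- Feed the input data through the network to obtain predictions.
- Compute the loss between the predictions and the true labels.

Backward pass:

- Compute the gradient of the loss with respect to each parameter.
- Use the optimizer to update the network weights.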
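The forward, backward and update cycle has the same shape for any model. As an illustration only, the NumPy sketch below trains a logistic-regression classifier on synthetic data. This model is deliberately much simpler than the LSTM. Its hand-derived gradients stand in for loss.backward(), and a plain gradient-descent step stands in for optimizer.step().

```python
import numpy as np

rng = np.random.default_rng(0)
n, d = 400, 5
X = rng.normal(size=(n, d))
true_w = rng.normal(size=d)
y = (X @ true_w + 0.5 * rng.normal(size=n) > 0).astype(float)  # synthetic binary labels

w, b, lr, eps = np.zeros(d), 0.0, 0.1, 1e-12
losses = []
for epoch in range(100):
    # Forward pass: predicted probabilities and mean binary cross-entropy loss
    p = 1.0 / (1.0 + np.exp(-(X @ w + b)))
    loss = -np.mean(y * np.log(p + eps) + (1 - y) * np.log(1 - p + eps))
    losses.append(loss)
    # Backward pass: gradient of the mean loss with respect to w and b
    grad_logits = (p - y) / n
    grad_w = X.T @ grad_logits
    grad_b = grad_logits.sum()
    # Update step: move the parameters against the gradient
    w -= lr * grad_w
    b -= lr * grad_b

print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```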

Performance evaluation:

- Accuracy: the fraction of samples that are predicted correctly.
- Loss: measures the discrepancy between the predictions and the true values.

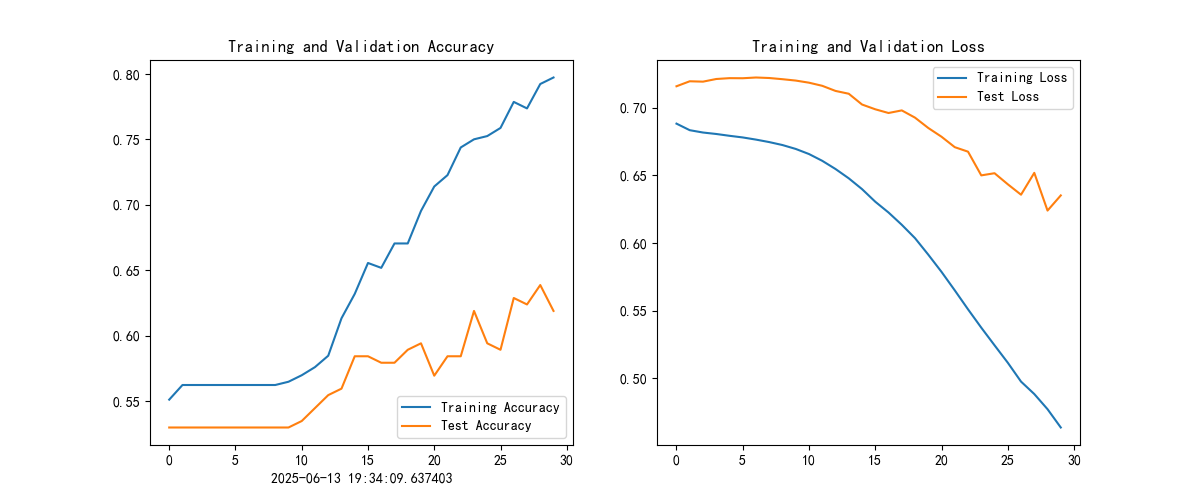
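The accuracy defined above is what the training and test code computes with `pred.argmax(1) == y`. Below is a NumPy equivalent on a hand-made batch; the logits and labels are illustrative.

```python
import numpy as np

logits = np.array([[2.0, 1.0],
                   [0.0, 3.0],
                   [1.0, 0.0],
                   [0.2, 0.1]])  # (batch, 2) raw model outputs
labels = np.array([0, 1, 1, 1])

preds = logits.argmax(axis=1)      # predicted class per sample
correct = (preds == labels).sum()  # number of correct predictions
accuracy = correct / len(labels)   # fraction of samples predicted correctly
print(preds, accuracy)
```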

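5. Visualizing Results

Plotting training curves:

- Use Matplotlib to plot the training and test accuracy and loss curves.
- Use the curves to judge how the model converges and whether it risks overfitting.

Interpreting the plots:

- Accuracy curves: the smaller the gap between training and test accuracy, the better the model generalizes.
- Loss curves: a steadily decreasing loss shows that the model is learning. A test loss that rises while the training loss keeps falling may indicate overfitting.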
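One common way to act on a rising test loss is early stopping. The sketch below uses a hypothetical helper that is not part of the original code. It is applied to the 30 Test_loss values printed in the training log later in this post. The lowest test loss occurs at epoch 29, and a small patience would have stopped training during the initial plateau.

```python
def early_stop_epoch(losses, patience):
    """Return the 1-based epoch at which training would stop, or None if it never stops.

    Training stops once the loss has failed to improve on its best value
    for `patience` consecutive epochs.
    """
    best = float("inf")
    epochs_without_improvement = 0
    for epoch, loss in enumerate(losses, start=1):
        if loss < best:
            best = loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch
    return None

# Test_loss values from the 30-epoch training log
test_losses = [0.716, 0.720, 0.719, 0.721, 0.722, 0.722, 0.722, 0.722, 0.721, 0.720,
               0.719, 0.716, 0.713, 0.710, 0.702, 0.699, 0.696, 0.698, 0.693, 0.685,
               0.679, 0.671, 0.668, 0.650, 0.652, 0.643, 0.636, 0.652, 0.624, 0.635]

best_epoch = min(range(len(test_losses)), key=test_losses.__getitem__) + 1
print("best epoch:", best_epoch)
print("stop with patience 3:", early_stop_epoch(test_losses, 3))
print("stop with patience 12:", early_stop_epoch(test_losses, 12))
```

In practice the stopping epoch is usually chosen on a separate validation split rather than on the test set. Selecting it on the test set makes the reported test score optimistic.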

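6. Summary

This diabetes-prediction case covered the basic principles of LSTM networks and the use of the PyTorch framework. It went from data preprocessing through model training to result evaluation, and each step involves important machine learning concepts. Readers can apply the same techniques to problems in other domains. In practice, repeated experimentation and tuning are the key to improving model performance: deep learning requires not only theory but also plenty of hands-on practice and patient debugging.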

II. Code Implementation

1. Importing Libraries

import warnings  # used below to hide warnings

import numpy as np
import pandas as pd
import matplotlib.pyplot as plt
import seaborn as sns
import torch
import torch.nn as nn
from sklearn.model_selection import train_test_split

# Use the GPU if one is available, otherwise fall back to the CPU
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

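2. Loading the Data

plt.rcParams['savefig.dpi'] = 500  # resolution of saved figures
plt.rcParams['figure.dpi'] = 500  # display resolution
plt.rcParams['font.sans-serif'] = ['SimHei']  # render Chinese labels correctly
warnings.filterwarnings("ignore")  # hide warnings

# Reading .xls files with pandas requires the xlrd package
DataFrame = pd.read_excel(r"E:\Code\pytorch_gpu\data\dia.xls")
print(DataFrame.head())

# Check for missing values (数据缺失值 = "missing values")
print('数据缺失值---------------------------------')
print(DataFrame.isnull().sum())

# Check for duplicate rows (数据集的重复值为 = "number of duplicate rows in the dataset")
print('数据重复值---------------------------------')
print(f'数据集的重复值为:{DataFrame.duplicated().sum()}')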

卡号 性别 年龄 高密度脂蛋白胆固醇 低密度脂蛋白胆固醇 ... 尿素氮 尿酸 肌酐 体重检查结果 是否糖尿病

0 18054421 0 38 1.25 2.99 ... 4.99 243.3 50 1 0

1 18054422 0 31 1.15 1.99 ... 4.72 391.0 47 1 0

2 18054423 0 27 1.29 2.21 ... 5.87 325.7 51 1 0

3 18054424 0 33 0.93 2.01 ... 2.40 203.2 40 2 0

4 18054425 0 36 1.17 2.83 ... 4.09 236.8 43 0 0

[5 rows x 16 columns]

数据缺失值---------------------------------

卡号 0

性别 0

年龄 0

高密度脂蛋白胆固醇 0

低密度脂蛋白胆固醇 0

极低密度脂蛋白胆固醇 0

甘油三酯 0

总胆固醇 0

脉搏 0

舒张压 0

高血压史 0

尿素氮 0

尿酸 0

肌酐 0

体重检查结果 0

是否糖尿病 0

dtype: int64

数据重复值---------------------------------

数据集的重复值为:0

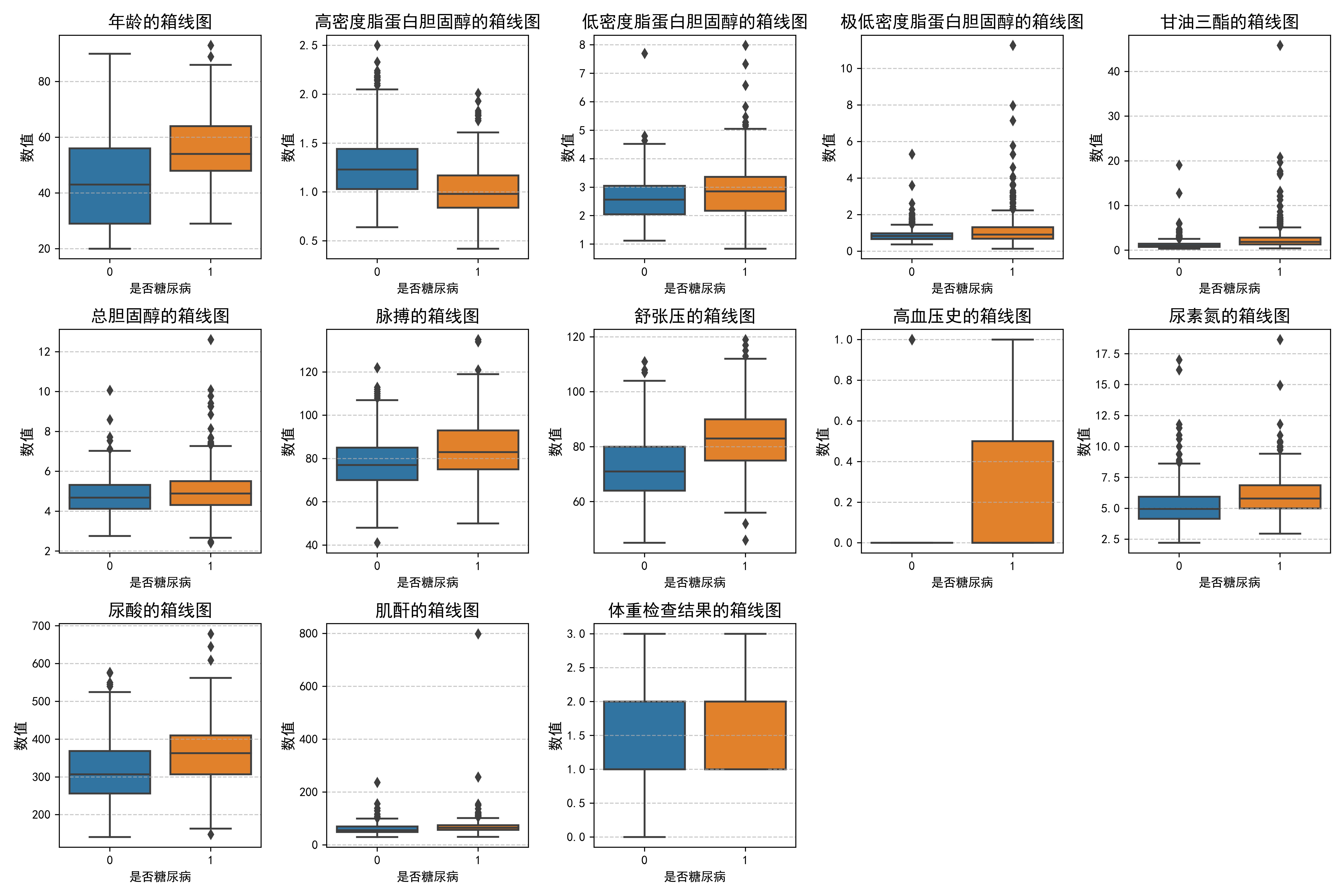

3. Analyzing the Data Distribution

# Features to compare between non-diabetic (0) and diabetic (1) patients
features = ['年龄', '高密度脂蛋白胆固醇', '低密度脂蛋白胆固醇', '极低密度脂蛋白胆固醇',
            '甘油三酯', '总胆固醇', '脉搏', '舒张压', '高血压史',
            '尿素氮', '尿酸', '肌酐', '体重检查结果']

plt.figure(figsize=(15, 10))
for i, col in enumerate(features, 1):
    plt.subplot(3, 5, i)
    # One box per value of the label column 是否糖尿病 ("has diabetes")
    sns.boxplot(x=DataFrame['是否糖尿病'], y=DataFrame[col])
    plt.title(f'{col}的箱线图', fontsize=14)  # "box plot of <feature>"
    plt.ylabel('数值', fontsize=12)  # "value"
    plt.grid(axis='y', linestyle='--', alpha=0.7)
plt.tight_layout()
plt.show()
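Each box in these plots summarizes one class's distribution of a feature. The NumPy sketch below shows what is drawn, assuming the default 1.5 x IQR whisker rule (seaborn's `whis=1.5`). It computes the quartiles, the whisker ends and the outliers for one group of values. The function name and sample data are illustrative.

```python
import numpy as np

def box_stats(values, whis=1.5):
    """Quartiles, whisker ends and outliers in the style of a default box plot (hypothetical helper)."""
    x = np.asarray(values, dtype=float)
    q1, median, q3 = np.percentile(x, [25, 50, 75])
    iqr = q3 - q1
    low_fence, high_fence = q1 - whis * iqr, q3 + whis * iqr
    inside = x[(x >= low_fence) & (x <= high_fence)]
    return {
        "q1": q1, "median": median, "q3": q3,
        "whisker_low": inside.min(),   # most extreme point inside the lower fence
        "whisker_high": inside.max(),  # most extreme point inside the upper fence
        "outliers": x[(x < low_fence) | (x > high_fence)].tolist(),
    }

stats = box_stats([1, 2, 3, 4, 5, 100])
print(stats)
```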

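4. Building the Dataset

# '高密度脂蛋白胆固醇' (HDL cholesterol) is negatively correlated with diabetes, so the
# author removes it from X, together with the card ID (卡号) and the label (是否糖尿病)
X = DataFrame.drop(['卡号', '是否糖尿病', '高密度脂蛋白胆固醇'], axis=1)
y = DataFrame['是否糖尿病']

# Standardization is disabled in this run (it would also need
# `from sklearn.preprocessing import StandardScaler`). If enabled, fit it on
# the training split only to avoid leaking test-set statistics.
# sc_X = StandardScaler()
# X = sc_X.fit_transform(X)

X = torch.tensor(np.array(X), dtype=torch.float32)
y = torch.tensor(np.array(y), dtype=torch.int64)

# 80/20 train/test split with a fixed random seed
train_X, test_X, train_y, test_y = train_test_split(X, y, test_size=0.2, random_state=1)

from torch.utils.data import TensorDataset, DataLoader

# Batches of 64; this run does not shuffle the training set
# (shuffle=True is the more common choice for training)
train_dl = DataLoader(TensorDataset(train_X, train_y), batch_size=64, shuffle=False)
test_dl = DataLoader(TensorDataset(test_X, test_y), batch_size=64, shuffle=False)

# train_X.shape, train_y.shape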

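5. Building the Model

class model_lstm(nn.Module):
    def __init__(self):
        super(model_lstm, self).__init__()
        self.lstm0 = nn.LSTM(input_size=13, hidden_size=200, num_layers=1, batch_first=True)
        self.lstm1 = nn.LSTM(input_size=200, hidden_size=200, num_layers=1, batch_first=True)
        self.fc0 = nn.Linear(200, 2)  # 200 hidden units -> 2 class logits

    def forward(self, x):
        # Note: x is 2-D, (batch, 13), which nn.LSTM reads as one unbatched
        # sequence (seq_len=batch, input_size=13), so the samples in a batch are
        # processed as a sequence. x.unsqueeze(1), shape (batch, 1, 13), plus
        # out[:, -1, :] would treat each sample independently.
        out, hidden1 = self.lstm0(x)
        out, _ = self.lstm1(out, hidden1)  # start from lstm0's final (h, c)
        out = self.fc0(out)
        return out

model = model_lstm().to(device)
print(model)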

model_lstm(
  (lstm0): LSTM(13, 200, batch_first=True)
  (lstm1): LSTM(200, 200, batch_first=True)
  (fc0): Linear(in_features=200, out_features=2, bias=True)
)
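As a sanity check on this architecture, the number of trainable parameters can be worked out by hand. The sketch below assumes PyTorch's nn.LSTM parameterization. Each layer has weight_ih of shape (4H, I), weight_hh of shape (4H, H), and two bias vectors, bias_ih and bias_hh, each of length 4H. The helper names are hypothetical.

```python
def lstm_params(input_size, hidden_size):
    """Parameters of a single-layer, unidirectional LSTM with both bias vectors."""
    gates = 4 * hidden_size  # input, forget, cell (candidate) and output gates
    return gates * input_size + gates * hidden_size + 2 * gates

def linear_params(in_features, out_features):
    """Parameters of a fully connected layer with a bias."""
    return in_features * out_features + out_features

total = lstm_params(13, 200) + lstm_params(200, 200) + linear_params(200, 2)
print(lstm_params(13, 200), lstm_params(200, 200), linear_params(200, 2), total)
```

With PyTorch available, `sum(p.numel() for p in model.parameters())` on the model above should report the same total.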

6. Training Function

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches, ceil(size / batch_size)
    train_loss, train_acc = 0, 0    # running loss and count of correct predictions

    for X, y in dataloader:  # a batch of feature rows and their labels
        X, y = X.to(device), y.to(device)

        # Forward pass and loss
        pred = model(X)          # network output (logits)
        loss = loss_fn(pred, y)  # gap between the output and the true labels

        # Backward pass
        optimizer.zero_grad()  # reset accumulated gradients
        loss.backward()        # compute gradients
        optimizer.step()       # update the parameters

        # Accumulate accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size          # fraction of correct predictions
    train_loss /= num_batches  # mean of the per-batch losses
    return train_acc, train_loss

7. Test Function

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches, ceil(size / batch_size)
    test_loss, test_acc = 0, 0

    # No gradient tracking during evaluation: saves memory and computation
    with torch.no_grad():
        for X, y in dataloader:
            X, y = X.to(device), y.to(device)

            # Forward pass and loss
            pred = model(X)
            loss = loss_fn(pred, y)

            test_loss += loss.item()
            test_acc += (pred.argmax(1) == y).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss
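The train and test functions divide the summed correct predictions by the number of samples. They divide the summed loss by the number of batches, so the reported loss is a mean of per-batch means. When the last batch is smaller than the others, this differs slightly from the true per-sample mean. The per-batch losses below are made up purely to show the effect.

```python
batch_sizes = [64, 64, 22]              # e.g. 150 samples with batch_size=64
batch_mean_losses = [0.70, 0.68, 0.50]  # hypothetical per-batch mean losses

# What the train/test functions report: the average of the per-batch means
mean_of_batches = sum(batch_mean_losses) / len(batch_mean_losses)

# True per-sample mean: weight each batch mean by its batch size
per_sample_mean = sum(l * n for l, n in zip(batch_mean_losses, batch_sizes)) / sum(batch_sizes)

print(f"{mean_of_batches:.4f} vs {per_sample_mean:.4f}")
```

To report the per-sample mean instead, one option is to accumulate `loss.item() * X.size(0)` in the loop and divide by `size`.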

8. Training the Model

loss_fn = nn.CrossEntropyLoss()  # loss function
learn_rate = 1e-4                # learning rate
opt = torch.optim.Adam(model.parameters(), lr=learn_rate)
epochs = 30

train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    # Read the current learning rate from the optimizer
    lr = opt.state_dict()['param_groups'][0]['lr']

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}, Lr:{:.2E}')
    print(template.format(epoch + 1, epoch_train_acc * 100, epoch_train_loss,
                          epoch_test_acc * 100, epoch_test_loss, lr))
    print("=" * 20, 'Done', "=" * 20)

Epoch: 1, Train_acc:55.1%, Train_loss:0.688, Test_acc:53.0%, Test_loss:0.716, Lr:1.00E-04

==================== Done ====================

Epoch: 2, Train_acc:56.2%, Train_loss:0.683, Test_acc:53.0%, Test_loss:0.720, Lr:1.00E-04

==================== Done ====================

Epoch: 3, Train_acc:56.2%, Train_loss:0.682, Test_acc:53.0%, Test_loss:0.719, Lr:1.00E-04

==================== Done ====================

Epoch: 4, Train_acc:56.2%, Train_loss:0.681, Test_acc:53.0%, Test_loss:0.721, Lr:1.00E-04

==================== Done ====================

Epoch: 5, Train_acc:56.2%, Train_loss:0.679, Test_acc:53.0%, Test_loss:0.722, Lr:1.00E-04

==================== Done ====================

Epoch: 6, Train_acc:56.2%, Train_loss:0.678, Test_acc:53.0%, Test_loss:0.722, Lr:1.00E-04

==================== Done ====================

Epoch: 7, Train_acc:56.2%, Train_loss:0.677, Test_acc:53.0%, Test_loss:0.722, Lr:1.00E-04

==================== Done ====================

Epoch: 8, Train_acc:56.2%, Train_loss:0.675, Test_acc:53.0%, Test_loss:0.722, Lr:1.00E-04

==================== Done ====================

Epoch: 9, Train_acc:56.2%, Train_loss:0.672, Test_acc:53.0%, Test_loss:0.721, Lr:1.00E-04

==================== Done ====================

Epoch:10, Train_acc:56.5%, Train_loss:0.670, Test_acc:53.0%, Test_loss:0.720, Lr:1.00E-04

==================== Done ====================

Epoch:11, Train_acc:57.0%, Train_loss:0.666, Test_acc:53.5%, Test_loss:0.719, Lr:1.00E-04

==================== Done ====================

Epoch:12, Train_acc:57.6%, Train_loss:0.661, Test_acc:54.5%, Test_loss:0.716, Lr:1.00E-04

==================== Done ====================

Epoch:13, Train_acc:58.5%, Train_loss:0.655, Test_acc:55.4%, Test_loss:0.713, Lr:1.00E-04

==================== Done ====================

Epoch:14, Train_acc:61.3%, Train_loss:0.648, Test_acc:55.9%, Test_loss:0.710, Lr:1.00E-04

==================== Done ====================

Epoch:15, Train_acc:63.2%, Train_loss:0.640, Test_acc:58.4%, Test_loss:0.702, Lr:1.00E-04

==================== Done ====================

Epoch:16, Train_acc:65.5%, Train_loss:0.631, Test_acc:58.4%, Test_loss:0.699, Lr:1.00E-04

==================== Done ====================

Epoch:17, Train_acc:65.2%, Train_loss:0.623, Test_acc:57.9%, Test_loss:0.696, Lr:1.00E-04

==================== Done ====================

Epoch:18, Train_acc:67.0%, Train_loss:0.614, Test_acc:57.9%, Test_loss:0.698, Lr:1.00E-04

==================== Done ====================

Epoch:19, Train_acc:67.0%, Train_loss:0.604, Test_acc:58.9%, Test_loss:0.693, Lr:1.00E-04

==================== Done ====================

Epoch:20, Train_acc:69.5%, Train_loss:0.591, Test_acc:59.4%, Test_loss:0.685, Lr:1.00E-04

==================== Done ====================

Epoch:21, Train_acc:71.4%, Train_loss:0.579, Test_acc:56.9%, Test_loss:0.679, Lr:1.00E-04

==================== Done ====================

Epoch:22, Train_acc:72.3%, Train_loss:0.565, Test_acc:58.4%, Test_loss:0.671, Lr:1.00E-04

==================== Done ====================

Epoch:23, Train_acc:74.4%, Train_loss:0.551, Test_acc:58.4%, Test_loss:0.668, Lr:1.00E-04

==================== Done ====================

Epoch:24, Train_acc:75.0%, Train_loss:0.537, Test_acc:61.9%, Test_loss:0.650, Lr:1.00E-04

==================== Done ====================

Epoch:25, Train_acc:75.2%, Train_loss:0.524, Test_acc:59.4%, Test_loss:0.652, Lr:1.00E-04

==================== Done ====================

Epoch:26, Train_acc:75.9%, Train_loss:0.512, Test_acc:58.9%, Test_loss:0.643, Lr:1.00E-04

==================== Done ====================

Epoch:27, Train_acc:77.9%, Train_loss:0.498, Test_acc:62.9%, Test_loss:0.636, Lr:1.00E-04

==================== Done ====================

Epoch:28, Train_acc:77.4%, Train_loss:0.488, Test_acc:62.4%, Test_loss:0.652, Lr:1.00E-04

==================== Done ====================

Epoch:29, Train_acc:79.2%, Train_loss:0.477, Test_acc:63.9%, Test_loss:0.624, Lr:1.00E-04

==================== Done ====================

Epoch:30, Train_acc:79.7%, Train_loss:0.464, Test_acc:61.9%, Test_loss:0.635, Lr:1.00E-04

==================== Done ====================

9. Model Evaluation

from datetime import datetime

warnings.filterwarnings("ignore")             # ignore warnings
plt.rcParams['font.sans-serif'] = ['SimHei']  # render Chinese labels correctly
plt.rcParams['axes.unicode_minus'] = False    # render minus signs correctly
plt.rcParams['figure.dpi'] = 100              # figure resolution

current_time = datetime.now()  # current time
epochs_range = range(epochs)

plt.figure(figsize=(12, 5))

plt.subplot(1, 2, 1)
plt.plot(epochs_range, train_acc, label='Training Accuracy')
plt.plot(epochs_range, test_acc, label='Test Accuracy')
plt.legend(loc='lower right')
plt.title('Training and Test Accuracy')
plt.xlabel(current_time)  # the training camp requires a timestamp on check-in screenshots

plt.subplot(1, 2, 2)
plt.plot(epochs_range, train_loss, label='Training Loss')
plt.plot(epochs_range, test_loss, label='Test Loss')
plt.legend(loc='upper right')
plt.title('Training and Test Loss')

plt.show()