Troubleshooting log: Error: CUDNN_STATUS_BAD_PARAM; Reason: finalize_internal()

I was recently re-testing NVIDIA's cosmos-transfer1:

nvidia-cosmos/cosmos-transfer1: Cosmos-Transfer1 is a world-to-world transfer model designed to bridge the perceptual divide between simulated and real-world environments.

During the run I hit an error. The full error message was:

E! CuDNN (v8907) function cudnnBackendFinalize() called:

e! Error: CUDNN_STATUS_BAD_PARAM; Reason: finalize_internal()

e! Time: 2025-08-28T18:13:22.548087 (0d+0h+16m+8s since start)

e! Process=2027580; Thread=2027580; GPU=NULL; Handle=NULL; StreamId=NULL.

RuntimeError: /TransformerEngine/transformer_engine/common/fused_attn/fused_attn_f16_arbitrary_seqlen.cu:358 in function operator(): cuDNN Error: [cudnn_frontend] Error: No execution plans support the graph.. For more information, enable cuDNN error logging by setting CUDNN_LOGERR_DBG=1 and CUDNN_LOGDEST_DBG=stderr in the environment.

E0828 18:13:30.058000 2027356 site-packages/torch/distributed/elastic/multiprocessing/api.py:874] failed (exitcode: 1) local_rank: 0 (pid: 2027580) of binary: /home/intern/miniconda3/envs/cosmos-transfer111/bin/python3.12

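At first this puzzled me for a long time. The core of the error is cuDNN (v8907, i.e. 8.9.7), yet the nvidia-cuda-cudnn version in my Conda environment is 9.7.1, which does not match the v8907 in the log. Together with the traceback pointing into Transformer Engine, this suggested that Transformer Engine was using a different cuDNN from the one in my environment.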
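The version number in the log is a packed integer, so it helps to decode it before comparing it with a package version. Below is a minimal sketch, assuming the usual CUDNN_VERSION encoding (major*1000 + minor*100 + patch up to cuDNN 8, and major*10000 + minor*100 + patch from cuDNN 9 onward). The function name `decode_cudnn_version` is made up for this illustration.

```shell
# Decode a packed cuDNN version integer into major.minor.patch.
# Assumption: values below 10000 use the cuDNN <= 8 encoding,
# larger values use the cuDNN >= 9 encoding.
decode_cudnn_version() {
  v=$1
  if [ "$v" -ge 10000 ]; then
    echo "$((v / 10000)).$((v % 10000 / 100)).$((v % 100))"
  else
    echo "$((v / 1000)).$((v % 1000 / 100)).$((v % 100))"
  fi
}

decode_cudnn_version 8907    # prints 8.9.7
decode_cudnn_version 90701   # prints 9.7.1
```

Decoded this way, the v8907 in the log is cuDNN 8.9.7, while a 9.7.1 install would report 90701, so the two clearly come from different cuDNN copies.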

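Transformer Engine is a library developed by NVIDIA whose main purpose is to accelerate and optimize training and inference of Transformer-based models. Installing it involves downloading and compiling it. My guess is that pip's default build isolation, combined with the system /usr/local/cuda taking precedence, caused transformer-engine to pick up the system CUDA/cuDNN at build time instead of the set in the conda environment that matches PyTorch.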
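One way to check whether two cuDNN copies are competing is to list every libcudnn shared library under the candidate locations. This is only a sketch: the helper name `list_cudnn_libs` is hypothetical, and the two roots searched at the end (the active conda environment and /usr/local/cuda) are assumptions taken from the guess above, so adjust them to your machine.

```shell
# List every libcudnn shared library found under the given root directories.
# Roots that do not exist are skipped. -H makes find follow a root that is
# itself a symlink (for example /usr/local/cuda pointing at a versioned dir).
list_cudnn_libs() {
  for root in "$@"; do
    [ -d "$root" ] || continue
    find -H "$root" -name 'libcudnn.so*' 2>/dev/null
  done | sort
}

# Assumed locations: the active conda environment and the system CUDA install.
list_cudnn_libs "${CONDA_PREFIX:-/nonexistent}" /usr/local/cuda
```

If libraries with different major versions show up under the two roots, a build that is not pinned to the conda prefix can plausibly end up using the wrong one.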

The fix that finally worked:

1. First, uninstall the installed transformer engine

pip uninstall transformer-engine

2. Set the conda-related environment variables

export CUDA_HOME="$CONDA_PREFIX"

export CMAKE_PREFIX_PATH="$CONDA_PREFIX"

export LD_LIBRARY_PATH="$CONDA_PREFIX/lib:$CONDA_PREFIX/lib64"

export PATH="$CONDA_PREFIX/bin:$PATH"

3. Reinstall transformer engine

pip install --no-build-isolation --no-cache-dir transformer-engine

After this, the commands ran without problems:

export CUDNN_LOGERR_DBG=1

export CUDNN_LOGDEST_DBG=stderr

export LD_LIBRARY_PATH=$CONDA_PREFIX/lib/python3.12/site-packages/nvidia/cudnn/lib:$LD_LIBRARY_PATH

CUDA_VISIBLE_DEVICES=0,3,6,7 PYTHONPATH=$(pwd) torchrun --nproc_per_node=4 --nnodes=1 --node_rank=0 \

cosmos_transfer1/diffusion/inference/transfer.py \--checkpoint_dir ./checkpoints \--video_save_folder outputs/robot_example_spatial_temporal_setting1 \--controlnet_specs assets/robot_augmentation_example/example1/inference_cosmos_transfer1_robot_spatiotemporal_weights.json \--offload_text_encoder_model \--offload_guardrail_models \--num_gpus 4export CUDNN_LOGERR_DBG=1

export CUDNN_LOGDEST_DBG=stderrexport LD_LIBRARY_PATH=$CONDA_PREFIX/lib/python3.12/site-packages/nvidia/cudnn/lib:$LD_LIBRARY_PATH

CUDA_VISIBLE_DEVICES=3 PYTHONPATH=$(pwd) torchrun --nproc_per_node=1 --nnodes=1 --node_rank=0 \

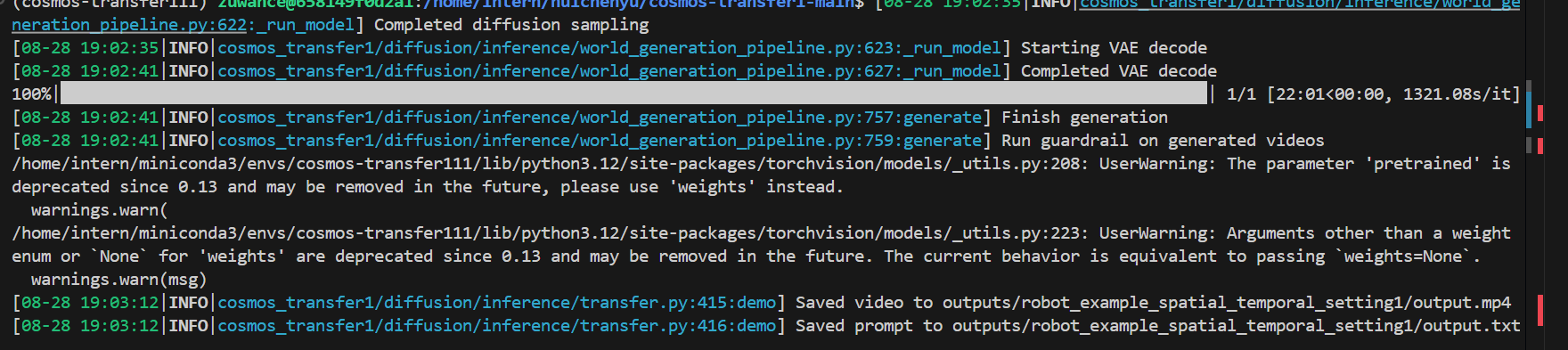

cosmos_transfer1/diffusion/inference/transfer.py \--checkpoint_dir ./checkpoints \--video_save_folder outputs/robot_example_spatial_temporal_setting1 \--controlnet_specs assets/robot_augmentation_example/example1/inference_cosmos_transfer1_robot_spatiotemporal_weights.json \--offload_text_encoder_model \--offload_guardrail_models \--num_gpus 1运行结果为: