YOLO v11 目标检测+关键点检测 实战记录

流水账记录一下yolo目标检测

1.搭建pytorch 不做解释 看以往博客或网上搜都行

2.下载yolo源码 : https://github.com/ultralytics/ultralytics

3.样本标注工具:labelimg 自己下载

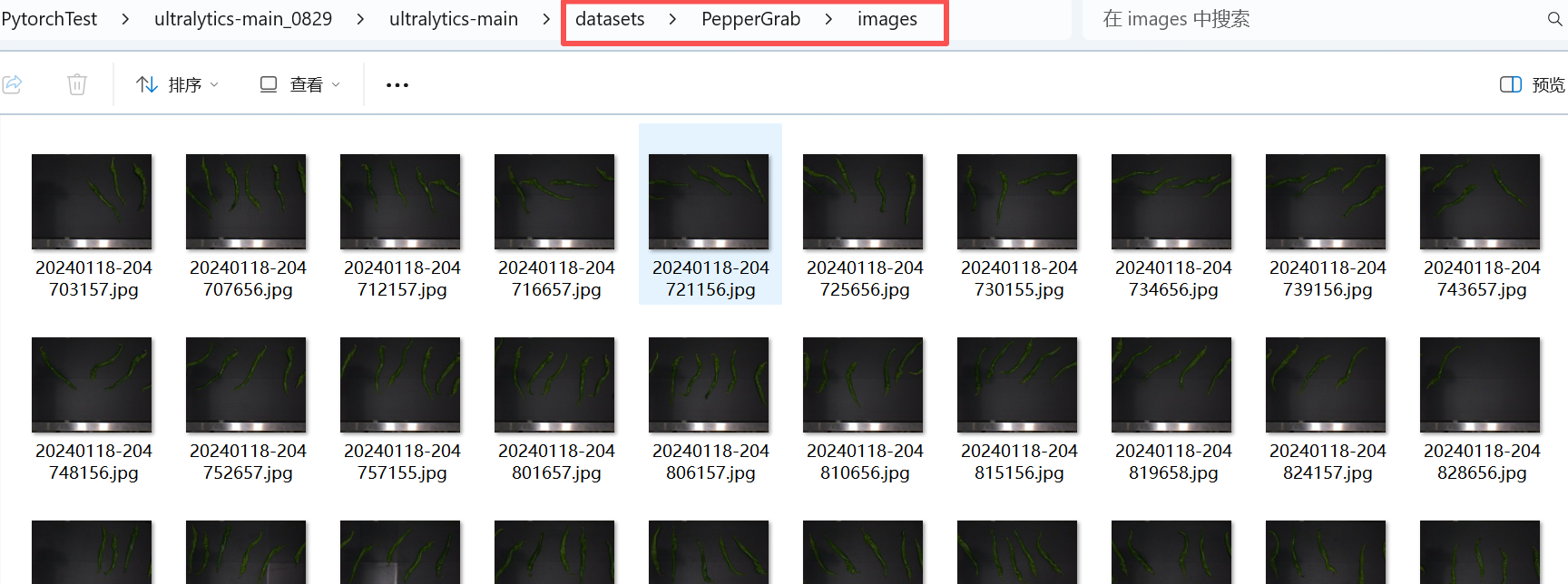

4.准备数据集

4.1 新建一个放置数据集的路径

4.2 构建训练集和测试集

运行以下脚本,将数据集划分为训练集和测试集,比例是7:3,看自己需求

import os

import shutilfrom tqdm import tqdm

import random""" 使用:只需要修改 1. Dataset_folde, 2. os.chdir(os.path.join(Dataset_folder, 'images'))里的 images, 3. val_scal = 0.2 4. os.chdir('../label_json') label_json换成自己json标签文件夹名称 """# 图片文件夹与json标签文件夹的根目录

Dataset_folder = r'D:\Software\Python\deeplearing\PytorchTest\ultralytics-main\datasets\PepperGrab'

# 把当前工作目录改为指定路径

os.chdir(os.path.join(Dataset_folder, 'images')) # images : 图片文件夹的名称

folder = '.' # 代表os.chdir(os.path.join(Dataset_folder, 'images'))这个路径

imgs_list = os.listdir(folder)

random.seed(123) # 固定随机种子,防止运行时出现bug后再次运行导致imgs_list 里面的图片名称顺序不一致

random.shuffle(imgs_list) # 打乱

val_scal = 0.3 # 验证集比列

val_number = int(len(imgs_list) * val_scal)

val_files = imgs_list[:val_number]

train_files = imgs_list[val_number:]

print('all_files:', len(imgs_list))

print('train_files:', len(train_files))

print('val_files:', len(val_files))

os.mkdir('train')

for each in tqdm(train_files):shutil.move(each, 'train')

os.mkdir('val')

for each in tqdm(val_files):shutil.move(each, 'val')

os.chdir('../label_json')

os.mkdir('train')

for each in tqdm(train_files):json_file = os.path.splitext(each)[0] + '.json'shutil.move(json_file, 'train')

os.mkdir('val')

for each in tqdm(val_files):json_file = os.path.splitext(each)[0] + '.json'shutil.move(json_file, 'val')

print('划分完成')

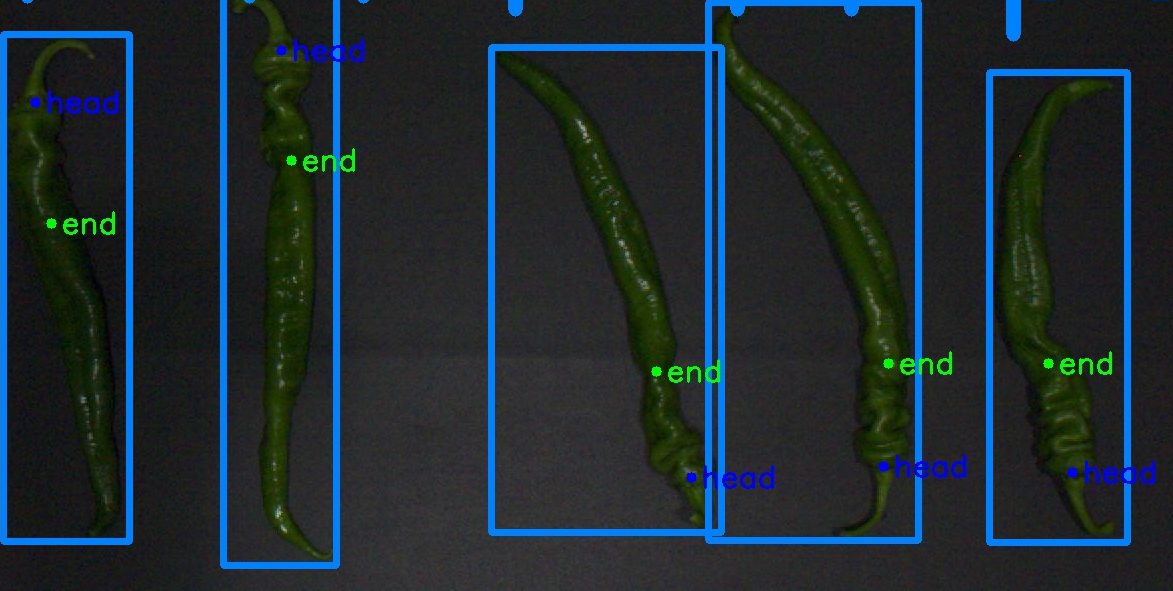

4.2 然后就开始标样本了,用labelimg标,本次测试出了目标检测外,还需要检测关键点,类似标成下面这种,我之前标过一次样本,我就直接用了,没有标注的截图

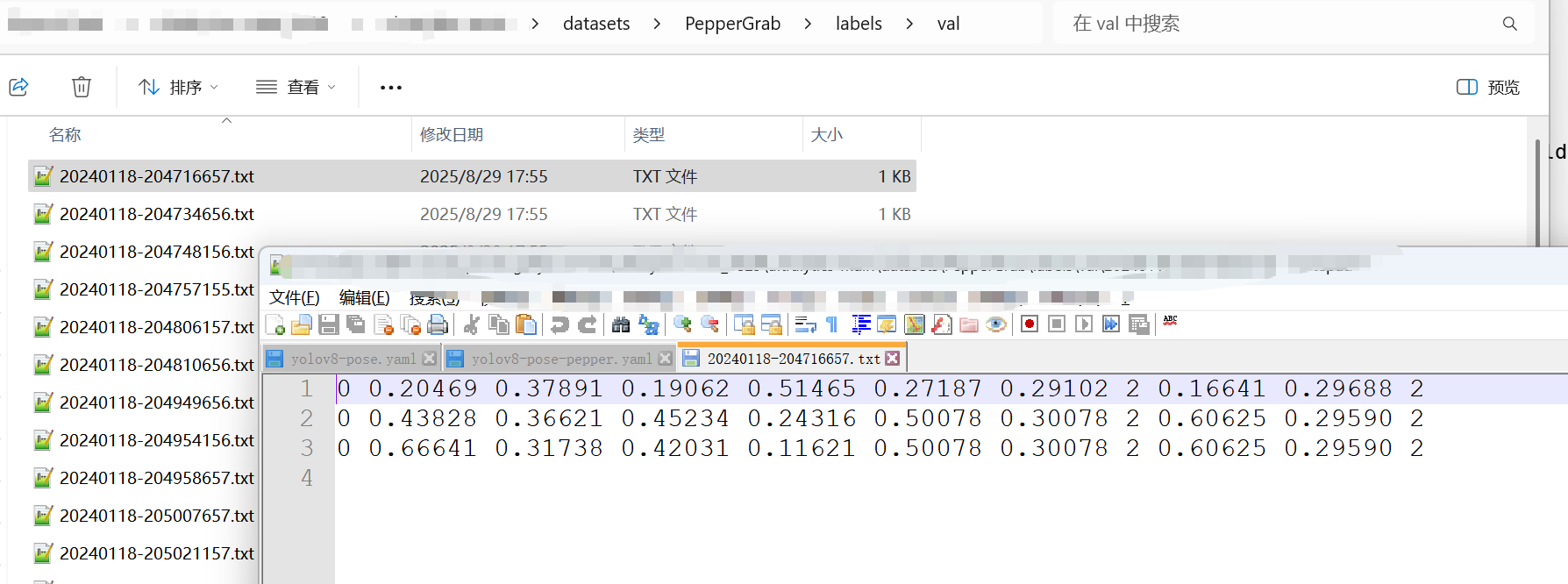

4.3 因为labelimg标注完是json格式,要转化为yolo格式,运行以下脚本

import os

import json

import shutil

import numpy as np

from tqdm import tqdm""""#使用:1.改 bbox_class = {'sjb_rect': 0},我的框的类别是sjb_rect,赋值它为0,如你是dog则改成:bbox_cls = {'dog': 0}2.改 keypoint_class = ['angle_30', 'angle_60', 'angle_90'],我的关键点类别是三个,分别是'angle_30', 'angle_60', 'angle_90' 3.改 Dataset_root 成你自己的图片与json文件的路径 4.改 os.chdir('json_label/train')与os.chdir('json_label/val') 成你的json文件夹下的train与val文件夹 """# 数据集根慕录(即图片文件夹与标签文件夹的上一级目录)

Dataset_root = r'D:\Software\Python\deeplearing\PytorchTest\ultralytics-main\datasets\PepperGrab'# 框的类别

bbox_class = {'pepper': 0

}# 关键点的类别

keypoint_class = ['head', 'end'] # 这里类别放的顺序对应关键点类别的标签 0,1,2os.chdir(Dataset_root)os.mkdir('labels')

os.mkdir('labels/train')

os.mkdir('labels/val')def process_single_json(labelme_path, save_folder='../../labels/train'):with open(labelme_path, 'r', encoding='utf-8') as f:labelme = json.load(f)img_width = labelme['imageWidth'] # 图像宽度img_height = labelme['imageHeight'] # 图像高度# 生成 YOLO 格式的 txt 文件suffix = labelme_path.split('.')[-2]yolo_txt_path = suffix + '.txt'with open(yolo_txt_path, 'w', encoding='utf-8') as f:for each_ann in labelme['shapes']: # 遍历每个标注if each_ann['shape_type'] == 'rectangle': # 每个框,在 txt 里写一行yolo_str = ''## 框的信息# 框的类别 IDbbox_class_id = bbox_class[each_ann['label']]yolo_str += '{} '.format(bbox_class_id)# 左上角和右下角的 XY 像素坐标bbox_top_left_x = int(min(each_ann['points'][0][0], each_ann['points'][1][0]))bbox_bottom_right_x = int(max(each_ann['points'][0][0], each_ann['points'][1][0]))bbox_top_left_y = int(min(each_ann['points'][0][1], each_ann['points'][1][1]))bbox_bottom_right_y = int(max(each_ann['points'][0][1], each_ann['points'][1][1]))# 框中心点的 XY 像素坐标bbox_center_x = int((bbox_top_left_x + bbox_bottom_right_x) / 2)bbox_center_y = int((bbox_top_left_y + bbox_bottom_right_y) / 2)# 框宽度bbox_width = bbox_bottom_right_x - bbox_top_left_x# 框高度bbox_height = bbox_bottom_right_y - bbox_top_left_y# 框中心点归一化坐标bbox_center_x_norm = bbox_center_x / img_widthbbox_center_y_norm = bbox_center_y / img_height# 框归一化宽度bbox_width_norm = bbox_width / img_width# 框归一化高度bbox_height_norm = bbox_height / img_heightyolo_str += '{:.5f} {:.5f} {:.5f} {:.5f} '.format(bbox_center_x_norm, bbox_center_y_norm,bbox_width_norm, bbox_height_norm)## 找到该框中所有关键点,存在字典 bbox_keypoints_dict 中bbox_keypoints_dict = {}for each_ann in labelme['shapes']: # 遍历所有标注if each_ann['shape_type'] == 'point': # 筛选出关键点标注# 关键点XY坐标、类别x = int(each_ann['points'][0][0])y = int(each_ann['points'][0][1])label = each_ann['label']if (x > bbox_top_left_x) & (x < bbox_bottom_right_x) & (y < bbox_bottom_right_y) & (y > bbox_top_left_y): # 筛选出在该个体框中的关键点bbox_keypoints_dict[label] = [x, y]## 把关键点按顺序排好for each_class in keypoint_class: # 遍历每一类关键点if each_class in bbox_keypoints_dict:keypoint_x_norm = bbox_keypoints_dict[each_class][0] / img_widthkeypoint_y_norm = bbox_keypoints_dict[each_class][1] / img_heightyolo_str += '{:.5f} {:.5f} {} '.format(keypoint_x_norm, keypoint_y_norm,2) # 2-可见不遮挡 1-遮挡 0-没有点else: # 不存在的点,一律为0yolo_str += '0 0 0 '# 写入 txt 文件中f.write(yolo_str + '\n')shutil.move(yolo_txt_path, save_folder)print('{} --> {} 转换完成'.format(labelme_path, yolo_txt_path))os.chdir('label_json/train')save_folder = '../../labels/train'

for labelme_path in os.listdir():try:process_single_json(labelme_path, save_folder=save_folder)except:print('******有误******', labelme_path)

print('YOLO格式的txt标注文件已保存至 ', save_folder)os.chdir('../../')os.chdir('label_json/val')save_folder = '../../labels/val'

for labelme_path in os.listdir():try:process_single_json(labelme_path, save_folder=save_folder)except:print('******有误******', labelme_path)

print('YOLO格式的txt标注文件已保存至 ', save_folder)os.chdir('../../')os.chdir('../')

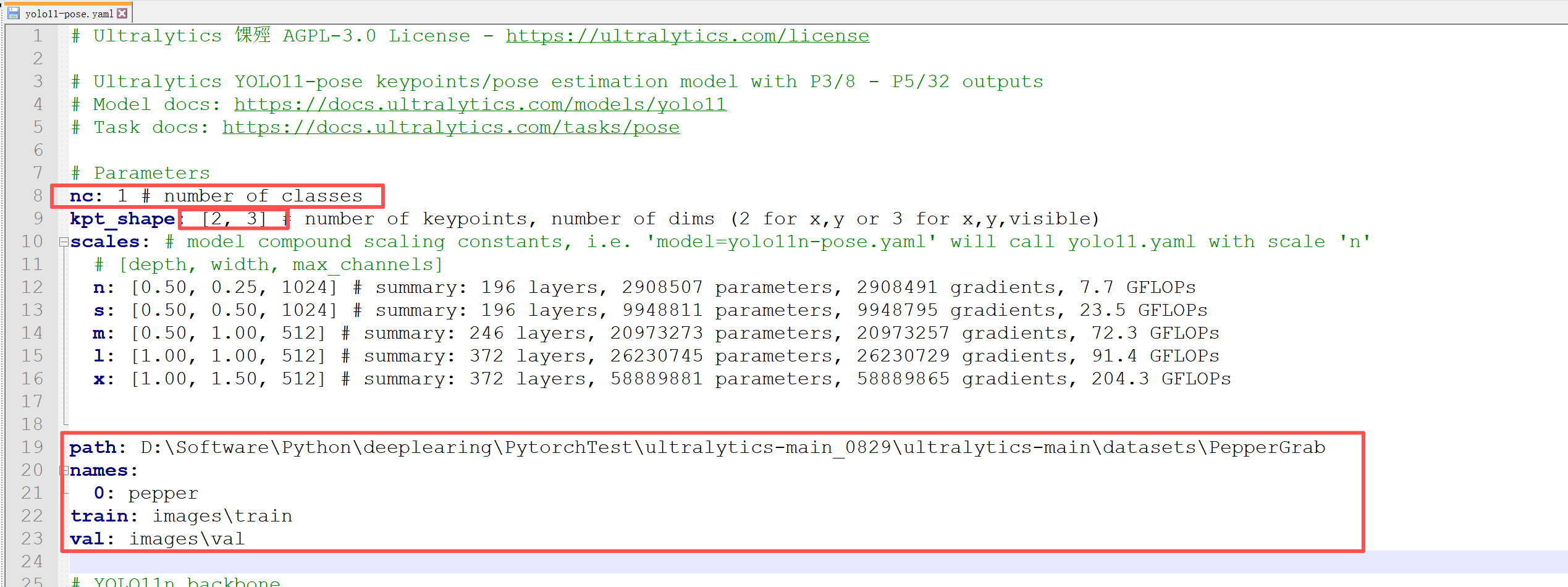

4.3 构造yaml

直接拷贝…\ultralytics-main\ultralytics\cfg\models\11\yolo11-pose.yaml

修改内容如下:

4.4 开始训练模型

先下载预训练模型 yolo11n.pt yolo11n-pose.pt

然后直接训练,先不看详细训练参数,先能跑起来

from ultralytics import YOLO

import cv2

# #训练

model = YOLO("./yolo11n-pose.pt")

model.train(data = "...../ultralytics-main_0829/ultralytics-main/yolo11-pose.yaml",workers=0,epochs=640,batch=8)

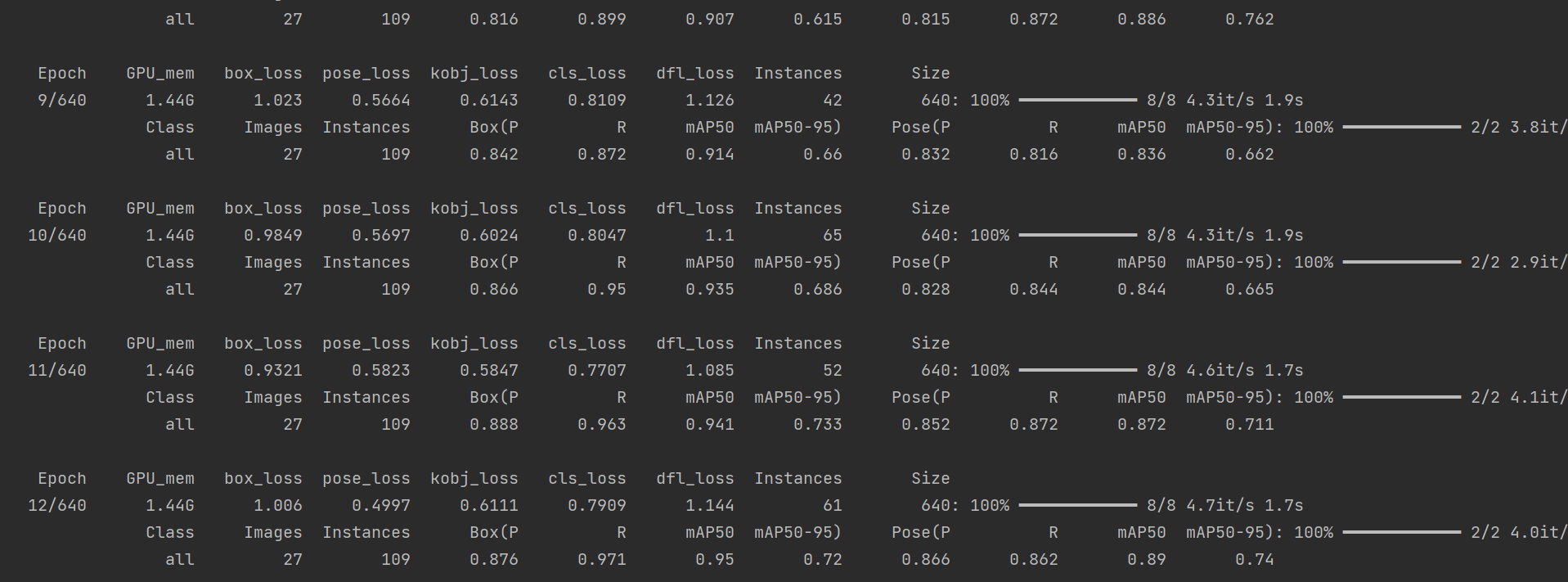

跑起来了

4.5 开始预测结果

yolo = YOLO("best.pt", task = "detect")

result = yolo(source=".../ultralytics-main_0829/ultralytics-main/datasets/PepperGrab/images/val",conf=0.4,vid_stride=1,iou=0.3,save = True)

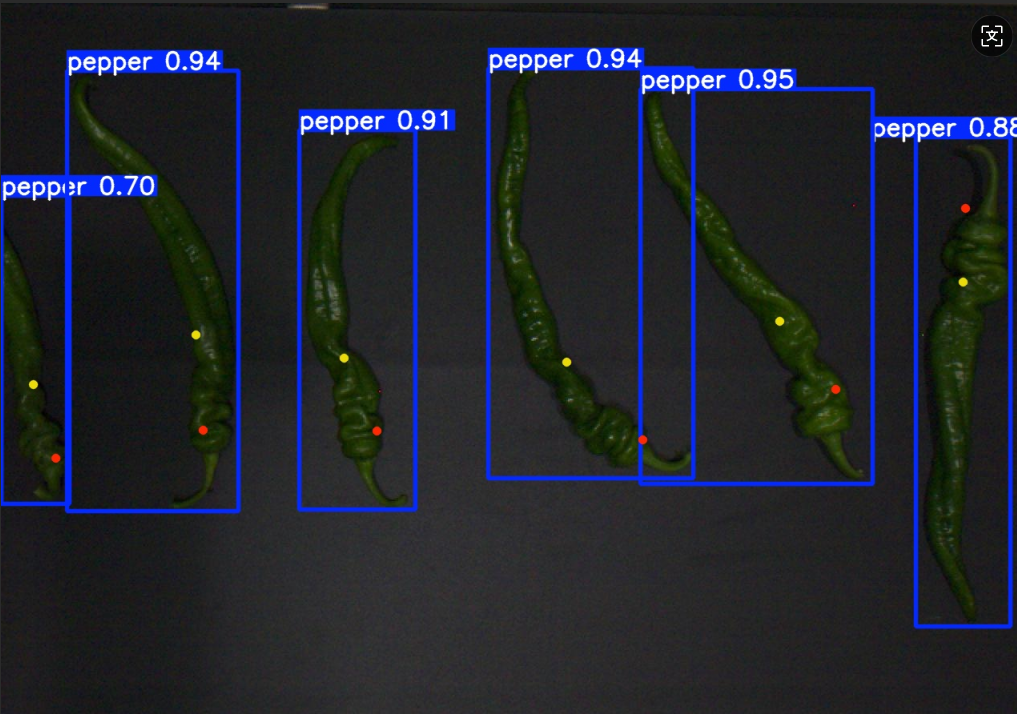

效果一般般,起码是把流程走通了,精度后面再看吧

4.6 移植到C++测试

后面再说吧