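Kubernetes Cluster 1.20.9

I. Installing Kubernetes

To build the cluster, prepare three virtual machines with 2 CPU cores and 4 GB of RAM each (2 GB of memory is the minimum), running CentOS 7 or later.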

| Host | Description |
|---|---|
| 192.168.33.35 | k8s-master |
| 192.168.33.190 | k8s-node1 |

1. Install Docker

# Remove any previously installed Docker packages
sudo yum remove docker*
sudo yum install -y yum-utils

# Configure the Docker yum repository
sudo yum-config-manager \
--add-repo \
http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

# Install the pinned versions
sudo yum install -y docker-ce-20.10.7 docker-ce-cli-20.10.7 containerd.io-1.4.6

# Start Docker now and enable it at boot
systemctl enable docker --now

# Configure the Docker registry mirror
sudo mkdir -p /etc/docker
sudo tee /etc/docker/daemon.json <<-'EOF'
{
  "registry-mirrors": ["https://jbw52uwf.mirror.aliyuncs.com"],
  "exec-opts": ["native.cgroupdriver=systemd"],
  "log-driver": "json-file",
  "log-opts": {"max-size": "100m"},
  "storage-driver": "overlay2"
}
EOF
sudo systemctl daemon-reload
sudo systemctl restart docker
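The daemon.json above switches Docker to the systemd cgroup driver, and a typo in that file is easy to miss. The following is a minimal sketch for checking the setting; the function name `has_systemd_cgroup` is hypothetical, and it does a plain text match rather than parsing the JSON.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds if the given daemon.json sets the
# systemd cgroup driver through exec-opts. This is a text match only,
# so it does not prove the file is valid JSON.
has_systemd_cgroup() {
  local file="${1:-/etc/docker/daemon.json}"
  [ -r "$file" ] && grep -q 'native\.cgroupdriver=systemd' "$file"
}
```

Calling `has_systemd_cgroup && echo ok` checks the default path. On a running host, `docker info --format '{{.CgroupDriver}}'` reports the driver that is actually in effect.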

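2. Install Kubernetes

2.1 Base environment

Perform the following steps on every machine.

The machines must be able to reach one another over their internal IP addresses.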

# 1. Stop the firewall and disable it at boot
systemctl stop firewalld
systemctl disable firewalld

# 2. Disable SELinux
# Set SELinux to permissive mode (effectively disabling it)
sudo setenforce 0
sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

# 3. Disable swap
swapoff -a
sed -ri 's/.*swap.*/#&/' /etc/fstab
systemctl reboot # reboot so the change takes effect
free -m # check that every value in the Swap row is 0; if so, swap is off

# 4. Set the hostname on each machine

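# On the first machine (k8s-master):
hostnamectl set-hostname k8s-master
# On the second machine (k8s-node1):
hostnamectl set-hostname k8s-node1

# 5. Add hosts entries by running the command below; change the IPs to those of your own machines
cat >> /etc/hosts << EOF
192.168.33.35 k8s-master
192.168.33.190 k8s-node1
EOF

# 6. Let iptables see bridged traffic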

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
br_netfilter
EOF
# Load the module now; the file above only takes effect at boot
sudo modprobe br_netfilter
cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
sudo sysctl --system

# 7. Set up time synchronization
yum install ntpdate -y
ntpdate time.windows.com

2.2 Install kubelet, kubeadm, and kubectl

# 8. Configure the Kubernetes yum repository
cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
[kubernetes]
name=Kubernetes
baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=0
repo_gpgcheck=0
gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
EOF

# 9. If Kubernetes was installed before, remove the old version first
yum remove -y kubelet kubeadm kubectl

# 10. List the versions available for installation
yum list kubelet --showduplicates | sort -r

# 11. Install the pinned versions of kubelet, kubeadm, and kubectl; the cluster is installed with kubeadm
sudo yum install -y kubelet-1.20.9 kubeadm-1.20.9 kubectl-1.20.9

# 12. Start kubelet now and enable it at boot
sudo systemctl enable --now kubelet
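Step 3 relies on a reboot and an /etc/fstab edit, and by default kubelet refuses to start while swap is enabled, so it may be worth confirming the result on every machine before initializing the master. This is a minimal sketch that reads the `SwapTotal` line of `/proc/meminfo`; the helper names `swap_total_kb` and `swap_is_off` are hypothetical.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print SwapTotal (in kB) from a meminfo-style file.
# Defaults to /proc/meminfo when no file is given.
swap_total_kb() {
  local file="${1:-/proc/meminfo}"
  awk '$1 == "SwapTotal:" { print $2 }' "$file"
}

# Hypothetical helper: succeed only when no swap is active.
swap_is_off() {
  [ "$(swap_total_kb "$1")" = "0" ]
}
```

Running `swap_is_off || echo "swap is still enabled"` on each machine gives the same answer as reading the Swap row of `free -m` by eye.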

2.3 Initialize the master node

2.3.1 Download the images each machine needs

sudo tee ./images.sh <<-'EOF'
#!/bin/bash
images=(
kube-apiserver:v1.20.9
kube-proxy:v1.20.9
kube-controller-manager:v1.20.9
kube-scheduler:v1.20.9
coredns:1.7.0
etcd:3.4.13-0
pause:3.2
)
for imageName in "${images[@]}" ; do
docker pull registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/$imageName
done
EOF

chmod +x ./images.sh && ./images.sh
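After images.sh finishes, a failed pull can go unnoticed in the scrolling output. The sketch below reuses the repository prefix and image list from images.sh and reports which references are absent from a list of local images; `image_refs` and `missing_images` are hypothetical helper names, not part of Docker or kubeadm.

```shell
#!/usr/bin/env bash
# Repository prefix and image list copied from images.sh above.
repo="registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images"
images=(
  kube-apiserver:v1.20.9
  kube-proxy:v1.20.9
  kube-controller-manager:v1.20.9
  kube-scheduler:v1.20.9
  coredns:1.7.0
  etcd:3.4.13-0
  pause:3.2
)

# Hypothetical helper: print one full image reference per line.
image_refs() {
  local name
  for name in "${images[@]}"; do
    printf '%s/%s\n' "$repo" "$name"
  done
}

# Hypothetical helper: read locally present image references on stdin
# (one per line) and print the expected references that are missing.
missing_images() {
  local present ref
  present="$(cat)"
  image_refs | while IFS= read -r ref; do
    grep -qxF -- "$ref" <<<"$present" || printf '%s\n' "$ref"
  done
}
```

On a machine with Docker running, `docker images --format '{{.Repository}}:{{.Tag}}' | missing_images` prints nothing when every image has been pulled.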

2.3.2 Initialize the master node

# Run the initialization on k8s-master. The first IP address below is the IP of the k8s-master machine; change it to your own. The two CIDR ranges after it can be left as they are.
# None of the network ranges may overlap.
kubeadm init \
--apiserver-advertise-address=192.168.33.35 \
--control-plane-endpoint=k8s-master \
--image-repository registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images \
--kubernetes-version v1.20.9 \
--service-cidr=10.96.0.0/16 \
--pod-network-cidr=10.244.0.0/16

# View the kubelet logs
journalctl -xefu kubelet

# If initialization fails, reset kubeadm
kubeadm reset
rm -rf /etc/cni/net.d $HOME/.kube/config

# Flush the iptables rules
iptables -F
iptables -X
iptables -t nat -F
iptables -t nat -X
iptables -t mangle -F
iptables -t mangle -X
iptables -P INPUT ACCEPT
iptables -P FORWARD ACCEPT
iptables -P OUTPUT ACCEPT
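The requirement in 2.3.2 that no network ranges overlap can be checked mechanically before running kubeadm init. The following is a minimal sketch in bash for IPv4 only; `ip_to_int` and `cidrs_overlap` are hypothetical helpers, and the /24 prefix for the node network is an assumption, since the text gives only the host addresses.

```shell
#!/usr/bin/env bash
# Convert a dotted-quad IPv4 address to a 32-bit integer.
# The 10# prefix forces base 10 so an octet like "08" is not read as octal.
ip_to_int() {
  local a b c d
  IFS=. read -r a b c d <<<"$1"
  echo $(( (10#$a << 24) | (10#$b << 16) | (10#$c << 8) | 10#$d ))
}

# Succeed if two IPv4 CIDR blocks share any address. Two blocks overlap
# exactly when their network bits agree under the shorter prefix.
cidrs_overlap() {
  local ip1="${1%/*}" len1="${1#*/}" ip2="${2%/*}" len2="${2#*/}"
  local len=$(( len1 < len2 ? len1 : len2 ))
  local mask=$(( (0xFFFFFFFF << (32 - len)) & 0xFFFFFFFF ))
  (( ($(ip_to_int "$ip1") & mask) == ($(ip_to_int "$ip2") & mask) ))
}

# Service and pod ranges come from the kubeadm init command above;
# 192.168.33.0/24 for the node network is an assumed prefix length.
ranges=(192.168.33.0/24 10.96.0.0/16 10.244.0.0/16)
for ((i = 0; i < ${#ranges[@]}; i++)); do
  for ((j = i + 1; j < ${#ranges[@]}; j++)); do
    if cidrs_overlap "${ranges[i]}" "${ranges[j]}"; then
      echo "overlap: ${ranges[i]} and ${ranges[j]}"
    fi
  done
done
```

The loop prints nothing when the ranges are disjoint. A pod range such as 192.168.0.0/16 would be flagged against this node network, which is the kind of conflict the rule is meant to prevent.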

2.3.3 Continue as prompted

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

  export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/

You can now join any number of control-plane nodes by copying certificate authorities
and service account keys on each node and then running the following as root:

  kubeadm join k8s-master:6443 --token 50rexj.yb0ys92ynnxxbo2s \
    --discovery-token-ca-cert-hash sha256:10fd9d2a9f4e2d7dff502aa3fb31a80f0372666efc92defde3707b499ba000e9 \
    --control-plane

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join k8s-master:6443 --token 50rexj.yb0ys92ynnxxbo2s \
    --discovery-token-ca-cert-hash sha256:10fd9d2a9f4e2d7dff502aa3fb31a80f0372666efc92defde3707b499ba000e9

Set up .kube/config

# Configure the kubectl command-line tool
mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

# As the root user, KUBECONFIG can instead point directly at admin.conf
export KUBECONFIG=/etc/kubernetes/admin.conf
echo $KUBECONFIG

Install the Calico network plugin

curl https://docs.projectcalico.org/archive/v3.20/manifests/calico.yaml -O
kubectl apply -f calico.yaml

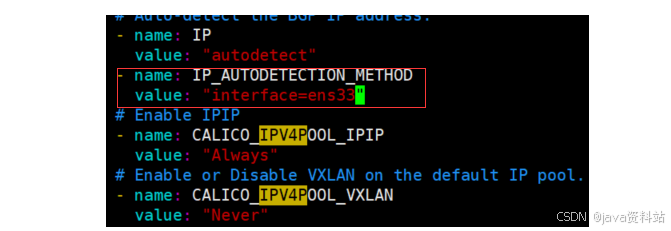

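If the machine has multiple network interfaces, the following error may appear: calico/node is not ready: BIRD is not ready: BGP not established with xxx

To fix it, edit calico.yaml to pin the interface by adding these two lines to the env list of the calico-node container (replace ens33 with your own interface name):

- name: IP_AUTODETECTION_METHOD
  value: "interface=ens33"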

2.3.4 Join the worker node

The token can be regenerated with the following command:

kubeadm token create --print-join-command

# Join the worker node to the master's cluster
kubeadm join k8s-master:6443 --token 50rexj.yb0ys92ynnxxbo2s \
--discovery-token-ca-cert-hash sha256:10fd9d2a9f4e2d7dff502aa3fb31a80f0372666efc92defde3707b499ba000e9
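Bootstrap tokens created by kubeadm expire after 24 hours by default, so the join values are often copied by hand from a freshly generated command. As a rough sketch, the two values can be checked for shape before running the join; `valid_bootstrap_token` and `valid_ca_cert_hash` are hypothetical helpers, and they check format only, not whether the cluster will accept the values.

```shell
#!/usr/bin/env bash
# Hypothetical helper: a kubeadm bootstrap token has the form
# <6 chars>.<16 chars>, using lowercase letters and digits.
valid_bootstrap_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Hypothetical helper: the discovery hash is "sha256:" followed by
# 64 lowercase hexadecimal digits.
valid_ca_cert_hash() {
  printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}
```

For example, `valid_bootstrap_token 50rexj.yb0ys92ynnxxbo2s && echo ok` accepts the token shown above, while a token with a character dropped during copying is rejected.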

# Running kubectl get nodes on the worker node fails with the following error:
The connection to the server localhost:8080 was refused - did you specify the right host or port?

# Fix: set the KUBECONFIG environment variable on the worker node
echo "export KUBECONFIG=/etc/kubernetes/kubelet.conf" >> /etc/profile
source /etc/profile

2.3.5 Verify the node status

[root@192 kubernetes]# kubectl get nodes
NAME         STATUS   ROLES                  AGE   VERSION
k8s-master   Ready    control-plane,master   44m   v1.20.9
k8s-node1    Ready    <none>                 10m   v1.20.9

To change a node's role label:

kubectl label node k8s-node1 node-role.kubernetes.io/worker=worker
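The node check in 2.3.5 can also be scripted, which helps while waiting for the network plugin to come up. This is a minimal sketch that reads the `kubectl get nodes` table on stdin; `not_ready_nodes` is a hypothetical helper, and it treats any STATUS other than exactly `Ready` (including `Ready,SchedulingDisabled`) as not ready.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl get nodes` output on stdin and print
# the names of nodes whose STATUS column is not exactly "Ready".
# The first line is skipped because it is the column header.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}
```

Running `kubectl get nodes | not_ready_nodes` prints nothing once every node has reached the Ready state.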