Ceph Cluster Storage Deployment

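Contents

I. Environment Preparation

1. Node Planning

2. Basic Preparation

II. Application Deployment

1. Environment Preparation

2. Deploy the Ceph Cluster

2.1 Create the Ceph Cluster

2.2 Create OSDs

2.3 Ceph Cluster Operations Commands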

I. Environment Preparation

1. Node Planning

OS version: CentOS 7.9

Table 1-1-1 Node plan

| IP address | Hostname | Role |
|---|---|---|
| 172.128.11.15 | ceph-node1 | Monitor/OSD |
| 172.128.11.26 | ceph-node2 | OSD |
| 172.128.11.64 | ceph-node3 | OSD |

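2. Basic Preparation

Use the provided CentOS 7.9 image to create three cloud hosts. Give each one a flavor of 2 vCPU / 4 GB RAM / 50 GB disk plus a 20 GB ephemeral disk.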

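II. Application Deployment

1. Environment Preparation

Set the hostnames of the three nodes to ceph-node1, ceph-node2 and ceph-node3. The commands for ceph-node1 are shown below. Run the same command with the matching name on ceph-node2 and ceph-node3: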

[root@ceph-node1 ~]# hostnamectl set-hostname ceph-node1

[root@ceph-node1 ~]# hostnamectl

Static hostname: ceph-node1

Icon name: computer-vm

Chassis: vm

Machine ID: cc2c86fe566741e6a2ff6d399c5d5daa

Boot ID: 677af9a2cd3046b7958bbb268342ad69

Virtualization: kvm

Operating System: CentOS Linux 7 (Core)

CPE OS Name: cpe:/o:centos:centos:7

Kernel: Linux 3.10.0-1160.el7.x86_64

Architecture: x86-64

Each of the three VMs needs a free 50 GB disk (sdb in the output below). Verify this with the lsblk command.
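You can also script the free-disk check. The sketch below is minimal and assumes that "free" means lsblk lists the device as a bare disk with no partitions, LVM volumes or other child devices. It does not detect a filesystem written directly on the whole disk. The function name disk_is_free is hypothetical, and the device path (/dev/sdb here) must match your nodes:

```bash
#!/usr/bin/env bash
# Hypothetical helper: disk_is_free DEVICE
# Succeeds (exit 0) only if DEVICE has no child devices such as partitions
# or LVM volumes. Returns 2 if lsblk cannot read the device.
disk_is_free() {
  local dev="$1"
  local types
  # -n: no header line, -r: raw output, -o TYPE: print only the device type.
  # An untouched disk yields exactly one line: "disk".
  types="$(lsblk -n -r -o TYPE "$dev")" || return 2
  [ "$types" = "disk" ]
}

# Example (run on each node):
# disk_is_free /dev/sdb && echo "/dev/sdb is free" || echo "/dev/sdb is in use"
```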

[root@ceph-node1 ~]# lsblk

NAME MAJ:MIN RM SIZE RO TYPE MOUNTPOINT

sda 8:0 0 50G 0 disk

├─sda1 8:1 0 1G 0 part /boot

└─sda2 8:2 0 49G 0 part

  ├─centos-root 253:0 0 47G 0 lvm /

  └─centos-swap 253:1 0 2G 0 lvm [SWAP]

sdb 8:16 0 50G 0 disk

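sr0 11:0 1 4.4G 0 rom

On all three VMs, edit /etc/hosts and add a mapping from each hostname to its IP address. Use the actual IP addresses of your nodes: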
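Instead of editing the file by hand on each node, you can append the mappings idempotently. The sketch below is minimal and assumes one "IP NAME" line per node. The function add_host_entry is hypothetical. The hosts file path is a parameter, so you can try it on a scratch file first:

```bash
#!/usr/bin/env bash
# Hypothetical helper: add_host_entry IP NAME [HOSTS_FILE]
# Appends "IP NAME" to the hosts file unless NAME is already mapped there,
# so it is safe to run repeatedly on every node.
add_host_entry() {
  local ip="$1" name="$2" file="${3:-/etc/hosts}"
  # Match NAME as a whole field: preceded by whitespace and followed by
  # whitespace or end of line (so ceph-node1 does not match ceph-node10).
  # -s silences the error if the file does not exist yet.
  if grep -Eqs "[[:space:]]${name}([[:space:]]|\$)" "$file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example with the addresses from the /etc/hosts listing below
# (replace them with your own nodes' IPs):
# add_host_entry 192.168.20.26 ceph-node1
# add_host_entry 192.168.20.27 ceph-node2
# add_host_entry 192.168.20.24 ceph-node3
```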

[root@ceph-node1 ~]# cat /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4

::1 localhost localhost.localdomain localhost6 localhost6.localdomain6

192.168.20.26 ceph-node1

192.168.20.27 ceph-node2

192.168.20.24 ceph-node3

On all Ceph nodes, switch the Yum repositories to a local repository. The packages come from the provided ceph-14.2.22.tar.gz.

On all three nodes, upload the provided package ceph-14.2.22.tar.gz to /root and extract it to /opt:

# tar -zxvf ceph-14.2.22.tar.gz -C /opt

After extraction, configure the repo file on all three nodes. First move every existing repo file out of /etc/yum.repos.d, then create ceph.repo:
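You can also write the repo file without an interactive editor. The sketch below is minimal and assumes the tarball was extracted to /opt/ceph, matching the baseurl in the repo file that follows. The function name write_local_repo and its arguments are hypothetical. The target directory is a parameter, so you can try the function outside /etc/yum.repos.d first:

```bash
#!/usr/bin/env bash
# Hypothetical helper: write_local_repo [REPO_DIR] [PACKAGE_DIR]
# Writes REPO_DIR/ceph.repo, pointing yum at a local package directory.
write_local_repo() {
  local repo_dir="${1:-/etc/yum.repos.d}" pkg_dir="${2:-/opt/ceph}"
  mkdir -p "$repo_dir"
  # file:// plus an absolute path gives file:///opt/ceph.
  cat > "$repo_dir/ceph.repo" <<EOF
[ceph]
name=ceph
baseurl=file://${pkg_dir}
gpgcheck=0
enabled=1
EOF
}

# Example on each node (after moving the old *.repo files aside):
# write_local_repo /etc/yum.repos.d /opt/ceph
# yum clean all && yum repolist
```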

[root@ceph-node1 ~]# cd /etc/yum.repos.d/

[root@ceph-node1 yum.repos.d]# mv * /media/

[root@ceph-node1 yum.repos.d]# vi ceph.repo

[root@ceph-node1 yum.repos.d]# cat ceph.repo

[ceph]

name=ceph

baseurl=file:///opt/ceph

gpgcheck=0

enabled=1

2. Deploy the Ceph Cluster

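To deploy the Ceph cluster, use the ceph-deploy tool to install and configure Ceph on the three VMs. ceph-deploy belongs to the Ceph software-defined storage ecosystem and makes it easy to configure and manage a Ceph storage cluster.

2.1 Create the Ceph Cluster

First, install Ceph on ceph-node1 and configure it as a Ceph monitor and OSD node.

(1) Install ceph-deploy on ceph-node1.

[root@ceph-node1 ~]# yum install ceph-deploy -y

(2) On ceph-node1, run the following commands to create a Ceph cluster with ceph-deploy.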

[root@ceph-node1 ~]# mkdir /etc/ceph

[root@ceph-node1 ~]# cd /etc/ceph

[root@ceph-node1 ceph]# sudo yum install -y epel-release

[root@ceph-node1 ceph]# sudo yum install -y python2-pip

[root@ceph-node1 ceph]# python2 -m pip install --upgrade setuptools==44.1.0 --trusted-host pypi.python.org --trusted-host files.pythonhosted.org --trusted-host pypi.org

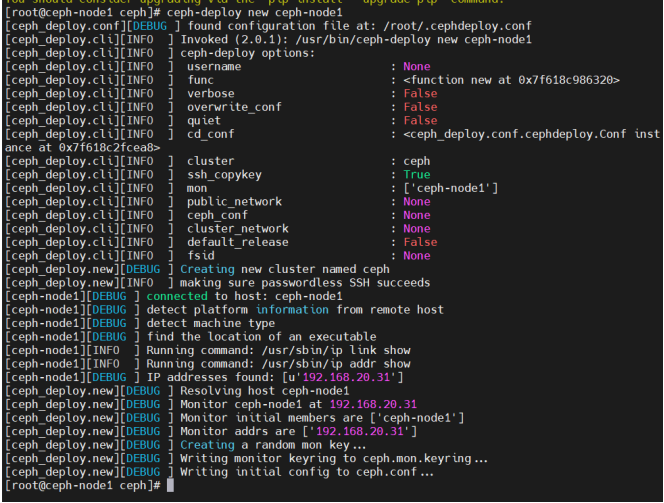

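[root@ceph-node1 ceph]# ceph-deploy new ceph-node1

Note: the Python and setuptools versions must be compatible. setuptools 44.x is the last release series that supports Python 2, which is why the command above pins version 44.1.0.

(3) The new subcommand of ceph-deploy creates a new cluster with the default cluster name ceph. It also generates the cluster configuration file and the monitor keyring. Listing the current working directory shows the ceph.conf and ceph.mon.keyring files.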

[root@ceph-node1 ceph]# ll

total 12

-rw-r--r-- 1 root root 229 Sep 20 16:20 ceph.conf

-rw-r--r-- 1 root root 2960 Sep 20 16:20 ceph-deploy-ceph.log

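-rw------- 1 root root 73 Sep 20 16:20 ceph.mon.keyring

(4) On ceph-node1, run the following command to install the Ceph binary packages on all nodes with ceph-deploy. The --no-adjust-repos parameter is required. Without it, ceph-deploy sets up its own repositories and installs epel-release by default, whereas this deployment installs everything from the local repository.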

[root@ceph-node1 ceph]# ceph-deploy install ceph-node1 ceph-node2 ceph-node3 --no-adjust-repos

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

...

...

[ceph-node3][INFO ] Running command: ceph --version

[ceph-node3][DEBUG ] ceph version 14.2.22 (584a20eb0237c657dc0567da126be145106aa47e) nautilus (stable)

(5) ceph-deploy first installs all the dependencies of the Ceph components. Once the command completes successfully, check the Ceph version on every node.
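Instead of logging in to each node, you can run the version check from ceph-node1 in a single loop. The sketch below is minimal and assumes passwordless root SSH from ceph-node1 to every node (ceph-deploy itself relies on this) and the 14.2.22 version string shown below. check_ceph_versions is a hypothetical name:

```bash
#!/usr/bin/env bash
# Hypothetical helper: check_ceph_versions EXPECTED_VERSION NODE...
# Prints each node's "ceph -v" output and returns non-zero if any node
# is unreachable or reports a different version.
check_ceph_versions() {
  local expected="$1"; shift
  local node out rc=0
  for node in "$@"; do
    if ! out="$(ssh "$node" ceph -v 2>&1)"; then
      echo "$node: unreachable or ceph not installed" >&2
      rc=1
      continue
    fi
    echo "$node: $out"
    # "ceph -v" prints e.g. "ceph version 14.2.22 (<sha1>) <release> (stable)".
    case "$out" in
      "ceph version $expected "*) ;;
      *) echo "$node: expected $expected" >&2; rc=1 ;;
    esac
  done
  return "$rc"
}

# Example:
# check_ceph_versions 14.2.22 ceph-node1 ceph-node2 ceph-node3
```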

[root@ceph-node1 ceph]# ceph -v

ceph version 14.2.22 (584a20eb0237c657dc0567da126be145106aa47e) nautilus (stable)

[root@ceph-node2 ~]# ceph -v

ceph version 14.2.22 (584a20eb0237c657dc0567da126be145106aa47e) nautilus (stable)

[root@ceph-node3 ~]# ceph -v

ceph version 14.2.22 (584a20eb0237c657dc0567da126be145106aa47e) nautilus (stable)

(6) Create the first Ceph monitor on ceph-node1.

[root@ceph-node1 ceph]# ceph-deploy mon create-initial

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

...

...

[ceph_deploy.gatherkeys][DEBUG ] Got ceph.bootstrap-rgw.keyring key from ceph-node1.

(7) After the monitor has been created, check the cluster status. At this point the Ceph cluster is not yet healthy.

[root@ceph-node1 ceph]# ceph -s

cluster:

id: e901edda-49e8-4910-a5de-b860da4811c2

health: HEALTH_WARN

mon is allowing insecure global_id reclaim

services:

mon: 1 daemons, quorum ceph-node1 (age 47s)

mgr: no daemons active

osd: 0 osds: 0 up, 0 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 0 B used, 0 B / 0 B avail

pgs:

2.2 Create OSDs

(1) List all available disks on ceph-node1.

[root@ceph-node1 ceph]# ceph-deploy disk list ceph-node1

... ...

[ceph-node1][INFO ] Disk /dev/vda: 53.7 GB, 53687091200 bytes, 104857600 sectors

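[ceph-node1][INFO ] Disk /dev/vdb: 21.5 GB, 21474836480 bytes, 41943040 sectors

(2) Partition the free disk on each system. Run this step on all three nodes.

First unmount the disk, then partition it with fdisk. The disk's device name depends on how it is attached (for example sdb for SCSI/SATA disks, vdb for virtio disks), so use the name that lsblk reports on each node.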
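You can script the interactive fdisk dialogue by feeding it the answers on standard input. The sketch below is minimal and assumes an empty data disk that should receive a single primary partition spanning the whole disk. The function name partition_osd_disk and the DRY_RUN variable are hypothetical. Run it only against the empty data disk, never the system disk:

```bash
#!/usr/bin/env bash
# Hypothetical helper: partition_osd_disk DEVICE
# Creates one primary partition covering the whole (empty) disk.
# Set DRY_RUN=1 to print the answers that would be sent to fdisk instead.
partition_osd_disk() {
  local dev="$1"
  # fdisk answers: n = new partition, p = primary, 1 = partition number,
  # two empty lines accept the default first and last sector, w = write.
  local answers
  answers="$(printf 'n\np\n1\n\n\nw')"
  if [ -n "${DRY_RUN:-}" ]; then
    printf '%s\n' "$answers"
    return 0
  fi
  # Unmount the disk and any of its partitions; ignore "not mounted" errors.
  umount "$dev"* 2>/dev/null || true
  printf '%s\n' "$answers" | fdisk "$dev"
}

# Example (device name as reported by lsblk on that node):
# partition_osd_disk /dev/sdb
```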

(3) After partitioning, add the partitions as OSDs with the following commands:

Node ceph-node1:

[root@ceph-node1 ceph]# ceph-deploy osd create --data /dev/sdb1 ceph-node1

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /usr/bin/ceph-deploy osd create --data /dev/sdb1 ceph-node1

... ...

[ceph-node1][INFO ] Running command: /bin/ceph --cluster=ceph osd stat --format=json

[ceph_deploy.osd][DEBUG ] Host ceph-node1 is now ready for osd use.

Node ceph-node2:

[root@ceph-node1 ceph]# ceph-deploy osd create --data /dev/sdb1 ceph-node2

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /usr/bin/ceph-deploy osd create --data /dev/vdb1 ceph-node2

... ...

[ceph-node2][INFO ] Running command: /bin/ceph --cluster=ceph osd stat --format=json

[ceph_deploy.osd][DEBUG ] Host ceph-node2 is now ready for osd use.

Node ceph-node3:

[root@ceph-node1 ceph]# ceph-deploy osd create --data /dev/sdb1 ceph-node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /usr/bin/ceph-deploy osd create --data /dev/vdb1 ceph-node3

... ...

[ceph-node3][INFO ] Running command: /bin/ceph --cluster=ceph osd stat --format=json

[ceph_deploy.osd][DEBUG ] Host ceph-node3 is now ready for osd use.
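The three ceph-deploy osd create calls above differ only in the host name. The sketch below folds them into a loop and assumes the data partition has the same device name on every node. The function name create_osds and the DRY_RUN variable are hypothetical:

```bash
#!/usr/bin/env bash
# Hypothetical helper: create_osds DATA_DEVICE NODE...
# Runs "ceph-deploy osd create --data DATA_DEVICE NODE" for each node in turn
# and stops at the first failure. Set DRY_RUN=1 to print the commands only.
create_osds() {
  local data_dev="$1"; shift
  local node
  for node in "$@"; do
    if [ -n "${DRY_RUN:-}" ]; then
      echo "ceph-deploy osd create --data $data_dev $node"
    else
      ceph-deploy osd create --data "$data_dev" "$node" || return 1
    fi
  done
}

# Example, run from /etc/ceph on ceph-node1:
# create_osds /dev/sdb1 ceph-node1 ceph-node2 ceph-node3
```

Stopping at the first failure keeps the cluster from ending up with a partial set of OSDs that you have not noticed.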

(4) After adding the OSDs, check the cluster status:

[root@ceph-node1 ceph]# ceph -s

cluster:

id: 09226a46-f96d-4bfa-b20b-9021898ddf53

health: HEALTH_WARN

no active mgr

services:

mon: 1 daemons, quorum ceph-node1

mgr: no daemons active

osd: 3 osds: 3 up, 3 in

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0B

usage: 0B used, 0B / 0B avail

pgs:

The status is a warning because no mgr daemon has been deployed yet. Deploy the mgr daemons as follows:

[root@ceph-node1 ceph]# ceph-deploy mgr create ceph-node1 ceph-node2 ceph-node3

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (2.0.1): /usr/bin/ceph-deploy mgr create ceph-node1 ceph-node2 ceph-node3

... ...

[ceph-node3][INFO ] Running command: systemctl start ceph-mgr@ceph-node3

[ceph-node3][INFO ] Running command: systemctl enable ceph.target

(5) Check the Ceph cluster status again. It is still HEALTH_WARN, and the insecure mode must be disabled:

[root@ceph-node1 ceph]# ceph -s

cluster:

id: e901edda-49e8-4910-a5de-b860da4811c2

health: HEALTH_WARN

mon is allowing insecure global_id reclaim

services:

mon: 1 daemons, quorum ceph-node1 (age 6m)

mgr: ceph-node1(active, since 27s), standbys: ceph-node2, ceph-node3

osd: 3 osds: 3 up (since 47s), 3 in (since 47s)

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 3.0 GiB used, 57 GiB / 60 GiB avail

pgs:

Disable the insecure mode with the following command:

[root@ceph-node1 ceph]# ceph config set mon auth_allow_insecure_global_id_reclaim false

Check the cluster status again. The cluster is now HEALTH_OK.
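In a script, you can poll until the health check clears instead of rerunning ceph -s by hand. The sketch below is minimal and assumes it runs on a node with admin access to the cluster. The function name wait_for_health_ok and its default timeout and interval are hypothetical:

```bash
#!/usr/bin/env bash
# Hypothetical helper: wait_for_health_ok [TIMEOUT_SECONDS] [INTERVAL_SECONDS]
# Polls "ceph health" until it reports HEALTH_OK or the timeout expires.
wait_for_health_ok() {
  local timeout="${1:-300}" interval="${2:-10}" waited=0 status
  while :; do
    status="$(ceph health 2>/dev/null)"
    # "ceph health" prints HEALTH_OK, or HEALTH_WARN/HEALTH_ERR plus a summary.
    case "$status" in
      HEALTH_OK*) echo "$status"; return 0 ;;
    esac
    if [ "$waited" -ge "$timeout" ]; then
      echo "timed out after ${waited}s, last status: ${status:-<no output>}" >&2
      return 1
    fi
    sleep "$interval"
    waited=$((waited + interval))
  done
}

# Example:
# ceph config set mon auth_allow_insecure_global_id_reclaim false
# wait_for_health_ok 120 5
```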

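[root@ceph-node1 ceph]# ceph -s

cluster:

id: e901edda-49e8-4910-a5de-b860da4811c2

health: HEALTH_OK

services:

mon: 1 daemons, quorum ceph-node1 (age 9m)

mgr: ceph-node1(active, since 3m), standbys: ceph-node2, ceph-node3

osd: 3 osds: 3 up (since 3m), 3 in (since 3m)

data:

pools: 0 pools, 0 pgs

objects: 0 objects, 0 B

usage: 3.0 GiB used, 57 GiB / 60 GiB avail

pgs:

(6) Grant admin access to the other nodes for disaster recovery. ceph-deploy admin copies ceph.conf and the client.admin keyring to /etc/ceph on each listed node, so you can run the ceph command from any of them.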

# ceph-deploy admin ceph-node{1,2,3}

[ceph_deploy.conf][DEBUG ] found configuration file at: /root/.cephdeploy.conf

[ceph_deploy.cli][INFO ] Invoked (1.5.31): /usr/bin/ceph-deploy admin ceph-node1 ceph-node2 ceph-node3

[ceph_deploy.admin][DEBUG ] Pushing admin keys and conf to ceph-node1

[ceph_deploy.admin][DEBUG ] Pushing admin keys and conf to ceph-node2

[ceph-node2][DEBUG ] connected to host: ceph-node2

[ceph-node2][DEBUG ] detect platform information from remote host

[ceph_deploy.admin][DEBUG ] Pushing admin keys and conf to ceph-node3

[ceph-node3][DEBUG ] connected to host: ceph-node3

[ceph-node3][DEBUG ] write cluster configuration to /etc/ceph/{cluster}.conf

# chmod +r /etc/ceph/ceph.client.admin.keyring

2.3 Ceph Cluster Operations Commands

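With a working Ceph cluster in place, you can now try out Ceph with a few simple commands.

(1) Check the Ceph installation status.

# ceph status

(2) Check the cluster health.

# ceph health

(3) Check the Ceph monitor quorum status.

# ceph quorum_status --format json-pretty

(4) Dump the Ceph monitor information (the monitor map).

# ceph mon dump

(5) Check cluster usage.

# ceph df

(6) Check the status of the Ceph monitors, OSDs and PGs (placement groups).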

# ceph mon stat

# ceph osd stat

# ceph pg stat
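You can collect the commands above into a single report, which is handy for saving a snapshot before and after a change. The sketch below is minimal and assumes the node has admin access (for example after ceph-deploy admin). The function name ceph_report is hypothetical:

```bash
#!/usr/bin/env bash
# Hypothetical helper: ceph_report
# Runs the status commands listed above in order and prints a header before
# each one. Returns non-zero if any command fails, but still runs the rest.
ceph_report() {
  local -a cmds=(
    "status"
    "health"
    "quorum_status --format json-pretty"
    "mon dump"
    "df"
    "mon stat"
    "osd stat"
    "pg stat"
  )
  local c rc=0
  for c in "${cmds[@]}"; do
    echo "===== ceph $c ====="
    # Word splitting of $c is intended: "mon stat" becomes two arguments.
    # shellcheck disable=SC2086
    ceph $c || rc=1
  done
  return "$rc"
}

# Example:
# ceph_report > "ceph-report-$(date +%F).txt" 2>&1
```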