InternLM Paper Classification Fine-Tuning in Practice (XTuner Edition)

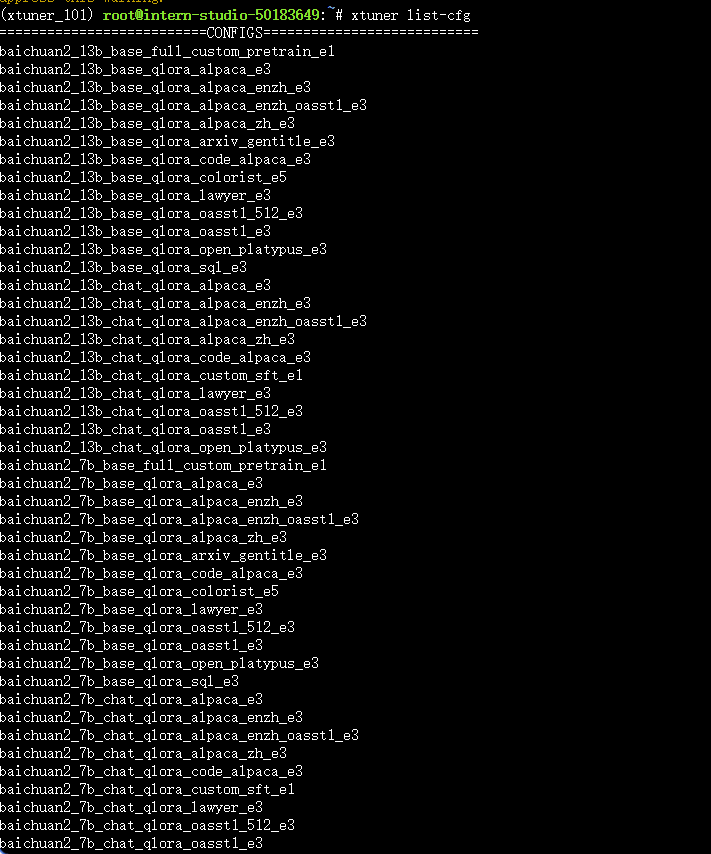

1. Environment Setup

I created a dev machine, choosing the Cuda12.2-conda image and the 100% A100 GPU resource configuration.

Manage the environment with Conda:

conda create -n xtuner_101 python=3.10 -y

conda activate xtuner_101

pip install torch==2.4.0+cu121 torchvision torchaudio --extra-index-url https://download.pytorch.org/whl/cu121

pip install xtuner timm flash_attn datasets==2.21.0 deepspeed==0.16.1

conda install mpi4py -y

# Downgrade transformers for compatibility with the model

pip uninstall transformers -y

pip install transformers==4.48.0 --no-cache-dir -i https://pypi.tuna.tsinghua.edu.cn/simple

Verify the installation:

xtuner list-cfg

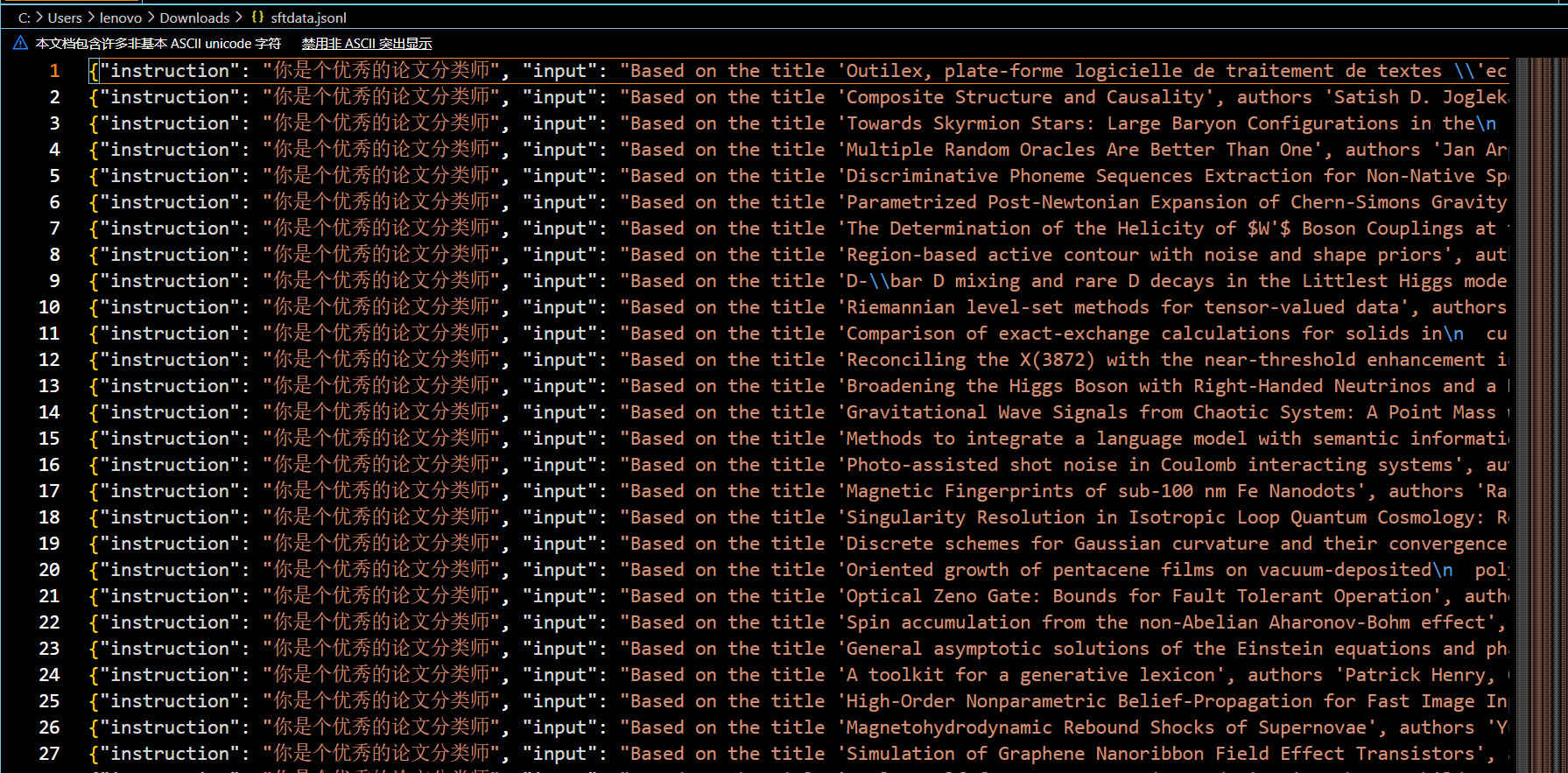
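Besides `xtuner list-cfg`, it can help to confirm from Python that the pinned versions actually ended up in the environment. The following is a minimal sketch, not part of XTuner: the package names and pins are copied from the install commands above, and `check_versions` is a helper name I made up for illustration.

```python
from importlib import metadata

# Packages and pins taken from the install commands above; None means "no pin given".
EXPECTED = {
    "torch": "2.4.0",
    "transformers": "4.48.0",
    "datasets": "2.21.0",
    "deepspeed": "0.16.1",
    "xtuner": None,
}


def check_versions(expected=EXPECTED):
    """Return {package: (installed_version_or_None, matches_pin)}."""
    report = {}
    for name, pin in expected.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            installed = None
        # torch reports e.g. "2.4.0+cu121", so compare on the prefix only.
        ok = installed is not None and (pin is None or installed.startswith(pin))
        report[name] = (installed, ok)
    return report


if __name__ == "__main__":
    for name, (installed, ok) in check_versions().items():
        status = "ok" if ok else "CHECK"
        print(f"{name:14s} {installed or 'NOT INSTALLED':20s} {status}")
```

Any row marked CHECK is worth looking at before starting a long training run, since the transformers downgrade above is easy to undo by accident when installing other packages.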

2. Getting the Data

The data file is sftdata.jsonl, which has already been uploaded.
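The schema of sftdata.jsonl is not shown in this post. Since the config in section 3.1 maps the data with `alpaca_map_fn`, I am assuming Alpaca-style records with `instruction`, `input`, and `output` fields. Under that assumption, a quick sanity check of the file before training might look like this sketch (`validate_jsonl` is a hypothetical helper, not an XTuner function):

```python
import json

# Fields read by alpaca_map_fn (assumed schema for sftdata.jsonl).
REQUIRED_KEYS = ("instruction", "input", "output")


def validate_jsonl(path, required=REQUIRED_KEYS):
    """Return a list of (line_number, problem) for records that would not map cleanly."""
    problems = []
    with open(path, encoding="utf-8") as f:
        for lineno, line in enumerate(f, start=1):
            if not line.strip():
                continue  # ignore blank lines
            try:
                record = json.loads(line)
            except json.JSONDecodeError as exc:
                problems.append((lineno, f"invalid JSON: {exc.msg}"))
                continue
            if not isinstance(record, dict):
                problems.append((lineno, "record is not a JSON object"))
                continue
            missing = [k for k in required if k not in record]
            if missing:
                problems.append((lineno, f"missing keys: {missing}"))
    return problems
```

An empty list from `validate_jsonl("/root/xtuner/datasets/train/sftdata.jsonl")` means every line parsed and carries the three assumed fields.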

3. Training

Create a symbolic link to the model directory:

ln -s /root/share/new_models/Shanghai_AI_Laboratory/internlm2_5-7b-chat ./

3.1 Fine-tuning script

# Copyright (c) OpenMMLab. All rights reserved.

import torch

from datasets import load_dataset

from mmengine.dataset import DefaultSampler

from mmengine.hooks import (
    CheckpointHook,
    DistSamplerSeedHook,
    IterTimerHook,
    LoggerHook,
    ParamSchedulerHook,
)

from mmengine.optim import AmpOptimWrapper, CosineAnnealingLR, LinearLR

from peft import LoraConfig

from torch.optim import AdamW

from transformers import AutoModelForCausalLM, AutoTokenizer, BitsAndBytesConfig

from xtuner.dataset import process_hf_dataset

from xtuner.dataset.collate_fns import default_collate_fn

from xtuner.dataset.map_fns import alpaca_map_fn, template_map_fn_factory

from xtuner.engine.hooks import (
    DatasetInfoHook,
    EvaluateChatHook,
    VarlenAttnArgsToMessageHubHook,
)

from xtuner.engine.runner import TrainLoop

from xtuner.model import SupervisedFinetune

from xtuner.parallel.sequence import SequenceParallelSampler

from xtuner.utils import PROMPT_TEMPLATE, SYSTEM_TEMPLATE

#######################################################################

# PART 1 Settings #

#######################################################################

# Model

pretrained_model_name_or_path = "./internlm2_5-7b-chat"

use_varlen_attn = False

# Data
alpaca_en_path = "/root/xtuner/datasets/train/sftdata.jsonl"  # replace with your own data path
prompt_template = PROMPT_TEMPLATE.internlm2_chat
max_length = 2048
pack_to_max_length = True

# parallel
sequence_parallel_size = 1

# Scheduler & Optimizer

batch_size = 1 # per_device

accumulative_counts = 1

accumulative_counts *= sequence_parallel_size

dataloader_num_workers = 0

max_epochs = 3

optim_type = AdamW

lr = 2e-4

betas = (0.9, 0.999)

weight_decay = 0

max_norm = 1 # grad clip

warmup_ratio = 0.03

# Save
save_steps = 500
save_total_limit = 2  # Maximum checkpoints to keep (-1 means unlimited)

# Evaluate the generation performance during the training
evaluation_freq = 500
SYSTEM = SYSTEM_TEMPLATE.alpaca
evaluation_inputs = ["请给我介绍五个上海的景点", "Please tell me five scenic spots in Shanghai"]

#######################################################################

# PART 2 Model & Tokenizer #

#######################################################################

tokenizer = dict(
    type=AutoTokenizer.from_pretrained,
    pretrained_model_name_or_path=pretrained_model_name_or_path,
    trust_remote_code=True,
    padding_side="right",
)

model = dict(
    type=SupervisedFinetune,
    use_varlen_attn=use_varlen_attn,
    llm=dict(
        type=AutoModelForCausalLM.from_pretrained,
        pretrained_model_name_or_path=pretrained_model_name_or_path,
        trust_remote_code=True,
        torch_dtype=torch.float16,
        quantization_config=dict(
            type=BitsAndBytesConfig,
            load_in_4bit=True,
            load_in_8bit=False,
            llm_int8_threshold=6.0,
            llm_int8_has_fp16_weight=False,
            bnb_4bit_compute_dtype=torch.float16,
            bnb_4bit_use_double_quant=True,
            bnb_4bit_quant_type="nf4",
        ),
    ),
    lora=dict(
        type=LoraConfig,
        r=64,
        lora_alpha=16,
        lora_dropout=0.1,
        bias="none",
        task_type="CAUSAL_LM",
    ),
)

#######################################################################

# PART 3 Dataset & Dataloader #

#######################################################################

alpaca_en = dict(
    type=process_hf_dataset,
    dataset=dict(type=load_dataset, path='json', data_files=alpaca_en_path),
    tokenizer=tokenizer,
    max_length=max_length,
    dataset_map_fn=alpaca_map_fn,
    template_map_fn=dict(type=template_map_fn_factory, template=prompt_template),
    remove_unused_columns=True,
    shuffle_before_pack=True,
    pack_to_max_length=pack_to_max_length,
    use_varlen_attn=use_varlen_attn,
)

sampler = SequenceParallelSampler if sequence_parallel_size > 1 else DefaultSampler

train_dataloader = dict(
    batch_size=batch_size,
    num_workers=dataloader_num_workers,
    dataset=alpaca_en,
    sampler=dict(type=sampler, shuffle=True),
    collate_fn=dict(type=default_collate_fn, use_varlen_attn=use_varlen_attn),
)

#######################################################################

# PART 4 Scheduler & Optimizer #

#######################################################################

# optimizer

optim_wrapper = dict(
    type=AmpOptimWrapper,
    optimizer=dict(type=optim_type, lr=lr, betas=betas, weight_decay=weight_decay),
    clip_grad=dict(max_norm=max_norm, error_if_nonfinite=False),
    accumulative_counts=accumulative_counts,
    loss_scale="dynamic",
    dtype="float16",
)

# learning policy
# More information: https://github.com/open-mmlab/mmengine/blob/main/docs/en/tutorials/param_scheduler.md  # noqa: E501
param_scheduler = [
    dict(
        type=LinearLR,
        start_factor=1e-5,
        by_epoch=True,
        begin=0,
        end=warmup_ratio * max_epochs,
        convert_to_iter_based=True,
    ),
    dict(
        type=CosineAnnealingLR,
        eta_min=0.0,
        by_epoch=True,
        begin=warmup_ratio * max_epochs,
        end=max_epochs,
        convert_to_iter_based=True,
    ),
]

# train, val, test setting
train_cfg = dict(type=TrainLoop, max_epochs=max_epochs)

#######################################################################

# PART 5 Runtime #

#######################################################################

# Log the dialogue periodically during the training process, optional

custom_hooks = [
    dict(type=DatasetInfoHook, tokenizer=tokenizer),
    dict(
        type=EvaluateChatHook,
        tokenizer=tokenizer,
        every_n_iters=evaluation_freq,
        evaluation_inputs=evaluation_inputs,
        system=SYSTEM,
        prompt_template=prompt_template,
    ),
]

if use_varlen_attn:
    custom_hooks += [dict(type=VarlenAttnArgsToMessageHubHook)]

# configure default hooks
default_hooks = dict(
    # record the time of every iteration.
    timer=dict(type=IterTimerHook),
    # print log every 10 iterations.
    logger=dict(type=LoggerHook, log_metric_by_epoch=False, interval=10),
    # enable the parameter scheduler.
    param_scheduler=dict(type=ParamSchedulerHook),
    # save checkpoint per `save_steps`.
    checkpoint=dict(
        type=CheckpointHook,
        by_epoch=False,
        interval=save_steps,
        max_keep_ckpts=save_total_limit,
    ),
    # set sampler seed in distributed environment.
    sampler_seed=dict(type=DistSamplerSeedHook),
)

# configure environment
env_cfg = dict(
    # whether to enable cudnn benchmark
    cudnn_benchmark=False,
    # set multi process parameters
    mp_cfg=dict(mp_start_method="fork", opencv_num_threads=0),
    # set distributed parameters
    dist_cfg=dict(backend="nccl"),
)

# set visualizer
visualizer = None

# set log level
log_level = "INFO"

# load from which checkpoint
load_from = None

# whether to resume training from the loaded checkpoint
resume = False

# Defaults to use random seed and disable `deterministic`
randomness = dict(seed=None, deterministic=False)

# set log processor
log_processor = dict(by_epoch=False)

Change the model path and the data path in the config to your own.

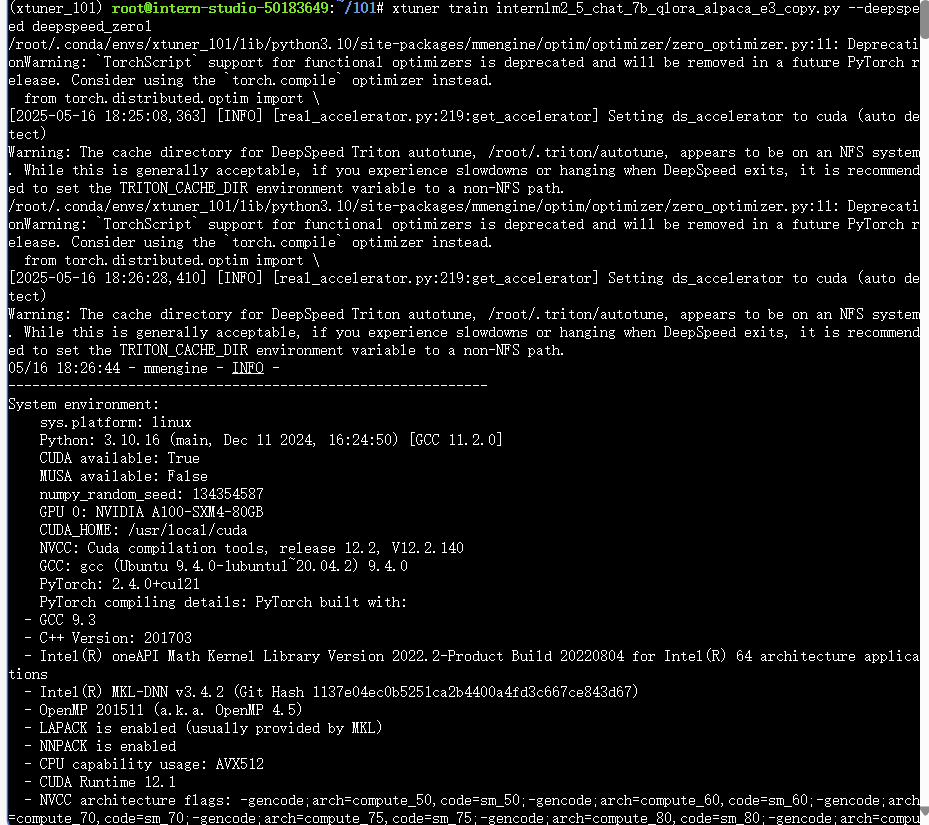
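To get a feel for what the scheduler settings in the config amount to, here is a rough back-of-the-envelope sketch. The hyperparameters are copied from the config. The number of GPUs and the 65 iterations per epoch are my assumptions: the latter is inferred from the final checkpoint being named iter_195.pth after 3 epochs. The `lr_at` function is a closed-form approximation of linear warmup followed by cosine decay, not mmengine's exact implementation.

```python
import math

# Values copied from the config above.
lr = 2e-4
max_epochs = 3
warmup_ratio = 0.03
batch_size = 1  # per device
accumulative_counts = 1
start_factor = 1e-5

# ASSUMPTIONS (not stated in the config): one GPU, and 65 iterations per epoch,
# inferred from the final checkpoint iter_195.pth after 3 epochs.
num_gpus = 1
iters_per_epoch = 65


def lr_at(it, total_iters, warmup_iters):
    """Approximate learning rate at iteration `it` (linear warmup, then cosine decay to 0)."""
    if it < warmup_iters:
        frac = it / max(warmup_iters, 1)
        return lr * (start_factor + (1.0 - start_factor) * frac)
    progress = (it - warmup_iters) / max(total_iters - warmup_iters, 1)
    return lr * 0.5 * (1.0 + math.cos(math.pi * progress))


total_iters = max_epochs * iters_per_epoch
warmup_iters = int(warmup_ratio * max_epochs * iters_per_epoch)
effective_batch = batch_size * accumulative_counts * num_gpus

if __name__ == "__main__":
    print("effective batch size (packed sequences per optimizer step):", effective_batch)
    print("total iterations:", total_iters, "| warmup iterations:", warmup_iters)
    for it in (0, warmup_iters, total_iters // 2, total_iters):
        print(f"iter {it:4d}: lr ~ {lr_at(it, total_iters, warmup_iters):.2e}")
```

With `pack_to_max_length = True` and `max_length = 2048`, each of those packed sequences holds up to 2048 tokens, so a per-device batch size of 1 still covers several short samples per step.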

3.2 Launching fine-tuning

cd /root/101

conda activate xtuner_101

xtuner train internlm2_5_chat_7b_qlora_alpaca_e3_copy.py --deepspeed deepspeed_zero1

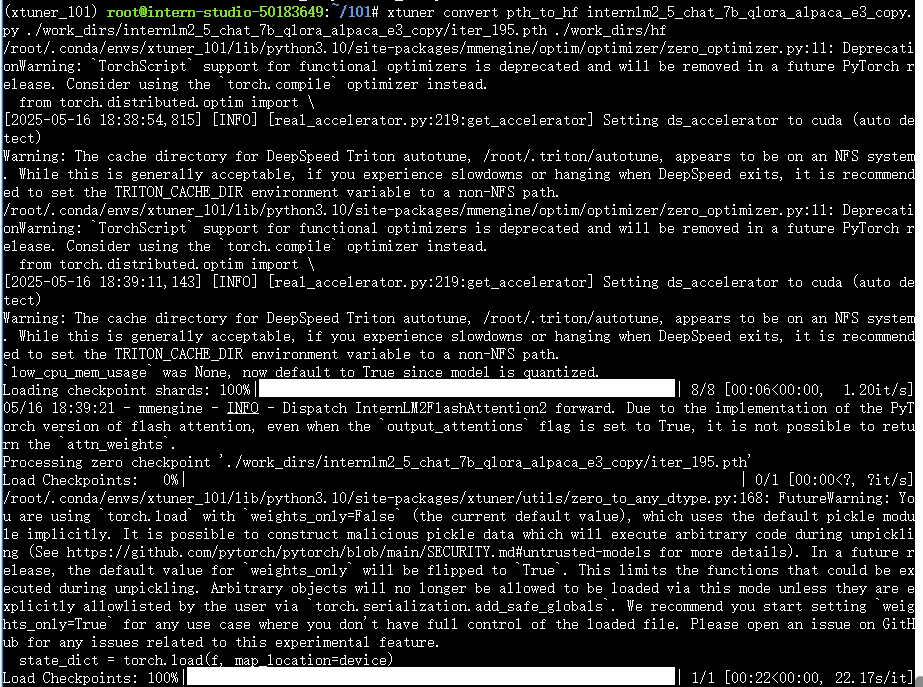
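The conversion step in section 3.3.1 needs the name of the last checkpoint (iter_195.pth in my run), which lives under ./work_dirs/<config name>/. Rather than reading it off the training log, a small helper can pick it out. This is only a sketch; `latest_checkpoint` is a hypothetical name, and it does not care whether the checkpoint entry is a file or a directory.

```python
import re
from pathlib import Path


def latest_checkpoint(work_dir):
    """Return the iter_<N>.pth entry with the largest N under work_dir, or None."""
    pattern = re.compile(r"^iter_(\d+)\.pth$")
    best = None
    for entry in Path(work_dir).iterdir():
        match = pattern.match(entry.name)
        if match:
            step = int(match.group(1))
            if best is None or step > best[0]:
                best = (step, entry)
    return None if best is None else best[1]
```

For example, `latest_checkpoint("./work_dirs/internlm2_5_chat_7b_qlora_alpaca_e3_copy")` returns the path to pass to `xtuner convert pth_to_hf`.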

3.3 Merging

3.3.1 Converting the PTH checkpoint to HuggingFace format

xtuner convert pth_to_hf internlm2_5_chat_7b_qlora_alpaca_e3_copy.py ./work_dirs/internlm2_5_chat_7b_qlora_alpaca_e3_copy/iter_195.pth ./work_dirs/hf

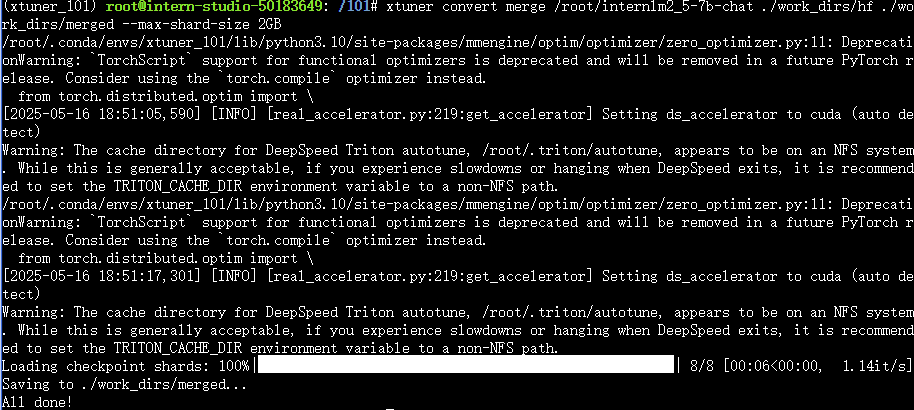

3.3.2 Merging the adapter with the base model

xtuner convert merge \
    /root/internlm2_5-7b-chat \
    ./work_dirs/hf \
    ./work_dirs/merged \
    --max-shard-size 2GB

After these two steps, the merged model is saved in the ./work_dirs/merged directory and can be used directly for inference.

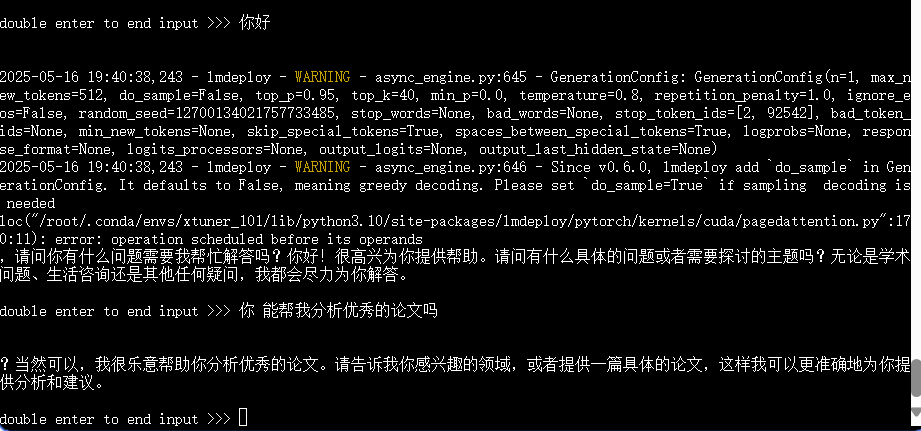
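Conceptually, merging a LoRA adapter folds the low-rank update back into each adapted weight matrix: W' = W + (lora_alpha / r) * B A. The sketch below shows that arithmetic on a toy 2x2 layer in plain Python. It illustrates the math only and is not XTuner's merge code; the matrices are made-up numbers.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merge_lora(weight, lora_a, lora_b, r, lora_alpha):
    """Return W + (lora_alpha / r) * B @ A, the merged weight for one linear layer.

    Shapes: weight is out x in, lora_b is out x r, lora_a is r x in.
    """
    scale = lora_alpha / r
    delta = matmul(lora_b, lora_a)
    return [
        [w + scale * d for w, d in zip(w_row, d_row)]
        for w_row, d_row in zip(weight, delta)
    ]


# Toy layer with a rank-1 adapter (illustrative values only).
W = [[1.0, 0.0], [0.0, 1.0]]
A = [[1.0, 2.0]]      # r x in
B = [[0.5], [-1.0]]   # out x r
merged = merge_lora(W, A, B, r=1, lora_alpha=2)
print(merged)  # [[2.0, 2.0], [-2.0, -3.0]]
```

With the values from my config (r=64, lora_alpha=16) the scale factor is 16 / 64 = 0.25, so the learned update is added at a quarter of its raw magnitude.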

3.4 Inference

from transformers import AutoModelForCausalLM, AutoTokenizer
import time

# Load the model and tokenizer
# model_path = "./lora_output/merged"
model_path = "./internlm2_5-7b-chat"
print(f"Loading model: {model_path}")

start_time = time.time()

tokenizer = AutoTokenizer.from_pretrained(model_path, trust_remote_code=True)
model = AutoModelForCausalLM.from_pretrained(
    model_path, trust_remote_code=True, torch_dtype="auto", device_map="auto"
)


def classify_paper(title, authors, abstract, additional_info=""):
    # Build the input, including the multiple-choice options
    prompt = f"Based on the title '{title}', authors '{authors}', and abstract '{abstract}', please determine the scientific category of this paper. {additional_info}\n\nA. astro-ph\nB. cond-mat.mes-hall\nC. cond-mat.mtrl-sci\nD. cs.CL\nE. cs.CV\nF. cs.LG\nG. gr-qc\nH. hep-ph\nI. hep-th\nJ. quant-ph"

    # Set the system message (kept in Chinese as in my run: "You are an excellent paper classifier")
    messages = [
        {"role": "system", "content": "你是个优秀的论文分类师"},
        {"role": "user", "content": prompt},
    ]

    # Apply the chat template; add_generation_prompt appends the assistant prefix
    # so the model starts answering right away
    input_text = tokenizer.apply_chat_template(
        messages, tokenize=False, add_generation_prompt=True
    )

    # Generate the answer
    inputs = tokenizer(input_text, return_tensors="pt").to(model.device)
    outputs = model.generate(
        **inputs,
        max_new_tokens=10,  # keep generation short; only a brief answer is needed
        temperature=0.1,  # lower the temperature for more deterministic output
        top_p=0.95,
        repetition_penalty=1.0,
    )

    # Decode only the newly generated tokens
    response = tokenizer.decode(outputs[0][inputs.input_ids.shape[1] :], skip_special_tokens=True).strip()

    # If the response contains an option letter, return only that letter
    for option in ["A", "B", "C", "D", "E", "F", "G", "H", "I", "J"]:
        if option in response:
            return option

    # If the response contains no option letter, return the full response
    return response


# Example usage
if __name__ == "__main__":
    title = "Outilex, plate-forme logicielle de traitement de textes 'ecrits"
    authors = "Olivier Blanc (IGM-LabInfo), Matthieu Constant (IGM-LabInfo), Eric Laporte (IGM-LabInfo)"
    abstract = "The Outilex software platform, which will be made available to research, development and industry, comprises software components implementing all the fundamental operations of written text processing: processing without lexicons, exploitation of lexicons and grammars, language resource management. All data are structured in XML formats, and also in more compact formats, either readable or binary, whenever necessary; the required format converters are included in the platform; the grammar formats allow for combining statistical approaches with resource-based approaches. Manually constructed lexicons for French and English, originating from the LADL, and of substantial coverage, will be distributed with the platform under LGPL-LR license."

    result = classify_paper(title, authors, abstract)
    print(result)

    # Compute and print the total elapsed time
    end_time = time.time()
    total_time = end_time - start_time
    print(f"Total elapsed time: {total_time:.2f} s")
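The letter check in `classify_paper` returns the first of A to J that appears anywhere in the response, so a reply such as "Answer: cs.CL" would come back as "A". A stricter parser is one possible alternative. The sketch below is my own variation, not part of the script above: it looks for a standalone option letter first and falls back to matching a category name. The letter-to-category mapping is copied from the prompt.

```python
import re

# Option letters and categories exactly as listed in the prompt above.
CATEGORIES = {
    "A": "astro-ph",
    "B": "cond-mat.mes-hall",
    "C": "cond-mat.mtrl-sci",
    "D": "cs.CL",
    "E": "cs.CV",
    "F": "cs.LG",
    "G": "gr-qc",
    "H": "hep-ph",
    "I": "hep-th",
    "J": "quant-ph",
}

# A letter A-J that is not glued to other letters or digits, e.g. "D", "D.", "(D)".
_OPTION_RE = re.compile(r"(?<![A-Za-z0-9])([A-J])(?![A-Za-z0-9])")


def parse_option(response):
    """Return (letter, category) parsed from a model response, or None if nothing matches."""
    match = _OPTION_RE.search(response)
    if match is not None:
        letter = match.group(1)
        return letter, CATEGORIES[letter]
    # Fallback: the model may have answered with the category name only.
    for letter, name in CATEGORIES.items():
        if name in response:
            return letter, name
    return None
```

One known limitation: a response starting with the pronoun "I" (as in "I think ...") would be read as option I, so this still works best with short answers like the 10-token generations used here.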

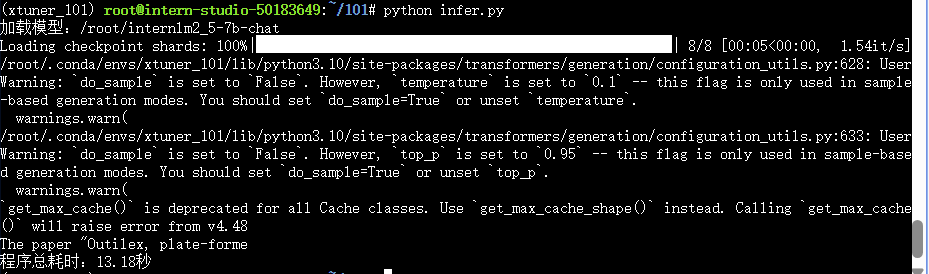

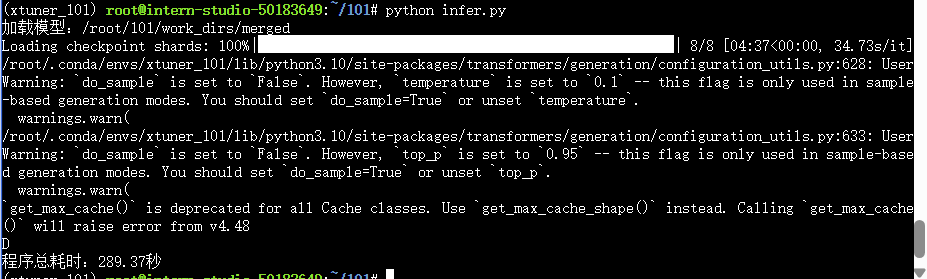

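The inference results are as follows:

Inference with the model before fine-tuning

Inference with the model after fine-tuning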

3.5 Deployment

pip install lmdeploy

python -m lmdeploy.pytorch.chat ./work_dirs/merged \
    --max_new_tokens 256 \
    --temperature 0.8 \
    --top_p 0.95 \
    --seed 0

4. Evaluation (skipped)

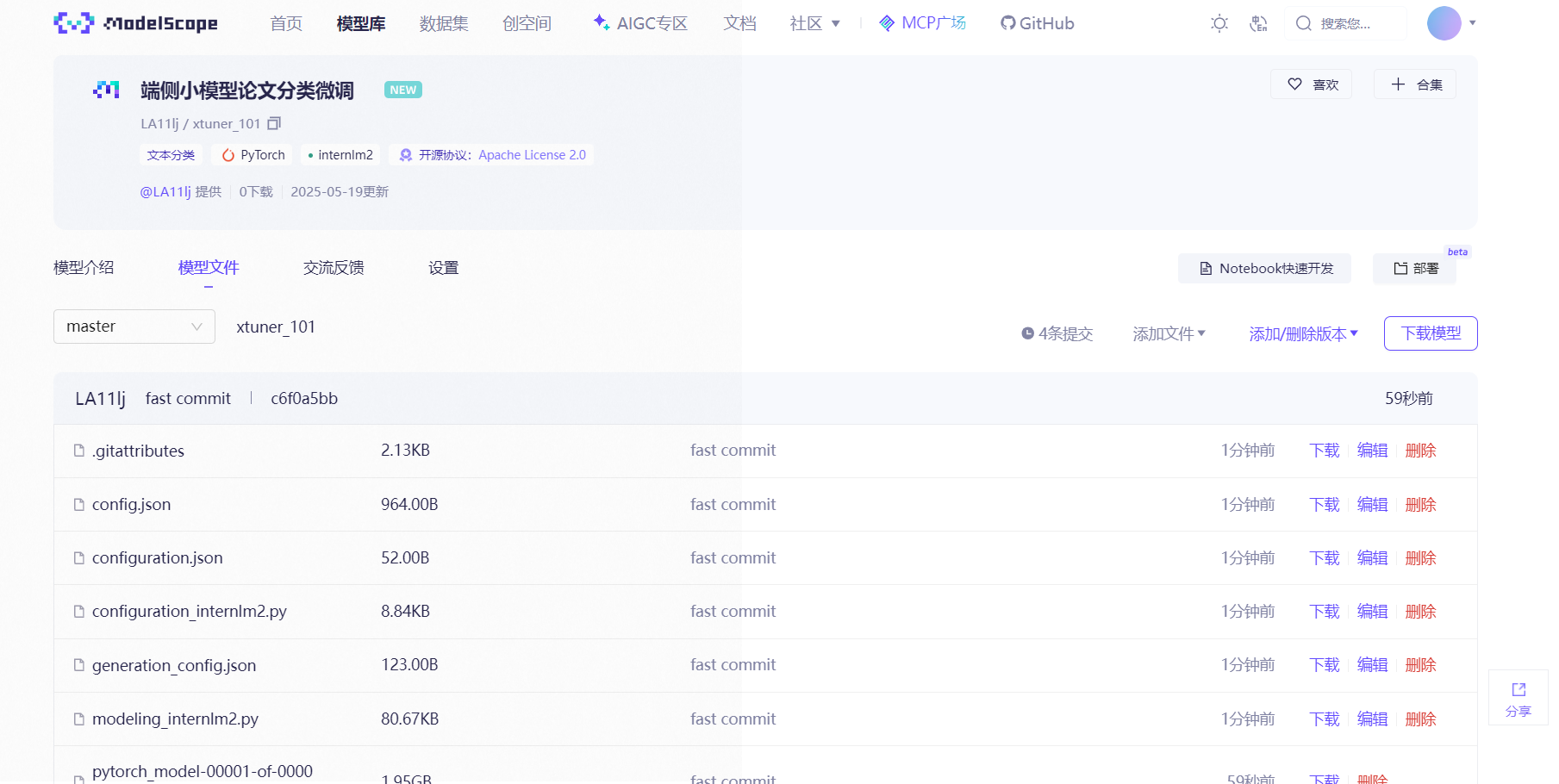

5. Uploading the Model to ModelScope

pip install modelscope

Use the following script:

from modelscope.hub.api import HubApi
from modelscope.hub.constants import Licenses, ModelVisibility

YOUR_ACCESS_TOKEN = 'your own token'
api = HubApi()
api.login(YOUR_ACCESS_TOKEN)

owner_name = 'Raven10086'
model_name = 'InternLM-gmz-camp5'
model_id = f"{owner_name}/{model_name}"

api.create_model(
    model_id,
    visibility=ModelVisibility.PUBLIC,
    license=Licenses.APACHE_V2,
    chinese_name="gmz文本分类微调端侧小模型",
)

api.upload_folder(
    repo_id=f"{owner_name}/{model_name}",
    folder_path='/root/swift_output/InternLM3-8B-SFT-Lora/v5-20250517-164316/checkpoint-62-merged',
    commit_message='fast commit',
)

Screenshot of the successful upload
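A note on the access token in the upload script above: pasting it into the source file makes it easy to leak when the script is shared. One option is to read it from an environment variable instead. This is a minimal sketch; the variable name `MY_MODELSCOPE_TOKEN` and the helper `get_modelscope_token` are hypothetical names chosen for illustration.

```python
import os


def get_modelscope_token(env_var="MY_MODELSCOPE_TOKEN"):
    """Read the access token from an environment variable instead of the source file.

    The variable name is a hypothetical choice for this sketch.
    """
    token = os.environ.get(env_var, "").strip()
    if not token:
        raise RuntimeError(f"Set {env_var} to your ModelScope access token before uploading.")
    return token
```

The login call then becomes `api.login(get_modelscope_token())`, and the script can be shared without editing out the credential.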