Kubernetes: Deploying a k8s Cluster on Ubuntu 24.04

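- I. Initialize the k8s cluster
- 1. Set the hostnames
- 1.2 Disable the firewall
- 1.3 Configure the hosts file so the machines can reach each other by hostname
- 1.4 Disable swap
- 1.5 Set kernel parameters
- II. Install the container runtime
- 1.1 Install Docker
- 1.2 Configure Docker to use systemd as the cgroup driver and set registry mirrors
- 2.1 Install containerd
- 2.2 Edit the configuration file
- III. Install the k8s components
- 1. Install kubeadm, kubectl, and kubelet
- 2. Initialize the master node
- 3. Join the nodes to the cluster
- 4. Install the Calico network plugin
- 5. Verify the cluster status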

Environment

| Role | IP |
|---|---|
| master | 192.168.40.181 |
| node1 | 192.168.40.182 |
| node2 | 192.168.40.183 |

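I. Initialize the k8s cluster

1. Set the hostnames

master1

# Set the hostname (the trailing bash starts a new shell that picks up the new name)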

hostnamectl set-hostname master1 && bash

node1

hostnamectl set-hostname node1 && bash

node2

hostnamectl set-hostname node2 && bash

1.2 Disable the firewall

Run on all three machines

ufw disable

systemctl stop ufw

systemctl disable ufw

1.3 Configure the hosts file so the machines can reach each other by hostname

Run on all three machines

[root@master1 ~]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Created directory '/root/.ssh'.

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:jCUEBllzJUq672lZGR5ck5BTy9C59vXsKAaG3JgbLUM root@master1

The key's randomart image is:

+---[RSA 2048]----+

| .+*.**oo |

| .+ =o+*. |

| . ...o+o |

| . E=o . |

| . +.@S. . o |

| . % = . o |

| .o * . o |

| .o.. o . . |

| .o . . |

+----[SHA256]-----+

# Copy the public key to node1 and node2

[root@master1 ~]# ssh-copy-id node1

[root@master1 ~]# ssh-copy-id node2
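Reaching the machines by name, as in the ssh-copy-id calls above, depends on every machine resolving master1, node1, and node2. A minimal sketch of the matching /etc/hosts entries, assuming the addresses from the environment table. The helper name add_cluster_hosts is hypothetical, and it takes the file path as an argument so it can be tried on a scratch file first:

```shell
#!/bin/sh
# Hypothetical helper: append the cluster's host entries to a hosts file.
# The IP/name pairs are taken from the environment table; adjust them to your network.
add_cluster_hosts() {
    hosts_file="$1"
    while read -r ip name; do
        # Only append when this exact "IP name" line is not already present,
        # so running the helper twice does not duplicate entries.
        if ! grep -qxF "$ip $name" "$hosts_file" 2>/dev/null; then
            printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
        fi
    done <<EOF
192.168.40.181 master1
192.168.40.182 node1
192.168.40.183 node2
EOF
}

# On a real machine (as root, on all three machines):
#   add_cluster_hosts /etc/hosts
```

Matching on the whole line keeps an existing loopback entry for the local hostname from suppressing the real address.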

1.4 Disable swap

Run on all three machines (by default the kubelet will not start while swap is enabled)

swapoff -a

sed -i '/swap/ s%/swap%#/swap%g' /etc/fstab
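The sed expression above keys on the literal /swap path, which fits an entry such as /swap.img. As a hedged alternative for machines whose swap entry is a partition or a UUID, the sketch below comments out any uncommented fstab line whose filesystem type field is swap. The function name comment_swap_entries is hypothetical, and GNU sed (as shipped with Ubuntu) is assumed:

```shell
#!/bin/sh
# Hypothetical helper: comment out swap entries in an fstab-style file.
# Assumes GNU sed (-i and -E), as found on Ubuntu.
comment_swap_entries() {
    fstab="$1"
    # Skip lines that are already comments; on the rest, prefix '#' when the
    # third whitespace-separated field (the filesystem type) is "swap".
    sed -i -E '/^[[:space:]]*#/!s/^([[:space:]]*[^[:space:]]+[[:space:]]+[^[:space:]]+[[:space:]]+swap[[:space:]])/#\1/' "$fstab"
}

# On a real machine (as root):
#   swapoff -a
#   comment_swap_entries /etc/fstab
#   swapon --show    # prints nothing when no swap is active
```

Because commented lines are skipped, running the helper again leaves the file unchanged.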

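1.5 Set kernel parameters

Run on all three machines

# Load the required kernel modules at boot
cat << EOF | sudo tee /etc/modules-load.d/k8s.conf
overlay
br_netfilter
EOF

# Load the modules now

sudo modprobe overlay

sudo modprobe br_netfilter

# Let iptables see bridged traffic and enable IPv4 forwarding
cat << EOF | sudo tee /etc/sysctl.d/k8s.conf
net.bridge.bridge-nf-call-iptables = 1
net.bridge.bridge-nf-call-ip6tables = 1
net.ipv4.ip_forward = 1
EOF

# Apply the settings

sudo sysctl --system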
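Before moving on, it can help to confirm that the three kernel settings took effect. The sketch below reads each key from procfs and compares it with the expected value. The function name check_sysctl and its optional third argument (an alternate procfs root, useful only for dry runs) are assumptions for illustration:

```shell
#!/bin/sh
# Hypothetical helper: compare one sysctl key against an expected value.
# Usage: check_sysctl KEY EXPECTED [PROCFS_ROOT]
# PROCFS_ROOT defaults to /proc/sys; it is a parameter only so the check
# can be exercised against a scratch directory.
check_sysctl() {
    key="$1"; want="$2"; root="${3:-/proc/sys}"
    # A sysctl key maps to a procfs path by turning dots into slashes.
    path="$root/$(printf '%s' "$key" | tr . /)"
    got="$(cat "$path" 2>/dev/null)"
    if [ "$got" = "$want" ]; then
        echo "ok   $key = $got"
    else
        echo "FAIL $key = ${got:-<missing>} (want $want)"
        return 1
    fi
}

# On a real machine:
#   check_sysctl net.bridge.bridge-nf-call-iptables 1
#   check_sysctl net.bridge.bridge-nf-call-ip6tables 1
#   check_sysctl net.ipv4.ip_forward 1
```

A missing net.bridge entry usually means br_netfilter is not loaded, since those keys only appear once the module is present.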

II. Install the container runtime

Run on all three machines

1.1 Install Docker

sudo apt-get update

sudo apt-get install -y docker.io

sudo systemctl enable docker && sudo systemctl start docker

1.2 Configure Docker to use systemd as the cgroup driver and set registry mirrors

# Write the registry mirrors and the cgroup driver (this overwrites any existing daemon.json)
cat << EOF | sudo tee /etc/docker/daemon.json
{
"registry-mirrors":["https://rsbud4vc.mirror.aliyuncs.com","https://registry.docker-cn.com","https://docker.mirrors.ustc.edu.cn","https://dockerhub.azk8s.cn","http://hub-mirror.c.163.com"],
"exec-opts":["native.cgroupdriver=systemd"]
}
EOF

# Restart the service

sudo systemctl daemon-reload && sudo systemctl restart docker

2.1 Install containerd

# Install dependencies

sudo apt-get update

sudo apt-get install -y ca-certificates curl gnupg lsb-release

# Add the repositories (the suite name must match the installed release; Ubuntu 24.04 is noble)

vim /etc/apt/sources.list

deb https://mirrors.aliyun.com/ubuntu/ noble main restricted universe multiverse
deb-src https://mirrors.aliyun.com/ubuntu/ noble main restricted universe multiverse
deb https://mirrors.aliyun.com/ubuntu/ noble-security main restricted universe multiverse
deb-src https://mirrors.aliyun.com/ubuntu/ noble-security main restricted universe multiverse
deb https://mirrors.aliyun.com/ubuntu/ noble-updates main restricted universe multiverse
deb-src https://mirrors.aliyun.com/ubuntu/ noble-updates main restricted universe multiverse
# deb https://mirrors.aliyun.com/ubuntu/ noble-proposed main restricted universe multiverse
# deb-src https://mirrors.aliyun.com/ubuntu/ noble-proposed main restricted universe multiverse
deb https://mirrors.aliyun.com/ubuntu/ noble-backports main restricted universe multiverse
deb-src https://mirrors.aliyun.com/ubuntu/ noble-backports main restricted universe multiverse

# Install containerd
# (containerd.io is the package name in Docker's apt repository; in the Ubuntu archive the package is named containerd)

sudo apt update

sudo apt install -y containerd.io

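2.2 Edit the configuration file

# Generate the default configuration

sudo mkdir -p /etc/containerd

containerd config default | sudo tee /etc/containerd/config.toml

# Edit /etc/containerd/config.toml

Change SystemdCgroup = false to SystemdCgroup = true

Change sandbox_image = "k8s.gcr.io/pause:3.6" to sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.7" (the default value in your file may differ depending on the containerd version)

# Restart containerd

sudo systemctl restart containerd

sudo systemctl enable containerd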
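The two edits to config.toml described above can also be applied non-interactively. A minimal sketch with sed, assuming the containerd 1.x style keys used in the text (SystemdCgroup and sandbox_image) and GNU sed. The function name patch_containerd_config is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: apply the two config.toml changes described in the text.
# Assumes the file contains "SystemdCgroup = false" and a quoted sandbox_image value.
patch_containerd_config() {
    cfg="$1"
    sed -i \
        -e 's/SystemdCgroup = false/SystemdCgroup = true/' \
        -e 's#sandbox_image = ".*"#sandbox_image = "registry.aliyuncs.com/google_containers/pause:3.7"#' \
        "$cfg"
}

# On a real machine (as root), followed by a restart:
#   patch_containerd_config /etc/containerd/config.toml
#   systemctl restart containerd
```

Matching any quoted sandbox_image value, instead of one specific default, keeps the substitution working when the generated file names a different pause image.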

# Configure registry mirrors for containerd

Apply the following on every node in the cluster.

Open /etc/containerd/config.toml (for example with vim), find config_path = "" and change it to the directory below:

config_path = "/etc/containerd/certs.d"

Save and exit.

# Create the directory that holds the mirror configuration

mkdir -p /etc/containerd/certs.d/docker.io/

vim /etc/containerd/certs.d/docker.io/hosts.toml

# Write the following content:

[host."https://ag38j4ig.mirror.aliyuncs.com"]
capabilities = ["pull"]

[host."https://registry.docker-cn.com"]
capabilities = ["pull"]

# Restart containerd

systemctl restart containerd

III. Install the k8s components

1. Install kubeadm, kubectl, and kubelet

Run on all three machines

sudo apt update && sudo apt install -y apt-transport-https curl

# Add the Aliyun APT source for Kubernetes (recommended for users in mainland China)

curl https://mirrors.aliyun.com/kubernetes/apt/doc/apt-key.gpg | sudo apt-key add -

cat << EOF | sudo tee /etc/apt/sources.list.d/kubernetes.list
deb https://mirrors.aliyun.com/kubernetes/apt/ kubernetes-xenial main
EOF

sudo apt update

# Install a specific version, for example 1.27.6-00 (replace it with the latest 1.27 release)

sudo apt install -y kubelet=1.27.6-00 kubeadm=1.27.6-00 kubectl=1.27.6-00

# Prevent these three packages from being upgraded automatically

sudo apt-mark hold kubelet kubeadm kubectl

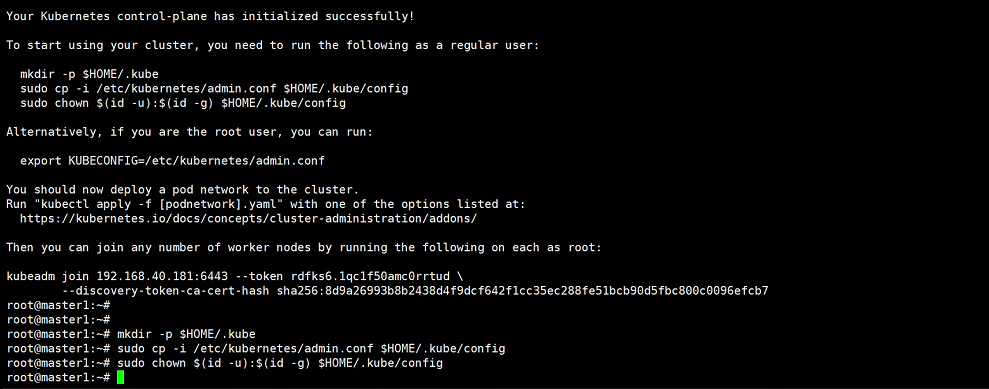

2. Initialize the master node

kubeadm init --image-repository registry.aliyuncs.com/google_containers --pod-network-cidr=10.244.0.0/16 --apiserver-advertise-address=192.168.40.181

Next, configure kubectl as the output instructs (run on the master node):

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

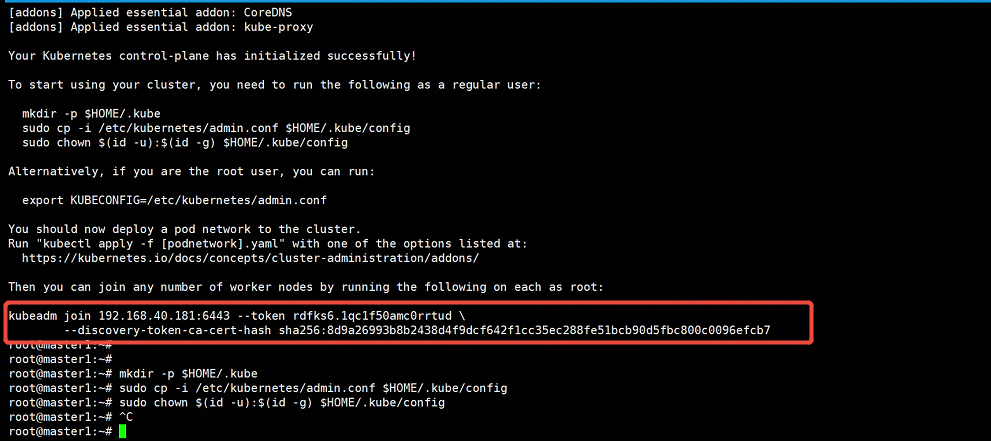

3. Join the nodes to the cluster

On each worker node, run the join command printed by kubeadm init

root@node1:~# kubeadm join 192.168.40.181:6443 --token rdfks6.1qc1f50amc0rrtud --discovery-token-ca-cert-hash sha256:8d9a26993b8b2438d4f9dcf642f1cc35ec288fe51bcb90d5fbc800c0096efcb7

root@node2:~# kubeadm join 192.168.40.181:6443 --token rdfks6.1qc1f50amc0rrtud --discovery-token-ca-cert-hash sha256:8d9a26993b8b2438d4f9dcf642f1cc35ec288fe51bcb90d5fbc800c0096efcb7
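The join command is long and easy to truncate when copied between terminals. As a small sanity check, the sketch below verifies that a token and CA hash have the shape kubeadm expects (a token of the form 6 characters, a dot, then 16 characters, and a hash of sha256: followed by 64 hex digits) before they are pasted on a node. The function name valid_join_args is hypothetical, and the check covers format only, not whether the token is still valid:

```shell
#!/bin/sh
# Hypothetical helper: check the format of a kubeadm bootstrap token and CA cert hash.
# It cannot tell whether the token has expired; bootstrap tokens last 24 hours by default.
valid_join_args() {
    token="$1"; ca_hash="$2"
    printf '%s\n' "$token" | grep -Eqx '[a-z0-9]{6}\.[a-z0-9]{16}' \
        || { echo "token does not look like abcdef.0123456789abcdef" >&2; return 1; }
    printf '%s\n' "$ca_hash" | grep -Eqx 'sha256:[0-9a-f]{64}' \
        || { echo "CA hash is not sha256: plus 64 hex digits" >&2; return 1; }
}

# Example with the values from this walkthrough:
#   valid_join_args rdfks6.1qc1f50amc0rrtud \
#       sha256:8d9a26993b8b2438d4f9dcf642f1cc35ec288fe51bcb90d5fbc800c0096efcb7
#
# If the token has expired, a fresh join command can be printed on the master with:
#   kubeadm token create --print-join-command
```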

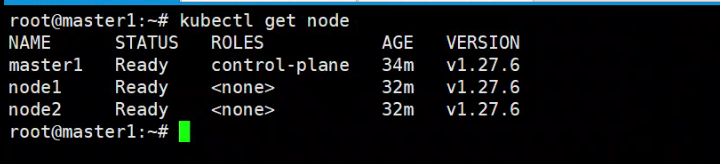

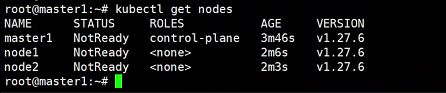

Check the cluster status (the nodes report NotReady until a network plugin is installed in the next step)

root@master1:~# kubectl get nodes

4. Install the Calico network plugin

File shared via Baidu Netdisk: calico.tar.gz

Link: https://pan.baidu.com/s/1BhvSUzKB7DO0pEhliO_k3g?pwd=9kik (extraction code: 9kik)

File shared via Baidu Netdisk: calico.yaml

Link: https://pan.baidu.com/s/1OnT5loSTgacrspK_xMCYSQ?pwd=6f3f (extraction code: 6f3f)

Copy calico.tar.gz, which contains the images Calico needs, to all machines and import it

[root@master1 ~]# ctr -n=k8s.io images import calico.tar.gz

[root@node1 ~]# ctr -n=k8s.io images import calico.tar.gz

[root@node2 ~]# ctr -n=k8s.io images import calico.tar.gz

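Upload calico.yaml to master1 and use it to install the Calico network plugin

Edit calico.yaml

Find CALICO_IPV4POOL_CIDR and set its value to the pod network CIDR passed to kubeadm init

- name: CALICO_IPV4POOL_CIDR
  value: "10.244.0.0/16"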
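The CIDR edit can be scripted as well. The sketch below assumes the manifest ships the setting commented out with a default of 192.168.0.0/16, as the upstream Calico manifest does; the copy shared above may differ, in which case the patterns need adjusting. The function name set_calico_pool_cidr is hypothetical, and GNU sed is assumed:

```shell
#!/bin/sh
# Hypothetical helper: uncomment CALICO_IPV4POOL_CIDR and set its value.
# Assumes the manifest contains these two commented lines (upstream layout):
#   # - name: CALICO_IPV4POOL_CIDR
#   #   value: "192.168.0.0/16"
set_calico_pool_cidr() {
    manifest="$1"; cidr="$2"
    # Dropping "# " from the name line and "#" plus one space from the value
    # line keeps "value" aligned under "name" in the YAML list item.
    sed -i \
        -e 's|# - name: CALICO_IPV4POOL_CIDR|- name: CALICO_IPV4POOL_CIDR|' \
        -e "s|#   value: \"192.168.0.0/16\"|  value: \"$cidr\"|" \
        "$manifest"
}

# On master1, with the CIDR given to kubeadm init:
#   set_calico_pool_cidr calico.yaml 10.244.0.0/16
```

Using the same CIDR as --pod-network-cidr keeps the addresses Calico hands out consistent with what kubeadm configured.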

Apply calico.yaml

root@master1:~# kubectl apply -f calico.yaml

5. Verify the cluster status

root@master1:~# kubectl get nodes
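Once Calico is running, every node should move to the Ready state. A minimal sketch for spotting stragglers: it reads the output of kubectl get nodes --no-headers on standard input and prints the name of each node whose STATUS column is not exactly Ready. The function name not_ready_nodes is hypothetical:

```shell
#!/bin/sh
# Hypothetical helper: list nodes whose STATUS is anything other than "Ready".
# Input: the output of `kubectl get nodes --no-headers` on stdin
# (column 1 is NAME, column 2 is STATUS).
# A cordoned node shows "Ready,SchedulingDisabled" and is listed too.
not_ready_nodes() {
    awk '$2 != "Ready" { print $1 }'
}

# On master1:
#   kubectl get nodes --no-headers | not_ready_nodes
# An alternative that blocks until all nodes are Ready or the timeout expires:
#   kubectl wait --for=condition=Ready nodes --all --timeout=300s
```

Empty output means all three nodes are Ready.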