[Deep Learning] Object Detection with YOLOv3

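Contents

1. Objectives

2. Platform

3. Experiment content

3.1 Drawing anchor boxes

3.2 Computing IoU (intersection over union)

3.3 Drawing all predicted boxes

3.4 Non-maximum suppression

3.5 Generating candidate regions and printing feature map shapes

3.6 Detection head design (computing predicted box locations and classes)

3.7 Object detection in practice: forest pest detection with YOLOv3

4. Summary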

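1. Objectives

- Become familiar with Python syntax

- Understand the principles of object detection

- Implement YOLOv3 object detection and compare the different results

2. Platform

Baidu PaddlePaddle AI Studio

3. Experiment content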

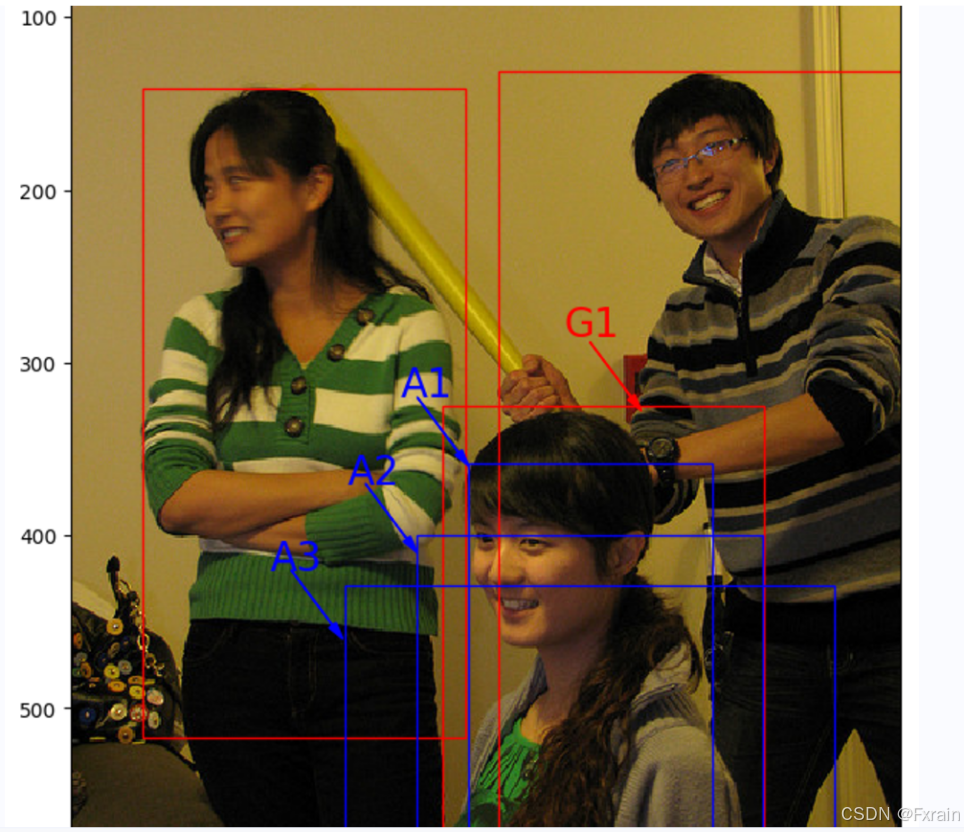

3.1 Drawing anchor boxes

3.1.1 Code

    import numpy as np
    import matplotlib.pyplot as plt
    %matplotlib inline
    import matplotlib.patches as patches
    from matplotlib.image import imread
    import math

    # Draw a rectangle given as [x1, y1, x2, y2] on the given axes
    def draw_rectangle(currentAxis, bbox, edgecolor='k', facecolor='y', fill=False, linestyle='-'):
        rect = patches.Rectangle((bbox[0], bbox[1]), bbox[2]-bbox[0]+1, bbox[3]-bbox[1]+1,
                                 linewidth=1, edgecolor=edgecolor, facecolor=facecolor,
                                 fill=fill, linestyle=linestyle)
        currentAxis.add_patch(rect)

    plt.figure(figsize=(10, 10))
    filename = '/home/aistudio/work/images/section3/000000086956.jpg'
    im = imread(filename)
    plt.imshow(im)

    # Ground-truth boxes in xyxy format
    bbox1 = [214.29, 325.03, 399.82, 631.37]
    bbox2 = [40.93, 141.1, 226.99, 515.73]
    bbox3 = [247.2, 131.62, 480.0, 639.32]

    currentAxis = plt.gca()
    draw_rectangle(currentAxis, bbox1, edgecolor='r')
    draw_rectangle(currentAxis, bbox2, edgecolor='r')
    draw_rectangle(currentAxis, bbox3, edgecolor='r')

    # Draw one anchor box per (scale, ratio) pair around the given center
    def draw_anchor_box(center, length, scales, ratios, img_height, img_width):
        bboxes = []
        for scale in scales:
            for ratio in ratios:
                h = length*scale*math.sqrt(ratio)
                w = length*scale/math.sqrt(ratio)
                # Clip the box to the image borders
                x1 = max(center[0] - w/2., 0.)
                y1 = max(center[1] - h/2., 0.)
                x2 = min(center[0] + w/2. - 1.0, img_width - 1.0)
                y2 = min(center[1] + h/2. - 1.0, img_height - 1.0)
                print(center[0], center[1], w, h)
                bboxes.append([x1, y1, x2, y2])
        for bbox in bboxes:
            draw_rectangle(currentAxis, bbox, edgecolor='b')

    img_height = im.shape[0]
    img_width = im.shape[1]
    draw_anchor_box([300., 500.], 100., [2.0], [0.5, 1.0, 2.0], img_height, img_width)

    # Label the ground-truth box (G1) and the three anchors (A1, A2, A3)
    plt.text(285, 285, 'G1', color='red', fontsize=20)
    plt.arrow(300, 288, 30, 40, color='red', width=0.001, length_includes_head=True,
              head_width=5, head_length=10, shape='full')

    plt.text(190, 320, 'A1', color='blue', fontsize=20)
    plt.arrow(200, 320, 30, 40, color='blue', width=0.001, length_includes_head=True,
              head_width=5, head_length=10, shape='full')

    plt.text(160, 370, 'A2', color='blue', fontsize=20)
    plt.arrow(170, 370, 30, 40, color='blue', width=0.001, length_includes_head=True,
              head_width=5, head_length=10, shape='full')

    plt.text(115, 420, 'A3', color='blue', fontsize=20)
    plt.arrow(127, 420, 30, 40, color='blue', width=0.001, length_includes_head=True,
              head_width=5, head_length=10, shape='full')

    plt.show()

3.1.2 Anchor box results

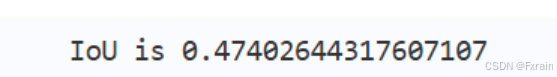
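The anchor sizes follow directly from the formulas in `draw_anchor_box`: the height is `length*scale*sqrt(ratio)` and the width is `length*scale/sqrt(ratio)`, so all anchors at one scale share the same area and differ only in aspect ratio. The sketch below isolates that arithmetic for the values used in this section. `anchor_sizes` is a helper name introduced here for illustration; it is not part of the original code.

```python
import math

def anchor_sizes(length, scales, ratios):
    """Return (w, h) for every scale/ratio pair, as computed in draw_anchor_box."""
    sizes = []
    for scale in scales:
        for ratio in ratios:
            h = length * scale * math.sqrt(ratio)
            w = length * scale / math.sqrt(ratio)
            sizes.append((w, h))
    return sizes

# Same arguments as the draw_anchor_box call in this section
sizes = anchor_sizes(100.0, [2.0], [0.5, 1.0, 2.0])
for w, h in sizes:
    print(f"w={w:.2f}, h={h:.2f}, area={w * h:.0f}, h/w={h / w:.2f}")
```

Each printed row has the same area (`(length*scale)**2`), while `h/w` equals the requested ratio.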

3.2 Computing IoU (intersection over union)

3.2.1 Code

    # IoU of two boxes in [x1, y1, x2, y2] format (inclusive pixel coordinates, hence the +1)
    def box_iou_xyxy(box1, box2):
        # Area of box1
        x1min, y1min, x1max, y1max = box1[0], box1[1], box1[2], box1[3]
        s1 = (y1max - y1min + 1.) * (x1max - x1min + 1.)
        # Area of box2
        x2min, y2min, x2max, y2max = box2[0], box2[1], box2[2], box2[3]
        s2 = (y2max - y2min + 1.) * (x2max - x2min + 1.)
        # Corners of the intersection rectangle
        xmin = np.maximum(x1min, x2min)
        ymin = np.maximum(y1min, y2min)
        xmax = np.minimum(x1max, x2max)
        ymax = np.minimum(y1max, y2max)
        # Width and height are clamped to 0 when the boxes do not overlap
        inter_h = np.maximum(ymax - ymin + 1., 0.)
        inter_w = np.maximum(xmax - xmin + 1., 0.)
        intersection = inter_h * inter_w
        union = s1 + s2 - intersection
        iou = intersection / union
        return iou

    bbox1 = [100., 100., 200., 200.]
    bbox2 = [120., 120., 220., 220.]
    iou = box_iou_xyxy(bbox1, bbox2)
    print('IoU is {}'.format(iou))

3.2.2 Results

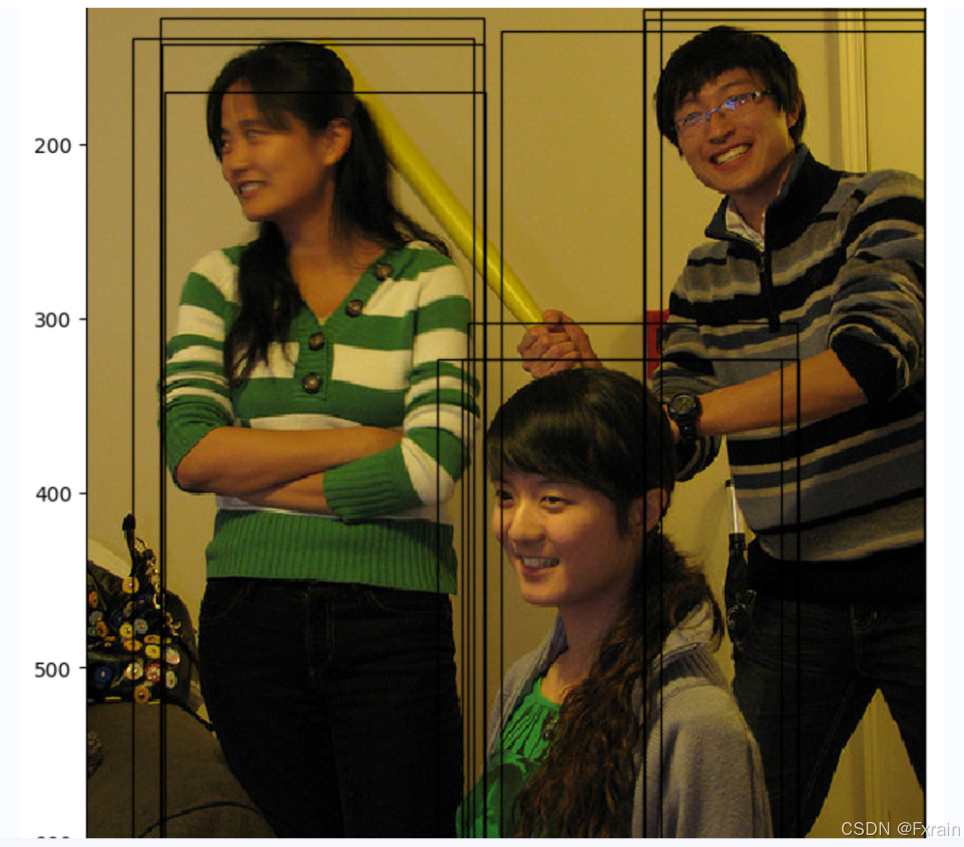
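As a check on the printed value, the IoU of the two example boxes `[100, 100, 200, 200]` and `[120, 120, 220, 220]` can be worked out by hand with the same inclusive-pixel (`+1`) convention used in `box_iou_xyxy`. The symbols below are notation introduced here for this worked example.

```latex
\begin{aligned}
S_1 &= (200 - 100 + 1)^2 = 101^2 = 10201, \qquad S_2 = (220 - 120 + 1)^2 = 10201 \\
w_{\cap} &= \min(200, 220) - \max(100, 120) + 1 = 81, \qquad h_{\cap} = 81 \\
S_{\cap} &= w_{\cap} \, h_{\cap} = 81 \times 81 = 6561 \\
\mathrm{IoU} &= \frac{S_{\cap}}{S_1 + S_2 - S_{\cap}} = \frac{6561}{13841} \approx 0.474
\end{aligned}
```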

3.3 Drawing all predicted boxes

3.3.1 Code

    import numpy as np
    import matplotlib.pyplot as plt
    import matplotlib.patches as patches
    from matplotlib.image import imread
    import math

    def draw_rectangle(currentAxis, bbox, edgecolor='k', facecolor='y', fill=False, linestyle='-'):
        rect = patches.Rectangle((bbox[0], bbox[1]), bbox[2]-bbox[0]+1, bbox[3]-bbox[1]+1,
                                 linewidth=1, edgecolor=edgecolor, facecolor=facecolor,
                                 fill=fill, linestyle=linestyle)
        currentAxis.add_patch(rect)

    plt.figure(figsize=(10, 10))
    filename = '/home/aistudio/000000086956.jpg'
    im = imread(filename)
    plt.imshow(im)
    currentAxis = plt.gca()

    # Predicted boxes in xyxy format, one row per box
    boxes = np.array([[4.21716537e+01, 1.28230896e+02, 2.26547668e+02, 6.00434631e+02],
                      [3.18562988e+02, 1.23168472e+02, 4.79000000e+02, 6.05688416e+02],
                      [2.62704697e+01, 1.39430557e+02, 2.20587097e+02, 6.38959656e+02],
                      [4.24965363e+01, 1.42706665e+02, 2.25955185e+02, 6.35671204e+02],
                      [2.37462646e+02, 1.35731537e+02, 4.79000000e+02, 6.31451294e+02],
                      [3.19390472e+02, 1.29295090e+02, 4.79000000e+02, 6.33003845e+02],
                      [3.28933838e+02, 1.22736115e+02, 4.79000000e+02, 6.39000000e+02],
                      [4.44292603e+01, 1.70438187e+02, 2.26841858e+02, 6.39000000e+02],
                      [2.17988785e+02, 3.02472412e+02, 4.06062927e+02, 6.29106628e+02],
                      [2.00241089e+02, 3.23755096e+02, 3.96929321e+02, 6.36386108e+02],
                      [2.14310303e+02, 3.23443665e+02, 4.06732849e+02, 6.35775269e+02]])

    # Confidence score of each predicted box
    scores = np.array([0.5247661, 0.51759845, 0.86075854, 0.9910175, 0.39170712,
                       0.9297706, 0.5115228, 0.270992, 0.19087596, 0.64201415, 0.879036])

    for box in boxes:
        draw_rectangle(currentAxis, box)

3.3.2 Results

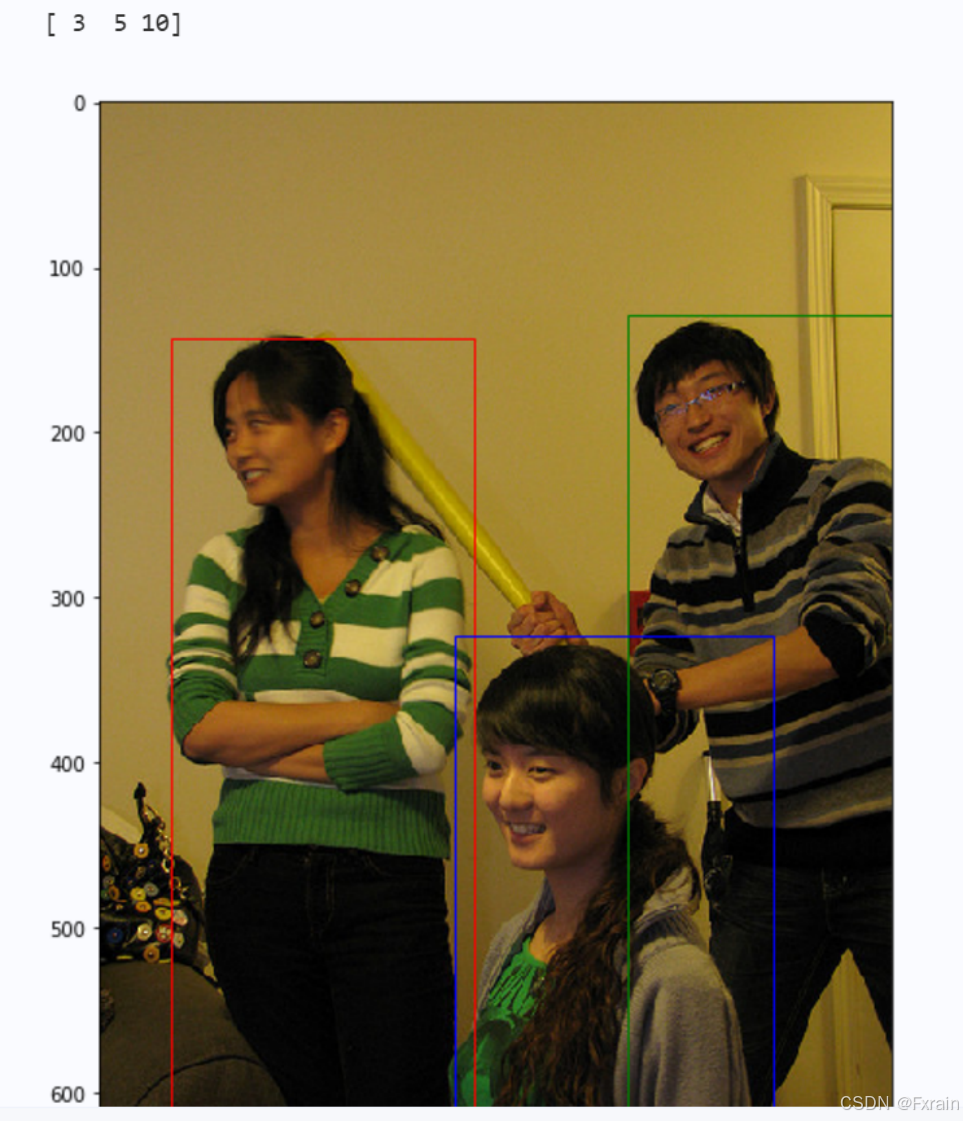

3.4 Non-maximum suppression

3.4.1 Code

    # Greedy NMS: visit boxes in descending score order and keep a box only if
    # its IoU with every box kept so far does not exceed nms_thresh
    def nms(bboxes, scores, score_thresh, nms_thresh):
        inds = np.argsort(scores)
        inds = inds[::-1]
        keep_inds = []
        while(len(inds) > 0):
            cur_ind = inds[0]
            cur_score = scores[cur_ind]
            # Scores are sorted, so every remaining box is below the threshold too
            if cur_score < score_thresh:
                break
            keep = True
            for ind in keep_inds:
                current_box = bboxes[cur_ind]
                remain_box = bboxes[ind]
                iou = box_iou_xyxy(current_box, remain_box)
                if iou > nms_thresh:
                    keep = False
                    break
            if keep:
                keep_inds.append(cur_ind)
            inds = inds[1:]
        return np.array(keep_inds)

    plt.figure(figsize=(10, 10))
    plt.imshow(im)
    currentAxis = plt.gca()
    colors = ['r', 'g', 'b', 'k']

    inds = nms(boxes, scores, score_thresh=0.01, nms_thresh=0.5)
    print(inds)
    for i in range(len(inds)):
        box = boxes[inds[i]]
        draw_rectangle(currentAxis, box, edgecolor=colors[i])

3.4.2 Results (shown in Figure 5)
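To make the suppression rule easier to follow, here is a small self-contained sketch of the same greedy procedure on three toy boxes. It uses plain Python lists instead of NumPy, and the names `iou_xyxy`, `greedy_nms`, `toy_boxes` and `toy_scores` are introduced here for illustration only. The first two boxes overlap heavily (IoU = 100/142, about 0.70), so the lower-scoring one is dropped; the third does not overlap either and is kept.

```python
def iou_xyxy(a, b):
    """IoU of two [x1, y1, x2, y2] boxes, with the same +1 pixel convention as box_iou_xyxy."""
    area_a = (a[2] - a[0] + 1.0) * (a[3] - a[1] + 1.0)
    area_b = (b[2] - b[0] + 1.0) * (b[3] - b[1] + 1.0)
    inter_w = max(min(a[2], b[2]) - max(a[0], b[0]) + 1.0, 0.0)
    inter_h = max(min(a[3], b[3]) - max(a[1], b[1]) + 1.0, 0.0)
    inter = inter_w * inter_h
    return inter / (area_a + area_b - inter)

def greedy_nms(boxes, scores, score_thresh, nms_thresh):
    """Return indices of kept boxes, highest score first."""
    order = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if scores[i] < score_thresh:
            break  # all remaining scores are lower still
        # keep box i only if it does not overlap too much with any kept box
        if all(iou_xyxy(boxes[i], boxes[k]) <= nms_thresh for k in keep):
            keep.append(i)
    return keep

toy_boxes = [[0, 0, 10, 10], [1, 1, 11, 11], [20, 20, 30, 30]]
toy_scores = [0.9, 0.8, 0.7]
kept = greedy_nms(toy_boxes, toy_scores, score_thresh=0.01, nms_thresh=0.5)
print(kept)
```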

3.5 Generating candidate regions and printing feature map shapes

3.5.1 Code

    import paddle
    import paddle.nn.functional as F
    import numpy as np

    # Convolution + batch norm + (optional) leaky ReLU
    class ConvBNLayer(paddle.nn.Layer):
        def __init__(self, ch_in, ch_out, kernel_size=3, stride=1, groups=1, padding=0, act="leaky"):
            super(ConvBNLayer, self).__init__()
            self.conv = paddle.nn.Conv2D(
                in_channels=ch_in,
                out_channels=ch_out,
                kernel_size=kernel_size,
                stride=stride,
                padding=padding,
                groups=groups,
                weight_attr=paddle.ParamAttr(initializer=paddle.nn.initializer.Normal(0., 0.02)),
                bias_attr=False)
            self.batch_norm = paddle.nn.BatchNorm2D(
                num_features=ch_out,
                weight_attr=paddle.ParamAttr(
                    initializer=paddle.nn.initializer.Normal(0., 0.02),
                    regularizer=paddle.regularizer.L2Decay(0.)),
                bias_attr=paddle.ParamAttr(
                    initializer=paddle.nn.initializer.Constant(0.0),
                    regularizer=paddle.regularizer.L2Decay(0.)))
            self.act = act

        def forward(self, inputs):
            out = self.conv(inputs)
            out = self.batch_norm(out)
            if self.act == 'leaky':
                out = F.leaky_relu(x=out, negative_slope=0.1)
            return out

    # Stride-2 convolution that halves the spatial resolution
    class DownSample(paddle.nn.Layer):
        def __init__(self, ch_in, ch_out, kernel_size=3, stride=2, padding=1):
            super(DownSample, self).__init__()
            self.conv_bn_layer = ConvBNLayer(
                ch_in=ch_in,
                ch_out=ch_out,
                kernel_size=kernel_size,
                stride=stride,
                padding=padding)
            self.ch_out = ch_out

        def forward(self, inputs):
            out = self.conv_bn_layer(inputs)
            return out

    # Residual block: 1x1 conv, 3x3 conv, then add the input back
    class BasicBlock(paddle.nn.Layer):
        def __init__(self, ch_in, ch_out):
            super(BasicBlock, self).__init__()
            self.conv1 = ConvBNLayer(ch_in=ch_in, ch_out=ch_out, kernel_size=1, stride=1, padding=0)
            self.conv2 = ConvBNLayer(ch_in=ch_out, ch_out=ch_out*2, kernel_size=3, stride=1, padding=1)

        def forward(self, inputs):
            conv1 = self.conv1(inputs)
            conv2 = self.conv2(conv1)
            out = paddle.add(x=inputs, y=conv2)
            return out

    # A stage made of `count` residual blocks
    class LayerWarp(paddle.nn.Layer):
        def __init__(self, ch_in, ch_out, count, is_test=True):
            super(LayerWarp, self).__init__()
            self.basicblock0 = BasicBlock(ch_in, ch_out)
            self.res_out_list = []
            for i in range(1, count):
                res_out = self.add_sublayer("basic_block_%d" % (i), BasicBlock(ch_out*2, ch_out))
                self.res_out_list.append(res_out)

        def forward(self, inputs):
            y = self.basicblock0(inputs)
            for basic_block_i in self.res_out_list:
                y = basic_block_i(y)
            return y

    # Number of residual blocks in each of the five DarkNet53 stages
    DarkNet_cfg = {53: ([1, 2, 8, 8, 4])}

    class DarkNet53_conv_body(paddle.nn.Layer):
        def __init__(self):
            super(DarkNet53_conv_body, self).__init__()
            self.stages = DarkNet_cfg[53]
            self.stages = self.stages[0:5]
            self.conv0 = ConvBNLayer(ch_in=3, ch_out=32, kernel_size=3, stride=1, padding=1)
            self.downsample0 = DownSample(ch_in=32, ch_out=32 * 2)
            self.darknet53_conv_block_list = []
            self.downsample_list = []
            for i, stage in enumerate(self.stages):
                conv_block = self.add_sublayer(
                    "stage_%d" % (i),
                    LayerWarp(32*(2**(i+1)), 32*(2**i), stage))
                self.darknet53_conv_block_list.append(conv_block)
            for i in range(len(self.stages) - 1):
                downsample = self.add_sublayer(
                    "stage_%d_downsample" % i,
                    DownSample(ch_in=32*(2**(i+1)), ch_out=32*(2**(i+2))))
                self.downsample_list.append(downsample)

        def forward(self, inputs):
            out = self.conv0(inputs)
            out = self.downsample0(out)
            blocks = []
            for i, conv_block_i in enumerate(self.darknet53_conv_block_list):
                out = conv_block_i(out)
                blocks.append(out)
                if i < len(self.stages) - 1:
                    out = self.downsample_list[i](out)
            # Return the last three stage outputs, deepest first: C0, C1, C2
            return blocks[-1:-4:-1]

    import numpy as np
    backbone = DarkNet53_conv_body()
    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    print(C0.shape, C1.shape, C2.shape)

3.6 Detection head design (computing predicted box locations and classes)
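Before building the head, it helps to work out the tensor shapes by hand. The three backbone outputs come from the stages with total strides 32, 16 and 8 and channel counts 1024, 512 and 256 (read off the `DarkNet53_conv_body` code), and the head's final 1x1 convolution produces `NUM_ANCHORS * (NUM_CLASSES + 5)` channels. The sketch below only does this arithmetic; it assumes a square input whose side is a multiple of 32, and `backbone_shapes` and `head_channels` are helper names introduced here.

```python
def backbone_shapes(input_size, batch=1):
    """Expected (N, C, H, W) of C0, C1, C2 for a square input."""
    # (channels, total stride) of the last three DarkNet53 stages, deepest first
    stages = ((1024, 32), (512, 16), (256, 8))
    return [(batch, ch, input_size // stride, input_size // stride)
            for ch, stride in stages]

def head_channels(num_anchors, num_classes):
    """Output channels of the prediction conv: 4 box terms + 1 objectness + class scores, per anchor."""
    return num_anchors * (num_classes + 5)

c0, c1, c2 = backbone_shapes(640)
p0 = (c0[0], head_channels(3, 7), c0[2], c0[3])
print(c0, c1, c2)
print(p0)
```

For the 640x640 input used in this section this gives 20x20, 40x40 and 80x80 feature maps, and a 36-channel P0 on the 20x20 grid.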

3.6.1 Code: applying several convolutions to C0 to obtain the prediction feature map P0

    class YoloDetectionBlock(paddle.nn.Layer):
        def __init__(self, ch_in, ch_out, is_test=True):
            super(YoloDetectionBlock, self).__init__()
            assert ch_out % 2 == 0, \
                "channel {} cannot be divided by 2".format(ch_out)
            # Alternating 1x1 and 3x3 convolutions
            self.conv0 = ConvBNLayer(ch_in=ch_in, ch_out=ch_out, kernel_size=1, stride=1, padding=0)
            self.conv1 = ConvBNLayer(ch_in=ch_out, ch_out=ch_out*2, kernel_size=3, stride=1, padding=1)
            self.conv2 = ConvBNLayer(ch_in=ch_out*2, ch_out=ch_out, kernel_size=1, stride=1, padding=0)
            self.conv3 = ConvBNLayer(ch_in=ch_out, ch_out=ch_out*2, kernel_size=3, stride=1, padding=1)
            self.route = ConvBNLayer(ch_in=ch_out*2, ch_out=ch_out, kernel_size=1, stride=1, padding=0)
            self.tip = ConvBNLayer(ch_in=ch_out, ch_out=ch_out*2, kernel_size=3, stride=1, padding=1)

        def forward(self, inputs):
            out = self.conv0(inputs)
            out = self.conv1(out)
            out = self.conv2(out)
            out = self.conv3(out)
            route = self.route(out)
            tip = self.tip(route)
            return route, tip

    NUM_ANCHORS = 3
    NUM_CLASSES = 7
    num_filters = NUM_ANCHORS * (NUM_CLASSES + 5)

    backbone = DarkNet53_conv_body()
    detection = YoloDetectionBlock(ch_in=1024, ch_out=512)
    conv2d_pred = paddle.nn.Conv2D(in_channels=1024, out_channels=num_filters, kernel_size=1)

    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    route, tip = detection(C0)
    P0 = conv2d_pred(tip)
    print(P0.shape)

3.6.2 Code: computing the probability that a predicted box contains an object

    NUM_ANCHORS = 3
    NUM_CLASSES = 7
    num_filters = NUM_ANCHORS * (NUM_CLASSES + 5)

    backbone = DarkNet53_conv_body()
    detection = YoloDetectionBlock(ch_in=1024, ch_out=512)
    conv2d_pred = paddle.nn.Conv2D(in_channels=1024, out_channels=num_filters, kernel_size=1)

    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    route, tip = detection(C0)
    P0 = conv2d_pred(tip)

    # Split the channel axis into (anchor, 5 + classes)
    reshaped_p0 = paddle.reshape(P0, [-1, NUM_ANCHORS, NUM_CLASSES + 5, P0.shape[2], P0.shape[3]])
    # Index 4 holds the objectness logit; sigmoid turns it into a probability
    pred_objectness = reshaped_p0[:, :, 4, :, :]
    pred_objectness_probability = F.sigmoid(pred_objectness)
    print(pred_objectness.shape, pred_objectness_probability.shape)

3.6.3 Code: computing the predicted box locations

    NUM_ANCHORS = 3
    NUM_CLASSES = 7
    num_filters = NUM_ANCHORS * (NUM_CLASSES + 5)

    backbone = DarkNet53_conv_body()
    detection = YoloDetectionBlock(ch_in=1024, ch_out=512)
    conv2d_pred = paddle.nn.Conv2D(in_channels=1024, out_channels=num_filters, kernel_size=1)

    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    route, tip = detection(C0)
    P0 = conv2d_pred(tip)

    reshaped_p0 = paddle.reshape(P0, [-1, NUM_ANCHORS, NUM_CLASSES + 5, P0.shape[2], P0.shape[3]])
    pred_objectness = reshaped_p0[:, :, 4, :, :]
    pred_objectness_probability = F.sigmoid(pred_objectness)

    # Indices 0..3 hold the raw box terms (tx, ty, tw, th)
    pred_location = reshaped_p0[:, :, 0:4, :, :]
    print(pred_location.shape)

3.6.4 Code: computing predicted box coordinates from P0
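The decoding done by `get_yolo_box_xxyy` in this subsection is easier to check on a single grid cell. The sketch below applies the same steps to one raw prediction `(tx, ty, tw, th)`: sigmoid plus cell offset for the center, `exp` times the anchor size for width and height, normalization by the grid and input size, conversion to corner format, and clipping to `[0, 1]`. `decode_cell` and its argument names are introduced here for illustration, and a square grid is assumed.

```python
import math

def decode_cell(t, row, col, anchor, grid_size, stride):
    """Decode one raw prediction (tx, ty, tw, th) into a normalized [x1, y1, x2, y2] box."""
    tx, ty, tw, th = t
    sigmoid = lambda v: 1.0 / (1.0 + math.exp(-v))
    input_size = grid_size * stride
    # Center: offset inside the cell plus the cell index, normalized by the grid size
    cx = (sigmoid(tx) + col) / grid_size
    cy = (sigmoid(ty) + row) / grid_size
    # Size: anchor scaled by exp(t), normalized by the input size
    w = math.exp(tw) * anchor[0] / input_size
    h = math.exp(th) * anchor[1] / input_size
    # Center format to corner format
    x1, y1 = cx - w / 2.0, cy - h / 2.0
    x2, y2 = x1 + w, y1 + h
    clip = lambda v: min(max(v, 0.0), 1.0)
    return [clip(x1), clip(y1), clip(x2), clip(y2)]

# All-zero logits: the box is centered in cell (10, 10) and has exactly the anchor's size
box = decode_cell((0.0, 0.0, 0.0, 0.0), row=10, col=10,
                  anchor=(116, 90), grid_size=20, stride=32)
print(box)
```

With zero logits the center lands at 10.5/20 = 0.525 in both directions and the box measures 116/640 by 90/640 of the image, which is a quick way to sanity-check the anchor and stride arguments.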

    def sigmoid(x):
        return 1./(1.0 + np.exp(-x))

    # Convert the network output into predicted boxes in normalized [x1, y1, x2, y2] format
    def get_yolo_box_xxyy(pred, anchors, num_classes, downsample):
        batchsize = pred.shape[0]
        num_rows = pred.shape[-2]
        num_cols = pred.shape[-1]
        input_h = num_rows * downsample
        input_w = num_cols * downsample
        num_anchors = len(anchors) // 2

        # pred has shape [N, C, H, W] with C = num_anchors * (5 + num_classes)
        pred = pred.reshape([-1, num_anchors, 5+num_classes, num_rows, num_cols])
        pred_location = pred[:, :, 0:4, :, :]
        pred_location = np.transpose(pred_location, (0,3,4,1,2))

        anchors_this = []
        for ind in range(num_anchors):
            anchors_this.append([anchors[ind*2], anchors[ind*2+1]])
        anchors_this = np.array(anchors_this).astype('float32')

        # Fill in the cell offsets (j, i) and the anchor sizes for every position
        pred_box = np.zeros(pred_location.shape)
        for n in range(batchsize):
            for i in range(num_rows):
                for j in range(num_cols):
                    for k in range(num_anchors):
                        pred_box[n, i, j, k, 0] = j
                        pred_box[n, i, j, k, 1] = i
                        pred_box[n, i, j, k, 2] = anchors_this[k][0]
                        pred_box[n, i, j, k, 3] = anchors_this[k][1]

        # Normalized center (x, y) and size (w, h)
        pred_box[:, :, :, :, 0] = (sigmoid(pred_location[:, :, :, :, 0]) + pred_box[:, :, :, :, 0]) / num_cols
        pred_box[:, :, :, :, 1] = (sigmoid(pred_location[:, :, :, :, 1]) + pred_box[:, :, :, :, 1]) / num_rows
        pred_box[:, :, :, :, 2] = np.exp(pred_location[:, :, :, :, 2]) * pred_box[:, :, :, :, 2] / input_w
        pred_box[:, :, :, :, 3] = np.exp(pred_location[:, :, :, :, 3]) * pred_box[:, :, :, :, 3] / input_h

        # Convert from center format to corner format
        pred_box[:, :, :, :, 0] = pred_box[:, :, :, :, 0] - pred_box[:, :, :, :, 2] / 2.
        pred_box[:, :, :, :, 1] = pred_box[:, :, :, :, 1] - pred_box[:, :, :, :, 3] / 2.
        pred_box[:, :, :, :, 2] = pred_box[:, :, :, :, 0] + pred_box[:, :, :, :, 2]
        pred_box[:, :, :, :, 3] = pred_box[:, :, :, :, 1] + pred_box[:, :, :, :, 3]

        # Clip the coordinates to [0, 1]
        pred_box = np.clip(pred_box, 0., 1.0)
        return pred_box

    NUM_ANCHORS = 3
    NUM_CLASSES = 7
    num_filters = NUM_ANCHORS * (NUM_CLASSES + 5)

    backbone = DarkNet53_conv_body()
    detection = YoloDetectionBlock(ch_in=1024, ch_out=512)
    conv2d_pred = paddle.nn.Conv2D(in_channels=1024, out_channels=num_filters, kernel_size=1)

    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    route, tip = detection(C0)
    P0 = conv2d_pred(tip)

    reshaped_p0 = paddle.reshape(P0, [-1, NUM_ANCHORS, NUM_CLASSES + 5, P0.shape[2], P0.shape[3]])
    pred_objectness = reshaped_p0[:, :, 4, :, :]
    pred_objectness_probability = F.sigmoid(pred_objectness)
    pred_location = reshaped_p0[:, :, 0:4, :, :]

    # Anchor sizes (w, h) used for the P0 level
    anchors = [116, 90, 156, 198, 373, 326]
    pred_boxes = get_yolo_box_xxyy(P0.numpy(), anchors, num_classes=7, downsample=32)
    print(pred_boxes.shape)

3.6.5 Code: computing the probability that an object belongs to each class

    NUM_ANCHORS = 3
    NUM_CLASSES = 7
    num_filters = NUM_ANCHORS * (NUM_CLASSES + 5)

    backbone = DarkNet53_conv_body()
    detection = YoloDetectionBlock(ch_in=1024, ch_out=512)
    conv2d_pred = paddle.nn.Conv2D(in_channels=1024, out_channels=num_filters, kernel_size=1)

    x = np.random.randn(1, 3, 640, 640).astype('float32')
    x = paddle.to_tensor(x)
    C0, C1, C2 = backbone(x)
    route, tip = detection(C0)
    P0 = conv2d_pred(tip)

    reshaped_p0 = paddle.reshape(P0, [-1, NUM_ANCHORS, NUM_CLASSES + 5, P0.shape[2], P0.shape[3]])
    pred_objectness = reshaped_p0[:, :, 4, :, :]
    pred_objectness_probability = F.sigmoid(pred_objectness)
    pred_location = reshaped_p0[:, :, 0:4, :, :]

    # Indices 5 onward hold one logit per class; each class gets its own sigmoid
    pred_classification = reshaped_p0[:, :, 5:5+NUM_CLASSES, :, :]
    pred_classification_probability = F.sigmoid(pred_classification)
    print(pred_classification.shape)

3.7 Object detection in practice: forest pest detection with YOLOv3

3.7.1 Code: building the dictionary that maps class names to numeric class IDs

    INSECT_NAMES = ['Boerner', 'Leconte', 'Linnaeus', 'acuminatus', 'armandi', 'coleoptera', 'linnaeus']

    def get_insect_names():
        insect_category2id = {}
        for i, item in enumerate(INSECT_NAMES):
            insect_category2id[item] = i
        return insect_category2id

    cname2cid = get_insect_names()
    cname2cid

3.7.2 Code: reading the annotations of all files
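Judging from the tags that `get_annotations` reads (`size/width`, `object/name`, `bndbox/xmin`, and so on), the annotation files appear to follow a Pascal VOC-style XML layout. The sketch below parses a hypothetical, hand-written annotation (not taken from the dataset) and applies the same corner-to-center conversion that `get_annotations` uses for `gt_bbox`.

```python
import xml.etree.ElementTree as ET

# Hypothetical annotation, written only to illustrate the expected tags
SAMPLE_XML = """
<annotation>
  <size><width>640</width><height>480</height></size>
  <object>
    <name>Boerner</name>
    <difficult>0</difficult>
    <bndbox><xmin>10</xmin><ymin>20</ymin><xmax>109</xmax><ymax>219</ymax></bndbox>
  </object>
</annotation>
"""

root = ET.fromstring(SAMPLE_XML.strip())
obj = root.find('object')
name = obj.find('name').text
bnd = obj.find('bndbox')
x1, y1, x2, y2 = (float(bnd.find(tag).text) for tag in ('xmin', 'ymin', 'xmax', 'ymax'))

# Corner format to (center_x, center_y, width, height), with the +1 pixel convention
cxcywh = [(x1 + x2) / 2.0, (y1 + y2) / 2.0, x2 - x1 + 1.0, y2 - y1 + 1.0]
print(name, cxcywh)
```

Note that the stored boxes are in center format and absolute pixels; they are only normalized later, when the image is read.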

    import os
    import numpy as np
    import xml.etree.ElementTree as ET

    # Parse every XML annotation under datadir into a list of record dicts
    def get_annotations(cname2cid, datadir):
        filenames = os.listdir(os.path.join(datadir, 'annotations', 'xmls'))
        records = []
        ct = 0
        for fname in filenames:
            fid = fname.split('.')[0]
            fpath = os.path.join(datadir, 'annotations', 'xmls', fname)
            img_file = os.path.join(datadir, 'images', fid + '.jpeg')
            tree = ET.parse(fpath)

            # Fall back to a running counter when the file has no <id> tag
            if tree.find('id') is None:
                im_id = np.array([ct])
            else:
                im_id = np.array([int(tree.find('id').text)])

            objs = tree.findall('object')
            im_w = float(tree.find('size').find('width').text)
            im_h = float(tree.find('size').find('height').text)
            gt_bbox = np.zeros((len(objs), 4), dtype=np.float32)
            gt_class = np.zeros((len(objs), ), dtype=np.int32)
            is_crowd = np.zeros((len(objs), ), dtype=np.int32)
            difficult = np.zeros((len(objs), ), dtype=np.int32)
            for i, obj in enumerate(objs):
                cname = obj.find('name').text
                gt_class[i] = cname2cid[cname]
                _difficult = int(obj.find('difficult').text)
                x1 = float(obj.find('bndbox').find('xmin').text)
                y1 = float(obj.find('bndbox').find('ymin').text)
                x2 = float(obj.find('bndbox').find('xmax').text)
                y2 = float(obj.find('bndbox').find('ymax').text)
                # Clip the box to the image
                x1 = max(0, x1)
                y1 = max(0, y1)
                x2 = min(im_w - 1, x2)
                y2 = min(im_h - 1, y2)
                # Store the box as (center_x, center_y, width, height)
                gt_bbox[i] = [(x1+x2)/2.0, (y1+y2)/2.0, x2-x1+1., y2-y1+1.]
                is_crowd[i] = 0
                difficult[i] = _difficult

            voc_rec = {
                'im_file': img_file,
                'im_id': im_id,
                'h': im_h,
                'w': im_w,
                'is_crowd': is_crowd,
                'gt_class': gt_class,
                'gt_bbox': gt_bbox,
                'gt_poly': [],
                'difficult': difficult
            }
            # Skip images without any annotated object
            if len(objs) != 0:
                records.append(voc_rec)
            ct += 1
        return records

    TRAINDIR = '/home/aistudio/work/insects/train'
    TESTDIR = '/home/aistudio/work/insects/test'
    VALIDDIR = '/home/aistudio/work/insects/val'
    cname2cid = get_insect_names()
    records = get_annotations(cname2cid, TRAINDIR)

    print('records num:{}\n record[0]:{}'.format(len(records), records[0]))

3.7.3 Data preprocessing

(1) Reading the data

    import cv2

    # Pad the ground-truth boxes and labels to a fixed length of MAX_NUM
    def get_bbox(gt_bbox, gt_class):
        MAX_NUM = 50
        gt_bbox2 = np.zeros((MAX_NUM, 4))
        gt_class2 = np.zeros((MAX_NUM,))
        for i in range(len(gt_bbox)):
            # Stop before writing past the fixed-size arrays
            if i >= MAX_NUM:
                break
            gt_bbox2[i, :] = gt_bbox[i, :]
            gt_class2[i] = gt_class[i]
        return gt_bbox2, gt_class2

    # Read one image and return it with boxes normalized to [0, 1]
    def get_img_data_from_file(record):
        im_file = record['im_file']
        h = record['h']
        w = record['w']
        is_crowd = record['is_crowd']
        gt_class = record['gt_class']
        gt_bbox = record['gt_bbox']
        difficult = record['difficult']

        img = cv2.imread(im_file)
        img = cv2.cvtColor(img, cv2.COLOR_BGR2RGB)

        # Check that the image size matches the annotation
        assert img.shape[0] == int(h), \
            "image height of {} inconsistent in record({}) and img file({})".format(im_file, h, img.shape[0])
        assert img.shape[1] == int(w), \
            "image width of {} inconsistent in record({}) and img file({})".format(im_file, w, img.shape[1])

        gt_boxes, gt_labels = get_bbox(gt_bbox, gt_class)

        # Normalize box coordinates by the image size
        gt_boxes[:, 0] = gt_boxes[:, 0] / float(w)
        gt_boxes[:, 1] = gt_boxes[:, 1] / float(h)
        gt_boxes[:, 2] = gt_boxes[:, 2] / float(w)
        gt_boxes[:, 3] = gt_boxes[:, 3] / float(h)

        return img, gt_boxes, gt_labels, (h, w)

    record = records[0]
    img, gt_boxes, gt_labels, scales = get_img_data_from_file(record)
    print('img shape:{}, \n gt_labels:{}, \n scales:{}\n'.format(img.shape, gt_labels, scales))

(2) Data preprocessing

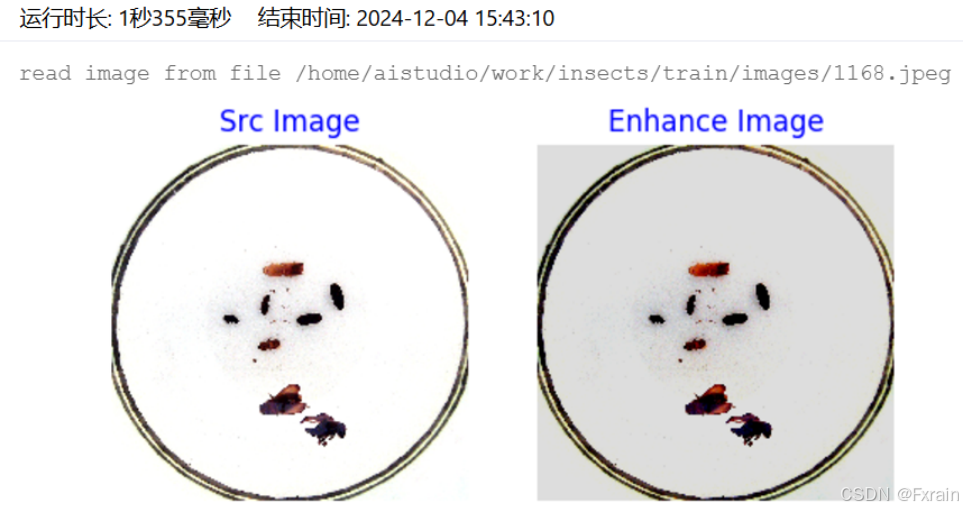

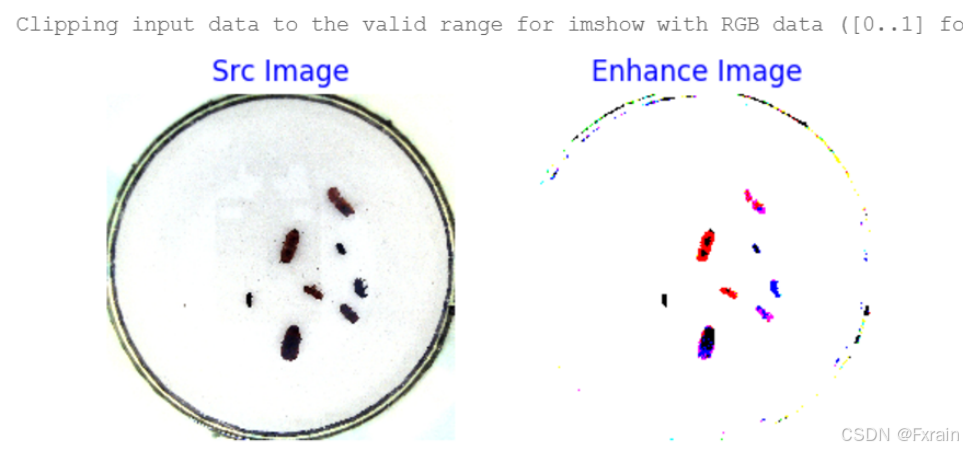

a. Randomly changing brightness, contrast, and color

    import numpy as np
    import cv2
    from PIL import Image, ImageEnhance
    import random

    # Apply brightness, contrast, and color jitter in random order
    def random_distort(img):
        def random_brightness(img, lower=0.5, upper=1.5):
            e = np.random.uniform(lower, upper)
            return ImageEnhance.Brightness(img).enhance(e)

        def random_contrast(img, lower=0.5, upper=1.5):
            e = np.random.uniform(lower, upper)
            return ImageEnhance.Contrast(img).enhance(e)

        def random_color(img, lower=0.5, upper=1.5):
            e = np.random.uniform(lower, upper)
            return ImageEnhance.Color(img).enhance(e)

        ops = [random_brightness, random_contrast, random_color]
        np.random.shuffle(ops)

        img = Image.fromarray(img)
        img = ops[0](img)
        img = ops[1](img)
        img = ops[2](img)
        img = np.asarray(img)
        return img

    import matplotlib.pyplot as plt
    %matplotlib inline

    # Show the source image and the augmented image side by side
    def visualize(srcimg, img_enhance):
        plt.figure(num=2, figsize=(6,12))
        plt.subplot(1,2,1)
        plt.title('Src Image', color='#0000FF')
        plt.axis('off')
        plt.imshow(srcimg)

        srcimg_gtbox = records[0]['gt_bbox']
        srcimg_label = records[0]['gt_class']

        plt.subplot(1,2,2)
        plt.title('Enhance Image', color='#0000FF')
        plt.axis('off')
        plt.imshow(img_enhance)

    image_path = records[0]['im_file']
    print("read image from file {}".format(image_path))
    srcimg = Image.open(image_path)
    srcimg = np.array(srcimg)

    img_enhance = random_distort(srcimg)
    visualize(srcimg, img_enhance)

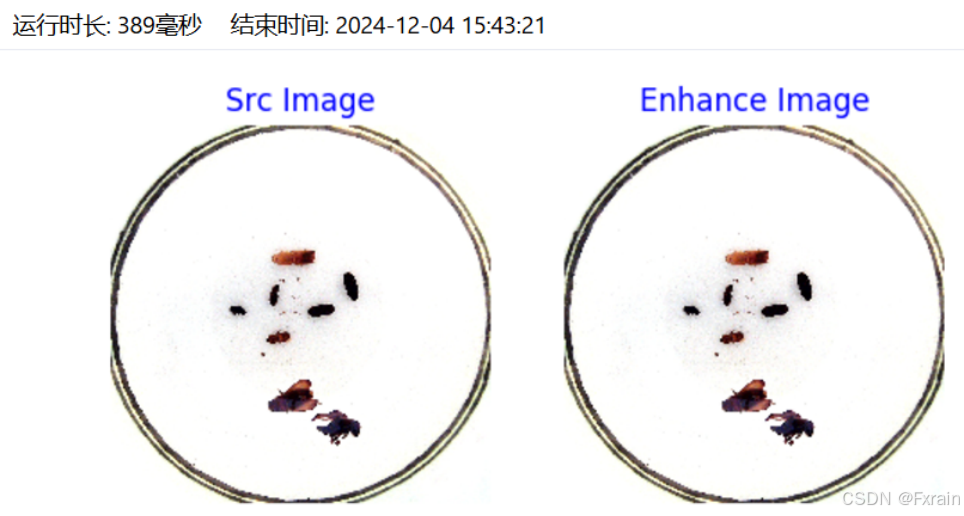

b. Random expansion (padding)

    # Place the image at a random position on a larger canvas and rescale the boxes
    def random_expand(img, gtboxes, max_ratio=4., fill=None, keep_ratio=True, thresh=0.5):
        if random.random() > thresh:
            return img, gtboxes

        if max_ratio < 1.0:
            return img, gtboxes

        h, w, c = img.shape
        ratio_x = random.uniform(1, max_ratio)
        if keep_ratio:
            ratio_y = ratio_x
        else:
            ratio_y = random.uniform(1, max_ratio)
        oh = int(h * ratio_y)
        ow = int(w * ratio_x)
        off_x = random.randint(0, ow - w)
        off_y = random.randint(0, oh - h)

        out_img = np.zeros((oh, ow, c))
        if fill and len(fill) == c:
            for i in range(c):
                out_img[:, :, i] = fill[i] * 255.0

        out_img[off_y:off_y + h, off_x:off_x + w, :] = img
        gtboxes[:, 0] = ((gtboxes[:, 0] * w) + off_x) / float(ow)
        gtboxes[:, 1] = ((gtboxes[:, 1] * h) + off_y) / float(oh)
        gtboxes[:, 2] = gtboxes[:, 2] / ratio_x
        gtboxes[:, 3] = gtboxes[:, 3] / ratio_y

        return out_img.astype('uint8'), gtboxes

    srcimg_gtbox = records[0]['gt_bbox']
    srcimg_label = records[0]['gt_class']

    img_enhance, new_gtbox = random_expand(srcimg, srcimg_gtbox)
    visualize(srcimg, img_enhance)
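The box update in `random_expand` assumes boxes in normalized `(cx, cy, w, h)` format: the center is converted back to pixels, shifted by the paste offset, and re-normalized by the canvas size, while width and height simply shrink by the expansion ratio. The sketch below reproduces that arithmetic for fixed, hand-picked values so the result can be checked by hand. `expand_box` and its arguments are names introduced here; the random sampling is left out on purpose.

```python
def expand_box(box, img_w, img_h, ratio_x, ratio_y, off_x, off_y):
    """Map a normalized (cx, cy, w, h) box from the original image onto the expanded canvas."""
    out_w, out_h = int(img_w * ratio_x), int(img_h * ratio_y)
    cx = (box[0] * img_w + off_x) / float(out_w)
    cy = (box[1] * img_h + off_y) / float(out_h)
    return [cx, cy, box[2] / ratio_x, box[3] / ratio_y]

# A centered box in a 100x100 image, pasted at offset (50, 30) on a 200x200 canvas
new_box = expand_box([0.5, 0.5, 0.2, 0.2], img_w=100, img_h=100,
                     ratio_x=2.0, ratio_y=2.0, off_x=50, off_y=30)
print(new_box)
```

Here the center moves to (100/200, 80/200) = (0.5, 0.4) and both sides halve to 0.1, because the same pixels now occupy a smaller fraction of the canvas.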

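c. Randomly shuffling the order of ground-truth boxes

    # Shuffle boxes and labels together so they stay aligned
    def shuffle_gtbox(gtbox, gtlabel):
        gt = np.concatenate([gtbox, gtlabel[:, np.newaxis]], axis=1)
        idx = np.arange(gt.shape[0])
        np.random.shuffle(idx)
        gt = gt[idx, :]
        return gt[:, :4], gt[:, 4]

3.7.4 Batch data loading. Code: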

    # Pick a random input size (320 to 608, in steps of 32) for training and validation
    def get_img_size(mode):
        if (mode == 'train') or (mode == 'valid'):
            inds = np.array([0,1,2,3,4,5,6,7,8,9])
            ii = np.random.choice(inds)
            img_size = 320 + ii * 32
        else:
            img_size = 608
        return img_size

    # Stack a list of samples into batch arrays
    def make_array(batch_data):
        img_array = np.array([item[0] for item in batch_data], dtype='float32')
        gt_box_array = np.array([item[1] for item in batch_data], dtype='float32')
        gt_labels_array = np.array([item[2] for item in batch_data], dtype='int32')
        img_scale = np.array([item[3] for item in batch_data], dtype='int32')
        return img_array, gt_box_array, gt_labels_array, img_scale

    import paddle

    class TrainDataset(paddle.io.Dataset):
        def __init__(self, datadir, mode='train'):
            self.datadir = datadir
            cname2cid = get_insect_names()
            self.records = get_annotations(cname2cid, datadir)
            self.img_size = 640  # get_img_size(mode)

        def __getitem__(self, idx):
            record = self.records[idx]
            # print("print: ", record)
            # get_img_data is not defined in this write-up; it is expected to read the
            # image, apply the augmentations above, and resize to `size`
            img, gt_bbox, gt_labels, im_shape = get_img_data(record, size=self.img_size)
            return img, gt_bbox, gt_labels, np.array(im_shape)

        def __len__(self):
            return len(self.records)

    train_dataset = TrainDataset(TRAINDIR, mode='train')
    train_loader = paddle.io.DataLoader(train_dataset, batch_size=2, shuffle=True, num_workers=2, drop_last=True)

    img, gt_boxes, gt_labels, im_shape = next(train_loader())
    print('img shape:{}\n gt_boxes shape:{}\n gt_labels shape:{}'.format(img.shape, gt_boxes.shape, gt_labels.shape))

3.7.5 Model construction. Code:

    from yolo import YOLOv3

3.7.6 Computing the loss. Code:

    # Sum the YOLOv3 loss over the three output levels
    def get_loss(num_classes, outputs, gtbox, gtlabel, gtscore=None,
                 anchors=[10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326],
                 anchor_masks=[[6, 7, 8], [3, 4, 5], [0, 1, 2]],
                 ignore_thresh=0.7,
                 use_label_smooth=False):
        losses = []
        downsample = 32
        for i, out in enumerate(outputs):
            anchor_mask_i = anchor_masks[i]
            loss = paddle.vision.ops.yolo_loss(
                x=out,
                gt_box=gtbox,
                gt_label=gtlabel,
                gt_score=gtscore,
                anchors=anchors,
                anchor_mask=anchor_mask_i,
                class_num=num_classes,
                ignore_thresh=ignore_thresh,
                downsample_ratio=downsample,
                use_label_smooth=use_label_smooth)
            losses.append(paddle.mean(loss))
            # The next level has twice the resolution, so half the stride
            downsample = downsample // 2
        return sum(losses)

3.7.7 Model training. Code:
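The training code in this subsection builds its learning rate with `paddle.optimizer.lr.PiecewiseDecay`, using boundaries `[10000, 20000]` and three values that each shrink by `lr_decay`. As a rough illustration of what such a schedule produces, the sketch below implements a piecewise-constant lookup in plain Python. It assumes the usual convention that the value switches when the step counter reaches a boundary, and it assumes the scheduler's counter is advanced once per training step; `piecewise_lr` is a name introduced here, not a Paddle API.

```python
def piecewise_lr(step, boundaries, values):
    """Return the learning rate used at `step` under a piecewise-constant schedule."""
    for boundary, value in zip(boundaries, values):
        if step < boundary:
            return value
    return values[-1]

# Same numbers as get_lr() in the training code
base_lr, lr_decay = 0.0001, 0.1
boundaries = [10000, 20000]
values = [base_lr, base_lr * lr_decay, base_lr * lr_decay * lr_decay]

for step in (0, 9999, 10000, 19999, 20000):
    print(step, piecewise_lr(step, boundaries, values))
```

With `MAX_EPOCH = 1` and a batch size of 10, a run is unlikely to reach the first boundary, so the learning rate would effectively stay at `base_lr` throughout.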

    import time
    import os
    import paddle

    # Piecewise-constant learning rate: decays by lr_decay at each boundary
    def get_lr(base_lr=0.0001, lr_decay=0.1):
        bd = [10000, 20000]
        lr = [base_lr, base_lr * lr_decay, base_lr * lr_decay * lr_decay]
        learning_rate = paddle.optimizer.lr.PiecewiseDecay(boundaries=bd, values=lr)
        return learning_rate

    MAX_EPOCH = 1

    ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]

    ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]

    IGNORE_THRESH = .7
    NUM_CLASSES = 7

    TRAINDIR = '/home/aistudio/work/insects/train'
    TESTDIR = '/home/aistudio/work/insects/test'
    VALIDDIR = '/home/aistudio/work/insects/val'
    paddle.set_device("gpu:0")

    train_dataset = TrainDataset(TRAINDIR, mode='train')
    valid_dataset = TrainDataset(VALIDDIR, mode='valid')
    test_dataset = TrainDataset(VALIDDIR, mode='valid')
    train_loader = paddle.io.DataLoader(train_dataset, batch_size=10, shuffle=True, num_workers=0, drop_last=True, use_shared_memory=False)
    valid_loader = paddle.io.DataLoader(valid_dataset, batch_size=10, shuffle=False, num_workers=0, drop_last=False, use_shared_memory=False)
    model = YOLOv3(num_classes=NUM_CLASSES)
    learning_rate = get_lr()
    opt = paddle.optimizer.Momentum(
        learning_rate=learning_rate,
        momentum=0.9,
        weight_decay=paddle.regularizer.L2Decay(0.0005),
        parameters=model.parameters())
    # opt = paddle.optimizer.Adam(learning_rate=learning_rate, weight_decay=paddle.regularizer.L2Decay(0.0005), parameters=model.parameters())

    if __name__ == '__main__':
        for epoch in range(MAX_EPOCH):
            for i, data in enumerate(train_loader()):
                img, gt_boxes, gt_labels, img_scale = data
                gt_scores = np.ones(gt_labels.shape).astype('float32')
                gt_scores = paddle.to_tensor(gt_scores)
                img = paddle.to_tensor(img)
                gt_boxes = paddle.to_tensor(gt_boxes)
                gt_labels = paddle.to_tensor(gt_labels)
                # Forward pass and loss
                outputs = model(img)
                loss = get_loss(NUM_CLASSES, outputs, gt_boxes, gt_labels, gtscore=gt_scores,
                                anchors=ANCHORS,
                                anchor_masks=ANCHOR_MASKS,
                                ignore_thresh=IGNORE_THRESH,
                                use_label_smooth=False)

                # Backward pass and parameter update
                loss.backward()
                opt.step()
                opt.clear_grad()
                if i % 10 == 0:
                    timestring = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime(time.time()))
                    print('{}[TRAIN]epoch {}, iter {}, output loss: {}'.format(timestring, epoch, i, loss.numpy()))

            # Save the model parameters
            if (epoch % 5 == 0) or (epoch == MAX_EPOCH - 1):
                paddle.save(model.state_dict(), 'yolo_epoch{}'.format(epoch))

            # Evaluate on the validation set at the end of each epoch
            model.eval()
            for i, data in enumerate(valid_loader()):
                img, gt_boxes, gt_labels, img_scale = data
                gt_scores = np.ones(gt_labels.shape).astype('float32')
                gt_scores = paddle.to_tensor(gt_scores)
                img = paddle.to_tensor(img)
                gt_boxes = paddle.to_tensor(gt_boxes)
                gt_labels = paddle.to_tensor(gt_labels)
                outputs = model(img)
                loss = get_loss(NUM_CLASSES, outputs, gt_boxes, gt_labels, gtscore=gt_scores,
                                anchors=ANCHORS,
                                anchor_masks=ANCHOR_MASKS,
                                ignore_thresh=IGNORE_THRESH,
                                use_label_smooth=False)
                if i % 1 == 0:
                    timestring = time.strftime("%Y-%m-%d %H:%M:%S", time.localtime(time.time()))
                    print('{}[VALID]epoch {}, iter {}, output loss: {}'.format(timestring, epoch, i, loss.numpy()))
            model.train()

3.7.8 Model evaluation functions. Code:

    def box_iou_xyxy(box1, box2):
        x1min, y1min, x1max, y1max = box1[0], box1[1], box1[2], box1[3]
        s1 = (y1max - y1min + 1.) * (x1max - x1min + 1.)
        x2min, y2min, x2max, y2max = box2[0], box2[1], box2[2], box2[3]
        s2 = (y2max - y2min + 1.) * (x2max - x2min + 1.)
        xmin = np.maximum(x1min, x2min)
        ymin = np.maximum(y1min, y2min)
        xmax = np.minimum(x1max, x2max)
        ymax = np.minimum(y1max, y2max)
        inter_h = np.maximum(ymax - ymin + 1., 0.)
        inter_w = np.maximum(xmax - xmin + 1., 0.)
        intersection = inter_h * inter_w
        union = s1 + s2 - intersection
        iou = intersection / union
        return iou

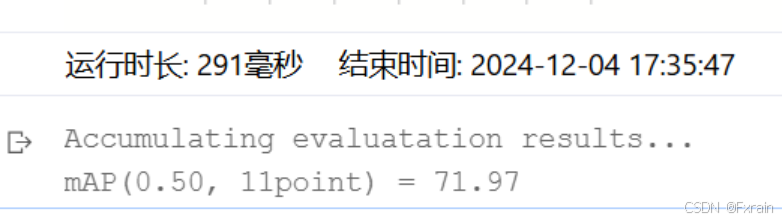

    # Single-class greedy NMS; i and c (image and class index) are only used for debugging output
    def nms(bboxes, scores, score_thresh, nms_thresh, pre_nms_topk, i=0, c=0):
        inds = np.argsort(scores)
        inds = inds[::-1]
        keep_inds = []
        while(len(inds) > 0):
            cur_ind = inds[0]
            cur_score = scores[cur_ind]
            # if score of the box is less than score_thresh, just drop it
            if cur_score < score_thresh:
                break

            keep = True
            for ind in keep_inds:
                current_box = bboxes[cur_ind]
                remain_box = bboxes[ind]
                iou = box_iou_xyxy(current_box, remain_box)
                if iou > nms_thresh:
                    keep = False
                    break
            if i == 0 and c == 4 and cur_ind == 951:
                print('suppressed, ', keep, i, c, cur_ind, ind, iou)
            if keep:
                keep_inds.append(cur_ind)
            inds = inds[1:]

        return np.array(keep_inds)

    # Run NMS separately for every class of every image, then keep the top pos_nms_topk results
    def multiclass_nms(bboxes, scores, score_thresh=0.01, nms_thresh=0.45, pre_nms_topk=1000, pos_nms_topk=100):
        batch_size = bboxes.shape[0]
        class_num = scores.shape[1]
        rets = []
        for i in range(batch_size):
            bboxes_i = bboxes[i]
            scores_i = scores[i]
            ret = []
            for c in range(class_num):
                scores_i_c = scores_i[c]
                keep_inds = nms(bboxes_i, scores_i_c, score_thresh, nms_thresh, pre_nms_topk, i=i, c=c)
                if len(keep_inds) < 1:
                    continue
                keep_bboxes = bboxes_i[keep_inds]
                keep_scores = scores_i_c[keep_inds]
                # Each row: [class, score, x1, y1, x2, y2]
                keep_results = np.zeros([keep_scores.shape[0], 6])
                keep_results[:, 0] = c
                keep_results[:, 1] = keep_scores[:]
                keep_results[:, 2:6] = keep_bboxes[:, :]
                ret.append(keep_results)
            if len(ret) < 1:
                rets.append(ret)
                continue
            ret_i = np.concatenate(ret, axis=0)
            scores_i = ret_i[:, 1]
            if len(scores_i) > pos_nms_topk:
                inds = np.argsort(scores_i)[::-1]
                inds = inds[:pos_nms_topk]
                ret_i = ret_i[inds]

            rets.append(ret_i)

        return rets

3.7.9 Evaluating the model on the test set. Code:

    import json
    import paddle

    ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]

    ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]

    VALID_THRESH = 0.01

    NMS_TOPK = 400
    NMS_POSK = 100
    NMS_THRESH = 0.45

    NUM_CLASSES = 7

    TESTDIR = '/home/aistudio/work/insects/val/images'
    WEIGHT_FILE = '/home/aistudio/yolo_epoch50.pdparams'

    if __name__ == '__main__':
        model = YOLOv3(num_classes=NUM_CLASSES)
        params_file_path = WEIGHT_FILE
        model_state_dict = paddle.load(params_file_path)
        model.load_dict(model_state_dict)
        model.eval()

        total_results = []
        # test_data_loader is not defined in this write-up; it is expected to yield
        # (image names, image data, image scale) batches
        test_loader = test_data_loader(TESTDIR, batch_size=1, mode='test')
        for i, data in enumerate(test_loader()):
            img_name, img_data, img_scale_data = data
            img = paddle.to_tensor(img_data)
            img_scale = paddle.to_tensor(img_scale_data)

            outputs = model.forward(img)
            bboxes, scores = model.get_pred(outputs,
                                            im_shape=img_scale,
                                            anchors=ANCHORS,
                                            anchor_masks=ANCHOR_MASKS,
                                            valid_thresh=VALID_THRESH)

            bboxes_data = bboxes.numpy()
            scores_data = scores.numpy()
            result = multiclass_nms(bboxes_data, scores_data,
                                    score_thresh=VALID_THRESH,
                                    nms_thresh=NMS_THRESH,
                                    pre_nms_topk=NMS_TOPK,
                                    pos_nms_topk=NMS_POSK)
            for j in range(len(result)):
                result_j = result[j]
                img_name_j = img_name[j]
                total_results.append([img_name_j, result_j.tolist()])
            print('processed {} pictures'.format(len(total_results)))

        print('')
        json.dump(total_results, open('pred_results.json', 'w'))

3.7.10 Model prediction. Code:

    import numpy as np
    import matplotlib.pyplot as plt
    import matplotlib.patches as patches
    from matplotlib.image import imread
    import math

    INSECT_NAMES = ['Boerner', 'Leconte', 'Linnaeus', 'acuminatus', 'armandi', 'coleoptera', 'linnaeus']

    def draw_rectangle(currentAxis, bbox, edgecolor='k', facecolor='y', fill=False, linestyle='-'):
        rect = patches.Rectangle((bbox[0], bbox[1]), bbox[2]-bbox[0]+1, bbox[3]-bbox[1]+1,
                                 linewidth=1, edgecolor=edgecolor, facecolor=facecolor,
                                 fill=fill, linestyle=linestyle)
        currentAxis.add_patch(rect)

    # Draw every detection whose score exceeds draw_thresh, colored by class
    def draw_results(result, filename, draw_thresh=0.5):
        plt.figure(figsize=(10, 10))
        # Use matplotlib's imread (RGB); cv2.imread would return BGR and swap the colors in imshow
        im = imread(filename)
        plt.imshow(im)
        currentAxis = plt.gca()
        colors = ['r', 'g', 'b', 'k', 'y', 'c', 'purple']
        for item in result:
            box = item[2:6]
            label = int(item[0])
            name = INSECT_NAMES[label]
            if item[1] > draw_thresh:
                draw_rectangle(currentAxis, box, edgecolor=colors[label])
                plt.text(box[0], box[1], name, fontsize=12, color=colors[label])

    import json
    import paddle

    ANCHORS = [10, 13, 16, 30, 33, 23, 30, 61, 62, 45, 59, 119, 116, 90, 156, 198, 373, 326]
    ANCHOR_MASKS = [[6, 7, 8], [3, 4, 5], [0, 1, 2]]
    VALID_THRESH = 0.01
    NMS_TOPK = 400
    NMS_POSK = 100
    NMS_THRESH = 0.45

    NUM_CLASSES = 7

    if __name__ == '__main__':
        image_name = '/home/aistudio/work/insects/test/images/2642.jpeg'
        params_file_path = '/home/aistudio/yolo_epoch50.pdparams'

        model = YOLOv3(num_classes=NUM_CLASSES)
        model_state_dict = paddle.load(params_file_path)
        model.load_dict(model_state_dict)
        model.eval()

        total_results = []
        # test_data_loader is not defined in this write-up (see 3.7.9)
        test_loader = test_data_loader(image_name, mode='test')
        for i, data in enumerate(test_loader()):
            img_name, img_data, img_scale_data = data
            img = paddle.to_tensor(img_data)
            img_scale = paddle.to_tensor(img_scale_data)

            outputs = model.forward(img)
            bboxes, scores = model.get_pred(outputs,
                                            im_shape=img_scale,
                                            anchors=ANCHORS,
                                            anchor_masks=ANCHOR_MASKS,
                                            valid_thresh=VALID_THRESH)

            bboxes_data = bboxes.numpy()
            scores_data = scores.numpy()
            results = multiclass_nms(bboxes_data, scores_data,
                                     score_thresh=VALID_THRESH,
                                     nms_thresh=NMS_THRESH,
                                     pre_nms_topk=NMS_TOPK,
                                     pos_nms_topk=NMS_POSK)

    result = results[0]
    draw_results(result, image_name, draw_thresh=0.4)

The result is shown below:

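4. Summary

YOLOv3 uses a fully convolutional network, DarkNet53, as its backbone. The residual connections in its basic blocks (the `paddle.add` of input and output in `BasicBlock`) help mitigate vanishing gradients while keeping the network deep. YOLOv3 also predicts at multiple scales: detection runs on three feature maps of different resolution, each responsible for objects of a different size, and features from the different scales are fused to improve accuracy. In addition, every location on a feature map predicts several boxes at once, one per anchor, which further improves detection accuracy.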
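To put the multi-scale design in numbers, the sketch below counts the candidate boxes produced for one image, assuming the three strides (32, 16, 8) and three anchors per location used in the experiments above. `num_predictions` is a helper name introduced here for illustration.

```python
def num_predictions(input_size, strides=(32, 16, 8), anchors_per_cell=3):
    """Count candidate boxes produced across all detection scales for a square input."""
    per_scale = [(input_size // s) ** 2 * anchors_per_cell for s in strides]
    return per_scale, sum(per_scale)

per_scale, total = num_predictions(640)
print(per_scale, total)
```

For a 640x640 input this gives 1200, 4800 and 19200 boxes on the three levels, 25200 in total, which is why score thresholding and non-maximum suppression are needed afterwards.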

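Overall, YOLOv3 is an efficient object detection algorithm: its network structure and training strategy strike a good balance between speed and accuracy, which makes it broadly applicable in practice.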