Python Bootcamp Check-in, Day 45: TensorBoard

1. How TensorBoard works

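The core idea of TensorBoard is simple: during training, data such as loss, accuracy, and images is first written to log files, and a separate tool then renders those log files as charts. You no longer need to print values by hand or plot them with another tool.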

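So it comes down to two steps:

How is the data stored? Write log files first.

During training, the program writes training data (loss, accuracy, and so on) along with information such as the model structure into special log files (event files, named events.out.tfevents.*).

How is the data viewed? Serve the logs as a web page.

Once the logs exist, TensorBoard starts a local web server, reads the log files, and presents the data as charts, images, and text. Relying on print(loss) or hand-written matplotlib plots is tedious: you have to save the data and write the plotting code yourself, and for a run that lasts several days you cannot watch the numbers in real time. TensorBoard takes care of both "storing" and "plotting", and serves a web interface that you can refresh at any time.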
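The two steps can be tried on a toy example before touching a real model. The sketch below is only an illustration: it assumes the torch and tensorboard packages are installed, writes made-up loss values into a throwaway temporary directory, and then checks that an event file appeared on disk.

```python
import glob
import os
import tempfile

from torch.utils.tensorboard import SummaryWriter

# Hypothetical throwaway directory; a real project would use something like runs/<name>.
log_dir = tempfile.mkdtemp(prefix="tb_demo_")

# Step 1: write the data. Each add_scalar call records one point in the event file.
writer = SummaryWriter(log_dir)
for step in range(10):
    fake_loss = 1.0 / (step + 1)  # made-up values, only for illustration
    writer.add_scalar("demo/loss", fake_loss, step)
writer.close()  # flush pending events to disk

# Step 2 happens outside Python: `tensorboard --logdir <log_dir>` reads this file.
event_files = glob.glob(os.path.join(log_dir, "events.out.tfevents.*"))
print(event_files)
```

Pointing tensorboard --logdir at the printed directory should then show a single demo/loss curve.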

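1.1 Automatic log directory management

    log_dir = 'runs/cifar10_mlp_experiment'
    if os.path.exists(log_dir):
        i = 1
        while os.path.exists(f"{log_dir}_{i}"):
            i += 1
        log_dir = f"{log_dir}_{i}"
    writer = SummaryWriter(log_dir)  # key entry point: writes data into the log directory

This avoids reusing a log directory. If runs/cifar10_mlp_experiment already exists, the code switches to runs/cifar10_mlp_experiment_1, _2, and so on, so every training run keeps its own logs.

That makes it easy to compare results across runs (for example, experiments with different hyperparameters).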
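The same suffix logic can be wrapped in a small function and exercised without starting a training run. This is a minimal sketch using only the standard library; the function name next_log_dir is hypothetical, and the demo works inside a temporary folder so it does not touch a real runs/ directory.

```python
import os
import tempfile


def next_log_dir(base: str) -> str:
    """Return base if it is unused, else the first free base_1, base_2, ..."""
    if not os.path.exists(base):
        return base
    i = 1
    while os.path.exists(f"{base}_{i}"):
        i += 1
    return f"{base}_{i}"


# Demo inside a temporary root so nothing in a real runs/ folder is touched.
root = tempfile.mkdtemp()
base = os.path.join(root, "cifar10_mlp_experiment")

first = next_log_dir(base)    # nothing exists yet, so the base name is free
os.makedirs(first)
second = next_log_dir(base)   # base is taken, so "_1" is chosen
os.makedirs(second)
third = next_log_dir(base)    # base and "_1" are taken, so "_2" is chosen

print(os.path.basename(first), os.path.basename(second), os.path.basename(third))
```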

1.2 Logging scalar data (Scalar)

    # Log the loss and accuracy of each batch
    writer.add_scalar('Train/Batch_Loss', batch_loss, global_step)
    writer.add_scalar('Train/Batch_Accuracy', batch_acc, global_step)

    # Log the training metrics of each epoch
    writer.add_scalar('Train/Epoch_Loss', epoch_train_loss, epoch)
    writer.add_scalar('Train/Epoch_Accuracy', epoch_train_acc, epoch)

The curves appear in TensorBoard's SCALARS tab, which can overlay multiple runs for comparison.
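The third argument of add_scalar is the x-axis position of the point. For batch-level curves it has to keep increasing across epochs; one common way to get that, and the formula the homework script at the end of this post uses, is epoch * batches_per_epoch + batch_idx. The helper below is a hypothetical illustration of that arithmetic, not part of the TensorBoard API.

```python
def global_step(epoch: int, batch_idx: int, batches_per_epoch: int) -> int:
    """Map an (epoch, batch) pair to a single, ever-increasing step index."""
    return epoch * batches_per_epoch + batch_idx


# 782 batches per epoch matches CIFAR-10 (50,000 training images) at batch size 64.
batches_per_epoch = 782

steps = [
    global_step(0, 0, batches_per_epoch),    # first batch of the first epoch
    global_step(0, 781, batches_per_epoch),  # last batch of the first epoch
    global_step(1, 0, batches_per_epoch),    # first batch of the second epoch
]
print(steps)  # [0, 781, 782]
```

Keeping a counter that is incremented once per batch, as the scripts in section 2 do, gives the same sequence.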

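1.3 Visualizing the model structure (Graph)

    dataiter = iter(train_loader)
    images, labels = next(dataiter)
    images = images.to(device)
    writer.add_graph(model, images)  # trace the model with a real input batch to build the computation graph

In the TensorBoard UI, the GRAPHS tab shows the model's layer hierarchy (convolutional layers, fully connected layers, and so on).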

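1.4 Visualizing images (Image)

    # Visualize original training images
    img_grid = torchvision.utils.make_grid(images[:8].cpu())  # tile the first 8 images into one grid
    writer.add_image('Original training images', img_grid)

    # Visualize misclassified samples (after training)
    wrong_img_grid = torchvision.utils.make_grid(wrong_images[:display_count])
    writer.add_image('Misclassified samples', wrong_img_grid)

Use this to display original images, the effect of data augmentation, misclassified samples, and so on.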

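1.5 Logging weight and gradient histograms (Histogram)

    if (batch_idx + 1) % 500 == 0:
        for name, param in model.named_parameters():
            writer.add_histogram(f'weights/{name}', param, global_step)  # weight distribution
            if param.grad is not None:
                writer.add_histogram(f'grads/{name}', param.grad, global_step)  # gradient distribution

The HISTOGRAMS tab shows how the parameter distribution of each layer changes during training. Monitoring the distributions of the parameters (weights) and gradients (grads) helps diagnose training problems such as vanishing or exploding gradients.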
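Histograms carry a lot of detail; a single number per layer is sometimes easier to scan for vanishing or exploding gradients. As a possible complement (not something the original code does), the sketch below computes the L2 norm of each parameter's gradient on a tiny stand-in model. The model, data, and the grad_norm/ tag prefix are all assumptions made for illustration.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)

# Tiny stand-in model; the layer sizes are arbitrary.
model = nn.Sequential(nn.Linear(4, 8), nn.ReLU(), nn.Linear(8, 2))

# Random inputs and labels, just to obtain gradients.
x = torch.randn(16, 4)
y = torch.randint(0, 2, (16,))
loss = nn.CrossEntropyLoss()(model(x), y)
loss.backward()

# One L2 norm per parameter tensor; skip parameters that received no gradient.
grad_norms = {
    name: param.grad.norm().item()
    for name, param in model.named_parameters()
    if param.grad is not None
}
for name, value in grad_norms.items():
    print(f"{name}: {value:.6f}")
    # Inside a training loop this could be logged with, for example:
    # writer.add_scalar(f'grad_norm/{name}', value, global_step)
```

Norms that shrink toward zero in the early layers hint at vanishing gradients, and norms that grow without bound hint at exploding ones.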

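1.6 Launching TensorBoard

After the code runs, tfevents event files are generated in the chosen directory (for example, runs/cifar10_mlp_experiment_1); they store all the TensorBoard data.

Run the following in a terminal (from the project root):

    tensorboard --logdir=runs  # assuming the log directories live under runs/

Open a browser and enter the URL printed in the terminal (usually http://localhost:6006).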

2. TensorBoard

2.1 CIFAR-10 with an MLP

    import torch
    import torch.nn as nn
    import torch.optim as optim
    import torchvision
    from torchvision import datasets, transforms
    from torch.utils.data import DataLoader
    from torch.utils.tensorboard import SummaryWriter
    import numpy as np
    import matplotlib.pyplot as plt
    import os

    # Set random seeds for reproducibility
    torch.manual_seed(42)
    np.random.seed(42)

    # 1. Data preprocessing
    transform = transforms.Compose([
        transforms.ToTensor(),  # convert to tensor
        transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))  # normalize
    ])

    # 2. Load the CIFAR-10 dataset
    train_dataset = datasets.CIFAR10(
        root='./data',
        train=True,
        download=True,
        transform=transform
    )
    test_dataset = datasets.CIFAR10(
        root='./data',
        train=False,
        transform=transform
    )

    # 3. Create the data loaders
    batch_size = 64
    train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
    test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

    # CIFAR-10 class names
    classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

    # 4. Define the MLP model (sized for CIFAR-10 inputs)
    class MLP(nn.Module):
        def __init__(self):
            super(MLP, self).__init__()
            self.flatten = nn.Flatten()         # flatten a 3x32x32 image into a 3072-dim vector
            self.layer1 = nn.Linear(3072, 512)  # layer 1: 3072 inputs, 512 neurons
            self.relu1 = nn.ReLU()
            self.dropout1 = nn.Dropout(0.2)     # dropout to reduce overfitting
            self.layer2 = nn.Linear(512, 256)   # layer 2: 512 inputs, 256 neurons
            self.relu2 = nn.ReLU()
            self.dropout2 = nn.Dropout(0.2)
            self.layer3 = nn.Linear(256, 10)    # output layer: 10 classes

        def forward(self, x):
            # Flatten the input image into a vector
            x = self.flatten(x)   # [batch_size, 3, 32, 32] -> [batch_size, 3072]
            # First fully connected layer + activation + dropout
            x = self.layer1(x)    # [batch_size, 3072] -> [batch_size, 512]
            x = self.relu1(x)
            x = self.dropout1(x)  # randomly drops some activations during training
            # Second fully connected layer + activation + dropout
            x = self.layer2(x)    # [batch_size, 512] -> [batch_size, 256]
            x = self.relu2(x)
            x = self.dropout2(x)
            # Output layer
            x = self.layer3(x)    # [batch_size, 256] -> [batch_size, 10]
            return x              # raw logits, no softmax applied

    # Check whether a GPU is available
    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

    # Initialize the model
    model = MLP()
    model = model.to(device)  # move the model to the GPU if available

    criterion = nn.CrossEntropyLoss()                     # cross-entropy loss
    optimizer = optim.Adam(model.parameters(), lr=0.001)  # Adam optimizer

    # Create the TensorBoard SummaryWriter and choose the log directory
    log_dir = 'runs/cifar10_mlp_experiment'
    # If the directory already exists, add a suffix to avoid overwriting it
    if os.path.exists(log_dir):
        i = 1
        while os.path.exists(f"{log_dir}_{i}"):
            i += 1
        log_dir = f"{log_dir}_{i}"
    writer = SummaryWriter(log_dir)

    # 5. Train the model (logging to TensorBoard along the way)
    def train(model, train_loader, test_loader, criterion, optimizer, device, epochs, writer):
        model.train()    # training mode
        global_step = 0  # global batch counter, used as the x-axis in TensorBoard

        # Visualize the model structure
        dataiter = iter(train_loader)
        images, labels = next(dataiter)
        images = images.to(device)
        writer.add_graph(model, images)

        # Visualize a sample of the training images
        img_grid = torchvision.utils.make_grid(images[:8].cpu())
        writer.add_image('Original training images', img_grid)

        for epoch in range(epochs):
            model.train()  # switch back to training mode; the test phase below sets eval mode
            running_loss = 0.0
            correct = 0
            total = 0

            for batch_idx, (data, target) in enumerate(train_loader):
                data, target = data.to(device), target.to(device)

                optimizer.zero_grad()             # reset gradients
                output = model(data)              # forward pass
                loss = criterion(output, target)  # compute the loss
                loss.backward()                   # backward pass
                optimizer.step()                  # update parameters

                # Accumulate loss and accuracy statistics
                running_loss += loss.item()
                _, predicted = output.max(1)
                total += target.size(0)
                correct += predicted.eq(target).sum().item()

                # Log to TensorBoard every 100 batches
                if (batch_idx + 1) % 100 == 0:
                    batch_loss = loss.item()
                    batch_acc = 100. * correct / total  # running accuracy over the epoch so far

                    # Scalars: loss, accuracy, learning rate
                    writer.add_scalar('Train/Batch_Loss', batch_loss, global_step)
                    writer.add_scalar('Train/Batch_Accuracy', batch_acc, global_step)
                    writer.add_scalar('Train/Learning_Rate', optimizer.param_groups[0]['lr'], global_step)

                    # Histograms of weights and gradients every 500 batches
                    if (batch_idx + 1) % 500 == 0:
                        for name, param in model.named_parameters():
                            writer.add_histogram(f'weights/{name}', param, global_step)
                            if param.grad is not None:
                                writer.add_histogram(f'grads/{name}', param.grad, global_step)

                    print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} '
                          f'| Batch loss: {batch_loss:.4f} | Running avg loss: {running_loss/(batch_idx+1):.4f}')

                global_step += 1

            # Average training loss and accuracy for this epoch
            epoch_train_loss = running_loss / len(train_loader)
            epoch_train_acc = 100. * correct / total
            writer.add_scalar('Train/Epoch_Loss', epoch_train_loss, epoch)
            writer.add_scalar('Train/Epoch_Accuracy', epoch_train_acc, epoch)

            # Test phase
            model.eval()
            test_loss = 0
            correct_test = 0
            total_test = 0

            # Misclassified samples, kept for visualization
            wrong_images = []
            wrong_labels = []
            wrong_preds = []

            with torch.no_grad():
                for data, target in test_loader:
                    data, target = data.to(device), target.to(device)
                    output = model(data)
                    test_loss += criterion(output, target).item()
                    _, predicted = output.max(1)
                    total_test += target.size(0)
                    correct_test += predicted.eq(target).sum().item()

                    # Collect misclassified samples (mask stays on the same device as the data)
                    wrong_mask = predicted != target
                    if wrong_mask.sum() > 0:
                        wrong_images.extend(data[wrong_mask].cpu())
                        wrong_labels.extend(target[wrong_mask].cpu())
                        wrong_preds.extend(predicted[wrong_mask].cpu())

            epoch_test_loss = test_loss / len(test_loader)
            epoch_test_acc = 100. * correct_test / total_test
            writer.add_scalar('Test/Loss', epoch_test_loss, epoch)
            writer.add_scalar('Test/Accuracy', epoch_test_acc, epoch)

            print(f'Epoch {epoch+1}/{epochs} done | Train accuracy: {epoch_train_acc:.2f}% | Test accuracy: {epoch_test_acc:.2f}%')

            # Visualize misclassified samples (last epoch only)
            if epoch == epochs - 1 and len(wrong_images) > 0:
                display_count = min(8, len(wrong_images))  # show at most 8 samples
                wrong_img_grid = torchvision.utils.make_grid(wrong_images[:display_count])

                # Text labels for the misclassified samples
                wrong_text = []
                for i in range(display_count):
                    true_label = classes[wrong_labels[i]]
                    pred_label = classes[wrong_preds[i]]
                    wrong_text.append(f'True: {true_label}, Pred: {pred_label}')

                writer.add_image('Misclassified samples', wrong_img_grid)
                writer.add_text('Misclassified labels', '\n'.join(wrong_text), epoch)

        writer.close()          # close the TensorBoard writer
        return epoch_test_acc   # final test accuracy

    # 6. Run training and testing
    epochs = 20
    print("Starting training...")
    print(f"TensorBoard logs saved to: {log_dir}")
    print("After training, run `tensorboard --logdir=runs` to launch TensorBoard and view the results")

    final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, device, epochs, writer)
    print(f"Training finished! Final test accuracy: {final_accuracy:.2f}%")

Output:

    Files already downloaded and verified
    Starting training...
    TensorBoard logs saved to: runs/cifar10_mlp_experiment_1
    After training, run `tensorboard --logdir=runs` to launch TensorBoard and view the results
    Epoch: 1/20 | Batch: 100/782 | Batch loss: 1.7407 | Running avg loss: 1.9120
    Epoch: 1/20 | Batch: 200/782 | Batch loss: 1.6190 | Running avg loss: 1.8486
    Epoch: 1/20 | Batch: 300/782 | Batch loss: 1.6698 | Running avg loss: 1.8160
    Epoch: 1/20 | Batch: 400/782 | Batch loss: 1.7142 | Running avg loss: 1.7806
    Epoch: 1/20 | Batch: 500/782 | Batch loss: 1.7804 | Running avg loss: 1.7538
    Epoch: 1/20 | Batch: 600/782 | Batch loss: 1.2317 | Running avg loss: 1.7291
    Epoch: 1/20 | Batch: 700/782 | Batch loss: 1.5249 | Running avg loss: 1.7160
    Epoch 1/20 done | Train accuracy: 39.54% | Test accuracy: 47.29%
    Epoch: 2/20 | Batch: 100/782 | Batch loss: 1.4864 | Running avg loss: 1.5138
    Epoch: 2/20 | Batch: 200/782 | Batch loss: 1.4199 | Running avg loss: 1.4940
    Epoch: 2/20 | Batch: 300/782 | Batch loss: 1.2272 | Running avg loss: 1.4763
    Epoch: 2/20 | Batch: 400/782 | Batch loss: 1.5164 | Running avg loss: 1.4702
    Epoch: 2/20 | Batch: 500/782 | Batch loss: 1.4884 | Running avg loss: 1.4676
    Epoch: 2/20 | Batch: 600/782 | Batch loss: 1.6866 | Running avg loss: 1.4647
    Epoch: 2/20 | Batch: 700/782 | Batch loss: 1.4024 | Running avg loss: 1.4629
    Epoch 2/20 done | Train accuracy: 48.47% | Test accuracy: 50.17%
    Epoch: 3/20 | Batch: 100/782 | Batch loss: 1.2678 | Running avg loss: 1.3699
    Epoch: 3/20 | Batch: 200/782 | Batch loss: 1.2753 | Running avg loss: 1.3593
    Epoch: 3/20 | Batch: 300/782 | Batch loss: 1.1745 | Running avg loss: 1.3574
    Epoch: 3/20 | Batch: 400/782 | Batch loss: 1.1988 | Running avg loss: 1.3542
    Epoch: 3/20 | Batch: 500/782 | Batch loss: 1.4076 | Running avg loss: 1.3544
    ...
    Epoch: 20/20 | Batch: 600/782 | Batch loss: 0.5646 | Running avg loss: 0.3746
    Epoch: 20/20 | Batch: 700/782 | Batch loss: 0.3850 | Running avg loss: 0.3821
    Epoch 20/20 done | Train accuracy: 86.17% | Test accuracy: 52.74%
    Training finished! Final test accuracy: 52.74%

The TensorBoard logs are saved in runs/cifar10_mlp_experiment_1.

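Activate the current environment in a terminal, then run tensorboard --logdir=xxxx (the log directory) to get a local link. Opening it shows the current training information; press F5 to refresh and watch it change.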

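In the TensorBoard UI you can see:

- SCALARS tab: loss curves, accuracy, learning rate, and other scalar data. A scalar is a quantity that has magnitude only and no direction.
- IMAGES tab: the original training images and the misclassified samples
- GRAPHS tab: the computation graph of the model
- HISTOGRAMS tab: histograms of the parameter and gradient distributions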
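In the SCALARS tab, the distance between the training and test accuracy curves is often the most informative thing to watch. The sketch below makes that gap explicit using three rows copied from the MLP log in section 2.1; the helper name accuracy_gap is hypothetical.

```python
# (epoch, train accuracy %, test accuracy %) copied from the MLP log in section 2.1.
history = [
    (1, 39.54, 47.29),
    (2, 48.47, 50.17),
    (20, 86.17, 52.74),
]


def accuracy_gap(train_acc: float, test_acc: float) -> float:
    """Train minus test accuracy, in percentage points (hypothetical helper)."""
    return round(train_acc - test_acc, 2)


gaps = {epoch: accuracy_gap(train, test) for epoch, train, test in history}
for epoch, gap in gaps.items():
    print(f"epoch {epoch:>2}: gap = {gap:+.2f} points")
```

A gap that grows to more than 30 points while test accuracy stays near 50% is the usual signature of overfitting. The negative gap in the first epochs is likely due in part to dropout being active during training and to the training accuracy being averaged over the whole epoch.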

2.2 CIFAR-10 with a CNN

import torch

import torch.nn as nn

import torch.optim as optim

from torchvision import datasets, transforms

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

import matplotlib.pyplot as plt

import numpy as np

import os

import torchvision # 记得导入 torchvision,之前代码里用到了其功能但没导入# 设置中文字体支持

plt.rcParams["font.family"] = ["SimHei"]

plt.rcParams['axes.unicode_minus'] = False # 解决负号显示问题# 检查GPU是否可用

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

print(f"使用设备: {device}")# 1. 数据预处理

train_transform = transforms.Compose([transforms.RandomCrop(32, padding=4),transforms.RandomHorizontalFlip(),transforms.ColorJitter(brightness=0.2, contrast=0.2, saturation=0.2, hue=0.1),transforms.RandomRotation(15),transforms.ToTensor(),transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))

])test_transform = transforms.Compose([transforms.ToTensor(),transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010))

])# 2. 加载CIFAR-10数据集

train_dataset = datasets.CIFAR10(root='./data',train=True,download=True,transform=train_transform

)test_dataset = datasets.CIFAR10(root='./data',train=False,transform=test_transform

)# 3. 创建数据加载器

batch_size = 64

train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)

test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)# 4. 定义CNN模型的定义(替代原MLP)

class CNN(nn.Module):def __init__(self):super(CNN, self).__init__() # 继承父类初始化# ---------------------- 第一个卷积块 ----------------------# 卷积层1:输入3通道(RGB),输出32个特征图,卷积核3x3,边缘填充1像素self.conv1 = nn.Conv2d(in_channels=3, # 输入通道数(图像的RGB通道)out_channels=32, # 输出通道数(生成32个新特征图)kernel_size=3, # 卷积核尺寸(3x3像素)padding=1 # 边缘填充1像素,保持输出尺寸与输入相同)# 批量归一化层:对32个输出通道进行归一化,加速训练self.bn1 = nn.BatchNorm2d(num_features=32)# ReLU激活函数:引入非线性,公式:max(0, x)self.relu1 = nn.ReLU()# 最大池化层:窗口2x2,步长2,特征图尺寸减半(32x32→16x16)self.pool1 = nn.MaxPool2d(kernel_size=2, stride=2) # stride默认等于kernel_size# ---------------------- 第二个卷积块 ----------------------# 卷积层2:输入32通道(来自conv1的输出),输出64通道self.conv2 = nn.Conv2d(in_channels=32, # 输入通道数(前一层的输出通道数)out_channels=64, # 输出通道数(特征图数量翻倍)kernel_size=3, # 卷积核尺寸不变padding=1 # 保持尺寸:16x16→16x16(卷积后)→8x8(池化后))self.bn2 = nn.BatchNorm2d(num_features=64)self.relu2 = nn.ReLU()self.pool2 = nn.MaxPool2d(kernel_size=2) # 尺寸减半:16x16→8x8# ---------------------- 第三个卷积块 ----------------------# 卷积层3:输入64通道,输出128通道self.conv3 = nn.Conv2d(in_channels=64, # 输入通道数(前一层的输出通道数)out_channels=128, # 输出通道数(特征图数量再次翻倍)kernel_size=3,padding=1 # 保持尺寸:8x8→8x8(卷积后)→4x4(池化后))self.bn3 = nn.BatchNorm2d(num_features=128)self.relu3 = nn.ReLU() # 复用激活函数对象(节省内存)self.pool3 = nn.MaxPool2d(kernel_size=2) # 尺寸减半:8x8→4x4# ---------------------- 全连接层(分类器) ----------------------# 计算展平后的特征维度:128通道 × 4x4尺寸 = 128×16=2048维self.fc1 = nn.Linear(in_features=128 * 4 * 4, # 输入维度(卷积层输出的特征数)out_features=512 # 输出维度(隐藏层神经元数))# Dropout层:训练时随机丢弃50%神经元,防止过拟合self.dropout = nn.Dropout(p=0.5)# 输出层:将512维特征映射到10个类别(CIFAR-10的类别数)self.fc2 = nn.Linear(in_features=512, out_features=10)def forward(self, x):# 输入尺寸:[batch_size, 3, 32, 32](batch_size=批量大小,3=通道数,32x32=图像尺寸)# ---------- 卷积块1处理 ----------x = self.conv1(x) # 卷积后尺寸:[batch_size, 32, 32, 32](padding=1保持尺寸)x = self.bn1(x) # 批量归一化,不改变尺寸x = self.relu1(x) # 激活函数,不改变尺寸x = self.pool1(x) # 池化后尺寸:[batch_size, 32, 16, 16](32→16是因为池化窗口2x2)# ---------- 卷积块2处理 ----------x = self.conv2(x) # 卷积后尺寸:[batch_size, 
64, 16, 16](padding=1保持尺寸)x = self.bn2(x)x = self.relu2(x)x = self.pool2(x) # 池化后尺寸:[batch_size, 64, 8, 8]# ---------- 卷积块3处理 ----------x = self.conv3(x) # 卷积后尺寸:[batch_size, 128, 8, 8](padding=1保持尺寸)x = self.bn3(x)x = self.relu3(x)x = self.pool3(x) # 池化后尺寸:[batch_size, 128, 4, 4]# ---------- 展平与全连接层 ----------# 将多维特征图展平为一维向量:[batch_size, 128*4*4] = [batch_size, 2048]x = x.view(-1, 128 * 4 * 4) # -1自动计算批量维度,保持批量大小不变x = self.fc1(x) # 全连接层:2048→512,尺寸变为[batch_size, 512]x = self.relu3(x) # 激活函数(复用relu3,与卷积块3共用)x = self.dropout(x) # Dropout随机丢弃神经元,不改变尺寸x = self.fc2(x) # 全连接层:512→10,尺寸变为[batch_size, 10](未激活,直接输出logits)return x # 输出未经过Softmax的logits,适用于交叉熵损失函数# 初始化模型

model = CNN()

model = model.to(device) # 将模型移至GPU(如果可用)criterion = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=0.001)

scheduler = optim.lr_scheduler.ReduceLROnPlateau(optimizer, # 指定要控制的优化器(这里是Adam)mode='min', # 监测的指标是"最小化"(如损失函数)patience=3, # 如果连续3个epoch指标没有改善,才降低LRfactor=0.5, # 降低LR的比例(新LR = 旧LR × 0.5)verbose=True # 打印学习率调整信息

)# ======================== TensorBoard 核心配置 ========================

# 创建 TensorBoard 日志目录(自动避免重复)

log_dir = "runs/cifar10_cnn_exp"

if os.path.exists(log_dir):version = 1while os.path.exists(f"{log_dir}_v{version}"):version += 1log_dir = f"{log_dir}_v{version}"

writer = SummaryWriter(log_dir) # 初始化 SummaryWriter# 5. 训练模型(整合 TensorBoard 记录)

def train(model, train_loader, test_loader, criterion, optimizer, scheduler, device, epochs, writer):model.train()all_iter_losses = [] iter_indices = [] global_step = 0 # 全局步骤,用于 TensorBoard 标量记录# (可选)记录模型结构:用一个真实样本走一遍前向传播,让 TensorBoard 解析计算图dataiter = iter(train_loader)images, labels = next(dataiter)images = images.to(device)writer.add_graph(model, images) # 写入模型结构到 TensorBoard# (可选)记录原始训练图像:可视化数据增强前/后效果img_grid = torchvision.utils.make_grid(images[:8].cpu()) # 取前8张writer.add_image('原始训练图像(增强前)', img_grid, global_step=0)for epoch in range(epochs):running_loss = 0.0correct = 0total = 0for batch_idx, (data, target) in enumerate(train_loader):data, target = data.to(device), target.to(device)optimizer.zero_grad()output = model(data)loss = criterion(output, target)loss.backward()optimizer.step()# 记录迭代级损失iter_loss = loss.item()all_iter_losses.append(iter_loss)iter_indices.append(global_step + 1) # 用 global_step 对齐# 统计准确率running_loss += iter_loss_, predicted = output.max(1)total += target.size(0)correct += predicted.eq(target).sum().item()# ======================== TensorBoard 标量记录 ========================# 记录每个 batch 的损失、准确率batch_acc = 100. * correct / totalwriter.add_scalar('Train/Batch Loss', iter_loss, global_step)writer.add_scalar('Train/Batch Accuracy', batch_acc, global_step)# 记录学习率(可选)writer.add_scalar('Train/Learning Rate', optimizer.param_groups[0]['lr'], global_step)# 每 200 个 batch 记录一次参数直方图(可选,耗时稍高)if (batch_idx + 1) % 200 == 0:for name, param in model.named_parameters():writer.add_histogram(f'Weights/{name}', param, global_step)if param.grad is not None:writer.add_histogram(f'Gradients/{name}', param.grad, global_step)# 每 100 个 batch 打印控制台日志(同原代码)if (batch_idx + 1) % 100 == 0:print(f'Epoch: {epoch+1}/{epochs} | Batch: {batch_idx+1}/{len(train_loader)} 'f'| 单Batch损失: {iter_loss:.4f} | 累计平均损失: {running_loss/(batch_idx+1):.4f}')global_step += 1 # 全局步骤递增# 计算 epoch 级训练指标epoch_train_loss = running_loss / len(train_loader)epoch_train_acc = 100. 
* correct / total# ======================== TensorBoard epoch 标量记录 ========================writer.add_scalar('Train/Epoch Loss', epoch_train_loss, epoch)writer.add_scalar('Train/Epoch Accuracy', epoch_train_acc, epoch)# 测试阶段model.eval()test_loss = 0correct_test = 0total_test = 0wrong_images = [] # 存储错误预测样本(用于可视化)wrong_labels = []wrong_preds = []with torch.no_grad():for data, target in test_loader:data, target = data.to(device), target.to(device)output = model(data)test_loss += criterion(output, target).item()_, predicted = output.max(1)total_test += target.size(0)correct_test += predicted.eq(target).sum().item()# 收集错误预测样本(用于可视化)wrong_mask = (predicted != target)if wrong_mask.sum() > 0:wrong_batch_images = data[wrong_mask][:8].cpu() # 最多存8张wrong_batch_labels = target[wrong_mask][:8].cpu()wrong_batch_preds = predicted[wrong_mask][:8].cpu()wrong_images.extend(wrong_batch_images)wrong_labels.extend(wrong_batch_labels)wrong_preds.extend(wrong_batch_preds)# 计算 epoch 级测试指标epoch_test_loss = test_loss / len(test_loader)epoch_test_acc = 100. * correct_test / total_test# ======================== TensorBoard 测试集记录 ========================writer.add_scalar('Test/Epoch Loss', epoch_test_loss, epoch)writer.add_scalar('Test/Epoch Accuracy', epoch_test_acc, epoch)# (可选)可视化错误预测样本if wrong_images:wrong_img_grid = torchvision.utils.make_grid(wrong_images)writer.add_image('错误预测样本', wrong_img_grid, epoch)# 写入错误标签文本(可选)wrong_text = [f"真实: {classes[wl]}, 预测: {classes[wp]}" for wl, wp in zip(wrong_labels, wrong_preds)]writer.add_text('错误预测标签', '\n'.join(wrong_text), epoch)# 更新学习率调度器scheduler.step(epoch_test_loss)print(f'Epoch {epoch+1}/{epochs} 完成 | 训练准确率: {epoch_train_acc:.2f}% | 测试准确率: {epoch_test_acc:.2f}%')# 关闭 TensorBoard 写入器writer.close()# 绘制迭代级损失曲线(同原代码)plot_iter_losses(all_iter_losses, iter_indices)return epoch_test_acc# 6. 绘制迭代级损失曲线(同原代码,略)

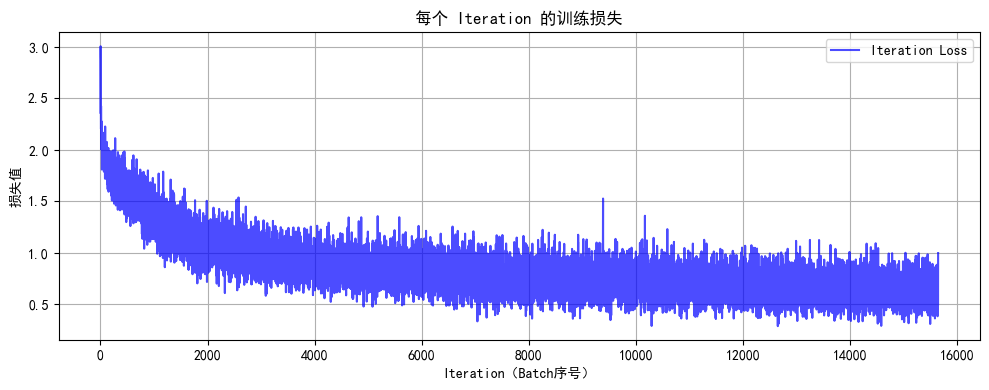

def plot_iter_losses(losses, indices):plt.figure(figsize=(10, 4))plt.plot(indices, losses, 'b-', alpha=0.7, label='Iteration Loss')plt.xlabel('Iteration(Batch序号)')plt.ylabel('损失值')plt.title('每个 Iteration 的训练损失')plt.legend()plt.grid(True)plt.tight_layout()plt.show()# (可选)CIFAR-10 类别名

classes = ('plane', 'car', 'bird', 'cat','deer', 'dog', 'frog', 'horse', 'ship', 'truck')# 7. 执行训练(传入 TensorBoard writer)

epochs = 20

print("开始使用CNN训练模型...")

print(f"TensorBoard 日志目录: {log_dir}")

print("训练后执行: tensorboard --logdir=runs 查看可视化")final_accuracy = train(model, train_loader, test_loader, criterion, optimizer, scheduler, device, epochs, writer)

print(f"训练完成!最终测试准确率: {final_accuracy:.2f}%")使用设备: cuda

Files already downloaded and verified

c:\Anaconda\envs\DL\lib\site-packages\torch\optim\lr_scheduler.py:60: UserWarning: The verbose parameter is deprecated. Please use get_last_lr() to access the learning rate.warnings.warn(

开始使用CNN训练模型...

TensorBoard 日志目录: runs/cifar10_cnn_exp

训练后执行: tensorboard --logdir=runs 查看可视化

Epoch: 1/20 | Batch: 100/782 | 单Batch损失: 1.8443 | 累计平均损失: 2.0492

Epoch: 1/20 | Batch: 200/782 | 单Batch损失: 1.6953 | 累计平均损失: 1.9327

Epoch: 1/20 | Batch: 300/782 | 单Batch损失: 1.7458 | 累计平均损失: 1.8564

Epoch: 1/20 | Batch: 400/782 | 单Batch损失: 1.6269 | 累计平均损失: 1.8037

Epoch: 1/20 | Batch: 500/782 | 单Batch损失: 1.4730 | 累计平均损失: 1.7677

Epoch: 1/20 | Batch: 600/782 | 单Batch损失: 1.5904 | 累计平均损失: 1.7364

Epoch: 1/20 | Batch: 700/782 | 单Batch损失: 1.4907 | 累计平均损失: 1.7071

Epoch 1/20 完成 | 训练准确率: 38.08% | 测试准确率: 52.81%

Epoch: 2/20 | Batch: 100/782 | 单Batch损失: 1.5340 | 累计平均损失: 1.4049

Epoch: 2/20 | Batch: 200/782 | 单Batch损失: 1.3281 | 累计平均损失: 1.3824

Epoch: 2/20 | Batch: 300/782 | 单Batch损失: 1.2271 | 累计平均损失: 1.3502

Epoch: 2/20 | Batch: 400/782 | 单Batch损失: 1.2071 | 累计平均损失: 1.3297

Epoch: 2/20 | Batch: 500/782 | 单Batch损失: 1.3005 | 累计平均损失: 1.3100

Epoch: 2/20 | Batch: 600/782 | 单Batch损失: 0.9058 | 累计平均损失: 1.2974

Epoch: 2/20 | Batch: 700/782 | 单Batch损失: 1.2274 | 累计平均损失: 1.2836

Epoch 2/20 完成 | 训练准确率: 54.36% | 测试准确率: 62.07%

Epoch: 3/20 | Batch: 100/782 | 单Batch损失: 0.9140 | 累计平均损失: 1.1770

Epoch: 3/20 | Batch: 200/782 | 单Batch损失: 1.0286 | 累计平均损失: 1.1412

Epoch: 3/20 | Batch: 300/782 | 单Batch损失: 1.0757 | 累计平均损失: 1.1284

Epoch: 3/20 | Batch: 400/782 | 单Batch损失: 0.9110 | 累计平均损失: 1.1183

Epoch: 3/20 | Batch: 500/782 | 单Batch损失: 1.0427 | 累计平均损失: 1.1069

Epoch: 3/20 | Batch: 600/782 | 单Batch损失: 1.1220 | 累计平均损失: 1.1035

...

Epoch: 20/20 | Batch: 500/782 | 单Batch损失: 0.5738 | 累计平均损失: 0.6354

Epoch: 20/20 | Batch: 600/782 | 单Batch损失: 0.5828 | 累计平均损失: 0.6405

Epoch: 20/20 | Batch: 700/782 | 单Batch损失: 0.5956 | 累计平均损失: 0.6364

Epoch 20/20 完成 | 训练准确率: 77.83% | 测试准确率: 80.71%
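The 128 * 4 * 4 input size of the first fully connected layer follows from the standard output-size formula for convolution and pooling layers, out = floor((in + 2 * padding - kernel) / stride) + 1. The short check below applies it to the three conv + pool blocks of the CNN; the helper name conv_out is hypothetical and the formula ignores dilation.

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output side length of a square conv/pool layer (floor division, no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32          # CIFAR-10 images are 32x32
trace = [size]
for _ in range(3):  # three conv + pool blocks
    size = conv_out(size, kernel=3, stride=1, padding=1)  # 3x3 conv, padding=1: size unchanged
    size = conv_out(size, kernel=2, stride=2)             # 2x2 max-pool, stride 2: size halved
    trace.append(size)

flat_features = 128 * size * size  # 128 channels after the third block
print(trace, flat_features)        # [32, 16, 8, 4] 2048
```

If the input resolution or the number of pooling layers changes, in_features of fc1 has to change with it.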

Homework: fine-tune resnet18 on CIFAR-10 and monitor the training process with TensorBoard

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.utils.data import DataLoader
    from torchvision import datasets, transforms
    from torchvision.models import resnet18
    from torch.utils.tensorboard import SummaryWriter
    import os

    # ---------------------- 1. Initialize the TensorBoard writer ----------------------
    log_dir = 'runs/resnet18_cifar10_finetune'  # log path (created automatically)
    # Avoid overwriting logs: if the directory exists, append an increasing suffix
    if os.path.exists(log_dir):
        i = 1
        while os.path.exists(f"{log_dir}_{i}"):
            i += 1
        log_dir = f"{log_dir}_{i}"
    writer = SummaryWriter(log_dir)

    # ---------------------- 2. Data loading (CIFAR-10) ----------------------
    transform = transforms.Compose([
        transforms.Resize((224, 224)),  # match the 224x224 input size used for ImageNet pretraining
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
    ])

    train_dataset = datasets.CIFAR10(root='data', train=True, download=True, transform=transform)
    train_loader = DataLoader(train_dataset, batch_size=32, shuffle=True, num_workers=4)

    val_dataset = datasets.CIFAR10(root='data', train=False, download=True, transform=transform)
    val_loader = DataLoader(val_dataset, batch_size=32, shuffle=False, num_workers=4)

    # ---------------------- 3. Model definition (ResNet-18 fine-tuning) ----------------------
    model = resnet18(pretrained=True)  # load pretrained weights (newer torchvision prefers the weights= argument)
    # Freeze all pretrained layers (fine-tuning strategy: train only the final classification layer)
    for param in model.parameters():
        param.requires_grad = False
    # Replace the last layer (CIFAR-10 has 10 classes); the new layer is trainable by default
    model.fc = nn.Linear(model.fc.in_features, 10)

    # Select the device and move the model to it
    device = 'cuda' if torch.cuda.is_available() else 'cpu'
    model = model.to(device)

    # Log the model structure to TensorBoard using a dummy input on the same device
    dummy_input = torch.randn(1, 3, 224, 224).to(device)
    writer.add_graph(model, dummy_input)

    # ---------------------- 4. Training configuration ----------------------
    criterion = nn.CrossEntropyLoss()
    optimizer = optim.Adam(model.fc.parameters(), lr=0.001)  # optimize only the classification layer

    # ---------------------- 5. Training loop (with TensorBoard logging) ----------------------
    epochs = 10
    for epoch in range(epochs):
        # Training phase
        model.train()
        train_loss = 0.0
        train_correct = 0

        for batch_idx, (data, target) in enumerate(train_loader):
            data, target = data.to(device), target.to(device)

            optimizer.zero_grad()
            output = model(data)
            loss = criterion(output, target)
            loss.backward()
            optimizer.step()

            # Accumulate metrics
            train_loss += loss.item()
            pred = output.argmax(dim=1)
            train_correct += pred.eq(target).sum().item()

            # Log the live loss every 50 batches (optional)
            if batch_idx % 50 == 0:
                writer.add_scalar('Train/Loss', loss.item(), epoch * len(train_loader) + batch_idx)

        # Epoch-level training metrics
        train_loss /= len(train_loader)
        train_acc = train_correct / len(train_dataset)

        # Validation phase
        model.eval()
        val_loss = 0.0
        val_correct = 0
        with torch.no_grad():
            for data, target in val_loader:
                data, target = data.to(device), target.to(device)
                output = model(data)
                val_loss += criterion(output, target).item()
                pred = output.argmax(dim=1)
                val_correct += pred.eq(target).sum().item()

        val_loss /= len(val_loader)
        val_acc = val_correct / len(val_dataset)

        # ---------------------- TensorBoard logging ----------------------
        # Epoch-level metrics
        writer.add_scalar('Train/Loss_Epoch', train_loss, epoch)
        writer.add_scalar('Train/Accuracy_Epoch', train_acc, epoch)
        writer.add_scalar('Val/Loss_Epoch', val_loss, epoch)
        writer.add_scalar('Val/Accuracy_Epoch', val_acc, epoch)
        # Learning rate (optional)
        writer.add_scalar('Train/LearningRate', optimizer.param_groups[0]['lr'], epoch)

        print(f'Epoch {epoch+1}/{epochs}, Train Loss: {train_loss:.4f}, Acc: {train_acc:.4f}, Val Loss: {val_loss:.4f}, Acc: {val_acc:.4f}')

    # Close the writer
    writer.close()

Output:

    Files already downloaded and verified
    Files already downloaded and verified
    Epoch 1/10, Train Loss: 0.8055, Acc: 0.7344, Val Loss: 0.6126, Acc: 0.7944
    Epoch 2/10, Train Loss: 0.6388, Acc: 0.7802, Val Loss: 0.6330, Acc: 0.7817
    Epoch 3/10, Train Loss: 0.6181, Acc: 0.7862, Val Loss: 0.5696, Acc: 0.8046
    Epoch 4/10, Train Loss: 0.6040, Acc: 0.7907, Val Loss: 0.5836, Acc: 0.8032
    Epoch 5/10, Train Loss: 0.6049, Acc: 0.7913, Val Loss: 0.5759, Acc: 0.8039
    Epoch 6/10, Train Loss: 0.5992, Acc: 0.7938, Val Loss: 0.5665, Acc: 0.8070
    Epoch 7/10, Train Loss: 0.5922, Acc: 0.7947, Val Loss: 0.5902, Acc: 0.8024
    Epoch 8/10, Train Loss: 0.5962, Acc: 0.7941, Val Loss: 0.5822, Acc: 0.8047
    Epoch 9/10, Train Loss: 0.5863, Acc: 0.7963, Val Loss: 0.5858, Acc: 0.8003
    Epoch 10/10, Train Loss: 0.5850, Acc: 0.7982, Val Loss: 0.5869, Acc: 0.8028

@浙大疏锦行