Loss Functions in Object Detection (Part 3) | SIoU, WIoU v1, WIoU v2, WIoU v3

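🚀 This series will keep collecting and updating topics related to bounding box regression (BBR). If you spot errors or omissions, please point them out. Discussion is welcome!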

SCYLLA-IoU (SIoU) comes from a paper posted on arXiv in 2022: "SIoU Loss: More Powerful Learning for Bounding Box Regression".

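The paper introduces SIoU, a new loss function for training bounding box regression. Conventional BBR losses rely on metrics such as the distance, overlap area, and aspect ratio between the predicted box and the ground-truth box, but they do not consider the direction of the mismatch between the two. SIoU therefore adds an angle-sensitive penalty so that, during training, the predicted box first moves quickly toward the nearest coordinate axis. According to the paper, this reduces the degrees of freedom and improves both training speed and accuracy.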

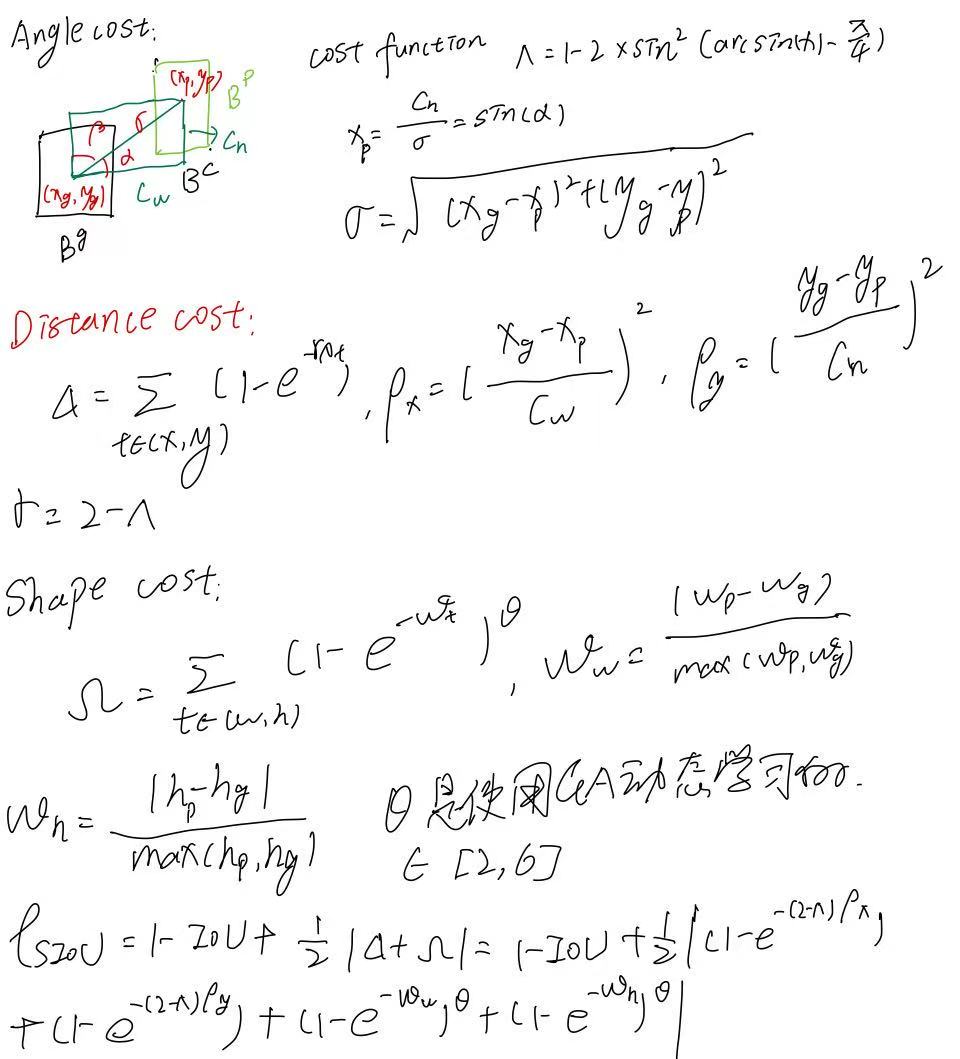

The SIoU loss is built from four cost terms: angle cost, distance cost, shape cost, and IoU cost.

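Angle Cost (angle penalty)

The goal is to make the predicted box align with the X or Y axis first, which reduces the degrees of freedom available for "drift". Mathematically, this is done by introducing an angle penalty function.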
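As a rough illustration, the angle cost can be sketched on plain Python floats. This follows the formula used in the `bbox_iou` implementation quoted later in this post; the function name `siou_angle_cost` and the scalar interface are assumptions made for the example, not part of the paper or of any library.

```python
import math


def siou_angle_cost(cx_pred, cy_pred, cx_gt, cy_gt, eps=1e-7):
    """Hypothetical helper: angle cost between two box centers.

    Returns about 0 when the centers are aligned with an axis and
    about 1 when the line joining them is at 45 degrees.
    """
    dx = abs(cx_gt - cx_pred)
    dy = abs(cy_gt - cy_pred)
    sigma = math.hypot(dx, dy) + eps  # center distance
    # Sine of the angle to the nearest axis (never more than 45 degrees).
    sin_alpha = min(dx, dy) / sigma
    # Equivalent to 1 - 2 * sin^2(arcsin(x) - pi / 4).
    return math.cos(2 * math.asin(sin_alpha) - math.pi / 2)
```

With this form the cost vanishes once the centers share an X or Y coordinate, which is the "align with an axis first" behavior described above.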

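Distance Cost (distance penalty)

The center-point distance loss is redefined under the influence of the angle penalty, so that it fits better with the angle optimization.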
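A minimal sketch of how the angle cost feeds into the distance cost, again mirroring the `bbox_iou` code quoted later in this post. The name `siou_distance_cost` and the argument layout (center offsets `dx`, `dy` and enclosing-box size `cw`, `ch`) are assumptions for the example.

```python
import math


def siou_distance_cost(dx, dy, cw, ch, angle_cost):
    """Hypothetical helper: SIoU distance cost on plain floats.

    dx, dy      center offsets between predicted and ground-truth box
    cw, ch      width and height of the smallest enclosing box
    angle_cost  value of the angle cost, in [0, 1]
    """
    gamma = 2.0 - angle_cost  # the angle cost modulates the exponent
    rho_x = (dx / cw) ** 2    # normalized squared offset along X
    rho_y = (dy / ch) ** 2    # normalized squared offset along Y
    return (1 - math.exp(-gamma * rho_x)) + (1 - math.exp(-gamma * rho_y))
```

The result is 0 when the centers coincide and stays below 2, since each of the two terms is bounded by 1.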

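Shape Cost (shape penalty)

Penalizes deviations of the predicted box's width and height from those of the ground truth, which improves how well scale and proportion are fitted. The parameter θ controls the strength of the penalty, and the paper uses a genetic algorithm to tune θ for each dataset.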
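The shape cost can be sketched in the same way. The default `theta=4.0` below simply matches the exponent hard-coded in the implementation quoted later in this post; the function name `siou_shape_cost` is an assumption for the example.

```python
import math


def siou_shape_cost(w_pred, h_pred, w_gt, h_gt, theta=4.0):
    """Hypothetical helper: SIoU shape cost on plain floats."""
    # Relative width and height mismatch, each in [0, 1).
    omega_w = abs(w_pred - w_gt) / max(w_pred, w_gt)
    omega_h = abs(h_pred - h_gt) / max(h_pred, h_gt)
    # theta controls how strongly a mismatch is penalized.
    return (1 - math.exp(-omega_w)) ** theta + (1 - math.exp(-omega_h)) ** theta
```

Because each base is smaller than 1, a larger θ shrinks the penalty for a given mismatch, and a smaller θ makes the shape term weigh more.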

IoU Cost

Uses the standard IoU definition to measure the overlap between the predicted box and the target box.
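As a hedged summary of how the four terms combine (my reading of the SIoU paper and of the `bbox_iou` code quoted later in this post): the angle cost $\Lambda$ is not added as a separate term. It enters through the exponent of the distance cost $\Delta$, which is then averaged with the shape cost $\Omega$:

$$
L_{SIoU} = 1 - IoU + \frac{\Delta + \Omega}{2}, \qquad
\Delta = \sum_{t=x,y}\left(1 - e^{-\gamma \rho_t}\right), \qquad
\gamma = 2 - \Lambda, \qquad
\Omega = \sum_{t=w,h}\left(1 - e^{-\omega_t}\right)^{\theta}
$$

Here $\rho_t$ is the squared center offset normalized by the enclosing box size, and $\omega_t$ is the relative width or height mismatch.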

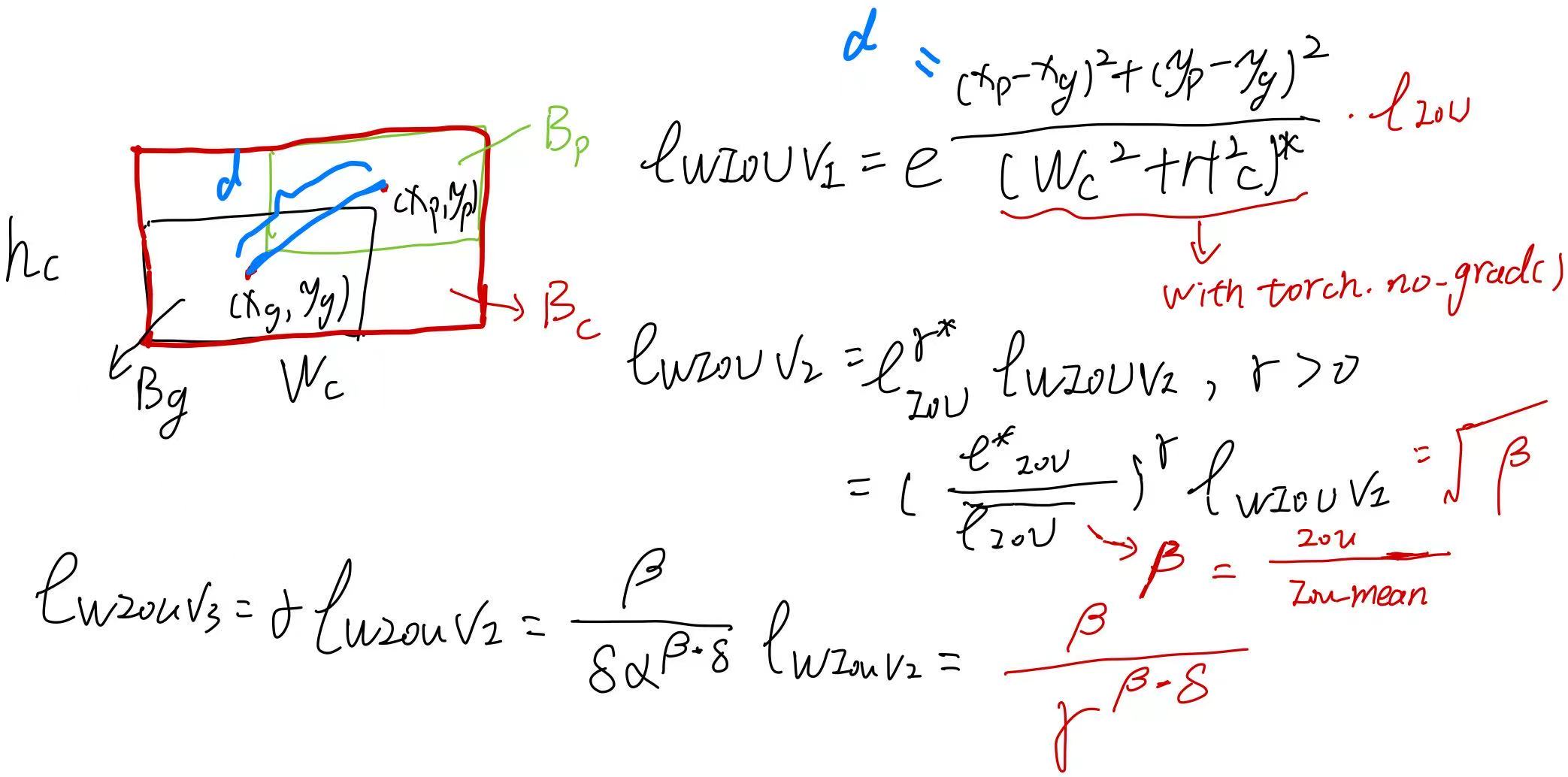

Wise-IoU (WIoU) comes from a paper posted on arXiv in 2023: "Wise-IoU: Bounding Box Regression Loss with Dynamic Focusing Mechanism".

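The paper presents WIoU, an IoU-based loss for BBR built on a dynamic non-monotonic focusing mechanism (FM). WIoU uses an outlier degree to assess the quality of anchor boxes and provides a wise gradient gain allocation strategy, so that the loss focuses on ordinary-quality anchor boxes and improves the detector's overall performance.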

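The paper also describes the existing, widely used IoU losses in detail and analyzes their problems.

The author of the paper has written a detailed walkthrough: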

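Wise-IoU author's guide: a bounding box loss based on a dynamic non-monotonic focusing mechanism (CSDN blog). Summary: Object detection is a core problem in computer vision, and its performance depends on the design of the loss function. The bounding box loss is an important part of the detection loss, and a well-defined one brings a significant performance gain. Most recent work assumes that the training examples are of high quality and concentrates on strengthening the fitting ability of the bounding box loss. However, detection training sets contain low-quality examples, and blindly strengthening regression on them clearly hurts detection performance. Focal-EIoU v1 was proposed to address this problem, but its focusing mechanism is static and does not fully exploit the potential of a non-monotonic focusing mechanism.
https://blog.csdn.net/qq_55745968/article/details/128888122?spm=1001.2014.3001.5502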

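🌍 The motivation for WIoU: object detection training sets contain low-quality examples, so how should bounding box regression be done to cope with them?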

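Admittedly, the paper lacks experiments that justify the hyperparameter settings and that show whether this approach reliably gives good performance.

There are four main hyperparameters: alpha, delta, t, and n. In the paper, alpha and delta shape the focusing coefficient, while t and n determine the momentum of the running mean.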
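To make the role of t and n concrete, here is a small sketch of the momentum rule hinted at by the comment `1 - pow(0.05, 1 / (890 * 34))` in the implementation quoted later in this post. The function names are assumptions for the example, and reading 34 as t (epochs) and 890 as n (batches per epoch) is my interpretation of that comment.

```python
def wiou_momentum(t, n, residual=0.05):
    """Hypothetical helper: momentum of the running mean.

    Chosen so that, after t * n updates, the initial value of the
    running mean keeps only a `residual` share of its weight.
    """
    return 1 - residual ** (1 / (t * n))


def update_running_mean(mean, batch_value, momentum):
    """Exponential moving average step, as in WIoU_Scale._update."""
    return (1 - momentum) * mean + momentum * batch_value


# Values taken from the code comment; the t/n assignment is assumed.
m = wiou_momentum(t=34, n=890)
```

The quoted implementation uses `1 - 0.5 ** (1 / 7000)` instead, which by the same logic halves the weight of the initial value after 7000 updates.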

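✅ Contributions:

- Unlike earlier strategies (such as Focal Loss and Focal-EIoU), WIoU proposes a dynamic, non-monotonic gradient weighting function.
- It computes a dynamic indicator of each anchor box's relative quality from its outlier degree and adjusts the gradient weight accordingly.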
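A minimal sketch of the non-monotonic gain, matching the `monotonous = False` branch of `WIoU_Scale._scaled_loss` quoted later in this post. The function name `wiou_v3_gain` is an assumption; `alpha` and `delta` follow the paper's naming (the quoted code calls them `gamma` and `delta`), with the same defaults of 1.9 and 3.

```python
def wiou_v3_gain(loss_iou, mean_loss_iou, alpha=1.9, delta=3.0):
    """Hypothetical helper: gradient gain from the outlier degree.

    loss_iou       IoU loss (1 - IoU) of one anchor box
    mean_loss_iou  running mean of the IoU loss over training
    """
    beta = loss_iou / mean_loss_iou  # outlier degree
    return beta / (delta * alpha ** (beta - delta))


# The gain is small for very good boxes (beta near 0), peaks for
# ordinary-quality boxes, and falls again for strong outliers.
gains = [wiou_v3_gain(b, 1.0) for b in (0.1, 1.5, 3.0, 6.0)]
```

With these defaults the gain equals 1 at `beta == delta` and peaks near `beta = 1 / ln(alpha)`, roughly 1.56, which is what "non-monotonic" refers to.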

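🎯 Problems addressed:

- Avoids blindly strengthening learning on either high-quality or low-quality samples.
- Reduces the harmful gradients produced by low-quality samples.
- Increases the model's focus on ordinary-quality samples, which helps generalization.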

Implementation in ultralytics-main/ultralytics/utils/metrics.py:

```python
import math

import torch


class WIoU_Scale:
    ''' monotonous: {
            None: origin v1
            True: monotonic FM v2
            False: non-monotonic FM v3
        }
        momentum: The momentum of running mean'''

    iou_mean = 1.
    monotonous = False
    # The paper uses 0.05; the author says this form is easier to interpret
    # 1 - pow(0.05, 1 / (890 * 34))
    _momentum = 1 - 0.5 ** (1 / 7000)  # 1e-2
    _is_train = True

    def __init__(self, iou):
        self.iou = iou
        self._update(self)

    @classmethod
    def _update(cls, self):
        # Update the running mean of the IoU loss during training only
        if cls._is_train:
            cls.iou_mean = (1 - cls._momentum) * cls.iou_mean + \
                           cls._momentum * self.iou.detach().mean().item()

    @classmethod
    def _scaled_loss(cls, self, gamma=1.9, delta=3):
        if isinstance(self.monotonous, bool):
            if self.monotonous:
                return (self.iou.detach() / self.iou_mean).sqrt()
            else:
                beta = self.iou.detach() / self.iou_mean
                alpha = delta * torch.pow(gamma, beta - delta)
                return beta / alpha
        return 1


def bbox_iou(box1, box2, xywh=True, GIoU=False, DIoU=False, CIoU=False, SIoU=False, EIoU=False, WIoU=False,
             Focal=False, alpha=1, gamma=0.5, scale=False, eps=1e-7):
    # Returns Intersection over Union (IoU) of box1(1,4) to box2(n,4)

    # Get the coordinates of bounding boxes
    if xywh:  # transform from xywh to xyxy
        (x1, y1, w1, h1), (x2, y2, w2, h2) = box1.chunk(4, -1), box2.chunk(4, -1)
        w1_, h1_, w2_, h2_ = w1 / 2, h1 / 2, w2 / 2, h2 / 2
        b1_x1, b1_x2, b1_y1, b1_y2 = x1 - w1_, x1 + w1_, y1 - h1_, y1 + h1_
        b2_x1, b2_x2, b2_y1, b2_y2 = x2 - w2_, x2 + w2_, y2 - h2_, y2 + h2_
    else:  # x1, y1, x2, y2 = box1
        b1_x1, b1_y1, b1_x2, b1_y2 = box1.chunk(4, -1)
        b2_x1, b2_y1, b2_x2, b2_y2 = box2.chunk(4, -1)
        w1, h1 = b1_x2 - b1_x1, (b1_y2 - b1_y1).clamp(eps)
        w2, h2 = b2_x2 - b2_x1, (b2_y2 - b2_y1).clamp(eps)

    # Intersection area
    inter = (b1_x2.minimum(b2_x2) - b1_x1.maximum(b2_x1)).clamp(0) * \
            (b1_y2.minimum(b2_y2) - b1_y1.maximum(b2_y1)).clamp(0)

    # Union Area
    union = w1 * h1 + w2 * h2 - inter + eps
    if scale:
        self = WIoU_Scale(1 - (inter / union))

    # IoU
    # iou = inter / union # ori iou
    iou = torch.pow(inter / (union + eps), alpha)  # alpha iou
    if CIoU or DIoU or GIoU or EIoU or SIoU or WIoU:
        cw = b1_x2.maximum(b2_x2) - b1_x1.minimum(b2_x1)  # convex (smallest enclosing box) width
        ch = b1_y2.maximum(b2_y2) - b1_y1.minimum(b2_y1)  # convex height
        if CIoU or DIoU or EIoU or SIoU or WIoU:  # Distance or Complete IoU https://arxiv.org/abs/1911.08287v1
            c2 = (cw ** 2 + ch ** 2) ** alpha + eps  # convex diagonal squared
            rho2 = (((b2_x1 + b2_x2 - b1_x1 - b1_x2) ** 2 + (b2_y1 + b2_y2 - b1_y1 - b1_y2) ** 2) / 4) ** alpha  # center dist ** 2
            if CIoU:  # https://github.com/Zzh-tju/DIoU-SSD-pytorch/blob/master/utils/box/box_utils.py#L47
                v = (4 / math.pi ** 2) * (torch.atan(w2 / h2) - torch.atan(w1 / h1)).pow(2)
                with torch.no_grad():
                    alpha_ciou = v / (v - iou + (1 + eps))
                if Focal:
                    return iou - (rho2 / c2 + torch.pow(v * alpha_ciou + eps, alpha)), torch.pow(inter / (union + eps), gamma)  # Focal_CIoU
                else:
                    # iou - (rho2 / c2 + (v * alpha_ciou + eps) ** alpha)
                    return iou - (rho2 / c2 + torch.pow(v * alpha_ciou + eps, alpha))  # CIoU
            elif EIoU:
                rho_w2 = ((b2_x2 - b2_x1) - (b1_x2 - b1_x1)) ** 2
                rho_h2 = ((b2_y2 - b2_y1) - (b1_y2 - b1_y1)) ** 2
                cw2 = torch.pow(cw ** 2 + eps, alpha)
                ch2 = torch.pow(ch ** 2 + eps, alpha)
                if Focal:
                    return iou - (rho2 / c2 + rho_w2 / cw2 + rho_h2 / ch2), torch.pow(inter / (union + eps), gamma)  # Focal_EIou
                else:
                    return iou - (rho2 / c2 + rho_w2 / cw2 + rho_h2 / ch2)  # EIou
            elif SIoU:
                # SIoU Loss https://arxiv.org/pdf/2205.12740.pdf
                s_cw = (b2_x1 + b2_x2 - b1_x1 - b1_x2) * 0.5 + eps
                s_ch = (b2_y1 + b2_y2 - b1_y1 - b1_y2) * 0.5 + eps
                sigma = torch.pow(s_cw ** 2 + s_ch ** 2, 0.5)
                sin_alpha_1 = torch.abs(s_cw) / sigma
                sin_alpha_2 = torch.abs(s_ch) / sigma
                threshold = pow(2, 0.5) / 2
                sin_alpha = torch.where(sin_alpha_1 > threshold, sin_alpha_2, sin_alpha_1)
                angle_cost = torch.cos(torch.arcsin(sin_alpha) * 2 - math.pi / 2)
                rho_x = (s_cw / cw) ** 2
                rho_y = (s_ch / ch) ** 2
                # Renamed from `gamma` so that the Focal exponent argument is not overwritten
                gamma_siou = angle_cost - 2
                distance_cost = 2 - torch.exp(gamma_siou * rho_x) - torch.exp(gamma_siou * rho_y)
                omiga_w = torch.abs(w1 - w2) / torch.max(w1, w2)
                omiga_h = torch.abs(h1 - h2) / torch.max(h1, h2)
                shape_cost = torch.pow(1 - torch.exp(-1 * omiga_w), 4) + torch.pow(1 - torch.exp(-1 * omiga_h), 4)
                if Focal:
                    return iou - torch.pow(0.5 * (distance_cost + shape_cost) + eps, alpha), torch.pow(inter / (union + eps), gamma)  # Focal_SIou
                else:
                    return iou - torch.pow(0.5 * (distance_cost + shape_cost) + eps, alpha)  # SIou
            elif WIoU:
                if Focal:
                    raise RuntimeError("WIoU do not support Focal.")
                elif scale:
                    # WIoU_Scale._scaled_loss(self)
                    return getattr(WIoU_Scale, '_scaled_loss')(self), (1 - iou) * torch.exp((rho2 / c2)), iou  # WIoU https://arxiv.org/abs/2301.10051
                else:
                    return iou, torch.exp((rho2 / c2))  # WIoU v1
            if Focal:
                return iou - rho2 / c2, torch.pow(inter / (union + eps), gamma)  # Focal_DIoU
            else:
                return iou - rho2 / c2  # DIoU
        c_area = cw * ch + eps  # convex area
        if Focal:
            return iou - torch.pow((c_area - union) / c_area + eps, alpha), torch.pow(inter / (union + eps), gamma)  # Focal_GIoU https://arxiv.org/pdf/1902.09630.pdf
        else:
            return iou - torch.pow((c_area - union) / c_area + eps, alpha)  # GIoU https://arxiv.org/pdf/1902.09630.pdf
    if Focal:
        return iou, torch.pow(inter / (union + eps), gamma)  # Focal_IoU
    else:
        return iou  # IoU
```

To call it from ultralytics-main/ultralytics/utils/loss.py, replace the original two lines with:

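```python
iou = bbox_iou(pbox, tbox[i], WIoU=True, scale=True).squeeze()
if type(iou) is tuple:
    lbox += (iou[1].detach() * (1.0 - iou[0])).mean()
else:
    lbox += (1.0 - iou).mean()
```

Written this way, the code raises an error, because with WIoU enabled `bbox_iou` returns a tuple and `.squeeze()` is called on that tuple: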

AttributeError: 'tuple' object has no attribute 'squeeze'

It needs to be written like this instead:

```python
iou = bbox_iou(pbox, tbox[i], WIoU=True, scale=True)
if type(iou) is tuple:
    if len(iou) == 2:
        # WIoU v1: (iou, exp(rho2 / c2))
        lbox += (iou[1].detach().squeeze() * (1 - iou[0].squeeze())).mean()
        iou = iou[0].squeeze()
    else:
        # scale=True: (scaling factor, WIoU v1 loss, iou)
        lbox += (iou[0] * iou[1]).mean()
        iou = iou[2].squeeze()
else:
    lbox += (1.0 - iou.squeeze()).mean()
    iou = iou.squeeze()
```

The implementation above comes from: https://github.com/z1069614715/objectdetection_script/blob/master/yolo-improve/iou.py