6.11 Check-in

DAY 50: Pretrained Models + CBAM Module

Key points review:

- ResNet architecture walkthrough

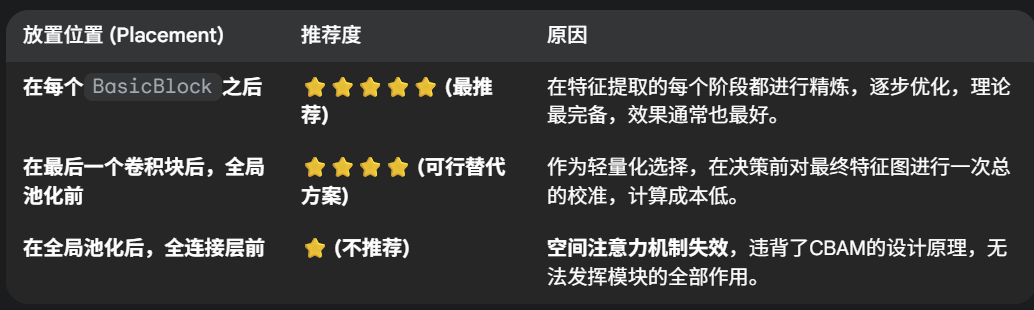

- Thoughts on where to place CBAM

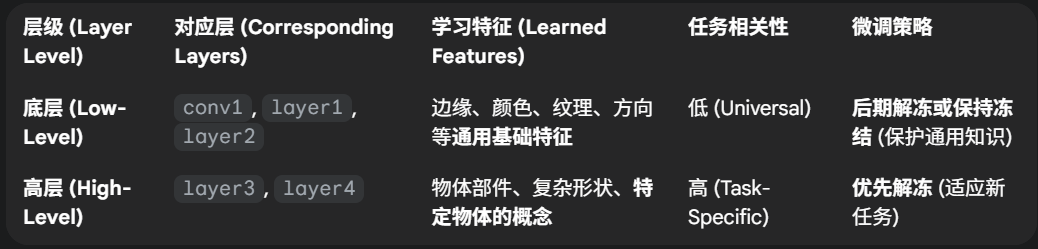

- Training strategies for pretrained models

  - Differential learning rates

  - Three-stage fine-tuning
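The two strategies above can be sketched on a toy model before applying them to a real backbone. This is only a minimal illustration: `TinyNet`, `set_trainable`, `count_trainable`, and the learning-rate values are hypothetical names and numbers chosen for the example, not part of any pretrained network.

```python
import torch
import torch.nn as nn
import torch.optim as optim


class TinyNet(nn.Module):
    """Hypothetical stand-in for 'pretrained backbone + new head'."""

    def __init__(self):
        super().__init__()
        self.backbone = nn.Sequential(
            nn.Conv2d(3, 8, 3, padding=1), nn.ReLU(),   # "early" layers
            nn.Conv2d(8, 16, 3, padding=1), nn.ReLU(),  # "late" layers
        )
        self.head = nn.Linear(16, 10)

    def forward(self, x):
        x = self.backbone(x).mean(dim=(2, 3))  # global average pooling
        return self.head(x)


def set_trainable(module, flag):
    """Freeze (flag=False) or unfreeze (flag=True) every parameter of a module."""
    for p in module.parameters():
        p.requires_grad = flag


def count_trainable(model):
    return sum(p.numel() for p in model.parameters() if p.requires_grad)


model = TinyNet()
# Slicing nn.Sequential returns views that share the same parameters.
early, late = model.backbone[:2], model.backbone[2:]

# Stage 1: freeze the whole backbone, train only the new head.
set_trainable(model.backbone, False)
stage1_trainable = count_trainable(model)
opt1 = optim.AdamW(model.head.parameters(), lr=1e-3)

# Stage 2: unfreeze the late layers; the backbone gets a smaller LR than the head.
set_trainable(late, True)
stage2_trainable = count_trainable(model)
opt2 = optim.AdamW([
    {"params": list(late.parameters()), "lr": 1e-5},
    {"params": list(model.head.parameters()), "lr": 5e-5},
])

# Stage 3: unfreeze everything; the earliest layers get the smallest LR.
set_trainable(model.backbone, True)
stage3_trainable = count_trainable(model)
opt3 = optim.AdamW([
    {"params": list(early.parameters()), "lr": 2e-7},
    {"params": list(late.parameters()), "lr": 2e-6},
    {"params": list(model.head.parameters()), "lr": 4e-6},
])

stage_lrs = [[g["lr"] for g in opt.param_groups] for opt in (opt1, opt2, opt3)]
print(stage1_trainable, stage2_trainable, stage3_trainable)
print(stage_lrs)
```

A new optimizer is created for each stage so that every stage starts with fresh optimizer state and only contains the parameters that are trainable at that point.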

P.S. Today's code takes a long time to train: roughly 40 minutes on a 3080 Ti.

Homework:

- Study the ResNet18 model structure carefully

- Try applying a fine-tuning strategy to VGG16 + CBAM

import torch
import torch.nn as nn
import torch.optim as optim
import torchvision
import torchvision.transforms as transforms
from torchvision import models
from torch.utils.data import DataLoader
import time
import copy

# Check for CUDA availability
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
print(f"Using device: {device}")

# --- CBAM Module Implementation ---

class ChannelAttention(nn.Module):
    def __init__(self, in_planes, ratio=16):
        super(ChannelAttention, self).__init__()
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        self.max_pool = nn.AdaptiveMaxPool2d(1)
        # Shared MLP implemented with 1x1 convolutions
        self.fc = nn.Sequential(
            nn.Conv2d(in_planes, in_planes // ratio, 1, bias=False),
            nn.ReLU(),
            nn.Conv2d(in_planes // ratio, in_planes, 1, bias=False),
        )
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        avg_out = self.fc(self.avg_pool(x))
        max_out = self.fc(self.max_pool(x))
        out = avg_out + max_out
        return self.sigmoid(out)


class SpatialAttention(nn.Module):
    def __init__(self, kernel_size=7):
        super(SpatialAttention, self).__init__()
        assert kernel_size in (3, 7), 'kernel size must be 3 or 7'
        padding = 3 if kernel_size == 7 else 1
        self.conv1 = nn.Conv2d(2, 1, kernel_size, padding=padding, bias=False)
        self.sigmoid = nn.Sigmoid()

    def forward(self, x):
        avg_out = torch.mean(x, dim=1, keepdim=True)
        max_out, _ = torch.max(x, dim=1, keepdim=True)
        x_cat = torch.cat([avg_out, max_out], dim=1)
        x_att = self.conv1(x_cat)
        return self.sigmoid(x_att)


class CBAM(nn.Module):
    def __init__(self, in_planes, ratio=16, kernel_size=7):
        super(CBAM, self).__init__()
        self.ca = ChannelAttention(in_planes, ratio)
        self.sa = SpatialAttention(kernel_size)

    def forward(self, x):
        x = x * self.ca(x)  # Apply channel attention
        x = x * self.sa(x)  # Apply spatial attention
        return x

# --- VGG16 with CBAM Model ---

class VGG16_CBAM(nn.Module):
    def __init__(self, num_classes=10, pretrained=True):
        super(VGG16_CBAM, self).__init__()
        vgg_base = models.vgg16(weights=models.VGG16_Weights.IMAGENET1K_V1 if pretrained else None)
        self.features = vgg_base.features

        # CBAM module after feature extraction; VGG16 features output 512 channels
        self.cbam = CBAM(in_planes=512)

        # Keep VGG's original avgpool, AdaptiveAvgPool2d(output_size=(7, 7)),
        # so the input to the classifier is 512 * 7 * 7 = 25088
        self.avgpool = vgg_base.avgpool

        # Original VGG classifier, for reference:
        # (0): Linear(in_features=25088, out_features=4096, bias=True)
        # (1): ReLU(inplace=True)
        # (2): Dropout(p=0.5, inplace=False)
        # (3): Linear(in_features=4096, out_features=4096, bias=True)
        # (4): ReLU(inplace=True)
        # (5): Dropout(p=0.5, inplace=False)
        # (6): Linear(in_features=4096, out_features=1000, bias=True)

        # Option 1 (used here): keep the original avgpool, classifier input is 25088
        num_ftrs = vgg_base.classifier[0].in_features  # Should be 25088

        # Option 2: adapt for a smaller feature map (1x1 output from avgpool)
        # self.avgpool = nn.AdaptiveAvgPool2d((1, 1))  # Output 512x1x1
        # num_ftrs = 512

        # Build a new classifier with the same layout as the original but with
        # num_classes outputs (10 for CIFAR-10). All three Linear layers are newly
        # created, so the pretrained classifier weights are not reused.
        self.classifier = nn.Sequential(
            nn.Linear(num_ftrs, 4096),
            nn.ReLU(True),
            nn.Dropout(p=0.5),
            nn.Linear(4096, 4096),
            nn.ReLU(True),
            nn.Dropout(p=0.5),
            nn.Linear(4096, num_classes),
        )

        # Initialize weights of the new classifier (optional, often helps)
        for m in self.classifier.modules():
            if isinstance(m, nn.Linear):
                nn.init.xavier_uniform_(m.weight)
                if m.bias is not None:
                    nn.init.constant_(m.bias, 0)

    def forward(self, x):
        x = self.features(x)
        x = self.cbam(x)
        x = self.avgpool(x)
        x = torch.flatten(x, 1)
        x = self.classifier(x)
        return x

# --- Data Preparation ---

# CIFAR-10 specific transforms
# VGG expects 224x224 images
transform_train = transforms.Compose([
    transforms.Resize(256),
    transforms.RandomResizedCrop(224),
    transforms.RandomHorizontalFlip(),
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),  # CIFAR-10 stats
])

transform_test = transforms.Compose([
    transforms.Resize(256),
    transforms.CenterCrop(224),
    transforms.ToTensor(),
    transforms.Normalize((0.4914, 0.4822, 0.4465), (0.2023, 0.1994, 0.2010)),
])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True, download=True, transform=transform_train)
# Adjust batch_size based on GPU VRAM
trainloader = DataLoader(trainset, batch_size=32, shuffle=True, num_workers=4, pin_memory=True)

testset = torchvision.datasets.CIFAR10(root='./data', train=False, download=True, transform=transform_test)
testloader = DataLoader(testset, batch_size=64, shuffle=False, num_workers=4, pin_memory=True)

classes = ('plane', 'car', 'bird', 'cat', 'deer', 'dog', 'frog', 'horse', 'ship', 'truck')
num_classes = len(classes)

# --- Training and Evaluation Functions ---

def train_model_epochs(model, criterion, optimizer, dataloader, num_epochs=10, accumulation_steps=1):
    model.train()
    scaler = torch.cuda.amp.GradScaler(enabled=torch.cuda.is_available())  # For mixed precision

    for epoch in range(num_epochs):
        running_loss = 0.0
        running_corrects = 0
        total_samples = 0
        optimizer.zero_grad()  # Zero out gradients before starting accumulation for an effective batch

        for i, (inputs, labels) in enumerate(dataloader):
            inputs, labels = inputs.to(device), labels.to(device)

            with torch.cuda.amp.autocast(enabled=torch.cuda.is_available()):  # Mixed precision context
                outputs = model(inputs)
                loss = criterion(outputs, labels)
                loss = loss / accumulation_steps  # Scale loss

            scaler.scale(loss).backward()  # Scale loss and call backward

            if (i + 1) % accumulation_steps == 0 or (i + 1) == len(dataloader):
                scaler.step(optimizer)  # Perform optimizer step
                scaler.update()  # Update scaler
                optimizer.zero_grad()  # Zero out gradients for the next effective batch

            _, preds = torch.max(outputs, 1)
            running_loss += loss.item() * inputs.size(0) * accumulation_steps  # Unscale loss for logging
            running_corrects += torch.sum(preds == labels.data)
            total_samples += inputs.size(0)

            if (i + 1) % 100 == 0:  # Log every 100 mini-batches
                print(f'Epoch [{epoch+1}/{num_epochs}], Batch [{i+1}/{len(dataloader)}], Loss: {loss.item()*accumulation_steps:.4f}')

        epoch_loss = running_loss / total_samples
        epoch_acc = running_corrects.double() / total_samples
        print(f'Epoch {epoch+1}/{num_epochs} - Train Loss: {epoch_loss:.4f}, Acc: {epoch_acc:.4f}')

    return model


def evaluate_model(model, criterion, dataloader):
    model.eval()
    running_loss = 0.0
    running_corrects = 0
    total_samples = 0

    with torch.no_grad():
        for inputs, labels in dataloader:
            inputs, labels = inputs.to(device), labels.to(device)
            with torch.cuda.amp.autocast(enabled=torch.cuda.is_available()):
                outputs = model(inputs)
                loss = criterion(outputs, labels)
            _, preds = torch.max(outputs, 1)
            running_loss += loss.item() * inputs.size(0)
            running_corrects += torch.sum(preds == labels.data)
            total_samples += inputs.size(0)

    epoch_loss = running_loss / total_samples
    epoch_acc = running_corrects.double() / total_samples
    print(f'Test Loss: {epoch_loss:.4f}, Acc: {epoch_acc:.4f}\n')
    return epoch_acc

# --- Fine-tuning Strategy Implementation ---

model_vgg_cbam = VGG16_CBAM(num_classes=num_classes, pretrained=True).to(device)
criterion = nn.CrossEntropyLoss()
accumulation_steps = 2  # Simulate larger batch size: 32*2 = 64 effective

# **Phase 1: Train only the CBAM and the new classifier**
print("--- Phase 1: Training CBAM and Classifier ---")

# Freeze all layers in features
for param in model_vgg_cbam.features.parameters():
    param.requires_grad = False

# Ensure CBAM and classifier parameters are trainable
for param in model_vgg_cbam.cbam.parameters():
    param.requires_grad = True
for param in model_vgg_cbam.classifier.parameters():
    param.requires_grad = True

# Collect parameters to optimize for phase 1
params_to_optimize_phase1 = []
for name, param in model_vgg_cbam.named_parameters():
    if param.requires_grad:
        params_to_optimize_phase1.append(param)
        print(f"Phase 1 optimizing: {name}")

optimizer_phase1 = optim.AdamW(params_to_optimize_phase1, lr=1e-3, weight_decay=1e-4)
# scheduler_phase1 = optim.lr_scheduler.StepLR(optimizer_phase1, step_size=5, gamma=0.1)  # Optional scheduler

# Reduced epochs for quicker demonstration, increase for better results
epochs_phase1 = 10  # e.g., 10-15 epochs
model_vgg_cbam = train_model_epochs(model_vgg_cbam, criterion, optimizer_phase1, trainloader, num_epochs=epochs_phase1, accumulation_steps=accumulation_steps)
evaluate_model(model_vgg_cbam, criterion, testloader)

# **Phase 2: Unfreeze some later layers of the backbone (e.g., last VGG block) and train with a smaller LR**

print("\n--- Phase 2: Fine-tuning later backbone layers, CBAM, and Classifier ---")

# VGG16 features has 31 layers in total (conv, relu, pool).
# Feature blocks end at indices: 4 (block1), 9 (block2), 16 (block3), 23 (block4), 30 (block5).
# Unfreeze block 4 (layers 17-23) and block 5 (layers 24-30);
# earlier layers stay frozen from Phase 1.
unfreeze_from_layer_idx = 17  # Start of block4

for i, child in enumerate(model_vgg_cbam.features.children()):
    if i >= unfreeze_from_layer_idx:
        print(f"Phase 2 Unfreezing feature layer: {i} - {type(child)}")
        for param in child.parameters():
            param.requires_grad = True

# Differential learning rates:
# newly unfrozen backbone layers get a smaller LR,
# CBAM and classifier get a slightly larger LR (or the same as the backbone if preferred).
lr_backbone_phase2 = 1e-5
lr_head_phase2 = 5e-5  # CBAM and classifier

# .parameters() returns a one-shot generator, so materialize each group as a list;
# otherwise the groups would be empty the second time they are iterated.
params_group_phase2 = [
    {'params': [], 'lr': lr_backbone_phase2, 'name': 'fine_tune_features'},  # For later backbone layers
    {'params': list(model_vgg_cbam.cbam.parameters()), 'lr': lr_head_phase2, 'name': 'cbam'},
    {'params': list(model_vgg_cbam.classifier.parameters()), 'lr': lr_head_phase2, 'name': 'classifier'},
]

# Add only newly unfrozen feature layers to the backbone group
for i, child in enumerate(model_vgg_cbam.features.children()):
    if i >= unfreeze_from_layer_idx:
        params_group_phase2[0]['params'].extend(list(child.parameters()))
        print(f"Phase 2 optimizing feature layer: {i} with lr {lr_backbone_phase2}")

# Frozen early backbone layers are never added to a group; the requires_grad
# filter below is an extra safeguard. Each group carries its own LR.
optimizer_phase2 = optim.AdamW([
    {'params': [p for p in params_group_phase2[0]['params'] if p.requires_grad], 'lr': lr_backbone_phase2},
    {'params': [p for p in params_group_phase2[1]['params'] if p.requires_grad], 'lr': lr_head_phase2},
    {'params': [p for p in params_group_phase2[2]['params'] if p.requires_grad], 'lr': lr_head_phase2},
], weight_decay=1e-4)

epochs_phase2 = 15  # e.g., 15-20 epochs
model_vgg_cbam = train_model_epochs(model_vgg_cbam, criterion, optimizer_phase2, trainloader, num_epochs=epochs_phase2, accumulation_steps=accumulation_steps)
evaluate_model(model_vgg_cbam, criterion, testloader)

# **Phase 3: Unfreeze all layers and train with a very small learning rate**

print("\n--- Phase 3: Fine-tuning all layers with very small LR ---")

# Unfreeze all feature layers
for param in model_vgg_cbam.features.parameters():
    param.requires_grad = True

# Very small base learning rate, applied differentially:
# earlier layers get an even smaller LR, CBAM and classifier a slightly higher one.
lr_phase3 = 2e-6
params_group_phase3 = [
    {'params': list(model_vgg_cbam.features[:unfreeze_from_layer_idx].parameters()), 'lr': lr_phase3 * 0.1, 'name': 'early_features'},  # Earlier backbone layers
    {'params': list(model_vgg_cbam.features[unfreeze_from_layer_idx:].parameters()), 'lr': lr_phase3, 'name': 'later_features'},  # Later backbone layers
    {'params': list(model_vgg_cbam.cbam.parameters()), 'lr': lr_phase3 * 2, 'name': 'cbam'},  # CBAM slightly higher
    {'params': list(model_vgg_cbam.classifier.parameters()), 'lr': lr_phase3 * 2, 'name': 'classifier'},  # Classifier slightly higher
]
optimizer_phase3 = optim.AdamW(params_group_phase3, weight_decay=1e-5)  # Every group sets its own LR, so the default LR is not used
# Simpler alternative: one LR for all parameters
# optimizer_phase3 = optim.AdamW(model_vgg_cbam.parameters(), lr=lr_phase3, weight_decay=1e-5)

epochs_phase3 = 15  # e.g., 15-20 epochs
model_vgg_cbam = train_model_epochs(model_vgg_cbam, criterion, optimizer_phase3, trainloader, num_epochs=epochs_phase3, accumulation_steps=accumulation_steps)
evaluate_model(model_vgg_cbam, criterion, testloader)

print("Fine-tuning complete!")

# Save the final model (optional)
# torch.save(model_vgg_cbam.state_dict(), 'vgg16_cbam_cifar10_final.pth')