Week 28: Monkeypox Recognition with InceptionV1

Preface

- 🍨 This post is a study-log entry from the 🔗365-Day Deep Learning Training Camp

- 🍖 Original author: K同学啊

I. Preparation

1. Check the GPU

import torch
import torch.nn as nn
import torchvision
from torchvision import transforms, datasets
import os, PIL, pathlib

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
device

2. Inspect the data

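import os, PIL, random, pathlib

data_dir = 'data/45-data/'
data_dir = pathlib.Path(data_dir)
data_paths = list(data_dir.glob('*'))
classeNames = [path.name for path in data_paths]  # each subfolder name is a class name; path.name also works on Linux/macOS, unlike str(path).split("\\")[2]
classeNames

II. Building the Model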

1. Split the dataset

total_datadir = 'data/45-data'

train_transforms = transforms.Compose([
    transforms.Resize([224, 224]),  # resize every input image to the same size
    transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    transforms.Normalize(           # standardize each channel as (x - mean) / std, which helps the model converge
        mean=[0.485, 0.456, 0.406],
        std=[0.229, 0.224, 0.225])  # per-channel mean/std of the ImageNet training set, a common default (not computed from this dataset)
])

total_data = datasets.ImageFolder(total_datadir, transform=train_transforms)
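The Normalize step above can be made concrete with a small example. The following pure-Python sketch reproduces what `ToTensor()` followed by `Normalize()` does to one pixel, assuming an 8-bit RGB input in the range 0 to 255. `normalize_pixel` is a hypothetical helper that illustrates the per-channel arithmetic `(x / 255 - mean) / std`. It is not a torchvision function.

```python
# ImageNet per-channel statistics, as passed to transforms.Normalize above
MEAN = (0.485, 0.456, 0.406)
STD = (0.229, 0.224, 0.225)


def normalize_pixel(rgb):
    """Hypothetical helper: mimic ToTensor() + Normalize() for one 8-bit RGB pixel."""
    out = []
    for value, m, s in zip(rgb, MEAN, STD):
        scaled = value / 255.0        # ToTensor: 0..255 -> 0.0..1.0
        out.append((scaled - m) / s)  # Normalize: subtract the mean, divide by the std
    return tuple(out)


if __name__ == "__main__":
    print(normalize_pixel((255, 255, 255)))  # white pixel
    print(normalize_pixel((0, 0, 0)))        # black pixel
```

After this transform, a white pixel lands at roughly +2.2 to +2.6 per channel and a black pixel at roughly -2.1 to -1.8. The inputs are therefore centred around zero instead of lying in [0, 1].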

total_data

train_size = int(0.8 * len(total_data))
test_size = len(total_data) - train_size
train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])
train_dataset, test_dataset
train_size, test_size

batch_size = 32
train_dl = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True, num_workers=1)
test_dl = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=True, num_workers=1)

for X, y in test_dl:
    print("Shape of X [N, C, H, W]: ", X.shape)
    print("Shape of y: ", y.shape, y.dtype)
    break

Shape of X [N, C, H, W]:  torch.Size([32, 3, 224, 224])
Shape of y:  torch.Size([32]) torch.int64
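The split sizes and the number of batches per epoch come from simple integer arithmetic. The plain-Python sketch below reproduces it. The dataset size of 1000 is a made-up number for illustration, not the size of the monkeypox dataset. The sketch mirrors `int(0.8 * len(total_data))` for the training split. It also assumes DataLoader's default `drop_last=False`, under which the last, smaller batch is kept, so the batch count is `ceil(n / batch_size)`.

```python
import math


def split_sizes(n_samples, train_ratio=0.8):
    """Same arithmetic as above: train gets int(ratio * n), test gets the remainder."""
    train_size = int(train_ratio * n_samples)
    test_size = n_samples - train_size
    return train_size, test_size


def num_batches(n_samples, batch_size=32):
    """Batches per epoch with DataLoader's default drop_last=False (last partial batch kept)."""
    return math.ceil(n_samples / batch_size)


if __name__ == "__main__":
    n = 1000  # hypothetical dataset size, for illustration only
    train_size, test_size = split_sizes(n)
    print(train_size, test_size)                            # 800 200
    print(num_batches(train_size), num_batches(test_size))  # 25 7
```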

2. Create the model

import torch
import torch.nn as nn
import torch.nn.functional as F

class inception_block(nn.Module):
    def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj):
        super().__init__()
        # 1x1 conv branch
        self.branch1 = nn.Sequential(
            nn.Conv2d(in_channels, ch1x1, kernel_size=1),
            nn.BatchNorm2d(ch1x1),
            nn.ReLU(inplace=True))
        # 1x1 -> 3x3 conv branch
        self.branch2 = nn.Sequential(
            nn.Conv2d(in_channels, ch3x3red, kernel_size=1),
            nn.BatchNorm2d(ch3x3red),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch3x3red, ch3x3, kernel_size=3, padding=1),
            nn.BatchNorm2d(ch3x3),
            nn.ReLU(inplace=True))
        # 1x1 -> 5x5 conv branch
        self.branch3 = nn.Sequential(
            nn.Conv2d(in_channels, ch5x5red, kernel_size=1),
            nn.BatchNorm2d(ch5x5red),
            nn.ReLU(inplace=True),
            nn.Conv2d(ch5x5red, ch5x5, kernel_size=5, padding=2),
            nn.BatchNorm2d(ch5x5),
            nn.ReLU(inplace=True))
        # 3x3 pool -> 1x1 conv branch
        self.branch4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1),
            nn.Conv2d(in_channels, pool_proj, kernel_size=1),
            nn.BatchNorm2d(pool_proj),
            nn.ReLU(inplace=True))

    def forward(self, x):
        # concatenate the four branch outputs along the channel dimension
        return torch.cat([self.branch1(x), self.branch2(x), self.branch3(x), self.branch4(x)], dim=1)

class InceptionV1(nn.Module):
    def __init__(self, num_classes=1000):
        super().__init__()
        # Stem convolutions
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7, stride=2, padding=3)
        self.maxpool1 = nn.MaxPool2d(3, stride=2, padding=1)
        self.conv2 = nn.Conv2d(64, 64, kernel_size=1)
        self.conv3 = nn.Conv2d(64, 192, kernel_size=3, padding=1)
        self.maxpool2 = nn.MaxPool2d(3, stride=2, padding=1)

        # Inception modules
        self.inception3a = inception_block(192, 64, 96, 128, 16, 32, 32)      # output channels: 64+128+32+32=256
        self.inception3b = inception_block(256, 128, 128, 192, 32, 96, 64)    # output: 128+192+96+64=480
        self.maxpool3 = nn.MaxPool2d(3, stride=2, padding=1)

        self.inception4a = inception_block(480, 192, 96, 208, 16, 48, 64)     # 192+208+48+64=512
        self.inception4b = inception_block(512, 160, 112, 224, 24, 64, 64)    # 160+224+64+64=512
        self.inception4c = inception_block(512, 128, 128, 256, 24, 64, 64)    # 128+256+64+64=512
        self.inception4d = inception_block(512, 112, 144, 288, 32, 64, 64)    # 112+288+64+64=528
        self.inception4e = inception_block(528, 256, 160, 320, 32, 128, 128)  # 256+320+128+128=832
        self.maxpool4 = nn.MaxPool2d(3, stride=2, padding=1)

        self.inception5a = inception_block(832, 256, 160, 320, 32, 128, 128)  # 256+320+128+128=832
        self.inception5b = nn.Sequential(
            inception_block(832, 384, 192, 384, 48, 128, 128),  # 384+384+128+128=1024
            nn.AdaptiveAvgPool2d((1, 1)),                       # adaptive pooling down to 1x1
            nn.Dropout(0.4))

        # Classifier
        self.classifier = nn.Linear(1024, num_classes)  # in_features must match the channel count after pooling

    def forward(self, x):
        # input shape: [32, 3, 224, 224]
        x = F.relu(self.conv1(x))  # -> [32, 64, 112, 112]
        x = self.maxpool1(x)       # -> [32, 64, 56, 56]
        x = F.relu(self.conv2(x))  # -> [32, 64, 56, 56]
        x = F.relu(self.conv3(x))  # -> [32, 192, 56, 56]
        x = self.maxpool2(x)       # -> [32, 192, 28, 28]
        x = self.inception3a(x)    # -> [32, 256, 28, 28]
        x = self.inception3b(x)    # -> [32, 480, 28, 28]
        x = self.maxpool3(x)       # -> [32, 480, 14, 14]
        x = self.inception4a(x)    # -> [32, 512, 14, 14]
        x = self.inception4b(x)    # -> [32, 512, 14, 14]
        x = self.inception4c(x)    # -> [32, 512, 14, 14]
        x = self.inception4d(x)    # -> [32, 528, 14, 14]
        x = self.inception4e(x)    # -> [32, 832, 14, 14]
        x = self.maxpool4(x)       # -> [32, 832, 7, 7]
        x = self.inception5a(x)    # -> [32, 832, 7, 7]
        x = self.inception5b(x)    # -> [32, 1024, 1, 1]
        x = torch.flatten(x, 1)    # -> [32, 1024]
        x = self.classifier(x)     # -> [32, num_classes]
        return x

# Test code
if __name__ == "__main__":
    model = InceptionV1(num_classes=1000)
    dummy_input = torch.randn(32, 3, 224, 224)  # matches the input shape [N, C, H, W] = [32, 3, 224, 224]
    output = model(dummy_input)
    print("Output shape:", output.shape)  # should print torch.Size([32, 1000])
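The shape comments in `forward()` rest on two facts. First, Conv2d and MaxPool2d use the standard output-size formula with floor rounding (PyTorch's default `ceil_mode=False`): `H_out = floor((H + 2p - k) / s) + 1`. Second, an `inception_block` concatenates its four branches along the channel dimension. This plain-Python sketch checks both for the stem and for two Inception modules. The helper names `out_size` and `inception_out_channels` are made up for this illustration.

```python
def out_size(h, k, s=1, p=0):
    """Output height/width of a Conv2d or MaxPool2d with floor rounding (ceil_mode=False)."""
    return (h + 2 * p - k) // s + 1


def inception_out_channels(ch1x1, ch3x3, ch5x5, pool_proj):
    """An inception_block concatenates its 4 branches, so the channel counts add up."""
    return ch1x1 + ch3x3 + ch5x5 + pool_proj


if __name__ == "__main__":
    h = 224
    h = out_size(h, k=7, s=2, p=3)  # conv1 -> 112
    h = out_size(h, k=3, s=2, p=1)  # maxpool1 -> 56
    h = out_size(h, k=1)            # conv2 (1x1) keeps the size -> 56
    h = out_size(h, k=3, s=1, p=1)  # conv3 keeps the size -> 56
    h = out_size(h, k=3, s=2, p=1)  # maxpool2 -> 28
    print(h)
    # inception3a(192, 64, 96, 128, 16, 32, 32): branch outputs are 64, 128, 32, 32
    print(inception_out_channels(64, 128, 32, 32))
    # inception5b(832, 384, 192, 384, 48, 128, 128): branch outputs are 384, 384, 128, 128
    print(inception_out_channels(384, 384, 128, 128))
```

Every branch preserves H and W: padding=1 for the 3×3 convolution, padding=2 for the 5×5, and a stride-1 max pool with padding=1. The four outputs therefore share the same spatial size, which is what allows `torch.cat(..., dim=1)`.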

from torchsummary import summary

model = InceptionV1().to(device)  # move the model to the GPU if one is available
# Note: InceptionV1() keeps the default num_classes=1000, which is what the summary below reflects.
# The dataset only has len(classeNames) classes, so InceptionV1(num_classes=len(classeNames)) would fit it better.
summary(model, (3, 224, 224))
print(model)

Output shape: torch.Size([32, 1000])
----------------------------------------------------------------
Layer (type)            Output Shape          Param #
================================================================
Conv2d-1                [-1, 64, 112, 112]    9,472
MaxPool2d-2             [-1, 64, 56, 56]      0
Conv2d-3                [-1, 64, 56, 56]      4,160
Conv2d-4                [-1, 192, 56, 56]     110,784
MaxPool2d-5             [-1, 192, 28, 28]     0
Conv2d-6                [-1, 64, 28, 28]      12,352
BatchNorm2d-7           [-1, 64, 28, 28]      128
ReLU-8                  [-1, 64, 28, 28]      0
Conv2d-9                [-1, 96, 28, 28]      18,528
BatchNorm2d-10          [-1, 96, 28, 28]      192
ReLU-11                 [-1, 96, 28, 28]      0
Conv2d-12               [-1, 128, 28, 28]     110,720
BatchNorm2d-13          [-1, 128, 28, 28]     256
ReLU-14                 [-1, 128, 28, 28]     0
Conv2d-15               [-1, 16, 28, 28]      3,088
BatchNorm2d-16          [-1, 16, 28, 28]      32
ReLU-17                 [-1, 16, 28, 28]      0
Conv2d-18               [-1, 32, 28, 28]      12,832
BatchNorm2d-19          [-1, 32, 28, 28]      64
ReLU-20                 [-1, 32, 28, 28]      0
MaxPool2d-21            [-1, 192, 28, 28]     0
Conv2d-22               [-1, 32, 28, 28]      6,176
BatchNorm2d-23          [-1, 32, 28, 28]      64
ReLU-24                 [-1, 32, 28, 28]      0
inception_block-25      [-1, 256, 28, 28]     0
Conv2d-26               [-1, 128, 28, 28]     32,896
BatchNorm2d-27          [-1, 128, 28, 28]     256
ReLU-28                 [-1, 128, 28, 28]     0
Conv2d-29               [-1, 128, 28, 28]     32,896
BatchNorm2d-30          [-1, 128, 28, 28]     256
ReLU-31                 [-1, 128, 28, 28]     0
Conv2d-32               [-1, 192, 28, 28]     221,376
BatchNorm2d-33          [-1, 192, 28, 28]     384
ReLU-34                 [-1, 192, 28, 28]     0
Conv2d-35               [-1, 32, 28, 28]      8,224
BatchNorm2d-36          [-1, 32, 28, 28]      64
ReLU-37                 [-1, 32, 28, 28]      0
Conv2d-38               [-1, 96, 28, 28]      76,896
BatchNorm2d-39          [-1, 96, 28, 28]      192
ReLU-40                 [-1, 96, 28, 28]      0
MaxPool2d-41            [-1, 256, 28, 28]     0
Conv2d-42               [-1, 64, 28, 28]      16,448
BatchNorm2d-43          [-1, 64, 28, 28]      128
ReLU-44                 [-1, 64, 28, 28]      0
inception_block-45      [-1, 480, 28, 28]     0
MaxPool2d-46            [-1, 480, 14, 14]     0
Conv2d-47               [-1, 192, 14, 14]     92,352
BatchNorm2d-48          [-1, 192, 14, 14]     384
ReLU-49                 [-1, 192, 14, 14]     0
Conv2d-50               [-1, 96, 14, 14]      46,176
BatchNorm2d-51          [-1, 96, 14, 14]      192
ReLU-52                 [-1, 96, 14, 14]      0
Conv2d-53               [-1, 208, 14, 14]     179,920
BatchNorm2d-54          [-1, 208, 14, 14]     416
ReLU-55                 [-1, 208, 14, 14]     0
Conv2d-56               [-1, 16, 14, 14]      7,696
BatchNorm2d-57          [-1, 16, 14, 14]      32
ReLU-58                 [-1, 16, 14, 14]      0
Conv2d-59               [-1, 48, 14, 14]      19,248
BatchNorm2d-60          [-1, 48, 14, 14]      96
ReLU-61                 [-1, 48, 14, 14]      0
MaxPool2d-62            [-1, 480, 14, 14]     0
Conv2d-63               [-1, 64, 14, 14]      30,784
BatchNorm2d-64          [-1, 64, 14, 14]      128
ReLU-65                 [-1, 64, 14, 14]      0
inception_block-66      [-1, 512, 14, 14]     0
Conv2d-67               [-1, 160, 14, 14]     82,080
BatchNorm2d-68          [-1, 160, 14, 14]     320
ReLU-69                 [-1, 160, 14, 14]     0
Conv2d-70               [-1, 112, 14, 14]     57,456
BatchNorm2d-71          [-1, 112, 14, 14]     224
ReLU-72                 [-1, 112, 14, 14]     0
Conv2d-73               [-1, 224, 14, 14]     226,016
BatchNorm2d-74          [-1, 224, 14, 14]     448
ReLU-75                 [-1, 224, 14, 14]     0
Conv2d-76               [-1, 24, 14, 14]      12,312
BatchNorm2d-77          [-1, 24, 14, 14]      48
ReLU-78                 [-1, 24, 14, 14]      0
Conv2d-79               [-1, 64, 14, 14]      38,464
BatchNorm2d-80          [-1, 64, 14, 14]      128
ReLU-81                 [-1, 64, 14, 14]      0
MaxPool2d-82            [-1, 512, 14, 14]     0
Conv2d-83               [-1, 64, 14, 14]      32,832
BatchNorm2d-84          [-1, 64, 14, 14]      128
ReLU-85                 [-1, 64, 14, 14]      0
inception_block-86      [-1, 512, 14, 14]     0
Conv2d-87               [-1, 128, 14, 14]     65,664
BatchNorm2d-88          [-1, 128, 14, 14]     256
ReLU-89                 [-1, 128, 14, 14]     0
Conv2d-90               [-1, 128, 14, 14]     65,664
BatchNorm2d-91          [-1, 128, 14, 14]     256
ReLU-92                 [-1, 128, 14, 14]     0
Conv2d-93               [-1, 256, 14, 14]     295,168
BatchNorm2d-94          [-1, 256, 14, 14]     512
ReLU-95                 [-1, 256, 14, 14]     0
Conv2d-96               [-1, 24, 14, 14]      12,312
BatchNorm2d-97          [-1, 24, 14, 14]      48
ReLU-98                 [-1, 24, 14, 14]      0
Conv2d-99               [-1, 64, 14, 14]      38,464
BatchNorm2d-100         [-1, 64, 14, 14]      128
ReLU-101                [-1, 64, 14, 14]      0
MaxPool2d-102           [-1, 512, 14, 14]     0
Conv2d-103              [-1, 64, 14, 14]      32,832
BatchNorm2d-104         [-1, 64, 14, 14]      128
ReLU-105                [-1, 64, 14, 14]      0
inception_block-106     [-1, 512, 14, 14]     0
Conv2d-107              [-1, 112, 14, 14]     57,456
BatchNorm2d-108         [-1, 112, 14, 14]     224
ReLU-109                [-1, 112, 14, 14]     0
Conv2d-110              [-1, 144, 14, 14]     73,872
BatchNorm2d-111         [-1, 144, 14, 14]     288
ReLU-112                [-1, 144, 14, 14]     0
Conv2d-113              [-1, 288, 14, 14]     373,536
BatchNorm2d-114         [-1, 288, 14, 14]     576
ReLU-115                [-1, 288, 14, 14]     0
Conv2d-116              [-1, 32, 14, 14]      16,416
BatchNorm2d-117         [-1, 32, 14, 14]      64
ReLU-118                [-1, 32, 14, 14]      0
Conv2d-119              [-1, 64, 14, 14]      51,264
BatchNorm2d-120         [-1, 64, 14, 14]      128
ReLU-121                [-1, 64, 14, 14]      0
MaxPool2d-122           [-1, 512, 14, 14]     0
Conv2d-123              [-1, 64, 14, 14]      32,832
BatchNorm2d-124         [-1, 64, 14, 14]      128
ReLU-125                [-1, 64, 14, 14]      0
inception_block-126     [-1, 528, 14, 14]     0
Conv2d-127              [-1, 256, 14, 14]     135,424
BatchNorm2d-128         [-1, 256, 14, 14]     512
ReLU-129                [-1, 256, 14, 14]     0
Conv2d-130              [-1, 160, 14, 14]     84,640
BatchNorm2d-131         [-1, 160, 14, 14]     320
ReLU-132                [-1, 160, 14, 14]     0
Conv2d-133              [-1, 320, 14, 14]     461,120
BatchNorm2d-134         [-1, 320, 14, 14]     640
ReLU-135                [-1, 320, 14, 14]     0
Conv2d-136              [-1, 32, 14, 14]      16,928
BatchNorm2d-137         [-1, 32, 14, 14]      64
ReLU-138                [-1, 32, 14, 14]      0
Conv2d-139              [-1, 128, 14, 14]     102,528
BatchNorm2d-140         [-1, 128, 14, 14]     256
ReLU-141                [-1, 128, 14, 14]     0
MaxPool2d-142           [-1, 528, 14, 14]     0
Conv2d-143              [-1, 128, 14, 14]     67,712
BatchNorm2d-144         [-1, 128, 14, 14]     256
ReLU-145                [-1, 128, 14, 14]     0
inception_block-146     [-1, 832, 14, 14]     0
MaxPool2d-147           [-1, 832, 7, 7]       0
Conv2d-148              [-1, 256, 7, 7]       213,248
BatchNorm2d-149         [-1, 256, 7, 7]       512
ReLU-150                [-1, 256, 7, 7]       0
Conv2d-151              [-1, 160, 7, 7]       133,280
BatchNorm2d-152         [-1, 160, 7, 7]       320
ReLU-153                [-1, 160, 7, 7]       0
Conv2d-154              [-1, 320, 7, 7]       461,120
BatchNorm2d-155         [-1, 320, 7, 7]       640
ReLU-156                [-1, 320, 7, 7]       0
Conv2d-157              [-1, 32, 7, 7]        26,656
BatchNorm2d-158         [-1, 32, 7, 7]        64
ReLU-159                [-1, 32, 7, 7]        0
Conv2d-160              [-1, 128, 7, 7]       102,528
BatchNorm2d-161         [-1, 128, 7, 7]       256
ReLU-162                [-1, 128, 7, 7]       0
MaxPool2d-163           [-1, 832, 7, 7]       0
Conv2d-164              [-1, 128, 7, 7]       106,624
BatchNorm2d-165         [-1, 128, 7, 7]       256
ReLU-166                [-1, 128, 7, 7]       0
inception_block-167     [-1, 832, 7, 7]       0
Conv2d-168              [-1, 384, 7, 7]       319,872
BatchNorm2d-169         [-1, 384, 7, 7]       768
ReLU-170                [-1, 384, 7, 7]       0
Conv2d-171              [-1, 192, 7, 7]       159,936
BatchNorm2d-172         [-1, 192, 7, 7]       384
ReLU-173                [-1, 192, 7, 7]       0
Conv2d-174              [-1, 384, 7, 7]       663,936
BatchNorm2d-175         [-1, 384, 7, 7]       768
ReLU-176                [-1, 384, 7, 7]       0
Conv2d-177              [-1, 48, 7, 7]        39,984
BatchNorm2d-178         [-1, 48, 7, 7]        96
ReLU-179                [-1, 48, 7, 7]        0
Conv2d-180              [-1, 128, 7, 7]       153,728
BatchNorm2d-181         [-1, 128, 7, 7]       256
ReLU-182                [-1, 128, 7, 7]       0
MaxPool2d-183           [-1, 832, 7, 7]       0
Conv2d-184              [-1, 128, 7, 7]       106,624
BatchNorm2d-185         [-1, 128, 7, 7]       256
ReLU-186                [-1, 128, 7, 7]       0
inception_block-187     [-1, 1024, 7, 7]      0
AdaptiveAvgPool2d-188   [-1, 1024, 1, 1]      0
Dropout-189             [-1, 1024, 1, 1]      0
Linear-190              [-1, 1000]            1,025,000
================================================================
Total params: 7,012,472
Trainable params: 7,012,472
Non-trainable params: 0
----------------------------------------------------------------
Input size (MB): 0.57
Forward/backward pass size (MB): 69.61
Params size (MB): 26.75
Estimated Total Size (MB): 96.93
----------------------------------------------------------------

print(model) then prints the same layers as a nested module tree. Below, inception3a is shown in full. Every later inception_block has the same branch1 to branch4 layout, with the channel counts given in the code above, so its contents are shown as (...).

InceptionV1(
  (conv1): Conv2d(3, 64, kernel_size=(7, 7), stride=(2, 2), padding=(3, 3))
  (maxpool1): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (conv2): Conv2d(64, 64, kernel_size=(1, 1), stride=(1, 1))
  (conv3): Conv2d(64, 192, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
  (maxpool2): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (inception3a): inception_block(
    (branch1): Sequential(
      (0): Conv2d(192, 64, kernel_size=(1, 1), stride=(1, 1))
      (1): BatchNorm2d(64, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (2): ReLU(inplace=True)
    )
    (branch2): Sequential(
      (0): Conv2d(192, 96, kernel_size=(1, 1), stride=(1, 1))
      (1): BatchNorm2d(96, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (2): ReLU(inplace=True)
      (3): Conv2d(96, 128, kernel_size=(3, 3), stride=(1, 1), padding=(1, 1))
      (4): BatchNorm2d(128, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (5): ReLU(inplace=True)
    )
    (branch3): Sequential(
      (0): Conv2d(192, 16, kernel_size=(1, 1), stride=(1, 1))
      (1): BatchNorm2d(16, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (2): ReLU(inplace=True)
      (3): Conv2d(16, 32, kernel_size=(5, 5), stride=(1, 1), padding=(2, 2))
      (4): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (5): ReLU(inplace=True)
    )
    (branch4): Sequential(
      (0): MaxPool2d(kernel_size=3, stride=1, padding=1, dilation=1, ceil_mode=False)
      (1): Conv2d(192, 32, kernel_size=(1, 1), stride=(1, 1))
      (2): BatchNorm2d(32, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (3): ReLU(inplace=True)
    )
  )
  (inception3b): inception_block(...)
  (maxpool3): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (inception4a): inception_block(...)
  (inception4b): inception_block(...)
  (inception4c): inception_block(...)
  (inception4d): inception_block(...)
  (inception4e): inception_block(...)
  (maxpool4): MaxPool2d(kernel_size=3, stride=2, padding=1, dilation=1, ceil_mode=False)
  (inception5a): inception_block(...)
  (inception5b): Sequential(
    (0): inception_block(...)
    (1): AdaptiveAvgPool2d(output_size=(1, 1))
    (2): Dropout(p=0.4, inplace=False)
  )
  (classifier): Linear(in_features=1024, out_features=1000, bias=True)
)
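The Param # column can be reproduced by hand:

- A Conv2d with bias has `k·k·C_in·C_out + C_out` parameters.
- For BatchNorm2d, torchsummary counts the learnable weight and bias, `2·C`. The running mean and variance are buffers, not parameters.
- A Linear layer has `in·out + out` parameters.

The plain-Python sketch below checks several rows of the table above. The function names are made up for this illustration.

```python
def conv2d_params(c_in, c_out, k, bias=True):
    """Learnable parameters of nn.Conv2d(c_in, c_out, kernel_size=k)."""
    return k * k * c_in * c_out + (c_out if bias else 0)


def batchnorm2d_params(c):
    """Learnable weight (gamma) and bias (beta); running stats are buffers, not parameters."""
    return 2 * c


def linear_params(n_in, n_out):
    """Weight matrix plus bias vector of nn.Linear(n_in, n_out)."""
    return n_in * n_out + n_out


if __name__ == "__main__":
    print(conv2d_params(3, 64, 7))    # Conv2d-1
    print(conv2d_params(96, 128, 3))  # Conv2d-12
    print(batchnorm2d_params(64))     # BatchNorm2d-7
    print(linear_params(1024, 1000))  # Linear-190
```

The classifier alone accounts for about 1.0 million of the 7.0 million parameters. A hypothetical 2-class head would have only `linear_params(1024, 2)` = 2,050.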

3. Compile and train the model

loss_fn = nn.CrossEntropyLoss()  # create the loss function
learn_rate = 1e-4                # learning rate
opt = torch.optim.SGD(model.parameters(), lr=learn_rate)
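`nn.CrossEntropyLoss` takes raw logits and one integer class index per sample. For a single sample it computes `-log(softmax(logits)[y])`, and with the default `reduction='mean'` it averages over the batch. Below is a minimal pure-Python sketch of that computation. The helper names are hypothetical. It uses the usual max-subtraction trick so that `exp` does not overflow.

```python
import math


def cross_entropy(logits, target):
    """-log softmax(logits)[target] for a single sample, computed stably."""
    m = max(logits)  # subtract the max before exp to avoid overflow
    log_sum_exp = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_sum_exp - logits[target]


def batch_cross_entropy(batch_logits, targets):
    """Mean over the batch, matching the default reduction='mean'."""
    losses = [cross_entropy(l, t) for l, t in zip(batch_logits, targets)]
    return sum(losses) / len(losses)


if __name__ == "__main__":
    print(cross_entropy([0.0, 0.0], 0))  # equal logits for 2 classes give log(2)
    print(batch_cross_entropy([[2.0, 0.0], [0.0, 2.0]], [0, 0]))
```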

def train(dataloader, model, loss_fn, optimizer):
    size = len(dataloader.dataset)  # number of training samples
    num_batches = len(dataloader)   # number of batches: ceil(size / batch_size)
    train_loss, train_acc = 0, 0    # running loss and count of correct predictions

    for X, y in dataloader:  # fetch a batch of images and their labels
        X, y = X.to(device), y.to(device)

        # Compute the prediction error
        pred = model(X)          # network output
        loss = loss_fn(pred, y)  # loss between the network output and the ground-truth labels y

        # Backpropagation
        optimizer.zero_grad()  # reset the gradients to zero
        loss.backward()        # backpropagate
        optimizer.step()       # update the parameters

        # Record accuracy and loss
        train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
        train_loss += loss.item()

    train_acc /= size
    train_loss /= num_batches
    return train_acc, train_loss

def test(dataloader, model, loss_fn):
    size = len(dataloader.dataset)  # number of test samples
    num_batches = len(dataloader)   # number of batches: ceil(size / batch_size)
    test_loss, test_acc = 0, 0

    # No training here, so disable gradient tracking to save memory and computation
    with torch.no_grad():
        for imgs, target in dataloader:
            imgs, target = imgs.to(device), target.to(device)

            # Compute the loss
            target_pred = model(imgs)
            loss = loss_fn(target_pred, target)

            test_loss += loss.item()
            test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

    test_acc /= size
    test_loss /= num_batches
    return test_acc, test_loss

epochs = 20
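`train()` and `test()` above normalize their two metrics differently. Accuracy counts correct predictions over all samples and divides by the dataset size. Loss adds up one batch-mean loss per batch and divides by the number of batches. When the last batch is smaller, the reported loss is therefore a mean of batch means, not an exact per-sample mean. The following pure-Python sketch reproduces this bookkeeping on made-up predictions. The data and the function name are hypothetical.

```python
def epoch_metrics(batches):
    """batches: list of (predicted_labels, true_labels, batch_mean_loss) tuples.

    Mirrors the bookkeeping in train()/test(): accuracy is divided by the
    number of samples, loss by the number of batches.
    """
    size = sum(len(y) for _, y, _ in batches)  # plays the role of len(dataloader.dataset)
    num_batches = len(batches)                 # plays the role of len(dataloader)
    correct, total_loss = 0, 0.0
    for preds, y, loss in batches:
        correct += sum(int(p == t) for p, t in zip(preds, y))
        total_loss += loss
    return correct / size, total_loss / num_batches


if __name__ == "__main__":
    # Two made-up batches: 4 samples, then a smaller final batch of 2
    batches = [([0, 1, 1, 0], [0, 1, 0, 0], 0.40),
               ([1, 1], [1, 0], 0.70)]
    acc, loss = epoch_metrics(batches)
    print(acc, loss)  # 4 of 6 correct; loss is the mean of the two batch means
```

Here the loss comes out as 0.55. A per-sample mean weighted by batch size would be 0.5. The gap shrinks as the last batch approaches full size.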

train_loss = []
train_acc = []
test_loss = []
test_acc = []

for epoch in range(epochs):
    model.train()
    epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

    model.eval()
    epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

    train_acc.append(epoch_train_acc)
    train_loss.append(epoch_train_loss)
    test_acc.append(epoch_test_acc)
    test_loss.append(epoch_test_loss)

    template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%, Test_loss:{:.3f}')
    print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss))

print('Done')

III. Visualizing the Results

import matplotlib.pyplot as plt

#隐藏警告

import warnings

warnings.filterwarnings("ignore") #忽略警告信息

plt.rcParams['font.sans-serif'] = ['SimHei'] # 用来正常显示中文标签

plt.rcParams['axes.unicode_minus'] = False # 用来正常显示负号

plt.rcParams['figure.dpi'] = 100 #分辨率from datetime import datetime

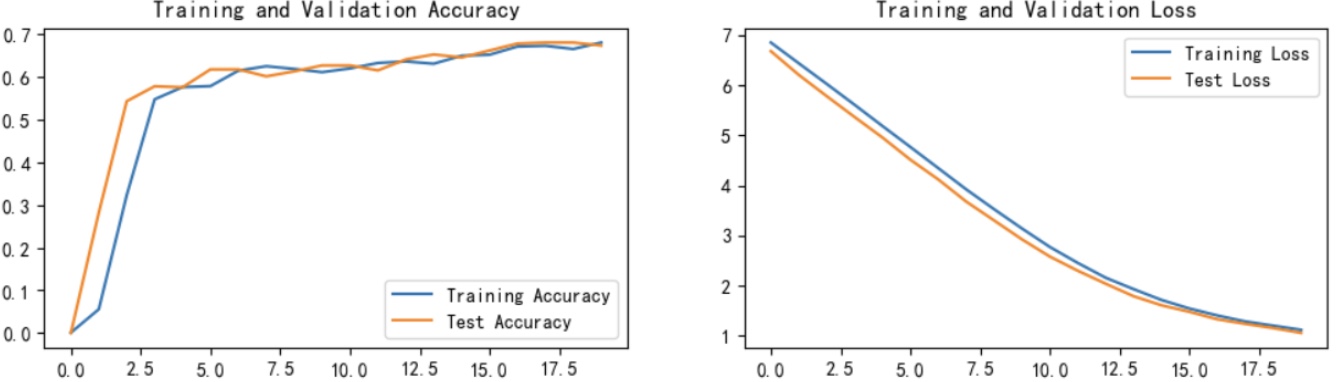

current_time = datetime.now() # 获取当前时间epochs_range = range(epochs)plt.figure(figsize=(12, 3))

plt.subplot(1, 2, 1)plt.plot(epochs_range, train_acc, label='Training Accuracy')

plt.plot(epochs_range, test_acc, label='Test Accuracy')

plt.legend(loc='lower right')

plt.title('Training and Validation Accuracy')

plt.xlabel(current_time) # 打卡请带上时间戳,否则代码截图无效plt.subplot(1, 2, 2)

plt.plot(epochs_range, train_loss, label='Training Loss')

plt.plot(epochs_range, test_loss, label='Test Loss')

plt.legend(loc='upper right')

plt.title('Training and Validation Loss')

plt.show()

IV. Summary

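The main strength of InceptionV1 is that each Inception module runs convolutions with different kernel sizes (1×1, 3×3, 5×5) and a pooling branch in parallel. It also uses 1×1 convolutions to reduce the channel count before the more expensive 3×3 and 5×5 convolutions. This improves feature extraction while sharply cutting computation and parameter count: the model built above has about 7.0 million parameters even with a 1000-class head. The result is high computational efficiency and good practical performance. On the downside, the architecture is fairly complex, which makes it harder to implement and debug by hand. The fixed combination of kernel sizes also leaves little flexibility when adapting the network to different tasks. In addition, despite the small parameter count, the multi-branch structure is not deployment-friendly on some hardware platforms. Later Inception versions refined the design further, for example by adding batch normalization and replacing 5×5 convolutions with stacked 3×3 convolutions. Overall, InceptionV1 strikes a good balance between performance and efficiency, but it still leaves room for improvement in practical use.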
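To put a number on the claim about 1×1 dimensionality reduction, take the 5×5 branch of inception3a in the code above. It has 192 input channels, reduced to 16 by a 1×1 convolution and then expanded to 32 by the 5×5 convolution. The sketch compares that with a hypothetical 5×5 convolution applied directly to all 192 channels. It counts convolution weights and biases only and ignores BatchNorm.

```python
def conv2d_params(c_in, c_out, k):
    """Weights plus bias of nn.Conv2d(c_in, c_out, kernel_size=k)."""
    return k * k * c_in * c_out + c_out


# Hypothetical direct 5x5 convolution: 192 -> 32 channels
direct = conv2d_params(192, 32, 5)

# inception3a branch3 as defined above: 1x1 reduce 192 -> 16, then 5x5 16 -> 32
reduced = conv2d_params(192, 16, 1) + conv2d_params(16, 32, 5)

print(direct, reduced, round(direct / reduced, 1))
```

The two terms of `reduced` match the Conv2d-15 (3,088) and Conv2d-18 (12,832) rows of the summary table. The reduced branch is roughly 10× smaller than the direct convolution. Both variants run at the same 28×28 resolution, so the multiply-add count shrinks by about the same factor.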