大模型项目:普通蓝牙音响接入DeepSeek,解锁语音交互新玩法

本文附带视频讲解

【代码宇宙019】技术方案:蓝牙音响接入DeepSeek,解锁语音交互新玩法_哔哩哔哩_bilibili

目录

效果演示

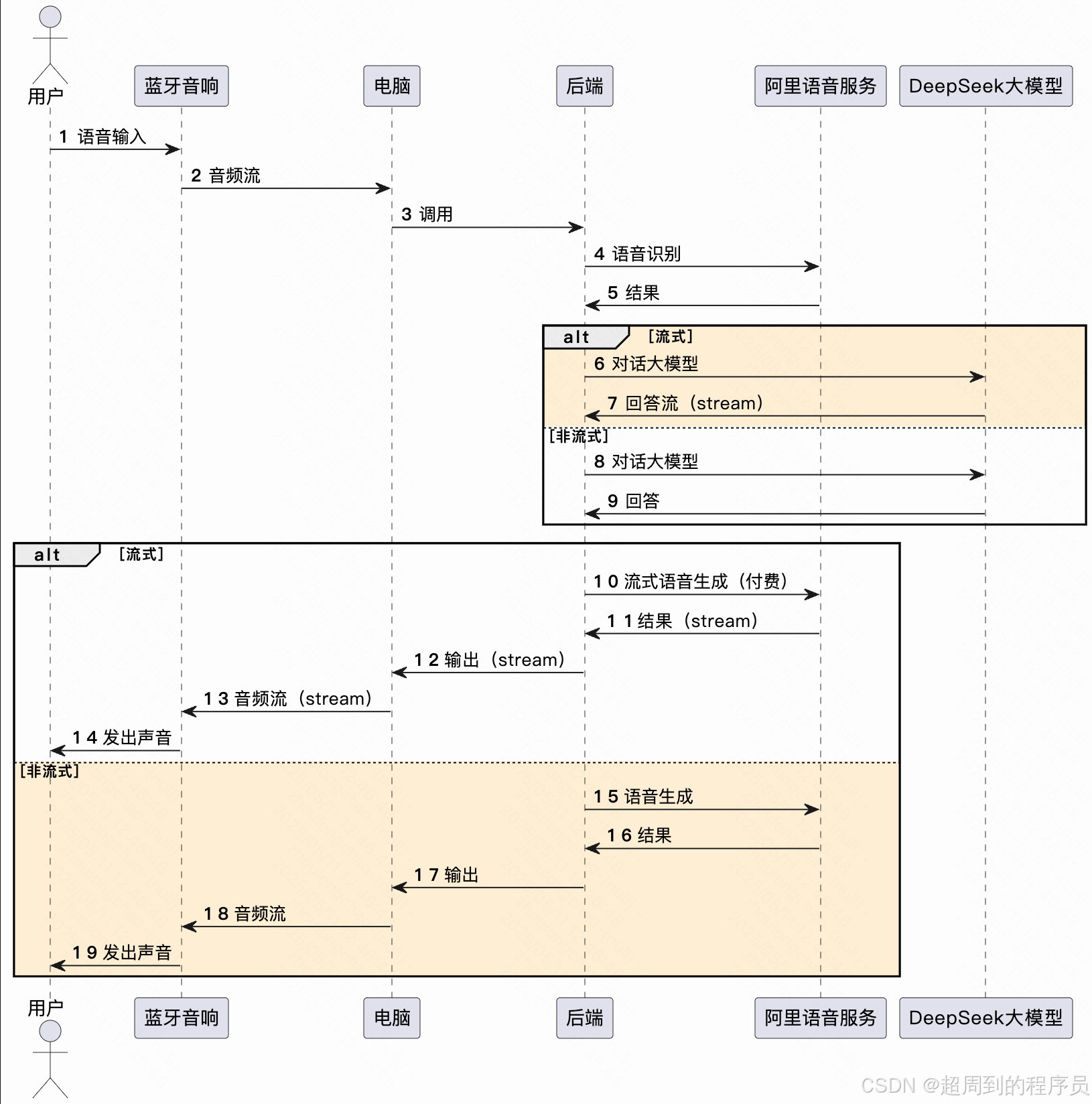

核心逻辑

技术实现

大模型对话(技术: LangChain4j 接入 DeepSeek)

语音识别(技术:阿里云-实时语音识别)

语音生成(技术:阿里云-语音生成)

效果演示

核心逻辑

技术实现

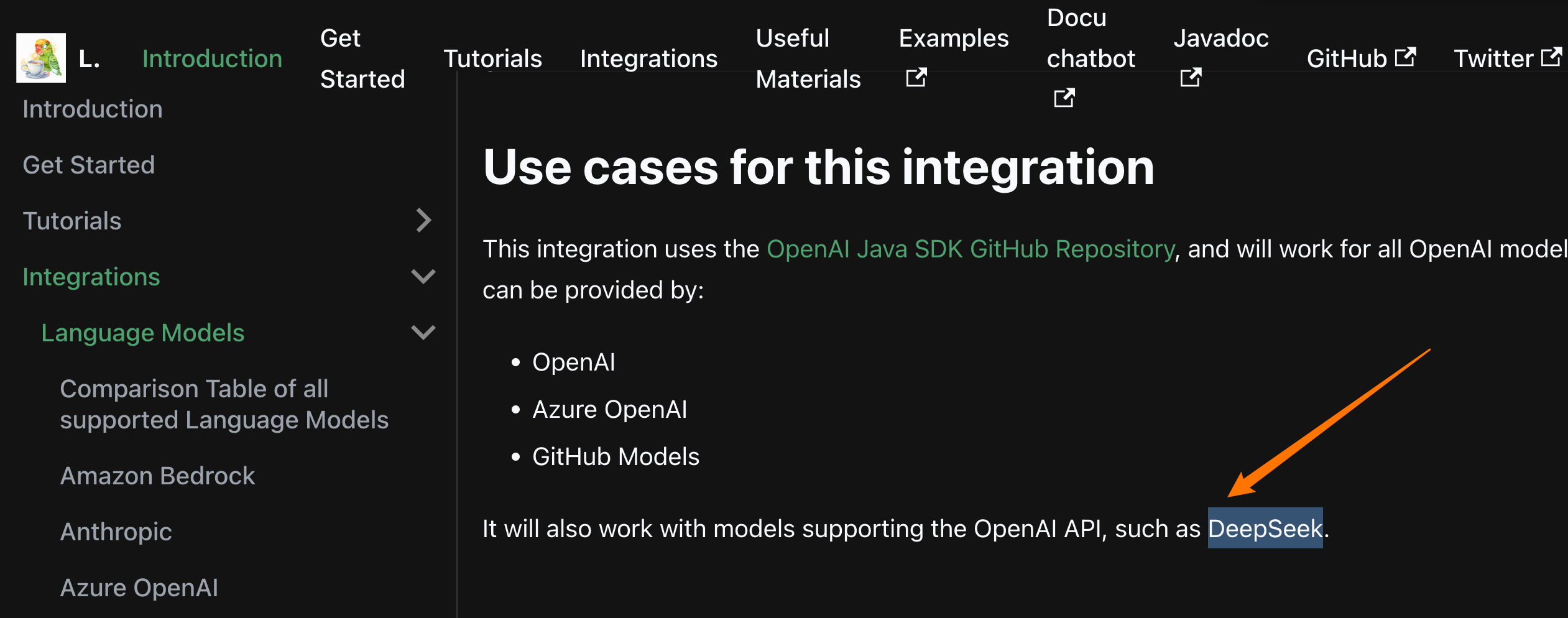

大模型对话(技术: LangChain4j 接入 DeepSeek)

常用依赖都在这里(不是最简),DeepSeek 目前没有单独的依赖,用 open-ai 协议的依赖可以兼容,官网这里有说明:OpenAI Official SDK | LangChain4j

<dependency><groupId>dev.langchain4j</groupId><artifactId>langchain4j-open-ai</artifactId><version>1.0.0-beta3</version>

</dependency>

<dependency><groupId>dev.langchain4j</groupId><artifactId>langchain4j</artifactId><version>1.0.0-beta3</version>

</dependency>

<dependency><groupId>dev.langchain4j</groupId><artifactId>langchain4j-spring-boot-starter</artifactId><version>1.0.0-beta3</version>

</dependency>请求 ds 的核心类

package ai.voice.assistant.client;/*** @Author:超周到的程序员* @Date:2025/4/25*/import ai.voice.assistant.config.DaemonProcess;

import ai.voice.assistant.service.llm.BaseChatClient;

import dev.langchain4j.data.message.ChatMessage;

import dev.langchain4j.data.message.SystemMessage;

import dev.langchain4j.data.message.UserMessage;

import dev.langchain4j.model.chat.response.ChatResponse;

import dev.langchain4j.model.chat.response.StreamingChatResponseHandler;

import dev.langchain4j.model.openai.OpenAiStreamingChatModel;import org.apache.logging.log4j.LogManager;

import org.apache.logging.log4j.Logger;

import org.springframework.beans.factory.annotation.Value;

import org.springframework.stereotype.Component;import java.util.ArrayList;

import java.util.List;

import java.util.concurrent.CountDownLatch;import com.alibaba.fastjson.JSON;@Component("deepSeekStreamClient")

public class DeepSeekStreamClient implements BaseChatClient {private static final Logger LOGGER = LogManager.getLogger(DeepSeekStreamClient.class);@Value("${certificate.llm.deepseek.key}")private String key;@Overridepublic String chat(String question) {if (question.isBlank()) {return "";}OpenAiStreamingChatModel model = OpenAiStreamingChatModel.builder().baseUrl("https://api.deepseek.com").apiKey(key).modelName("deepseek-chat").build();List<ChatMessage> messages = new ArrayList<>();messages.add(SystemMessage.from(prompt));messages.add(UserMessage.from(question));CountDownLatch countDownLatch = new CountDownLatch(1);StringBuilder answerBuilder = new StringBuilder();model.chat(messages, new StreamingChatResponseHandler() {@Overridepublic void onPartialResponse(String answerSplice) {// 语音生成(流式)// voiceGenerateStreamService.process(new String[] {answerSplice});

// System.out.println("== answerSplice: " + answerSplice);answerBuilder.append(answerSplice);}@Overridepublic void onCompleteResponse(ChatResponse chatResponse) {countDownLatch.countDown();}@Overridepublic void onError(Throwable throwable) {LOGGER.error("chat ds error, messages:{} err:", JSON.toJSON(messages), throwable);}});try {countDownLatch.await();} catch (InterruptedException e) {throw new RuntimeException(e);}String answer = answerBuilder.toString();LOGGER.info("chat ds end, answer:{}", answer);return answer;}

}语音识别(技术:阿里云-实时语音识别)

开发参考_智能语音交互(ISI)-阿里云帮助中心

开发日志记录——

这里在我的场景下遇到了会话断连的问题:

- 问题场景:阿里的实时语音识别,第一次对话后 10s 如果不说话那么会断开连接(阿里侧避免过多无用连接占用),本次做的蓝牙音响诉求是让他一直保活不断开,有需要就和它对话并且不想要唤醒词

- 解决方式:因此这里用了 catch 断连异常后再次执行监听方法的方式来兼容这个问题,其实也可以定时发送一个空包过去,但是那样不确定会不会额外增加费用,另外也要处理同时发送空包和人进行语音对话的问题,最终生成的音频文件播放哪个的顺序问题

<dependency><groupId>com.alibaba.nls</groupId><artifactId>nls-sdk-tts</artifactId><version>${ali-vioce-sdk.version}</version>

</dependency>

<dependency><groupId>com.alibaba.nls</groupId><artifactId>nls-sdk-transcriber</artifactId><version>${ali-vioce-sdk.version}</version>

</dependency>package ai.voice.assistant.service.voice;import ai.voice.assistant.config.VoiceConfig;

import ai.voice.assistant.service.llm.BaseChatClient;

import ai.voice.assistant.util.WavPlayerUtil;

import com.alibaba.nls.client.protocol.Constant;

import com.alibaba.nls.client.protocol.InputFormatEnum;

import com.alibaba.nls.client.protocol.NlsClient;

import com.alibaba.nls.client.protocol.SampleRateEnum;

import com.alibaba.nls.client.protocol.asr.SpeechTranscriber;

import com.alibaba.nls.client.protocol.asr.SpeechTranscriberListener;

import com.alibaba.nls.client.protocol.asr.SpeechTranscriberResponse;

import jakarta.annotation.PreDestroy;import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.beans.factory.annotation.Qualifier;

import org.springframework.stereotype.Service;import javax.sound.sampled.AudioFormat;

import javax.sound.sampled.AudioSystem;

import javax.sound.sampled.DataLine;

import javax.sound.sampled.TargetDataLine;/*** @Author:超周到的程序员* @Date:2025/4/23 此示例演示了从麦克风采集语音并实时识别的过程* (仅作演示,需用户根据实际情况实现)*/

@Service

public class VoiceRecognitionService {private static final Logger LOGGER = LoggerFactory.getLogger(VoiceRecognitionService.class);@Autowiredprivate NlsClient client;@Autowiredprivate VoiceConfig voiceConfig;@Autowiredprivate VoiceGenerateService voiceGenerateService;@Autowired

// @Qualifier("deepSeekStreamClient")@Qualifier("deepSeekMemoryClient")private BaseChatClient chatClient;public SpeechTranscriberListener getTranscriberListener() {SpeechTranscriberListener listener = new SpeechTranscriberListener() {//识别出中间结果.服务端识别出一个字或词时会返回此消息.仅当setEnableIntermediateResult(true)时,才会有此类消息返回@Overridepublic void onTranscriptionResultChange(SpeechTranscriberResponse response) {// 重要提示: task_id很重要,是调用方和服务端通信的唯一ID标识,当遇到问题时,需要提供此task_id以便排查LOGGER.info("name: {}, status: {}, index: {}, result: {}, time: {}",response.getName(),response.getStatus(),response.getTransSentenceIndex(),response.getTransSentenceText(),response.getTransSentenceTime());}@Overridepublic void onTranscriberStart(SpeechTranscriberResponse response) {LOGGER.info("task_id: {}, name: {}, status: {}",response.getTaskId(),response.getName(),response.getStatus());}@Overridepublic void onSentenceBegin(SpeechTranscriberResponse response) {LOGGER.info("task_id: {}, name: {}, status: {}",response.getTaskId(),response.getName(),response.getStatus());}//识别出一句话.服务端会智能断句,当识别到一句话结束时会返回此消息@Overridepublic void onSentenceEnd(SpeechTranscriberResponse response) {LOGGER.info("name: {}, status: {}, index: {}, result: {}, confidence: {}, begin_time: {}, time: {}",response.getName(),response.getStatus(),response.getTransSentenceIndex(),response.getTransSentenceText(),response.getConfidence(),response.getSentenceBeginTime(),response.getTransSentenceTime());if (response.getName().equals(Constant.VALUE_NAME_ASR_SENTENCE_END)) {if (response.getStatus() == 20000000) {// 识别完一句话,调用大模型String answer = chatClient.chat(response.getTransSentenceText());voiceGenerateService.process(answer);WavPlayerUtil.playWavFile("/Users/zhoulongchao/Desktop/file_code/project/p_me/ai-voice-assistant/tts_test.wav");}}}//识别完毕@Overridepublic void onTranscriptionComplete(SpeechTranscriberResponse response) {LOGGER.info("task_id: {}, name: {}, status: {}",response.getTaskId(),response.getName(),response.getStatus());}@Overridepublic void onFail(SpeechTranscriberResponse response) {// 重要提示: task_id很重要,是调用方和服务端通信的唯一ID标识,当遇到问题时,需要提供此task_id以便排查LOGGER.info("语音识别 task_id: {}, status: {}, status_text: {}",response.getTaskId(),response.getStatus(),response.getStatusText());}};return listener;}public void process() {SpeechTranscriber transcriber = null;try {// 创建实例,建立连接transcriber = new SpeechTranscriber(client, getTranscriberListener());transcriber.setAppKey(voiceConfig.getAppKey());// 输入音频编码方式transcriber.setFormat(InputFormatEnum.PCM);// 输入音频采样率transcriber.setSampleRate(SampleRateEnum.SAMPLE_RATE_16K);// 是否返回中间识别结果transcriber.setEnableIntermediateResult(true);// 是否生成并返回标点符号transcriber.setEnablePunctuation(true);// 是否将返回结果规整化,比如将一百返回为100transcriber.setEnableITN(false);//此方法将以上参数设置序列化为json发送给服务端,并等待服务端确认transcriber.start();AudioFormat audioFormat = new AudioFormat(16000.0F, 16, 1, true, false);DataLine.Info info = new DataLine.Info(TargetDataLine.class, audioFormat);TargetDataLine targetDataLine = (TargetDataLine) AudioSystem.getLine(info);targetDataLine.open(audioFormat);targetDataLine.start();System.out.println("You can speak now!");int nByte = 0;final int bufSize = 3200;byte[] buffer = new byte[bufSize];while ((nByte = targetDataLine.read(buffer, 0, bufSize)) > 0) {// 直接发送麦克风数据流transcriber.send(buffer, nByte);}transcriber.stop();} catch (Exception e) {LOGGER.info("语音识别 error: {}", e.getMessage());// 临时兼容,用于保持连接在逻辑上不断开,否则默认10s不说话会自动断连process();} finally {if (null != transcriber) {transcriber.close();}}}@PreDestroypublic void shutdown() {client.shutdown();}

}语音生成(技术:阿里云-语音生成)

开发参考_智能语音交互(ISI)-阿里云帮助中心

开发日志记录——

- 非线程安全:在调用完阿里的语音生成能力后,得到了音频文件,和播放打通的方法是建立一个临时文件,生成和播放都路由到这个文件,因为这个项目只是个人方便分阶段单元测试用可以这么写,如果有多个客户端,那么这种方式就不是线程安全的

- 回答延迟:这里我是使用的普通版的语音合成能力,初次接入支持免费体验 3 个月,其实可以使用流式语音合成能力,是另一个 sdk,具体可见文档:流式文本语音合成使用说明_智能语音交互(ISI)-阿里云帮助中心 因为目前流式语音合成能力需要付费,因此没有接入流式,因此每次需要收集完 ds 大模型的回答流之后才可以进行语音生成,会有 8s 延迟

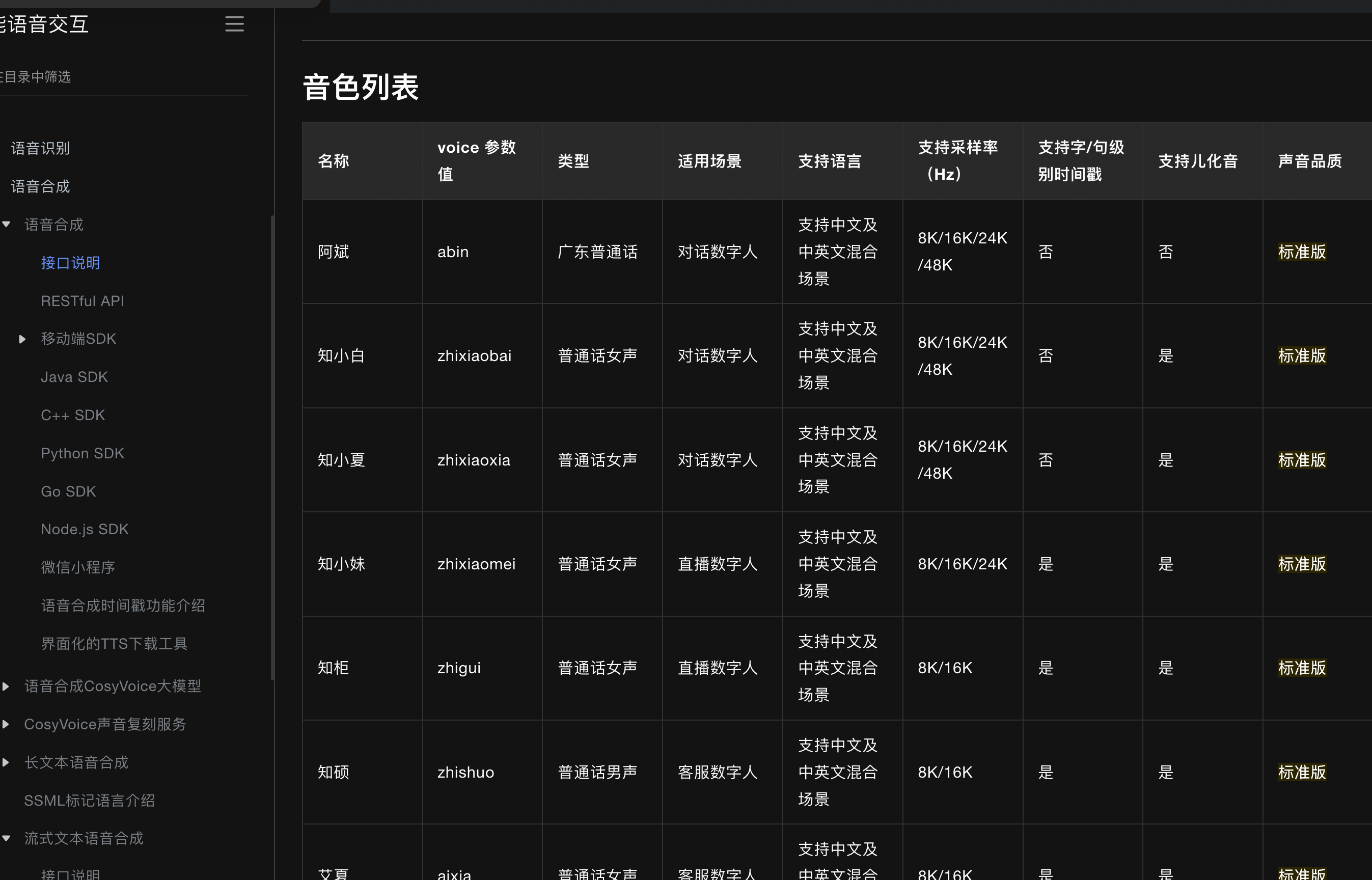

官网有 100 多种音色可以选:

<dependency><groupId>com.alibaba.nls</groupId><artifactId>nls-sdk-tts</artifactId><version>${ali-vioce-sdk.version}</version>

</dependency>

<dependency><groupId>com.alibaba.nls</groupId><artifactId>nls-sdk-transcriber</artifactId><version>${ali-vioce-sdk.version}</version>

</dependency>package ai.voice.assistant.service.voice;import ai.voice.assistant.config.VoiceConfig;

import com.alibaba.nls.client.protocol.NlsClient;

import com.alibaba.nls.client.protocol.OutputFormatEnum;

import com.alibaba.nls.client.protocol.SampleRateEnum;

import com.alibaba.nls.client.protocol.tts.;

import com.alibaba.nls.client.protocol.tts.SpeechSynthesizerListener;

import com.alibaba.nls.client.protocol.tts.SpeechSynthesizerResponse;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory;

import org.springframework.beans.factory.annotation.Autowired;

import org.springframework.stereotype.Service;import java.io.File;

import java.io.FileOutputStream;

import java.io.IOException;

import java.nio.ByteBuffer;

import java.util.concurrent.ScheduledExecutorService;/*** @Author:超周到的程序员* @Date:2025/4/23* 语音合成API调用* 流式合成TTS* 首包延迟计算*/

@Service

public class VoiceGenerateService {private static final Logger LOGGER = LoggerFactory.getLogger(VoiceGenerateService.class);private static long startTime;@Autowiredprivate VoiceConfig voiceConfig;@Autowiredprivate NlsClient client;private static SpeechSynthesizerListener getSynthesizerListener() {SpeechSynthesizerListener listener = null;try {listener = new SpeechSynthesizerListener() {File f = new File("tts_test.wav");FileOutputStream fout = new FileOutputStream(f);private boolean firstRecvBinary = true;//语音合成结束@Overridepublic void onComplete(SpeechSynthesizerResponse response) {// TODO 当onComplete时表示所有TTS数据已经接收完成,因此这个是整个合成延迟,该延迟可能较大,未必满足实时场景LOGGER.info("name:{} status:{} outputFile:{}", response.getStatus(), f.getAbsolutePath(), response.getName());}//语音合成的语音二进制数据@Overridepublic void onMessage(ByteBuffer message) {try {if (firstRecvBinary) {// TODO 此处是计算首包语音流的延迟,收到第一包语音流时,即可以进行语音播放,以提升响应速度(特别是实时交互场景下)firstRecvBinary = false;long now = System.currentTimeMillis();LOGGER.info("tts first latency : " + (now - VoiceGenerateService.startTime) + " ms");}byte[] bytesArray = new byte[message.remaining()];message.get(bytesArray, 0, bytesArray.length);fout.write(bytesArray);} catch (IOException e) {e.printStackTrace();}}@Overridepublic void onFail(SpeechSynthesizerResponse response) {// TODO 重要提示: task_id很重要,是调用方和服务端通信的唯一ID标识,当遇到问题时,需要提供此task_id以便排查LOGGER.info("语音合成 task_id: {}, status: {}, status_text: {}",response.getTaskId(),response.getStatus(),response.getStatusText());}@Overridepublic void onMetaInfo(SpeechSynthesizerResponse response) {

// System.out.println("MetaInfo event:{}" + response.getTaskId());}};} catch (Exception e) {e.printStackTrace();}return listener;}public void process(String text) {SpeechSynthesizer synthesizer = null;try {//创建实例,建立连接synthesizer = new SpeechSynthesizer(client, getSynthesizerListener());synthesizer.setAppKey(voiceConfig.getAppKey());//设置返回音频的编码格式synthesizer.setFormat(OutputFormatEnum.WAV);//设置返回音频的采样率synthesizer.setSampleRate(SampleRateEnum.SAMPLE_RATE_16K);//发音人synthesizer.setVoice("jielidou");//语调,范围是-500~500,可选,默认是0synthesizer.setPitchRate(50);//语速,范围是-500~500,默认是0synthesizer.setSpeechRate(30);//设置用于语音合成的文本synthesizer.setText(text);synthesizer.addCustomedParam("enable_subtitle", true);//此方法将以上参数设置序列化为json发送给服务端,并等待服务端确认long start = System.currentTimeMillis();synthesizer.start();LOGGER.info("tts start latency " + (System.currentTimeMillis() - start) + " ms");VoiceGenerateService.startTime = System.currentTimeMillis();//等待语音合成结束synthesizer.waitForComplete();LOGGER.info("tts stop latency " + (System.currentTimeMillis() - start) + " ms");} catch (Exception e) {e.printStackTrace();} finally {//关闭连接if (null != synthesizer) {synthesizer.close();}}}public void shutdown() {client.shutdown();}

}