Defining the ResNet (Residual Neural Network) Model Architecture in PyTorch


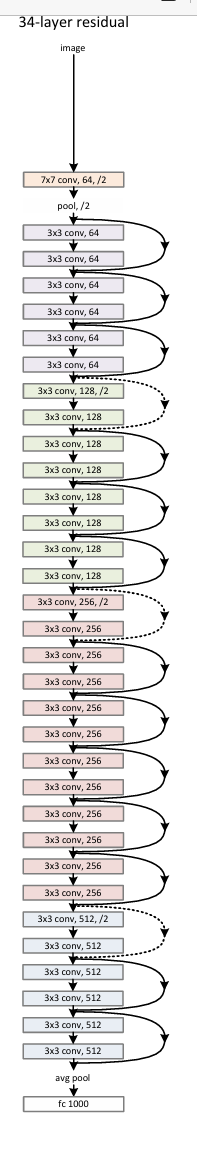

ResNet‑34

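The implementation of ResNet‑34 comes down to three steps:

- Define the residual block (`BasicBlock`)
- Stack several residual blocks with the `_make_layer` method
- Assemble the network using ResNet‑34's channel widths and per-stage block counts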

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class BasicBlock(nn.Module):
    expansion = 1  # for BasicBlock, output channels = out_channels * expansion

    def __init__(self, in_channels, out_channels, stride=1):
        super().__init__()
        # First 3×3 convolution (carries the stride when the block downsamples)
        self.conv1 = nn.Conv2d(in_channels, out_channels, kernel_size=3,
                               stride=stride, padding=1, bias=False)
        self.bn1 = nn.BatchNorm2d(out_channels)
        # Second 3×3 convolution
        self.conv2 = nn.Conv2d(out_channels, out_channels, kernel_size=3,
                               stride=1, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(out_channels)

        # If the stride or the channel count changes, project the input
        # with a 1×1 convolution so the shortcut matches the main branch
        self.shortcut = nn.Sequential()
        if stride != 1 or in_channels != out_channels * BasicBlock.expansion:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, out_channels * BasicBlock.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(out_channels * BasicBlock.expansion),
            )

    def forward(self, x):
        out = F.relu(self.bn1(self.conv1(x)))
        out = self.bn2(self.conv2(out))
        # Residual connection
        out += self.shortcut(x)
        return F.relu(out)


class ResNet(nn.Module):
    def __init__(self, block, layers, num_classes=1000):
        """
        block: residual block type (BasicBlock or Bottleneck)
        layers: number of blocks in each stage, e.g. [3, 4, 6, 3] for ResNet-34
        num_classes: number of output classes
        """
        super().__init__()
        self.in_channels = 64

        # Stem: 7×7 conv + max pooling
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7,
                               stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.pool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Four stages with base channels [64, 128, 256, 512]
        self.layer1 = self._make_layer(block, 64, layers[0], stride=1)
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)

        # Global average pooling + fully connected classifier
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512 * block.expansion, num_classes)

    def _make_layer(self, block, out_channels, num_blocks, stride):
        """
        Build one stage made of num_blocks blocks.
        The first block may downsample via stride; the rest use stride=1.
        """
        strides = [stride] + [1] * (num_blocks - 1)
        layers = []
        for s in strides:
            layers.append(block(self.in_channels, out_channels, stride=s))
            self.in_channels = out_channels * block.expansion
        return nn.Sequential(*layers)

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = self.pool1(x)
        x = self.layer1(x)   # output size /4
        x = self.layer2(x)   # output size /8
        x = self.layer3(x)   # output size /16
        x = self.layer4(x)   # output size /32
        x = self.avgpool(x)  # [B, C, 1, 1]
        x = torch.flatten(x, 1)
        x = self.fc(x)
        return x


def resnet34(num_classes=1000):
    """Return a ResNet-34 instance."""
    return ResNet(BasicBlock, [3, 4, 6, 3], num_classes)
```

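Key points

- `BasicBlock`
  - Two consecutive 3×3 convolutions, each followed by BatchNorm. ReLU is applied after the first BatchNorm and again after the residual addition.
  - When the channel count or the stride does not match, a 1×1 convolution projects the input so that the element-wise addition is possible.
- `_make_layer`
  - If a stage downsamples, only its first residual block uses stride=2; all remaining blocks keep stride=1.
  - The `layers` argument [3, 4, 6, 3] corresponds exactly to the number of blocks in the red, pink, gray and blue parts of the figure.
- Overall flow
  - 7×7 convolution with stride=2 → max pooling →
  - four stages (channels 64→128→256→512; stages 2 to 4 each downsample once, while stage 1 keeps the resolution left by the max pooling) →
  - global average pooling → fully connected classifier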

This reproduces the "34-layer residual" architecture shown on the right of the figure. You can call resnet34() directly and check the output shape like this:

```python
if __name__ == "__main__":
    model = resnet34(num_classes=1000)
    x = torch.randn(8, 3, 224, 224)
    y = model(x)
    print(y.shape)  # torch.Size([8, 1000])
```
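The `/4`, `/8`, `/16`, `/32` comments in `forward` can be checked by hand with the standard output-size formula for convolution and pooling layers, floor((n + 2p − k) / s) + 1. The sketch below is plain Python and does not touch the model; `conv_out` and `trace_sizes` are hypothetical helper names introduced only for this illustration, and it assumes a square 224×224 input.

```python
def conv_out(size, kernel, stride, padding):
    """Output length along one spatial axis for a conv or pooling layer."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_sizes(size=224):
    """Follow the spatial size through the stem and the four stages."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)  # stem 7×7 conv
    sizes["conv1"] = size
    size = conv_out(size, kernel=3, stride=2, padding=1)  # 3×3 max pooling
    sizes["pool1"] = size
    # Within a stage only the first block can carry stride 2; every other
    # 3×3 conv uses stride=1 and padding=1, which leaves the size unchanged.
    for name, stride in [("layer1", 1), ("layer2", 2), ("layer3", 2), ("layer4", 2)]:
        size = conv_out(size, kernel=3, stride=stride, padding=1)
        sizes[name] = size
    return sizes


if __name__ == "__main__":
    for name, size in trace_sizes(224).items():
        print(f"{name}: {size}x{size}")
```

For a 224×224 input the final feature map is 7×7, which is what the global average pooling then reduces to 1×1.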

ResNet‑50

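A PyTorch implementation of ResNet‑50. It differs from ResNet‑34 only in the block type: it uses the Bottleneck module. The number of blocks per stage is still [3, 4, 6, 3], the same as ResNet‑34, but each block contains three convolution layers and has an expansion of 4, so the four stages output 256, 512, 1024 and 2048 channels.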

```python
import torch
import torch.nn as nn
import torch.nn.functional as F


class Bottleneck(nn.Module):
    # output channels = base_channels * expansion
    expansion = 4

    def __init__(self, in_channels, base_channels, stride=1):
        super().__init__()
        # 1×1 convolution: reduce channels
        self.conv1 = nn.Conv2d(in_channels, base_channels, kernel_size=1,
                               bias=False)
        self.bn1 = nn.BatchNorm2d(base_channels)
        # 3×3 convolution (may downsample)
        self.conv2 = nn.Conv2d(base_channels, base_channels, kernel_size=3,
                               stride=stride, padding=1, bias=False)
        self.bn2 = nn.BatchNorm2d(base_channels)
        # 1×1 convolution: expand channels
        self.conv3 = nn.Conv2d(base_channels, base_channels * Bottleneck.expansion,
                               kernel_size=1, bias=False)
        self.bn3 = nn.BatchNorm2d(base_channels * Bottleneck.expansion)

        # Shortcut branch
        self.shortcut = nn.Sequential()
        if stride != 1 or in_channels != base_channels * Bottleneck.expansion:
            self.shortcut = nn.Sequential(
                nn.Conv2d(in_channels, base_channels * Bottleneck.expansion,
                          kernel_size=1, stride=stride, bias=False),
                nn.BatchNorm2d(base_channels * Bottleneck.expansion),
            )

    def forward(self, x):
        out = F.relu(self.bn1(self.conv1(x)))
        out = F.relu(self.bn2(self.conv2(out)))
        out = self.bn3(self.conv3(out))
        out += self.shortcut(x)
        return F.relu(out)


class ResNet(nn.Module):
    def __init__(self, block, layers, num_classes=1000):
        super().__init__()
        self.in_channels = 64

        # Stem: 7×7 conv + max pooling
        self.conv1 = nn.Conv2d(3, 64, kernel_size=7,
                               stride=2, padding=3, bias=False)
        self.bn1 = nn.BatchNorm2d(64)
        self.pool1 = nn.MaxPool2d(kernel_size=3, stride=2, padding=1)

        # Four stages
        self.layer1 = self._make_layer(block, 64, layers[0], stride=1)
        self.layer2 = self._make_layer(block, 128, layers[1], stride=2)
        self.layer3 = self._make_layer(block, 256, layers[2], stride=2)
        self.layer4 = self._make_layer(block, 512, layers[3], stride=2)

        # Pooling + fully connected classifier
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        self.fc = nn.Linear(512 * block.expansion, num_classes)

    def _make_layer(self, block, base_channels, num_blocks, stride):
        """
        Build one stage made of num_blocks blocks.
        The first block may downsample (stride > 1); the rest keep stride=1.
        """
        strides = [stride] + [1] * (num_blocks - 1)
        layers = []
        for s in strides:
            layers.append(block(self.in_channels, base_channels, stride=s))
            self.in_channels = base_channels * block.expansion
        return nn.Sequential(*layers)

    def forward(self, x):
        x = F.relu(self.bn1(self.conv1(x)))
        x = self.pool1(x)
        x = self.layer1(x)   # /4
        x = self.layer2(x)   # /8
        x = self.layer3(x)   # /16
        x = self.layer4(x)   # /32
        x = self.avgpool(x)  # [B, C, 1, 1]
        x = torch.flatten(x, 1)
        x = self.fc(x)
        return x


def resnet50(num_classes=1000):
    """Return a ResNet-50 instance."""
    return ResNet(Bottleneck, [3, 4, 6, 3], num_classes)


# Quick test
if __name__ == "__main__":
    model = resnet50(num_classes=1000)
    x = torch.randn(4, 3, 224, 224)
    y = model(x)
    print(y.shape)  # -> torch.Size([4, 1000])
```
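Both variants use the same [3, 4, 6, 3] stage layout, so the "34" and "50" in their names come purely from how many convolutions each block holds. A minimal sketch of that count, assuming the usual convention of counting the stem convolution, the convolutions on the main branch of each block, and the final fully connected layer (the 1×1 shortcut projections are not counted); `weighted_layers` is a hypothetical helper name:

```python
def weighted_layers(blocks_per_stage, convs_per_block):
    """Count the stem conv, the main-branch convs of every block, and the fc layer."""
    stem_conv = 1
    fc_layer = 1
    return stem_conv + convs_per_block * sum(blocks_per_stage) + fc_layer


if __name__ == "__main__":
    layout = [3, 4, 6, 3]
    print(weighted_layers(layout, convs_per_block=2))  # BasicBlock  -> 34
    print(weighted_layers(layout, convs_per_block=3))  # Bottleneck  -> 50
```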

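Notes

- Bottleneck module: three convolution layers in the order 1×1 → 3×3 → 1×1; the last 1×1 restores the channel dimension (multiplying by expansion=4).
- Shortcut branch: when downsampling is required (stride=2) or the input and output dimensions differ, a 1×1 convolution aligns the input before the addition.
- `layers` argument [3, 4, 6, 3]: the number of Bottleneck blocks in each of the four stages.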
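To see where the shortcut condition actually fires, the bookkeeping done by `_make_layer` can be replayed without building any layers. The sketch below mirrors the `in_channels` update and the `stride != 1 or in_channels != base_channels * expansion` test from the code above; `trace_stage_channels` is a hypothetical helper name used only for illustration.

```python
def trace_stage_channels(expansion, blocks_per_stage, stem_channels=64):
    """Replay _make_layer's channel bookkeeping.

    Returns one (stage name, output channels, number of projection shortcuts)
    tuple per stage.
    """
    in_channels = stem_channels
    report = []
    stage_configs = zip((64, 128, 256, 512), blocks_per_stage, (1, 2, 2, 2))
    for index, (base_channels, num_blocks, stride) in enumerate(stage_configs, start=1):
        strides = [stride] + [1] * (num_blocks - 1)
        projections = 0
        for s in strides:
            out_channels = base_channels * expansion
            # Same condition the blocks use to decide on a 1×1 projection
            if s != 1 or in_channels != out_channels:
                projections += 1
            in_channels = out_channels
        report.append((f"layer{index}", in_channels, projections))
    return report


if __name__ == "__main__":
    print("BasicBlock:", trace_stage_channels(1, [3, 4, 6, 3]))
    print("Bottleneck:", trace_stage_channels(4, [3, 4, 6, 3]))
```

With BasicBlock, `layer1` needs no projection because the stem already outputs 64 channels at stride 1. With Bottleneck, the first block of `layer1` does need one, since its output has 256 channels while the stem provides 64.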

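That completes the full structure definition of ResNet‑50. You can call resnet50() directly and train it on your own dataset. Pretrained weights from another implementation will load only if the checkpoint's parameter names match the ones used here (for example, the projection branch is named `shortcut` in this code).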
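One practical detail when pairing this model with pretrained weights: torchvision's ResNet stores the projection branch under the attribute name `downsample`, whereas the code here calls it `shortcut`, so the checkpoint keys differ. A minimal sketch of a key-renaming step follows. `rename_torchvision_keys` is a hypothetical helper, the demo dictionary uses placeholder strings instead of tensors, and the claim that this is the only naming difference is an assumption worth confirming with a strict `load_state_dict` call.

```python
def rename_torchvision_keys(state_dict):
    """Map torchvision-style 'downsample' keys to the 'shortcut' name used here."""
    return {
        key.replace(".downsample.", ".shortcut."): value
        for key, value in state_dict.items()
    }


if __name__ == "__main__":
    # Placeholder values stand in for the real weight tensors
    demo = {
        "conv1.weight": "stem conv",
        "layer1.0.conv1.weight": "block conv",
        "layer1.0.downsample.0.weight": "projection conv",
        "layer1.0.downsample.1.running_mean": "projection bn",
    }
    for key in rename_torchvision_keys(demo):
        print(key)
```

The renamed dictionary would then be passed to `model.load_state_dict(...)`. Any remaining mismatch shows up as a missing or unexpected key error rather than being silently ignored.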

Reference: Kaiming He et al., Deep Residual Learning for Image Recognition (CVPR 2016).