PyTorch Learning Series | Weather Recognition

一、前置知识

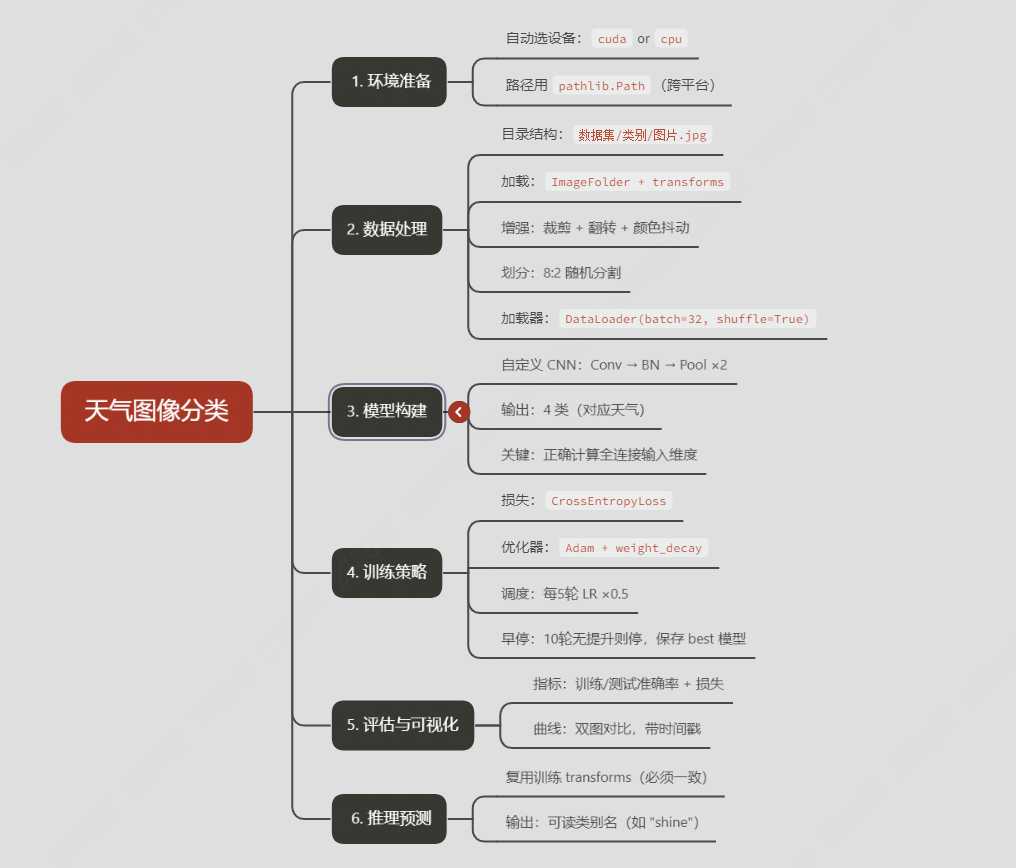

1、思维导图

2、文件夹常用操作总结

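| Operation | | |
| --- | --- | --- |
| Join paths | | |
| Get the current directory | | |
| Check whether a path exists | | |
| Get the file name | | |
| Get the parent directory | | |
| Traverse a folder | | |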
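The operations in the table can be sketched with Python's standard `os` and `pathlib` modules. Which of the two the table originally paired with each row is an assumption here, so both variants are shown side by side. The temporary directory and the file name `a.jpg` are made up for the demonstration.

```python
import os
import tempfile
from pathlib import Path

# Build a throwaway directory tree so the example runs anywhere (hypothetical names).
root = tempfile.mkdtemp()
os.makedirs(os.path.join(root, "cloudy"))
Path(root, "cloudy", "a.jpg").touch()

# Join paths
p_os = os.path.join(root, "cloudy", "a.jpg")
p_pl = Path(root) / "cloudy" / "a.jpg"

# Get the current directory
cwd_os = os.getcwd()
cwd_pl = Path.cwd()

# Check whether a path exists
exists_os = os.path.exists(p_os)
exists_pl = p_pl.exists()

# Get the file name
name_os = os.path.basename(p_os)
name_pl = p_pl.name

# Get the parent directory
parent_os = os.path.dirname(p_os)
parent_pl = p_pl.parent

# Traverse a folder (sorted, because neither call guarantees an order)
entries_os = sorted(os.listdir(root))
entries_pl = sorted(child.name for child in Path(root).iterdir())

print(name_os, name_pl, entries_os, entries_pl)
```

`pathlib` returns `Path` objects rather than strings, so wrap a result in `str()` when an API insists on a plain string.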

II. Implementation

1. Setting Up the GPU

Use the GPU if one is available; otherwise fall back to the CPU.

    import torch
    import torch.nn as nn
    import matplotlib.pyplot as plt
    import torchvision

    device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
    device

Output:

    /root/miniconda3/lib/python3.10/site-packages/torch/cuda/__init__.py:51: FutureWarning: The pynvml package is deprecated. Please install nvidia-ml-py instead. If you did not install pynvml directly, please report this to the maintainers of the package that installed pynvml for you.
      import pynvml # type: ignore[import]
    device(type='cuda')

2. Data Preparation

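2.1 Locating the Data

    import os
    import pathlib

    # Print the current working directory to confirm that relative paths will resolve
    print("Current working directory:", os.getcwd())

    # Data directory (an absolute path is more robust; a relative path depends on the working directory)
    data_dir = './data/天气识别数据集/'
    data_dir = pathlib.Path(data_dir)

    # Collect every entry (folder or file) directly under the data directory
    data_paths = list(data_dir.glob('*'))

    # Each sub-folder name is a class name. Sort them so the list order matches
    # the alphabetical class indices that datasets.ImageFolder assigns later.
    classeNames = sorted(path.name for path in data_paths if path.is_dir())
    classeNames

Output:

    Current working directory: /root/365天训练营/Pytorch实战
    ['cloudy', 'rain', 'shine', 'sunrise']

2.2 Loading the Images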

    import matplotlib.pyplot as plt
    from PIL import Image

    # Folder containing the images to preview
    image_folder = './data/天气识别数据集/cloudy/'

    # Collect all image files in the folder
    image_files = [f for f in os.listdir(image_folder) if f.endswith((".jpg", ".png", ".jpeg"))]

    # Create a 3 x 8 grid of axes
    fig, axes = plt.subplots(3, 8, figsize=(8, 3))

    # Load and display one image per axis (zip stops after the first 24 files)
    for ax, img_file in zip(axes.flat, image_files):
        img_path = os.path.join(image_folder, img_file)
        img = Image.open(img_path)
        ax.imshow(img)
        ax.axis('off')

    # Show the figure
    plt.tight_layout()
    plt.show()
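Before building a dataset it can help to check how many images each class folder holds, since a strongly unbalanced class would affect how the accuracy figures should be read. The following is a minimal sketch, not part of the original code: the helper name `count_images_per_class` is hypothetical, and unlike the listing above it matches file extensions case-insensitively.

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}  # same extensions as the preview code above

def count_images_per_class(root):
    """Return {class_folder_name: number_of_image_files} for each sub-folder of root."""
    counts = {}
    for class_dir in sorted(Path(root).iterdir()):
        if class_dir.is_dir():
            counts[class_dir.name] = sum(
                1
                for f in class_dir.iterdir()
                if f.is_file() and f.suffix.lower() in IMAGE_SUFFIXES
            )
    return counts

# Only runs against the real dataset if the folder is present.
data_root = Path("./data/天气识别数据集/")
if data_root.exists():
    print(count_images_per_class(data_root))
```

The counts should add up to the number of datapoints that `datasets.ImageFolder` reports, provided every file in the folders uses one of the listed extensions.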

2.3 Defining the Image Preprocessing Pipeline

    from torchvision import transforms, datasets

    total_datadir = './data/天气识别数据集/'

    # More on transforms.Compose: https://blog.csdn.net/qq_38251616/article/details/124878863
    # Earlier version without augmentation, kept for reference:
    # train_transforms = transforms.Compose([
    #     transforms.Resize([224, 224]),  # resize every input image to the same size
    #     transforms.ToTensor(),          # convert a PIL Image or numpy.ndarray to a tensor scaled to [0, 1]
    #     transforms.Normalize(           # standardize each channel as (x - mean) / std, which helps convergence
    #         mean=[0.485, 0.456, 0.406],
    #         std=[0.229, 0.224, 0.225])  # the commonly used ImageNet channel statistics
    # ])

    train_transforms = transforms.Compose([
        transforms.RandomResizedCrop(224, scale=(0.8, 1.0)),   # mild random crop (replaces the fixed Resize)
        transforms.RandomHorizontalFlip(p=0.5),                # new: flip horizontally with probability 0.5
        transforms.ColorJitter(brightness=0.2, contrast=0.2),  # new: slight brightness/contrast jitter
        transforms.Resize([224, 224]),                         # kept from the earlier version (redundant after the 224 crop)
        transforms.ToTensor(),
        transforms.Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
    ])

    total_data = datasets.ImageFolder(total_datadir, transform=train_transforms)
    total_data

Output:

    Dataset ImageFolder
        Number of datapoints: 1125
        Root location: ./data/天气识别数据集/
        StandardTransform
    Transform: Compose(
                   RandomResizedCrop(size=(224, 224), scale=(0.8, 1.0), ratio=(0.75, 1.3333), interpolation=bilinear, antialias=warn)
                   RandomHorizontalFlip(p=0.5)
                   ColorJitter(brightness=(0.8, 1.2), contrast=(0.8, 1.2), saturation=None, hue=None)
                   Resize(size=[224, 224], interpolation=bilinear, max_size=None, antialias=warn)
                   ToTensor()
                   Normalize(mean=[0.485, 0.456, 0.406], std=[0.229, 0.224, 0.225])
               )

2.4 Splitting the Dataset

    import torch
    import torch.nn as nn

    # 80% of the images for training, the remainder for testing
    train_size = int(0.8 * len(total_data))
    test_size = len(total_data) - train_size
    train_dataset, test_dataset = torch.utils.data.random_split(total_data, [train_size, test_size])
    len(train_dataset), len(test_dataset)

    batch_size = 32

    train_dl = torch.utils.data.DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
    test_dl = torch.utils.data.DataLoader(test_dataset, batch_size=batch_size, shuffle=True)

    # Inspect the shape of one batch
    for X, y in test_dl:
        print("Shape of X [N, C, H, W]: ", X.shape)
        print("Shape of y: ", y.shape, y.dtype)
        break

Output:

    Shape of X [N, C, H, W]: torch.Size([32, 3, 224, 224])
    Shape of y: torch.Size([32]) torch.int64

3. Building the CNN

    import torch.nn.functional as F

    class Network_bn(nn.Module):
        def __init__(self):
            super(Network_bn, self).__init__()
            # nn.Conv2d() arguments:
            #   in_channels:  number of input channels
            #   out_channels: number of output channels
            #   kernel_size:  size of the convolution kernel
            #   stride:       step size, default 1
            #   padding:      amount of padding, default 0
            self.conv1 = nn.Conv2d(in_channels=3, out_channels=12, kernel_size=5, stride=1, padding=0)
            self.bn1 = nn.BatchNorm2d(12)
            self.conv2 = nn.Conv2d(in_channels=12, out_channels=12, kernel_size=5, stride=1, padding=0)
            self.bn2 = nn.BatchNorm2d(12)
            self.pool1 = nn.MaxPool2d(2, 2)
            self.conv4 = nn.Conv2d(in_channels=12, out_channels=24, kernel_size=5, stride=1, padding=0)
            self.bn4 = nn.BatchNorm2d(24)
            self.conv5 = nn.Conv2d(in_channels=24, out_channels=24, kernel_size=5, stride=1, padding=0)
            self.bn5 = nn.BatchNorm2d(24)
            self.pool2 = nn.MaxPool2d(2, 2)
            self.fc1 = nn.Linear(24*50*50, len(classeNames))

        def forward(self, x):
            x = F.relu(self.bn1(self.conv1(x)))
            x = F.relu(self.bn2(self.conv2(x)))
            x = self.pool1(x)
            x = F.relu(self.bn4(self.conv4(x)))
            x = F.relu(self.bn5(self.conv5(x)))
            x = self.pool2(x)
            x = x.view(-1, 24*50*50)  # flatten to (batch, 60000)
            x = self.fc1(x)
            return x

    device = "cuda" if torch.cuda.is_available() else "cpu"
    print("Using {} device".format(device))

    model = Network_bn().to(device)
    model

Output:

    Using cuda device
    Network_bn(
      (conv1): Conv2d(3, 12, kernel_size=(5, 5), stride=(1, 1))
      (bn1): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (conv2): Conv2d(12, 12, kernel_size=(5, 5), stride=(1, 1))
      (bn2): BatchNorm2d(12, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (pool1): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      (conv4): Conv2d(12, 24, kernel_size=(5, 5), stride=(1, 1))
      (bn4): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (conv5): Conv2d(24, 24, kernel_size=(5, 5), stride=(1, 1))
      (bn5): BatchNorm2d(24, eps=1e-05, momentum=0.1, affine=True, track_running_stats=True)
      (pool2): MaxPool2d(kernel_size=2, stride=2, padding=0, dilation=1, ceil_mode=False)
      (fc1): Linear(in_features=60000, out_features=4, bias=True)
    )

4. Training the Model
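Before training, it is worth confirming where the `24*50*50` input size of `fc1` in `Network_bn` comes from. For a convolution or pooling layer without dilation, the output side length is `floor((in + 2*padding - kernel) / stride) + 1`. The sketch below applies that formula layer by layer to a 224 x 224 input; the helper names `conv_out` and `pool_out` are hypothetical and exist only for this illustration.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a convolution (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1

def pool_out(size, kernel=2, stride=2):
    """Output side length of a max-pooling layer (no padding, no dilation)."""
    return (size - kernel) // stride + 1

size = 224
size = conv_out(size, 5)  # conv1: 224 -> 220
size = conv_out(size, 5)  # conv2: 220 -> 216
size = pool_out(size)     # pool1: 216 -> 108
size = conv_out(size, 5)  # conv4: 108 -> 104
size = conv_out(size, 5)  # conv5: 104 -> 100
size = pool_out(size)     # pool2: 100 -> 50

flat_features = 24 * size * size  # 24 channels after conv5
print(size, flat_features)        # 50 60000
```

The result agrees with `Linear(in_features=60000, ...)` in the printed model. If the input resolution or any kernel size changes, the hard-coded `24*50*50` has to change with it.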

4.1 Setting the Hyperparameters

    loss_fn = nn.CrossEntropyLoss()  # loss function
    learn_rate = 1e-4                # learning rate
    # opt = torch.optim.SGD(model.parameters(), lr=learn_rate)
    opt = torch.optim.Adam(model.parameters(), lr=learn_rate, weight_decay=1e-4)

4.2 Training Functions

    # One training epoch
    def train(dataloader, model, loss_fn, optimizer):
        size = len(dataloader.dataset)  # number of training images (900 here)
        num_batches = len(dataloader)   # number of batches (ceil(900 / 32) = 29)

        train_loss, train_acc = 0, 0    # accumulated loss and count of correct predictions

        for X, y in dataloader:         # fetch a batch of images and labels
            X, y = X.to(device), y.to(device)

            # Forward pass and loss
            pred = model(X)             # network output
            loss = loss_fn(pred, y)     # gap between the output and the ground-truth labels

            # Backpropagation
            optimizer.zero_grad()       # reset the gradients
            loss.backward()             # backpropagate
            optimizer.step()            # update the parameters

            # Accumulate accuracy and loss
            train_acc += (pred.argmax(1) == y).type(torch.float).sum().item()
            train_loss += loss.item()

        train_acc /= size
        train_loss /= num_batches

        return train_acc, train_loss


    def test(dataloader, model, loss_fn):
        size = len(dataloader.dataset)  # number of test images (225 here)
        num_batches = len(dataloader)   # number of batches (ceil(225 / 32) = 8)
        test_loss, test_acc = 0, 0

        # No gradients are needed for evaluation, which saves memory and compute
        with torch.no_grad():
            for imgs, target in dataloader:
                imgs, target = imgs.to(device), target.to(device)

                # Compute the loss
                target_pred = model(imgs)
                loss = loss_fn(target_pred, target)

                test_loss += loss.item()
                test_acc += (target_pred.argmax(1) == target).type(torch.float).sum().item()

        test_acc /= size
        test_loss /= num_batches

        return test_acc, test_loss


    # Training setup
    epochs = 20
    train_loss = []
    train_acc = []
    test_loss = []
    test_acc = []

    # Early-stopping state
    best_test_acc = 0
    patience = 10  # stop after 10 consecutive epochs without improvement
    counter = 0

    os.makedirs("model", exist_ok=True)  # make sure the checkpoint folder exists

    scheduler = torch.optim.lr_scheduler.StepLR(opt, step_size=5, gamma=0.5)  # halve the learning rate every 5 epochs

    for epoch in range(epochs):
        model.train()
        epoch_train_acc, epoch_train_loss = train(train_dl, model, loss_fn, opt)

        model.eval()
        epoch_test_acc, epoch_test_loss = test(test_dl, model, loss_fn)

        # Update the learning rate
        scheduler.step()

        train_acc.append(epoch_train_acc)
        train_loss.append(epoch_train_loss)
        test_acc.append(epoch_test_acc)
        test_loss.append(epoch_test_loss)

        # Early-stopping check
        if epoch_test_acc > best_test_acc:
            best_test_acc = epoch_test_acc
            counter = 0  # reset the counter
            torch.save(model.state_dict(), "model/best_model.pth")  # save the best model so far
        else:
            counter += 1
            if counter >= patience:
                print(f"Early stopping at epoch {epoch+1}; best test accuracy: {best_test_acc*100:.1f}%")
                break  # stop training early

        template = ('Epoch:{:2d}, Train_acc:{:.1f}%, Train_loss:{:.3f}, Test_acc:{:.1f}%,Test_loss:{:.3f}')
        print(template.format(epoch+1, epoch_train_acc*100, epoch_train_loss, epoch_test_acc*100, epoch_test_loss))

    print('Done')

Epoch: 1, Train_acc:74.1%, Train_loss:0.734, Test_acc:72.0%,Test_loss:0.654

Epoch: 2, Train_acc:82.6%, Train_loss:0.583, Test_acc:86.7%,Test_loss:0.297

Epoch: 3, Train_acc:86.2%, Train_loss:0.491, Test_acc:90.2%,Test_loss:0.226

Epoch: 4, Train_acc:88.3%, Train_loss:0.403, Test_acc:86.7%,Test_loss:0.271

Epoch: 5, Train_acc:85.9%, Train_loss:0.417, Test_acc:86.7%,Test_loss:0.307

Epoch: 6, Train_acc:89.0%, Train_loss:0.299, Test_acc:88.4%,Test_loss:0.247

Epoch: 7, Train_acc:92.8%, Train_loss:0.235, Test_acc:88.9%,Test_loss:0.203

Epoch: 8, Train_acc:91.7%, Train_loss:0.216, Test_acc:86.7%,Test_loss:0.412

Epoch: 9, Train_acc:93.0%, Train_loss:0.182, Test_acc:91.6%,Test_loss:0.174

Epoch:10, Train_acc:93.8%, Train_loss:0.192, Test_acc:90.7%,Test_loss:0.633

Epoch:11, Train_acc:95.3%, Train_loss:0.132, Test_acc:90.2%,Test_loss:0.186

Epoch:12, Train_acc:93.9%, Train_loss:0.149, Test_acc:91.1%,Test_loss:0.162

Epoch:13, Train_acc:95.6%, Train_loss:0.130, Test_acc:93.3%,Test_loss:0.263

Epoch:14, Train_acc:94.8%, Train_loss:0.161, Test_acc:93.3%,Test_loss:0.160

Epoch:15, Train_acc:96.0%, Train_loss:0.136, Test_acc:92.0%,Test_loss:0.186

Epoch:16, Train_acc:95.1%, Train_loss:0.143, Test_acc:92.0%,Test_loss:0.145

Epoch:17, Train_acc:95.7%, Train_loss:0.116, Test_acc:91.6%,Test_loss:0.205

Epoch:18, Train_acc:95.9%, Train_loss:0.123, Test_acc:92.0%,Test_loss:0.164

Epoch:19, Train_acc:95.7%, Train_loss:0.189, Test_acc:90.7%,Test_loss:0.214

Epoch:20, Train_acc:96.7%, Train_loss:0.151, Test_acc:91.1%,Test_loss:0.163

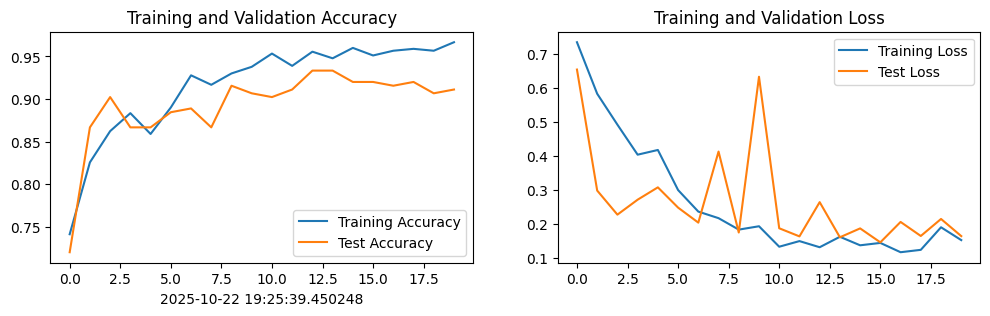

Done5、结果可视化

    import matplotlib.pyplot as plt
    # Suppress warnings
    import warnings
    warnings.filterwarnings("ignore")  # ignore warning messages

    #plt.rcParams['font.sans-serif'] = ['SimHei']  # needed to render Chinese labels correctly
    plt.rcParams['axes.unicode_minus'] = False     # render the minus sign correctly
    plt.rcParams['figure.dpi'] = 100               # figure resolution

    from datetime import datetime
    current_time = datetime.now()  # current time

    # Number of epochs actually run (fewer than `epochs` if early stopping triggered)
    epochs_range = range(len(train_acc))

    plt.figure(figsize=(12, 3))
    plt.subplot(1, 2, 1)

    plt.plot(epochs_range, train_acc, label='Training Accuracy')
    plt.plot(epochs_range, test_acc, label='Test Accuracy')
    plt.legend(loc='lower right')
    plt.title('Training and Validation Accuracy')
    plt.xlabel(current_time)  # include the timestamp when checking in; screenshots without it are not accepted

    plt.subplot(1, 2, 2)
    plt.plot(epochs_range, train_loss, label='Training Loss')
    plt.plot(epochs_range, test_loss, label='Test Loss')
    plt.legend(loc='upper right')
    plt.title('Training and Validation Loss')
    plt.show()
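The curves also show which checkpoint ended up in `model/best_model.pth`. As a rough check, the sketch below replays the early-stopping rule from the training loop (save on strict improvement, stop after `patience` stale epochs) on the test accuracies printed in the log. The function name `replay_early_stopping` is hypothetical, and the inputs are the rounded percentages from the log rather than the exact values held in `test_acc`.

```python
# Test accuracies (%) copied from the training log, epochs 1..20.
logged_test_acc = [72.0, 86.7, 90.2, 86.7, 86.7, 88.4, 88.9, 86.7, 91.6, 90.7,
                   90.2, 91.1, 93.3, 93.3, 92.0, 92.0, 91.6, 92.0, 90.7, 91.1]

def replay_early_stopping(acc_history, patience):
    """Replay 'save on strict improvement, stop after `patience` stale epochs'.

    Returns (best_epoch, best_acc, stopped_epoch) with 1-based epochs;
    stopped_epoch is None if the patience limit is never reached.
    """
    best_acc, best_epoch, counter = 0.0, None, 0
    for epoch, acc in enumerate(acc_history, start=1):
        if acc > best_acc:
            best_acc, best_epoch, counter = acc, epoch, 0
        else:
            counter += 1
            if counter >= patience:
                return best_epoch, best_acc, epoch
    return best_epoch, best_acc, None

print(replay_early_stopping(logged_test_acc, patience=10))  # (13, 93.3, None)
print(replay_early_stopping(logged_test_acc, patience=3))   # (3, 90.2, 6)
```

With `patience=10` the run never stops early and the saved checkpoint corresponds to epoch 13. A tighter limit such as 3 would have stopped at epoch 6 and kept the epoch 3 weights, well below the later peak.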

6. Predicting a Local Image

    # Prediction function
    def predict(image_path):
        img = Image.open(image_path).convert('RGB')  # load and convert to RGB
        # Preprocess (note: train_transforms includes random augmentation) and add a batch dimension
        img_tensor = train_transforms(img).unsqueeze(0).to(device)
        with torch.no_grad():
            output = model(img_tensor)
        pred_idx = torch.argmax(output, dim=1).item()  # index of the largest logit
        return classeNames[pred_idx]

    print("Prediction:", predict("./data/test_image.jpg"))

Output:

    Prediction: shine