Deploying OpenStack Train on CentOS 7

Environment and software

Windows 11

VMware Workstation Pro 16

CentOS-7-x86_64-DVD-1708.iso

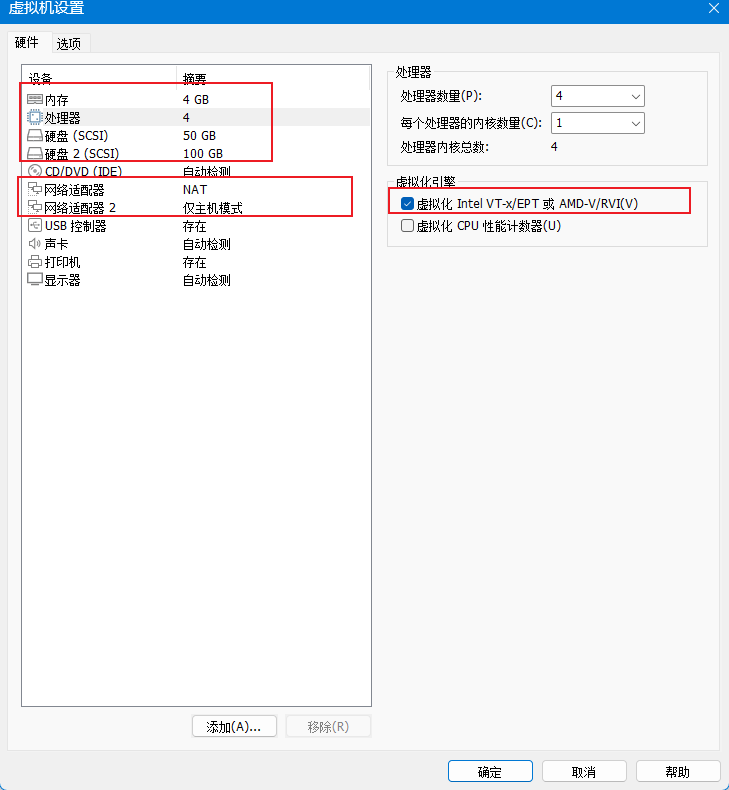

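Virtual machine settings

1. Network adapters

NAT adapter: 192.168.220.0/24 subnet

Host-only adapter: 192.168.10.0/24 subnet

2. Enable virtualization (Intel VT-x/EPT)

3. Add one 100 GB disk

4. Use the CentOS-7-x86_64-DVD-1708.iso image file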

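Environment initialization

1. Set the hostname, disable the firewall, set the time zone, disable NetworkManager, enable the network service, disable SELinux, and set the host mappings

hostnamectl set-hostname controller

systemctl stop firewalld

systemctl disable firewalld

setenforce 0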

timedatectl set-timezone "Asia/Shanghai"

systemctl disable NetworkManager

systemctl stop NetworkManager

systemctl enable network

systemctl start network

echo "192.168.10.100 controller" >> /etc/hosts

echo "192.168.10.200 compute" >> /etc/hosts

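cat /etc/hosts

2. Disable SELinux permanently and edit the network adapter configuration. Here the NAT adapter is ens33 and the host-only adapter is ens34; adjust the names to match your system.

vi /etc/selinux/config

# Change to SELINUX=disabled

vi /etc/sysconfig/network-scripts/ifcfg-ens33

# Switch to static addressing

BOOTPROTO=static

# Add the static settings

IPADDR=192.168.220.100

PREFIX=24

GATEWAY=192.168.220.2

DNS1=114.114.114.114

vi /etc/sysconfig/network-scripts/ifcfg-ens34

# Switch to static addressing

BOOTPROTO=static

# Add the static settings

ONBOOT=yes

IPADDR=192.168.10.100

PREFIX=24

# Restart the network

systemctl restart network

# Check that the external network is reachable

ping www.baidu.com -c 2

3. Shut the VM down and clone it to create the compute node, then change the clone's hostname and IP addresses.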

Compute node

hostnamectl set-hostname compute

vi /etc/sysconfig/network-scripts/ifcfg-ens33

# Change the address

IPADDR=192.168.220.200

vi /etc/sysconfig/network-scripts/ifcfg-ens34

# Change the address

ONBOOT=yes

IPADDR=192.168.10.200

# Restart the network

systemctl restart network

ping controller -c 2

That completes the environment configuration, which yields the following layout:

| Hostname | NAT IP | Host-only IP |
| --- | --- | --- |
| controller | 192.168.220.100 | 192.168.10.100 |
| compute | 192.168.220.200 | 192.168.10.200 |
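The `echo ... >> /etc/hosts` commands in the initialization step append a duplicate line every time they are re-run, for example on the cloned compute node. Below is a minimal sketch of an idempotent alternative. The function name `add_host_entry` and the `HOSTS_FILE` variable are illustrative assumptions, not part of any existing tool.

```shell
#!/bin/sh
# Hypothetical helper: append "IP NAME" to a hosts file only if NAME is not mapped yet.
# HOSTS_FILE defaults to /etc/hosts; point it at a scratch file to try the function safely.
add_host_entry() {
    ip="$1"
    name="$2"
    file="${HOSTS_FILE:-/etc/hosts}"
    # Look for NAME as a whole field after the address, skipping comment lines.
    if awk -v n="$name" '
        /^[ \t]*#/ { next }
        { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }
    ' "$file" 2>/dev/null; then
        return 0
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Example (run as root on both nodes):
#   add_host_entry 192.168.10.100 controller
#   add_host_entry 192.168.10.200 compute
```

Running the two example calls repeatedly leaves exactly one line per hostname in the file.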

Yum repository configuration

Configure the following yum repositories on both nodes

# Move the original repository files out of the way

mv /etc/yum.repos.d/* /opt/

yum clean all

# Switch to the Aliyun mirror with a one-step script

curl -O https://file.tsyvps.com/yumcentos7.sh && chmod +x yumcentos7.sh && ./yumcentos7.sh

# Create an OpenStack repository file

vi /etc/yum.repos.d/CentOS-Openstack-train-aliyun.repo

[centos-openstack-train]

name=CentOS-7-OpenStack-Train

baseurl=https://mirrors.aliyun.com/centos/7/cloud/x86_64/openstack-train/

gpgcheck=0

enabled=1

# The [Virt] repository avoids a later dependency error (Requires: qemu-kvm-rhev >= 2.10.0)

[Virt]

name=CentOS-$releasever - Base

baseurl=http://mirrors.aliyun.com/centos/7.9.2009/virt/x86_64/kvm-common/

gpgcheck=0

# Clean and rebuild the cache

yum clean all && yum makecache && yum repolist
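Because the same repository file is needed on both nodes, it can be written non-interactively instead of typed into vi. The sketch below reproduces the two repository sections from this guide with a here-document. The function name `write_train_repo` is a hypothetical label chosen for illustration.

```shell
#!/bin/sh
# Hypothetical helper: write the OpenStack Train repository definition to the given path.
# The quoted 'EOF' delimiter keeps $releasever literal so yum expands it, not the shell.
write_train_repo() {
    target="$1"
    cat > "$target" <<'EOF'
[centos-openstack-train]
name=CentOS-7-OpenStack-Train
baseurl=https://mirrors.aliyun.com/centos/7/cloud/x86_64/openstack-train/
gpgcheck=0
enabled=1

[Virt]
name=CentOS-$releasever - Base
baseurl=http://mirrors.aliyun.com/centos/7.9.2009/virt/x86_64/kvm-common/
gpgcheck=0
EOF
}

# Example (run as root on both nodes):
#   write_train_repo /etc/yum.repos.d/CentOS-Openstack-train-aliyun.repo
```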

Time synchronization

1. controller node

[root@controller ~]# yum install chrony -y

# Change these three settings on the controller node

[root@controller ~]# vim /etc/chrony.conf

# Any reachable NTP server works here

server ntp.aliyun.com iburst

# Allow hosts in 192.168.10.0/24 to synchronize with this server

allow 192.168.10.0/24

local stratum 10

[root@controller ~]# systemctl restart chronyd

[root@controller ~]# chronyc sources

210 Number of sources = 1

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* 203.107.6.88 2 6 377 54 +5019us[ +13ms] +/- 58ms
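In the `chronyc sources` output, the line that starts with `^*` is the server chronyd is currently synchronized to. A small sketch that turns that visual check into a scriptable one follows. The function name `chrony_sync_state` is an assumption made for this example, and it only inspects text passed on standard input.

```shell
#!/bin/sh
# Hypothetical check: read `chronyc sources` output on stdin and print "synced"
# if some source line starts with "^*", otherwise print "unsynced".
chrony_sync_state() {
    if grep -q '^\^\*'; then
        echo synced
    else
        echo unsynced
    fi
}

# Example:
#   chronyc sources | chrony_sync_state
```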

2. compute node

[root@compute ~]# yum install chrony -y

# Only one setting needs to change

[root@compute ~]# vim /etc/chrony.conf

server controller iburst

[root@compute ~]# systemctl restart chronyd

[root@compute ~]# chronyc sources

210 Number of sources = 1

MS Name/IP address Stratum Poll Reach LastRx Last sample

===============================================================================

^* controller 3 6 7 1 +6342ns[ -921us] +/- 29ms

Install the OpenStack packages on the controller node (every password set in the following steps is 123)

# Do not install centos-release-openstack-train first: it adds many expired yum repositories, some of which conflict with the ones configured above. Alternatively, specify the repository explicitly when installing.

[root@controller ~]# yum install python2-openstackclient -y

[root@controller ~]# yum install centos-release-openstack-train -y

# Installing centos-release-openstack-train adds many unusable repositories, so move them away and restore only the two configured earlier

[root@controller ~]# mv /etc/yum.repos.d/* /opt/

[root@controller ~]# mv /opt/CentOS-Base-ali.repo /etc/yum.repos.d/

[root@controller ~]# mv /opt/CentOS-Openstack-train-aliyun.repo /etc/yum.repos.d/

[root@controller ~]# yum makecache && yum repolist

Install the database on the controller

[root@controller ~]# yum install mariadb mariadb-server python2-PyMySQL -y

[root@controller ~]# vi /etc/my.cnf.d/openstack.cnf

# Configure as follows

[mysqld]

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

# Start the database service before securing it

[root@controller ~]# systemctl enable mariadb.service --now

[root@controller ~]# mysql_secure_installation

Enter current password for root (enter for none): # Press Enter

OK, successfully used password, moving on...

Set root password? [Y/n] y # Answer y to set the root password; this guide uses 123

New password: # Input is not echoed; enter 123

Re-enter new password:

Password updated successfully!

Reloading privilege tables..

... Success!

Remove anonymous users? [Y/n] # Remove anonymous users (recommended)

... Success!

Disallow root login remotely? [Y/n] y # Whether to forbid remote root login

... Success!

Remove test database and access to it? [Y/n] y # Remove the test database

- Dropping test database...

... Success!

- Removing privileges on test database...

... Success!

Reload privilege tables now? [Y/n] y # Reload the privilege tables; answer y

... Success!

[root@controller ~]#

Install RabbitMQ on the controller

[root@controller ~]# yum install rabbitmq-server -y

[root@controller ~]# systemctl enable rabbitmq-server.service --now

# Create the user openstack with password 123

[root@controller ~]# rabbitmqctl add_user openstack 123

[root@controller ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*"

Install Memcached on the controller

[root@controller ~]# yum install memcached python-memcached -y

[root@controller ~]# vi /etc/sysconfig/memcached

# Edit this line to add controller

OPTIONS="-l 127.0.0.1,::1,controller"

[root@controller ~]# systemctl enable memcached.service --now

Install etcd

[root@controller ~]# yum install etcd -y

[root@controller ~]# vi /etc/etcd/etcd.conf

# Set the IP addresses to the controller's host-only address

#[Member]

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="http://192.168.10.100:2380"

ETCD_LISTEN_CLIENT_URLS="http://192.168.10.100:2379"

ETCD_NAME="controller"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="http://192.168.10.100:2380"

ETCD_ADVERTISE_CLIENT_URLS="http://192.168.10.100:2379"

ETCD_INITIAL_CLUSTER="controller=http://192.168.10.100:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster-01"

ETCD_INITIAL_CLUSTER_STATE="new"

[root@controller ~]# systemctl enable --now etcd

Install Keystone on the controller

1. Initialize the database for the Keystone service

[root@controller ~]# mysql -uroot -p123

MariaDB [(none)]> CREATE DATABASE keystone;

Query OK, 1 row affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY '123';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY '123';

Query OK, 0 rows affected (0.000 sec)

MariaDB [(none)]> flush privileges;

Query OK, 0 rows affected (0.001 sec)

MariaDB [(none)]> exit;

2. Install and configure

[root@controller ~]# yum install openstack-keystone httpd mod_wsgi -y

[root@controller ~]# vi /etc/keystone/keystone.conf

[database]

# Replace 123 with your own password

connection = mysql+pymysql://keystone:123@controller/keystone

[token]

provider = fernet

[root@controller ~]# su -s /bin/sh -c "keystone-manage db_sync" keystone

[root@controller ~]# keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

[root@controller ~]# keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

# Replace 123 with your own password

[root@controller ~]# keystone-manage bootstrap --bootstrap-password 123 \

--bootstrap-admin-url http://controller:5000/v3/ \

--bootstrap-internal-url http://controller:5000/v3/ \

--bootstrap-public-url http://controller:5000/v3/ \

--bootstrap-region-id RegionOne

[root@controller ~]# vi /etc/httpd/conf/httpd.conf

# Add

ServerName controller

[root@controller ~]# ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

[root@controller ~]# systemctl enable --now httpd

3. Create the admin-openrc file (OpenStack environment variables)

[root@controller ~]# vi admin-openrc

export OS_USERNAME=admin

export OS_PASSWORD=123

export OS_PROJECT_NAME=admin

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_DOMAIN_NAME=Default

export OS_AUTH_URL=http://controller:5000/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2

# Load the file

[root@controller ~]# source admin-openrc

4. Test

# Create a domain

[root@controller ~]# openstack domain create --description "An Example Domain" example

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | An Example Domain |

| enabled | True |

| id | 6e39da4c491b4eb5935fb9ca5c047f16 |

| name | example |

| options | {} |

| tags | [] |

+-------------+----------------------------------+

# Create a project

[root@controller ~]# openstack project create --domain default --description "Service Project" service

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Service Project |

| domain_id | default |

| enabled | True |

| id | debb19d9a1f849ae83bfe0c97674607f |

| is_domain | False |

| name | service |

| options | {} |

| parent_id | default |

| tags | [] |

+-------------+----------------------------------+

[root@controller ~]# unset OS_AUTH_URL OS_PASSWORD

[root@controller ~]# openstack --os-auth-url http://controller:5000/v3 --os-project-domain-name Default --os-user-domain-name Default --os-project-name admin --os-username admin token issue

Password: # Enter the admin password, 123

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| Field | Value |

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+

| expires | 2025-08-27T05:09:55+0000 |

| id | gAAAAABoroUTUSzmHBoyNFSZbKuppMlfHfrP7jVw_gMsrZuuKpwueHHxpYdJVMTwAVsLdS-UzkZhDZaLitRGpD8RvTjNtppSBTum5nMucyfLbX58am8lQqxeOOEDiL5UFUaTXTkuMy8Au83R0h354Wjm9T6mpozRbE6xbWj8KT6EH4i2owDhTiM |

| project_id | 75128ca58e4d4409941651a2fb7a9cd4 |

| user_id | 5230b4e8fe3942e0a4b6378f41724beb |

+------------+-----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+
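The token test above unsets `OS_AUTH_URL` and `OS_PASSWORD`, so later `openstack` commands fail until `admin-openrc` is sourced again. A short sketch for spotting that situation is shown here. The function name `missing_openrc_vars` is hypothetical, and the variable list is simply the one from the admin-openrc file in this guide.

```shell
#!/bin/sh
# Hypothetical helper: print the admin-openrc variables that are unset or empty
# in the current shell, one per line. Prints nothing when all are present.
missing_openrc_vars() {
    for var in OS_USERNAME OS_PASSWORD OS_PROJECT_NAME OS_USER_DOMAIN_NAME \
               OS_PROJECT_DOMAIN_NAME OS_AUTH_URL OS_IDENTITY_API_VERSION \
               OS_IMAGE_API_VERSION; do
        # Indirect lookup of the variable named in $var (names are fixed above).
        eval "value=\${$var:-}"
        [ -n "$value" ] || printf '%s\n' "$var"
    done
}

# Example:
#   [ -z "$(missing_openrc_vars)" ] || source admin-openrc
```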

Install Glance

1. Initialize the database for the Glance service

[root@controller ~]# mysql -uroot -p123

MariaDB [(none)]> CREATE DATABASE glance;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' IDENTIFIED BY '123';

MariaDB [(none)]> exit;

2. Create the glance user

# Reload the environment

[root@controller ~]# source admin-openrc

[root@controller ~]# openstack user create --domain default --password-prompt glance

User Password: # Set the glance password

Repeat User Password: # Enter the glance password again

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | ebe6cc0d94b04b0aa48f2027471fdf38 |

| name | glance |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

# Grant the admin role

[root@controller ~]# openstack role add --project service --user glance admin

# Register the Glance service in Keystone

[root@controller ~]# openstack service create --name glance --description "OpenStack Image" image

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Image |

| enabled | True |

| id | bb84cb6a6f0247759b0abbc1c8b08103 |

| name | glance |

| type | image |

+-------------+----------------------------------+

[root@controller ~]#

3. Create the service endpoints for Glance

Every OpenStack service (Glance, Nova, Neutron, and so on) must register its access endpoints in Keystone.
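Each service in this guide registers the same three interfaces (public, internal, admin) against one URL. The sketch below prints those three commands for review rather than running them. The function name `endpoint_commands` is an illustrative assumption, and the region is fixed to RegionOne as in this guide.

```shell
#!/bin/sh
# Hypothetical helper: print the three endpoint-registration commands
# (public, internal, admin) for one service type and URL in region RegionOne.
endpoint_commands() {
    service_type="$1"
    url="$2"
    for interface in public internal admin; do
        printf 'openstack endpoint create --region RegionOne %s %s %s\n' \
            "$service_type" "$interface" "$url"
    done
}

# Print the Glance endpoint commands used in this guide.
endpoint_commands image http://controller:9292
```

The printed lines can be inspected first and then piped to `sh` once `admin-openrc` has been sourced.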

[root@controller ~]# openstack endpoint create --region RegionOne image public http://controller:9292

[root@controller ~]# openstack endpoint create --region RegionOne image internal http://controller:9292

[root@controller ~]# openstack endpoint create --region RegionOne image admin http://controller:9292

4. Install Glance, edit the glance-api configuration file, sync the database, start the service, and verify

[root@controller ~]# yum install openstack-glance -y

[root@controller ~]# vi /etc/glance/glance-api.conf

# Replace 123 with your own password

[database]

connection = mysql+pymysql://glance:123@controller/glance

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = glance

password = 123

[paste_deploy]

flavor = keystone

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

# Sync the database

[root@controller ~]# su -s /bin/sh -c "glance-manage db_sync" glance

# Start the service

[root@controller ~]# systemctl enable openstack-glance-api.service --now

# Verify the service

[root@controller ~]# source admin-openrc

# Download the .img file

[root@controller ~]# curl -O http://download.cirros-cloud.net/0.4.0/cirros-0.4.0-x86_64-disk.img

[root@controller ~]# ls

# Check that cirros-0.4.0-x86_64-disk.img was downloaded

[root@controller ~]# glance image-create --name "cirros" --file cirros-0.4.0-x86_64-disk.img --disk-format qcow2 --container-format bare --visibility public

+------------------+----------------------------------------------------------------------------------+

| Property | Value |

+------------------+----------------------------------------------------------------------------------+

| checksum | e6018c96d7221a552e0d07a86ba79bb9 |

| container_format | bare |

| created_at | 2025-08-27T06:05:02Z |

| disk_format | qcow2 |

| id | 33cb28d7-238c-418e-9da6-0680b1097cc8 |

| min_disk | 0 |

| min_ram | 0 |

| name | cirros |

| os_hash_algo | sha512 |

| os_hash_value | d8e7cfc1d0409b00116c32a3ce988a2807270ee513478e6d3af9bb2679a6763f0b921579d153d9d0 |

| | 8a61af3ee2515c0194433b7c344d4cb1dab617be1814563d |

| os_hidden | False |

| owner | 75128ca58e4d4409941651a2fb7a9cd4 |

| protected | False |

| size | 273 |

| status | active |

| tags | [] |

| updated_at | 2025-08-27T06:05:02Z |

| virtual_size | Not available |

| visibility | public |

+------------------+----------------------------------------------------------------------------------+

[root@controller ~]# openstack image list

# Output like the following means success

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| 33cb28d7-238c-418e-9da6-0680b1097cc8 | cirros | active |

+--------------------------------------+--------+--------+

Install Placement

1. Initialize the database for the Placement service

[root@controller ~]# mysql -uroot -p123

MariaDB [(none)]> CREATE DATABASE placement;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON placement.* TO 'placement'@'%' IDENTIFIED BY '123';

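MariaDB [(none)]> exit

2. Create the user

The next three commands create the placement user, grant it the admin role, and register the service. This is the standard sequence for deploying an OpenStack service, and it lets other components find and use Placement.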
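The same user, role, and service sequence is repeated for Glance, Placement, Nova, and Neutron, with only the names changing. As a sketch of that pattern, the function below prints the three commands for a given service. `registration_commands` is a hypothetical name, and the flags are copied from the commands used in this guide.

```shell
#!/bin/sh
# Hypothetical helper: print the three registration commands for one service.
# Arguments: service user/name, description, service type.
registration_commands() {
    name="$1"
    description="$2"
    service_type="$3"
    printf 'openstack user create --domain default --password-prompt %s\n' "$name"
    printf 'openstack role add --project service --user %s admin\n' "$name"
    printf 'openstack service create --name %s --description "%s" %s\n' \
        "$name" "$description" "$service_type"
}

# Print the Placement registration commands used in this guide.
registration_commands placement "Placement API" placement
```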

[root@controller ~]# openstack user create --domain default --password-prompt placement

[root@controller ~]# openstack role add --project service --user placement admin

[root@controller ~]# openstack service create --name placement --description "Placement API" placement

3. Create the service endpoints

These three commands register the Placement endpoints, which Nova and other components use for resource scheduling.

[root@controller ~]# openstack endpoint create --region RegionOne placement public http://controller:8778

[root@controller ~]# openstack endpoint create --region RegionOne placement admin http://controller:8778

[root@controller ~]# openstack endpoint create --region RegionOne placement internal http://controller:8778

4. Install Placement, edit its configuration file, sync the database, restart httpd, and verify the service

# Install Placement

[root@controller ~]# yum install openstack-placement-api -y

# Edit the Placement configuration file

[root@controller ~]# vi /etc/placement/placement.conf

# Replace 123 with your own password

[placement_database]

connection = mysql+pymysql://placement:123@controller/placement

[api]

auth_strategy = keystone

[keystone_authtoken]

auth_url = http://controller:5000/v3

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = placement

password = 123

# Sync the database

[root@controller ~]# su -s /bin/sh -c "placement-manage db sync" placement

# Restart httpd

[root@controller ~]# systemctl restart httpd

# Verify the service

[root@controller ~]# placement-status upgrade check

+----------------------------------+

| Upgrade Check Results |

+----------------------------------+

| Check: Missing Root Provider IDs |

| Result: Success |

| Details: None |

+----------------------------------+

| Check: Incomplete Consumers |

| Result: Success |

| Details: None |

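+----------------------------------+

[root@controller ~]# vi /etc/httpd/conf.d/00-placement-api.conf

# Add the following configuration

<Directory /usr/bin>

<IfVersion >= 2.4>

Require all granted

</IfVersion>

<IfVersion < 2.4>

Order allow,deny

Allow from all

</IfVersion>

</Directory>

# Restart httpd again so the change takes effect

[root@controller ~]# systemctl restart httpd

Install Nova

1. Initialize the databases for the Nova service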

[root@controller ~]# mysql -u root -p123

MariaDB [(none)]> CREATE DATABASE nova_api;

MariaDB [(none)]> CREATE DATABASE nova;

MariaDB [(none)]> CREATE DATABASE nova_cell0;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' IDENTIFIED BY '123';

MariaDB [(none)]> exit

2. Create the user

[root@controller ~]# openstack user create --domain default --password-prompt nova

User Password: # Set the password to 123

Repeat User Password:

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | ba2a64b7afde4fb1b9cb3e63bc5220e1 |

| name | nova |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack role add --project service --user nova admin

[root@controller ~]# openstack service create --name nova --description "OpenStack Compute" compute

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Compute |

| enabled | True |

| id | 40699ef586f44cdfb4995d019a11ee5e |

| name | nova |

| type | compute |

+-------------+----------------------------------+

# Create the service endpoints

[root@controller ~]# openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1

[root@controller ~]# openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1

[root@controller ~]# openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

3. Install the packages, edit nova.conf, sync the databases, and start the services

[root@controller ~]# yum install openstack-nova-api openstack-nova-conductor openstack-nova-novncproxy openstack-nova-scheduler -y

[root@controller ~]# vi /etc/nova/nova.conf

# Replace 123 with your own password and set my_ip to the host-only IP address

[DEFAULT]

my_ip = 192.168.10.100

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

transport_url = rabbit://openstack:123@controller:5672/

enabled_apis = osapi_compute,metadata

[api_database]

connection = mysql+pymysql://nova:123@controller/nova_api

[database]

connection = mysql+pymysql://nova:123@controller/nova

[api]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = 123

[vnc]

enabled = true

server_listen = $my_ip

server_proxyclient_address = $my_ip

[glance]

api_servers = http://controller:9292

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = 123

# Sync the databases

[root@controller ~]# su -s /bin/sh -c "nova-manage api_db sync" nova

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

[root@controller ~]# su -s /bin/sh -c "nova-manage db sync" nova

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+

| Name | UUID | Transport URL | Database Connection | Disabled |

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+

| cell0 | 00000000-0000-0000-0000-000000000000 | none:/ | mysql+pymysql://nova:****@controller/nova_cell0 | False |

| cell1 | c6571fa4-adb6-4f58-97b5-99da1e309ee4 | rabbit://openstack:****@controller:5672/ | mysql+pymysql://nova:****@controller/nova | False |

+-------+--------------------------------------+------------------------------------------+-------------------------------------------------+----------+

# Start the services

[root@controller ~]# systemctl enable openstack-nova-api.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service --now
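Before starting the services it is worth confirming that both `cell0` and `cell1` appear in the `list_cells` table. The following sketch extracts the cell names from that table. The function name `listed_cells` is hypothetical, and the parser assumes the pipe-delimited layout shown in this guide, where data rows carry a 36-character UUID in the second column.

```shell
#!/bin/sh
# Hypothetical helper: read `nova-manage cell_v2 list_cells` output on stdin
# and print the name of every cell row, one per line.
listed_cells() {
    awk -F'|' '
        NF >= 6 {
            name = $2
            uuid = $3
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", uuid)
            # Data rows hold a hyphenated 36-character UUID; the header row does not.
            if (length(uuid) == 36 && uuid ~ /-/) print name
        }
    '
}

# Example:
#   su -s /bin/sh -c "nova-manage cell_v2 list_cells" nova | listed_cells
```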

Install nova-compute on the compute node

[root@compute ~]# yum install openstack-nova-compute -y

[root@compute ~]# vi /etc/nova/nova.conf

[DEFAULT]

# Set my_ip to the compute node's host-only IP address

my_ip = 192.168.10.200

use_neutron = true

firewall_driver = nova.virt.firewall.NoopFirewallDriver

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:123@controller

[api]

auth_strategy = keystone

[keystone_authtoken]

www_authenticate_uri = http://controller:5000/

auth_url = http://controller:5000/

memcached_servers = controller:11211

auth_type = password

project_domain_name = Default

user_domain_name = Default

project_name = service

username = nova

password = 123

[vnc]

enabled = true

server_listen = 0.0.0.0

server_proxyclient_address = $my_ip

novncproxy_base_url = http://controller:6080/vnc_auto.html

[glance]

api_servers = http://controller:9292

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[placement]

region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:5000/v3

username = placement

password = 123

[libvirt]

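virt_type = qemu

# Check whether hardware virtualization is enabled

[root@compute ~]# egrep -c '(vmx|svm)' /proc/cpuinfo

# A result of 0 means virtualization is not enabled; see the virtual machine settings section to enable the virtualization engine

[root@compute ~]# systemctl enable libvirtd.service openstack-nova-compute.service --now

Add the compute node to the cell database (run on the controller node)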

[root@controller ~]# openstack compute service list --service nova-compute

[root@controller ~]# su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

[root@controller ~]# openstack compute service list
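The `egrep -c '(vmx|svm)'` check on the compute node decides which `virt_type` makes sense: a count of zero means no hardware acceleration, so libvirt has to use plain QEMU, while a non-zero count allows KVM. The sketch below encodes that rule. The function name `choose_virt_type` and its optional file argument are assumptions made for illustration; this guide itself sets `virt_type = qemu` unconditionally.

```shell
#!/bin/sh
# Hypothetical helper: print "kvm" if the given cpuinfo file lists the vmx or svm
# CPU flag, otherwise print "qemu". Defaults to /proc/cpuinfo.
choose_virt_type() {
    cpuinfo="${1:-/proc/cpuinfo}"
    # grep -c exits non-zero when the count is 0, so its status is ignored here.
    count=$(grep -E -c '(vmx|svm)' "$cpuinfo" 2>/dev/null || true)
    if [ "${count:-0}" -gt 0 ]; then
        echo kvm
    else
        echo qemu
    fi
}

# Example (on the compute node):
#   choose_virt_type
```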

Install Neutron

1. Database setup

[root@controller ~]# mysql -u root -p123

MariaDB [(none)]> CREATE DATABASE neutron;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY '123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY '123';

MariaDB [(none)]> exit
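Every service in this guide gets its database the same way: one `CREATE DATABASE` followed by grants for `'localhost'` and `'%'`. A minimal sketch that generates those statements is shown below. The function name `service_db_sql` is hypothetical, and it only prints SQL text, so nothing is changed until the output is fed to the `mysql` client.

```shell
#!/bin/sh
# Hypothetical helper: print the CREATE DATABASE and GRANT statements for one service.
# Arguments: database name, database user, password.
service_db_sql() {
    db="$1"
    user="$2"
    password="$3"
    printf 'CREATE DATABASE %s;\n' "$db"
    for host in localhost %; do
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%s' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$host" "$password"
    done
}

# Example:
#   service_db_sql neutron neutron 123 | mysql -u root -p
```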

2. Create the user

[root@controller ~]# openstack user create --domain default --password-prompt neutron

User Password:

Repeat User Password:

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | default |

| enabled | True |

| id | 60c45e8a9299463db53891f6ef3ddaf4 |

| name | neutron |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@controller ~]# openstack role add --project service --user neutron admin

# Create the service and its endpoints

[root@controller ~]# openstack service create --name neutron --description "OpenStack Networking" network

[root@controller ~]# openstack endpoint create --region RegionOne network public http://controller:9696

[root@controller ~]# openstack endpoint create --region RegionOne network internal http://controller:9696

[root@controller ~]# openstack endpoint create --region RegionOne network admin http://controller:9696

Install the self-service networks option and write the configuration files

[root@controller ~]# yum install openstack-neutron openstack-neutron-ml2 openstack-neutron-linuxbridge ebtables -y

# Edit neutron.conf

[root@controller ~]# vi /etc/neutron/neutron.conf

[DEFAULT]

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

core_plugin = ml2

service_plugins = router

allow_overlapping_ips = true

transport_url = rabbit://openstack:123@controller

auth_strategy = keystone

[database]

connection = mysql+pymysql://neutron:123@controller/neutron

[keystone_authtoken]

www_authenticate_uri = http://controller:5000

auth_url = http://controller:5000

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = 123

[nova]

auth_url = http://controller:5000

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = 123

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

# Edit ml2_conf.ini

[root@controller ~]# vi /etc/neutron/plugins/ml2/ml2_conf.ini

[ml2]

tenant_network_types = vxlan

mechanism_drivers = linuxbridge,l2population

type_drivers = flat,vlan,vxlan

extension_drivers = port_security

[ml2_type_flat]

flat_networks = provider

[ml2_type_vxlan]

vni_ranges = 1:1000

[securitygroup]

enable_ipset = true

# Edit linuxbridge_agent.ini

[root@controller ~]# vi /etc/neutron/plugins/ml2/linuxbridge_agent.ini

[linux_bridge]

physical_interface_mappings = provider:ens33

# ens33 is the NAT adapter in this guide; change it to the NIC attached to your NAT network

[vxlan]

enable_vxlan = true

# Set this to your own host-only IP address

local_ip = 192.168.10.100

l2_population = true

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

# Enable bridge netfilter

[root@controller ~]# modprobe br_netfilter

[root@controller ~]# vi /etc/sysctl.conf

# Add

net.bridge.bridge-nf-call-iptables=1

net.bridge.bridge-nf-call-ip6tables=1

[root@controller ~]# sysctl -p

# Output

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

# Edit l3_agent.ini

[root@controller ~]# vi /etc/neutron/l3_agent.ini

[DEFAULT]

interface_driver = linuxbridge

# Edit dhcp_agent.ini

[root@controller ~]# vim /etc/neutron/dhcp_agent.ini

# Add

[DEFAULT]

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

# Edit metadata_agent.ini

[root@controller ~]# vi /etc/neutron/metadata_agent.ini

[DEFAULT]

nova_metadata_host = controller

metadata_proxy_shared_secret = 123

# Finish the installation

[root@controller ~]# ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

[root@controller ~]# su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

# Start the services

[root@controller ~]# systemctl restart openstack-nova-api.service

[root@controller ~]# systemctl enable neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service neutron-l3-agent.service --now

# Verify the services

[root@controller ~]# openstack network agent list

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

| ID | Agent Type | Host | Availability Zone | Alive | State | Binary |

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

| 764aca45-5d92-496a-af57-fe1f54e590fa | Linux bridge agent | controller | None | :-) | UP | neutron-linuxbridge-agent |

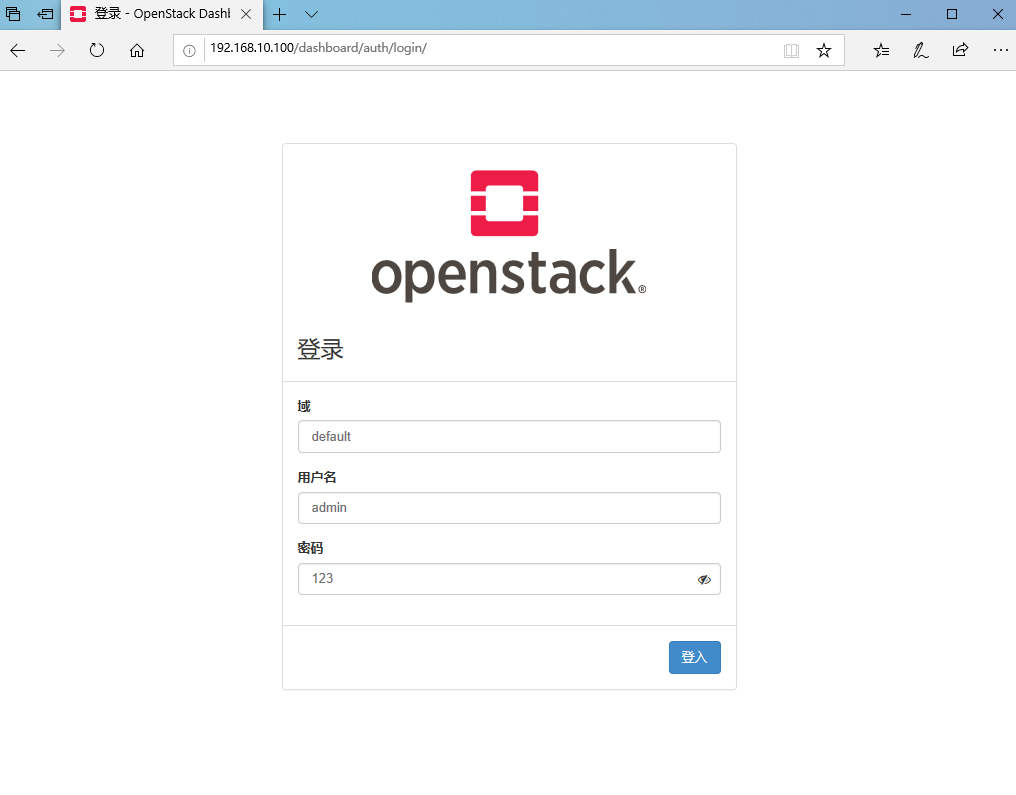

+--------------------------------------+--------------------+------------+-------------------+-------+-------+---------------------------+

Install the dashboard

[root@controller ~]# yum install openstack-dashboard -y

[root@controller ~]# vi /etc/openstack-dashboard/local_settings

# Some of these settings already exist and only need editing; add the ones that are missing

# In vi, search with /name, for example /OPENSTACK_HOST

OPENSTACK_HOST = "controller"

ALLOWED_HOSTS = ['*']

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {

    'default': {

        'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',

        'LOCATION': 'controller:11211',

    }

}

OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

OPENSTACK_API_VERSIONS = {

    "identity": 3,

    "image": 2,

    "volume": 3,

}

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = "Default"

OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

# If Neutron was installed with the provider networks option, disable the third entry; leave the others unchanged

OPENSTACK_NEUTRON_NETWORK = {

    # Keep the other entries and disable enable_auto_allocated_network

    'enable_auto_allocated_network': False,

}

TIME_ZONE = "Asia/Shanghai"

# This line must be added

WEBROOT='/dashboard'

[root@controller ~]# vi /etc/httpd/conf.d/openstack-dashboard.conf

# Add this line

WSGIApplicationGroup %{GLOBAL}

[root@controller ~]# systemctl restart httpd.service memcached.service

Verification: open http://192.168.10.100/dashboard in a browser on a host in the same subnet.